Dashun Wang

@dashunwang

Kellogg Chair of Technology at Kellogg, Founding director, Center for Science of Science and Innovation, Northwestern University

New paper in Nature. The more a government controls its domestic media, the more it dominates AI training data, the more pro-regime outputs we get from AI. By scraping the open web, LLMs are unwittingly laundering state-coordinated narratives into seemingly objective answers.

📄 Excited to share our latest preprint: the first cross-field audit of LLM-hallucinated citations in science ⚠️ Across arXiv, bioRxiv, SSRN & PMC, we estimate 147K fake citations in 2025 alone — threatening both the quality and equity of scientific work.

Excited to announce the 2026 iteration of the Communication & Intelligence Symposium at UChicago! We have an amazing lineup of speakers: @Diyi_Yang @johnhewtt @dashunwang @TomerUllman. We have a simple call for abstracts, due on Apr 15 (links 👇). Please come and share your research! Co-organized with the awesome @universeinanegg and @divingwithorcas

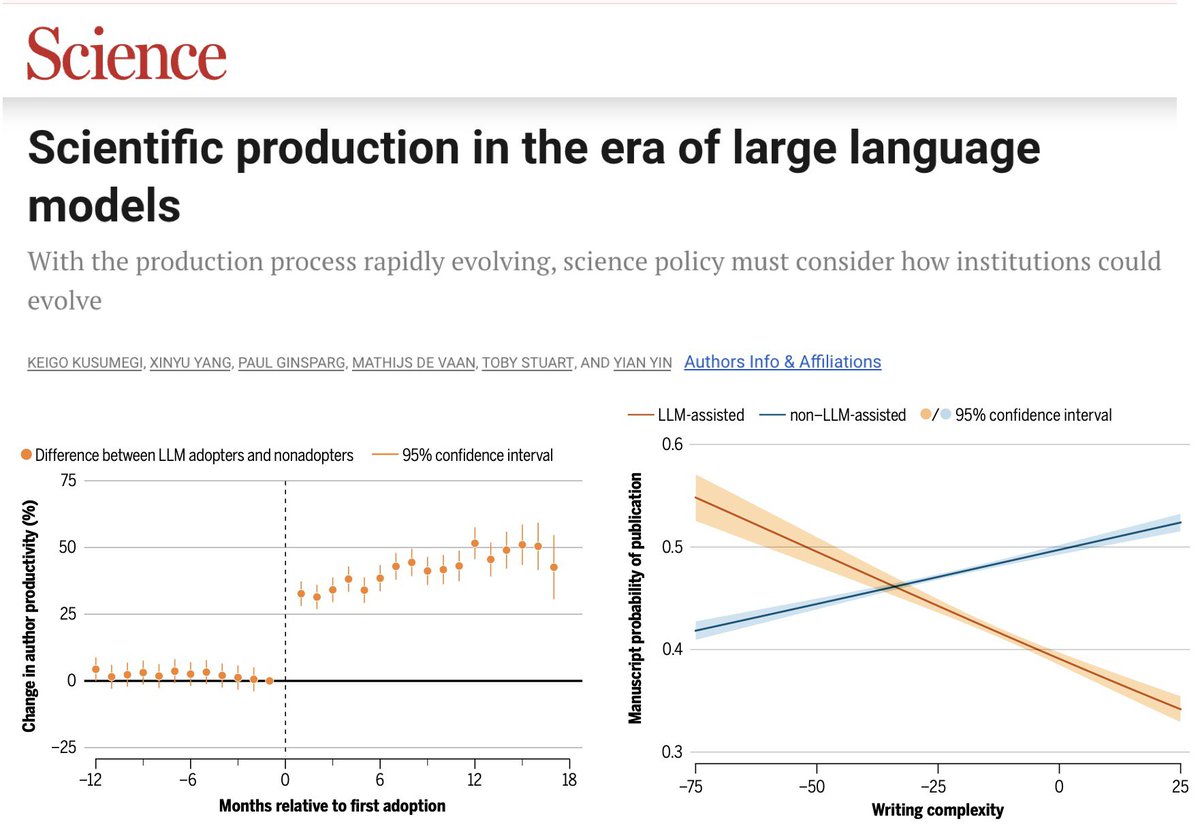

How are large language models impacting the submission and review process at high-impact journals? Severely.

Since the release of ChatGPT in 2022, AI-generated and AI-assisted papers, identified by Pangram, drove a 42% increase in submission volume at Organization Science (figure below). While the journal rejected the majority of these submissions, there is a human cost to reviewing papers, which volunteer reviewers are shouldering.

AI-generated content is also showing up in reviews, which similarly suffer in quality because of it -- editors at Organization Science found that AI-generated reviews are lower quality, less specific, and less topically diverse than human-written ones.

The problem is not isolated. Earlier this year, ICML desk-rejected 497 papers from authors who submitted AI-generated reviews, after those authors opted into a policy that disallowed the use of AI. Grant funders also saw a surge in applications: the Marie Skłodowska-Curie Actions, a set of major research fellowships for the EU, received 142% more proposals in 2025 compared to 2022.

Many scientific and academic systems implicitly rely on friction as a barrier to entry. LLMs have removed that friction, allowing for a deluge of AI slop that is straining the capacity of these institutions.

A belated self-promotion of our new paper in @PNASNexus. We ask how the interdisciplinarity of a supporting grant and that of the focal paper jointly shape the paper's scientific impact (academic.oup.com/pnasnexus/arti…). Much assumed, rarely tested; so we tested it at scale! (1/n)

The abstract submission window for ICSSI has been extended by a week to April 6, 2026 @ 11:59 PM AoE! Find guidelines, templates, and submission link here: icssi.org/guidelines/ See you in Boulder June 29 - July 1, or come on June 28 for the hackathon!

🚨Our March issue is now live, including an AI collaborator for science of science, a method for property-guided molecule generation, a Comment on the future of density functional theory, and much more! nature.com/natcomputsci/v… 📰Cover: nature.com/articles/s4358…