We're excited to welcome 28 new AI2050 Fellows! This 4th cohort of researchers is pursuing projects that include building AI scientists, designing trustworthy models, and improving biological and medical research, among other areas. buff.ly/riGLyyj

David Ifeoluwa Adelani 🇳🇬

4.2K posts

@davlanade

Assistant Professor @mcgillu, Core Academic Member @Mila_Quebec, Canada CIFAR AI Chair @CIFAR_News | interested in multilingual NLP | Disciple of Jesus

In our new paper, we reinterpret tokenisation as a problem in high-dimensional geometry (100M dims to be precise!), which we can solve efficiently to get a globally near-optimal tokeniser! Our method consistently improves language models over BPE. See 🧵 for details.

🚨 Paper alert! We introduce COPSD: Crosslingual On-Policy Self-Distillation for Multilingual Reasoning 🌍🧠
LLMs have made remarkable progress in mathematical reasoning, but this ability is not equally accessible across languages, especially for low-resource languages.
COPSD lets the model teach itself:
👩‍🎓 student: reasons from the low-resource problem
👨‍🏫 teacher: same model + privileged English context
🎯 learning: dense token-level self-distillation on on-policy rollouts
🌉 Intuition: transfer a model’s own high-resource reasoning behavior into low-resource language reasoning.
📄 Paper: arxiv.org/pdf/2605.09548
💻 Code: github.com/cisnlp/COPSD
[1/5]

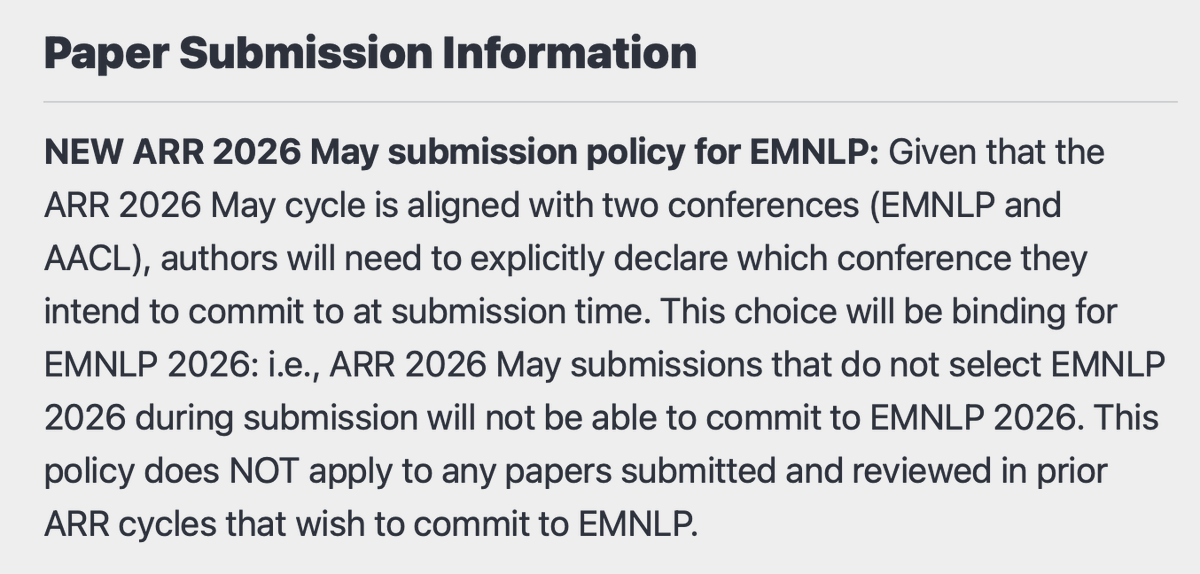
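
The COPSD post above names "dense token-level self-distillation" but does not spell out the loss. As a rough illustration only, here is a minimal sketch of one common form of token-level distillation: a per-position KL divergence between the student's and the teacher's next-token distributions, averaged over a rollout sampled from the student. The KL direction (student against teacher), the function names, and the toy logits are all assumptions made for this sketch, not details taken from the paper or its repository.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax over one vocabulary-sized list of logits."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]


def token_kl(student_logits, teacher_logits):
    """KL(student || teacher) at a single token position.

    Assumption: reverse KL is used here because it is a common choice
    for on-policy distillation; the post does not state the direction.
    """
    ls = log_softmax(student_logits)
    lt = log_softmax(teacher_logits)
    return sum(math.exp(a) * (a - b) for a, b in zip(ls, lt))


def self_distillation_loss(student_steps, teacher_steps):
    """Mean per-token KL over one rollout sampled from the student.

    student_steps: logits per position, conditioned on the low-resource prompt.
    teacher_steps: logits per position from the same model, additionally
                   conditioned on privileged English context (hypothetical setup).
    """
    if not student_steps or len(student_steps) != len(teacher_steps):
        raise ValueError("need one teacher distribution per student token")
    losses = [token_kl(s, t) for s, t in zip(student_steps, teacher_steps)]
    return sum(losses) / len(losses)


# Toy rollout of two tokens over a three-word vocabulary (made-up numbers).
student = [[2.0, 0.5, 0.1], [0.3, 0.3, 0.3]]
teacher = [[2.0, 0.5, 0.1], [1.5, 0.1, 0.1]]
loss = self_distillation_loss(student, teacher)
```

In this toy rollout the first position contributes zero because both distributions match, so the loss comes entirely from the second position, where the teacher is more confident than the student.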

📢 Please note a critical update to the original #EMNLP2026 Call for Papers: since EMNLP and AACL share the ARR May cycle, authors will need to explicitly select a target conference at submission time. This choice will be binding! For more details see: 2026.emnlp.org/calls/main_con…