dearaianna


After @Pinterest @Airbnb @NotionHQ @cursor_ai, today it’s @eoghan @intercom publicly sharing that they’re finding it better, cheaper, faster to use and train open models themselves rather than use APIs for many tasks. And hundreds of other companies are doing the same without sharing. Ultimately, I believe the majority of AI workflows will be in-house based on open-source (vs API). It took much more time than we anticipated but it’s happening now!

On one hand, these are obviously much worse "otter using wifi on an airplane" clips than any state-of-the-art AI text-to-video generation; they look like something from 2022. On the other, it was done entirely offline on my computer using open AI video generation tools, a new capability.

LiteLLM HAS BEEN COMPROMISED, DO NOT UPDATE. We just discovered that LiteLLM PyPI release 1.82.8 has been compromised: it contains litellm_init.pth with base64-encoded instructions to send all the credentials it can find to a remote server and self-replicate. Link below.
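For anyone wanting to check their own environment: Python's `site` module executes any line in a `.pth` file that starts with `import`, which is why a file like this can run code at interpreter startup. Below is a minimal sketch, not an official detection tool, that lists `.pth` lines worth a manual look. The helper names (`suspicious_lines`, `scan_site_packages`) and the 80-character base64 threshold are assumptions made for illustration, and legitimate packages (editable installs, for example) also ship `import` lines, so a hit is only a prompt to read the file.

```python
import re
import site
from pathlib import Path

# Assumption: a run of 80+ base64-alphabet characters is unusual in a normal .pth file.
B64_RUN = re.compile(r"[A-Za-z0-9+/=]{80,}")


def suspicious_lines(text):
    """Return .pth lines that the site module would execute at startup,
    or that contain a long base64-like run."""
    hits = []
    for line in text.splitlines():
        # site executes lines beginning with "import" followed by a space or tab.
        executes = line.startswith(("import ", "import\t"))
        if executes or B64_RUN.search(line):
            hits.append(line)
    return hits


def scan_site_packages():
    """Print every flagged line found in .pth files under the site-packages dirs."""
    dirs = set()
    if hasattr(site, "getsitepackages"):  # missing in some older virtualenvs
        dirs.update(site.getsitepackages())
    dirs.add(site.getusersitepackages())
    for d in sorted(dirs):
        folder = Path(d)
        if not folder.is_dir():
            continue
        for pth in sorted(folder.glob("*.pth")):
            hits = suspicious_lines(pth.read_text(errors="replace"))
            if hits:
                print(pth)
                for h in hits:
                    print("    " + h[:120])


if __name__ == "__main__":
    scan_site_packages()
```

This only reads files and never imports or executes them, so it is safe to run in an environment you suspect is affected.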

GPT-5.4 Pro continues to be the only model of its class. For anything really hard & complex, I throw it into the maw with every bit of context I can think of. More often than not, something very useful comes out. I can't get the same results from Codex or Code or anything else.

I am currently building an agent harness on top of the primitive one for my company so that we can build various agents that are very robust and capable. I originally started off on the Claude agent SDK because of how much I love Claude and Claude Code, but for reasons beyond my understanding (I'm not very smart) I couldn't quite get it to work in a performant manner. (Still love Anthropic and Claude.) After days of tinkering with it, I swapped it out for Deep Agents and the performance boost was absolutely insane. It just worked. And it was fast. Extra points for the DX on the new docs site. Superb. LangChain cooked 🔥👩‍🍳👨‍🍳 @hwchase17 @LangChain

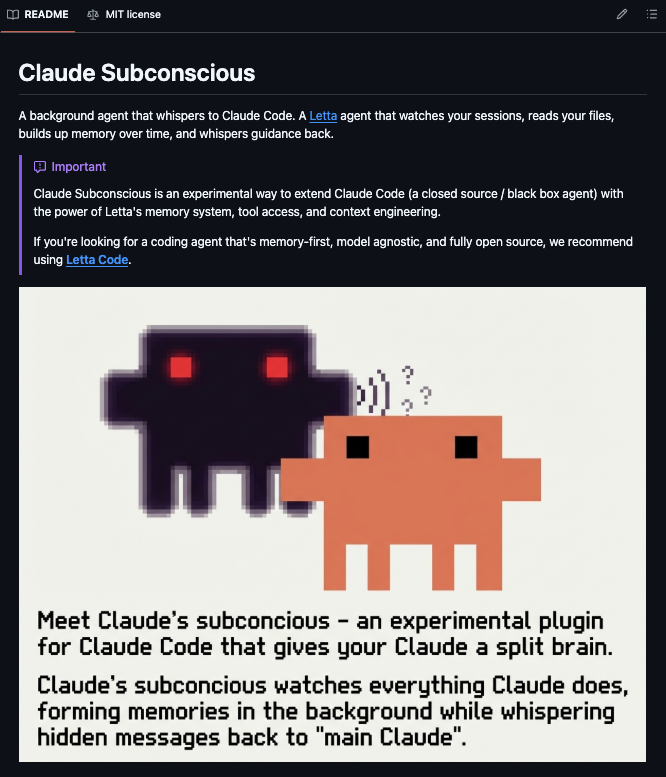

A very special guest on this episode of the Lightcone! @bcherny, the creator of Claude Code, sits down to share the incredible journey of developing one of the most transformative coding tools of the AI era.
00:00 Intro
01:45 The most surprising moment in the rise of Claude Code
02:38 How Boris came up with the idea for Claude Code
05:38 The elegant simplicity of terminals
07:09 The first use cases
09:00 What’s in Boris’ CLAUDE.md?
11:29 How do you decide the terminal’s verbosity?
15:44 Beginner’s mindset is key as the models improve
18:56 Hyper specialists vs hyper generalists
21:51 The vision for Claude teams
23:48 Subagents
25:12 A world without plan mode?
28:38 Tips for founders to build for the future
30:07 How much life does the terminal still have?
30:57 Advice for dev tool founders
32:11 Claude Code and TypeScript parallels
35:34 Designing for the terminal was hard
37:36 Other advice for builders
40:31 Productivity per engineer
41:36 Why Boris chose to join Anthropic
44:46 How coding will change
46:22 Outro

Stripe projects, if it keeps expanding its catalog, is going to be how we all build agents in the future

Claude Mythos Blog Post Saved before it was taken down. m1astra-mythos.pages.dev

Today's @symbolica harness is a clear example of what human-crafted targeting can achieve on the ARC-AGI-3 public demo set. You can "buy" performance with benchmark-specific prompts/strategies. Their approach could still contain useful ideas, excited to see what the community finds.