Deepak Kumar

3.9K posts

@deepakdk3478

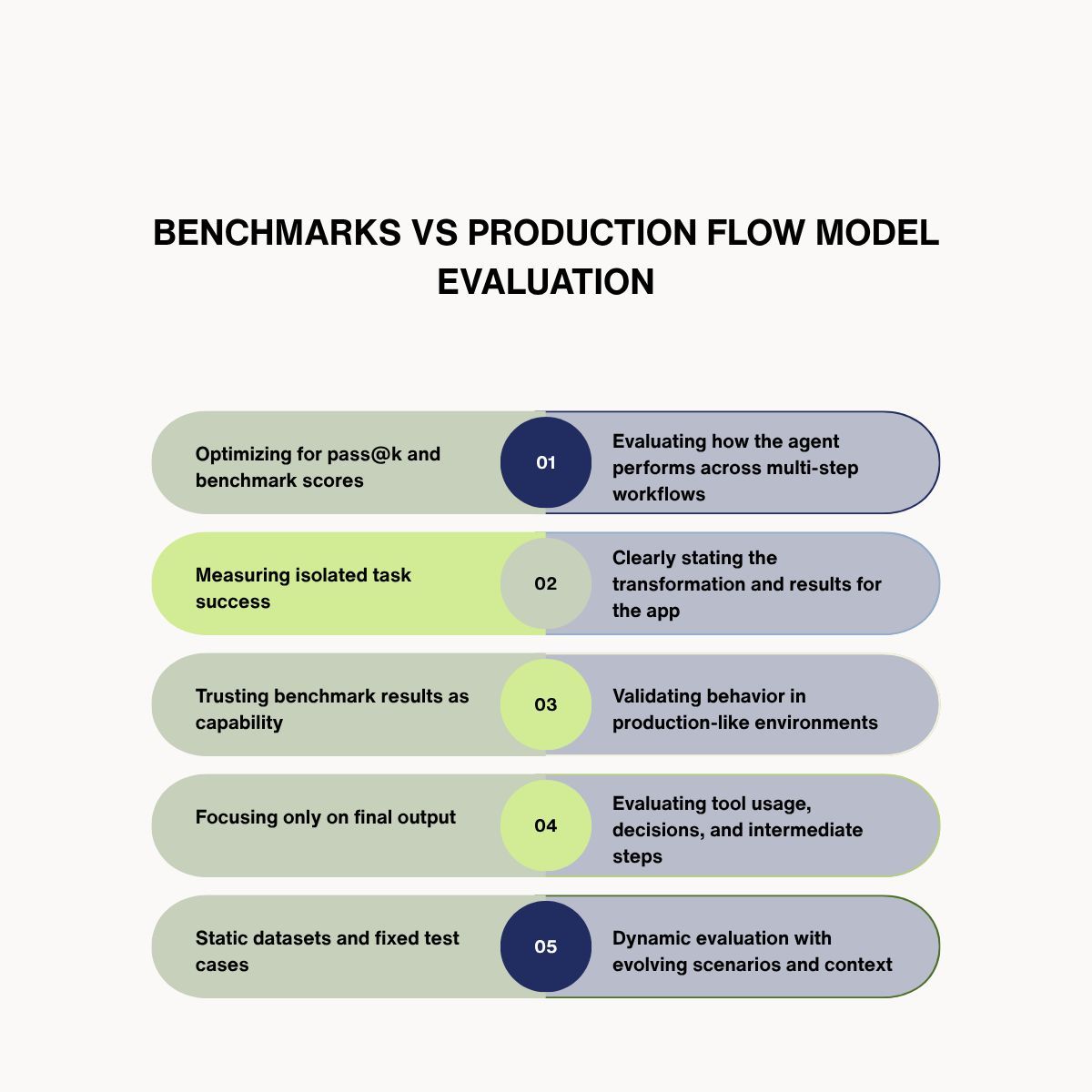

Building @foundryyai | Production evaluation infrastructure for coding agent labs

Introducing Windsurf 2.0. Manage all your agents from one place and delegate work to the cloud with Devin - so your agents keep shipping even after you close your laptop.

📣 Shipping software with Codex without touching code. Here’s how a small team steering Codex opened and merged 1,500 pull requests to deliver a product used by hundreds of internal users with zero manual coding. openai.com/index/harness-…

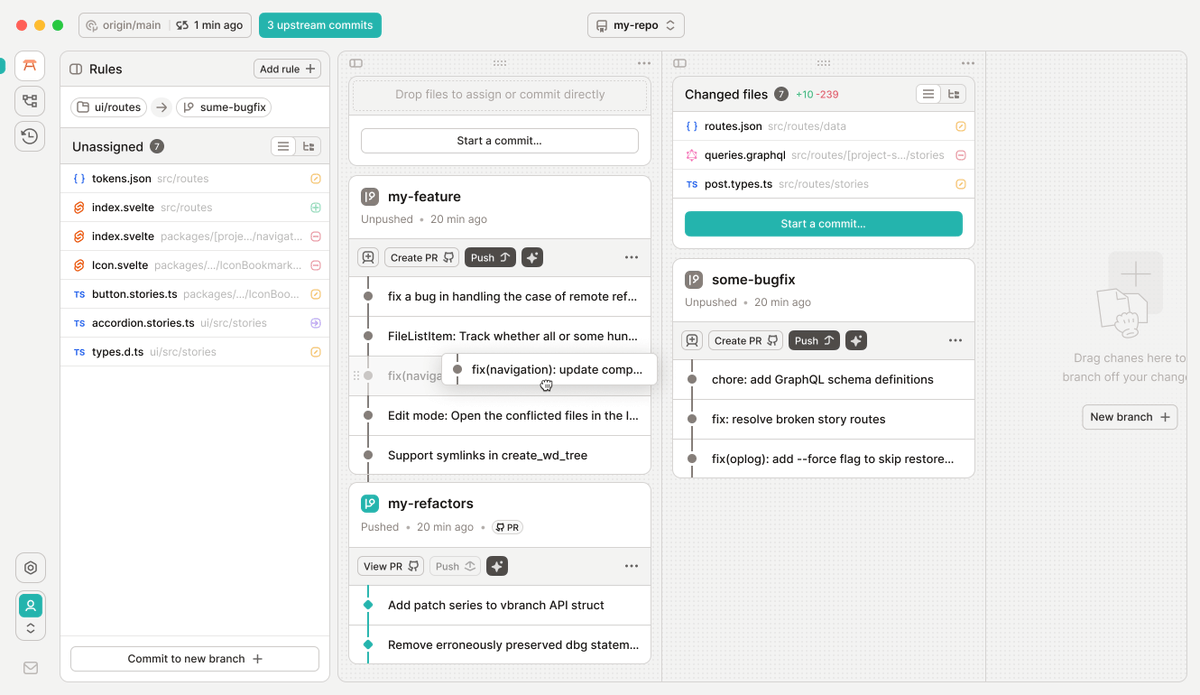

We’ve raised $17M to build what comes after Git blog.gitbutler.com/series-a

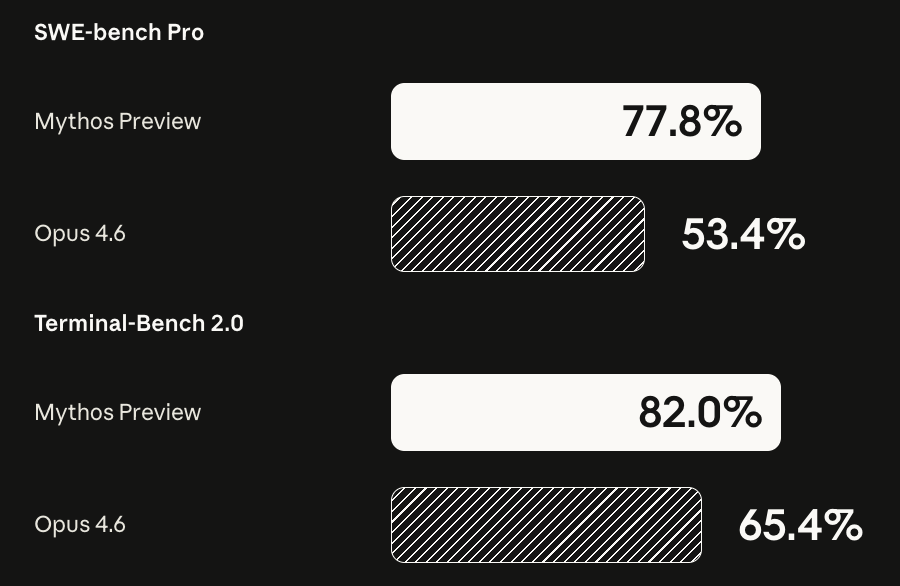

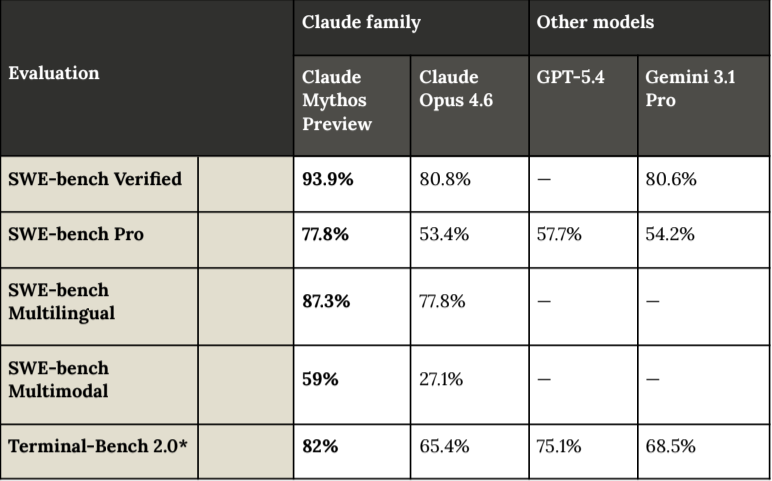

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing