D

@deesontralize

Superpositioned chaos-neutral chaos-positive. Technocratic qubist, evolutional anthropological buddhist eclectic.

Yesterday we chatted with @martin_casado and @sarahdingwang on the pod, and he happened to do basic math™ on the logic of ASICs. Today @taalas_inc launched their HC1 ASIC, which can run inference at 17k tok/s. Sure, it's a shitty 3.1 8B today, which is a 1.5-year gap. But read the details on the HC2 coming this winter and do the math: this timeline will converge to 0 in the next 2 years. Build accordingly.

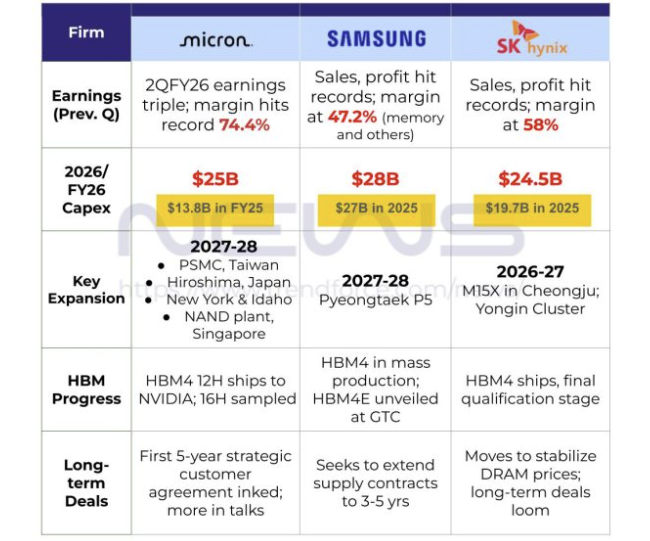

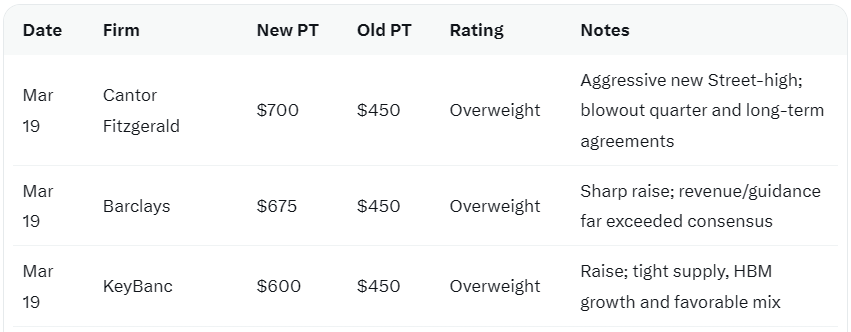

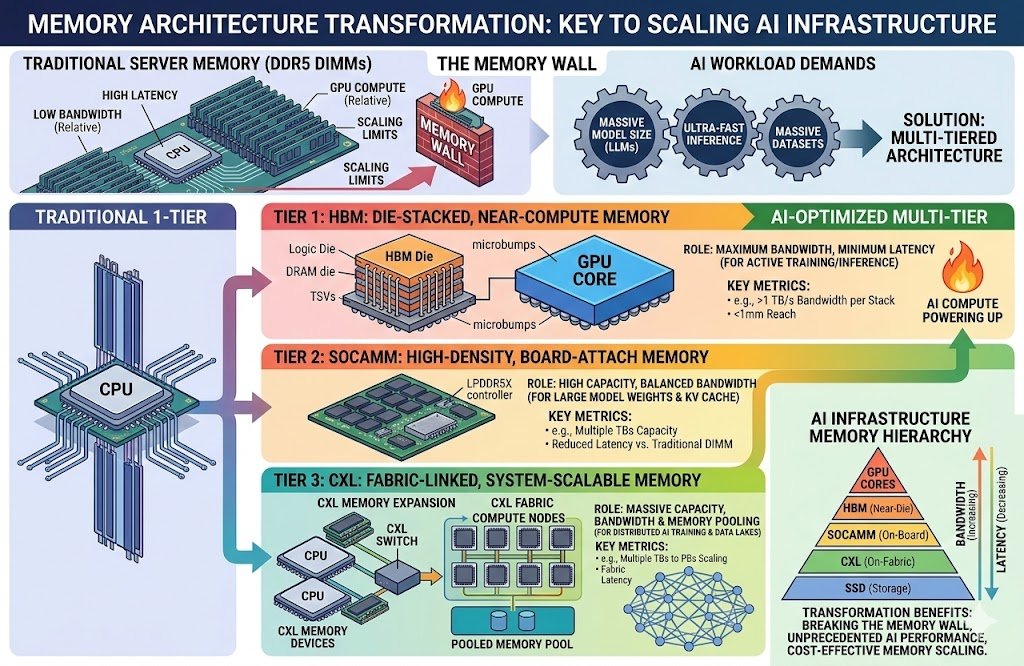

$MU: Bargain of the Century. PE Ratio: 15.5. Sales Ratio: 2.33. 50% sequential increase in HBM (AI memory). DRAM/NAND prices are surging.

🆕 @elonmusk has started following @aaronburnett

$MU: Nine Price Target Upgrades 🤯 Barclays went from $450 to $675 (Street High). The reasoning is the same everywhere: HBM4, durable AI demand, and long-term agreements. Goldman Sachs is the lone Hold, and even they raised their target. The wall of skepticism is crumbling.

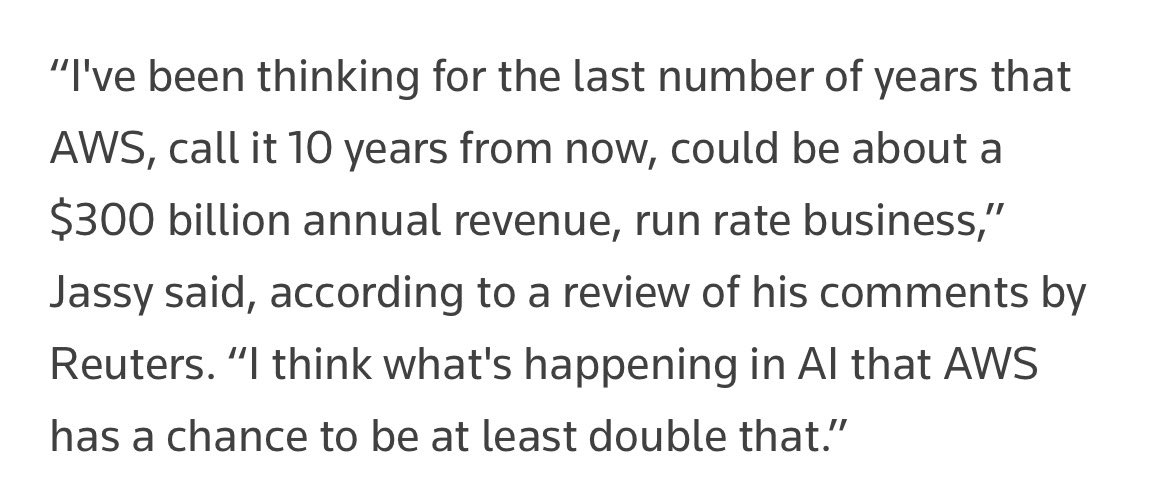

Microsoft weighs legal action over $50bn Amazon-OpenAI cloud deal ft.trib.al/6LZe39E

Sunbathers in Dolores Park are taking advantage of the heat wave in San Francisco on Saturday. 📷: Aidin Vaziri/S.F. Chronicle

Can this be a solution?

$MU: Thanks to its 5-year partnership with NVIDIA, Micron extends its leadership in low-power DRAM for AI servers (SOCAMM2), which is emerging as one of the most critical memory innovations for scaling next-generation AI infrastructure efficiently. SOCAMM2 addresses the core energy bottleneck head-on. In the era of energy-constrained data centers, its importance lies in its superior power efficiency. It delivers over 20% better power efficiency than Micron's previous LPDDR5X generation and cuts power by more than two-thirds compared with equivalent RDIMMs, at one-third the size. Data centers face severe power limitations, and AI clusters can consume massive amounts of energy, with racks potentially exceeding 50 terabytes of CPU-attached memory. That makes low-power solutions essential for maximizing compute density without overwhelming electrical infrastructure or cooling systems. The module's design also supports liquid cooling and easier serviceability, reducing total cost of ownership by allowing quick replacement of degraded memory, which is common in high-utilization AI environments. Micron has pioneered this by delivering the industry's highest-capacity variant, a 192GB SOCAMM2 module built on its advanced 1-gamma DRAM process, which provides 50% more capacity in the same compact 14x90mm footprint than prior low-power DRAM designs. It is already being sampled to customers, including for NVIDIA's next-generation AI platforms such as the Rubin architecture. Bullish 🔥