Riccardo De Santi

118 posts

@desariky

Doctoral Fellow @ETH_AI_Center | visiting @Caltech | Exploration for out-of-distribution discovery: from theory to molecules.

Diffusion/Flow-based models can sample in 1-2 steps now 👍 But likelihood? Still requires 100-1000 NFEs (even for these fast models) 😭 We fix this! Introducing F2D2: simultaneous fast sampling AND fast likelihood via joint flow map distillation. arxiv.org/abs/2512.02636 1/🧵

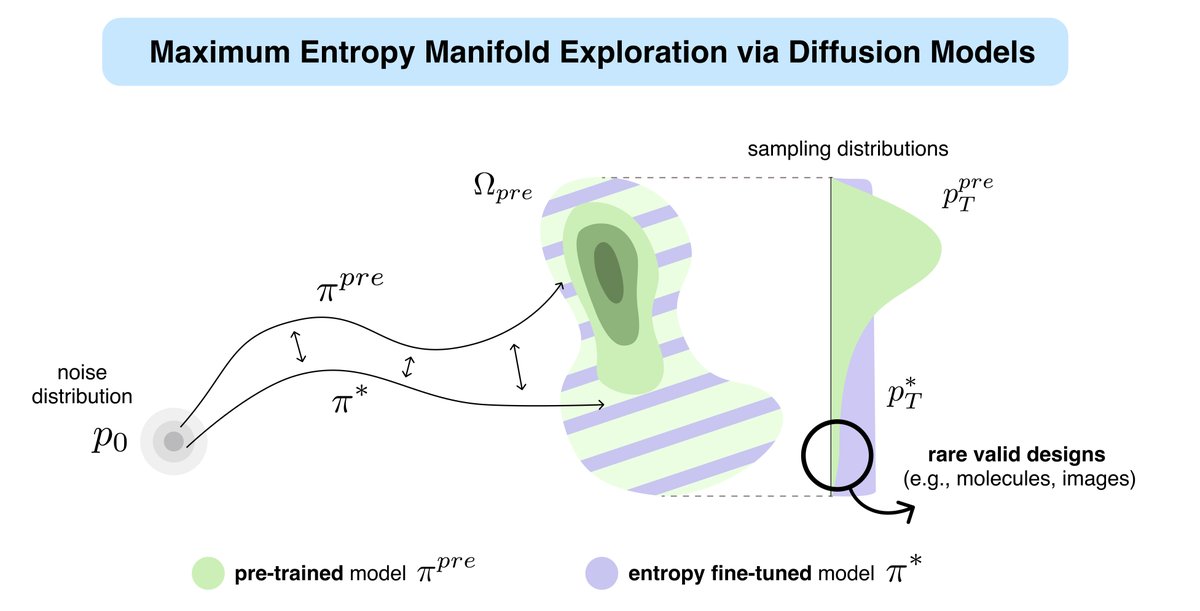

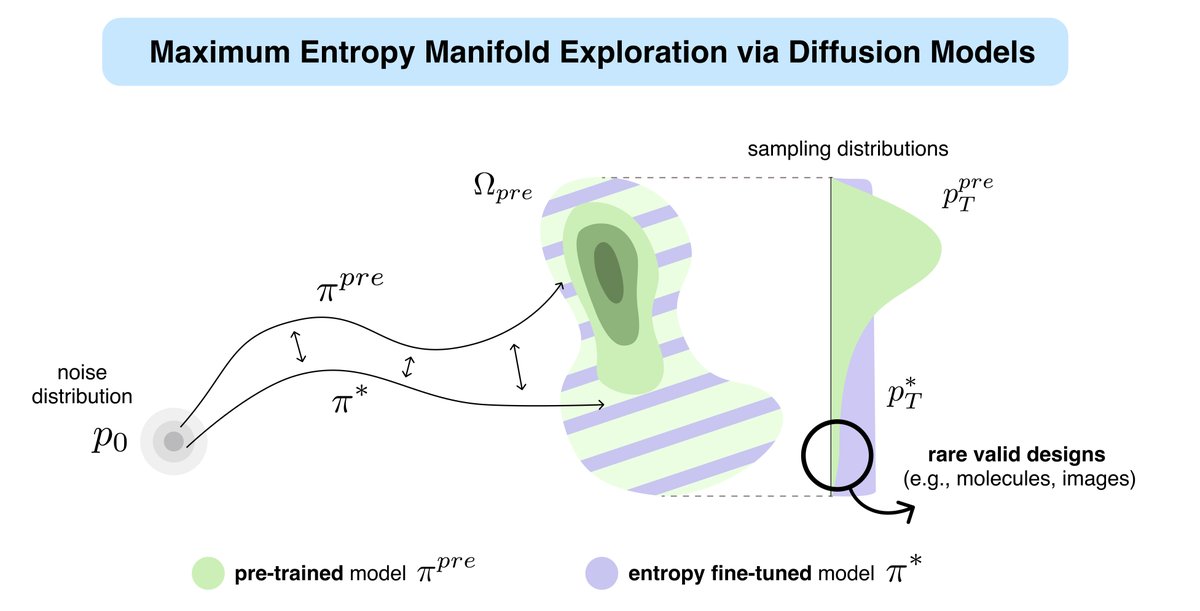

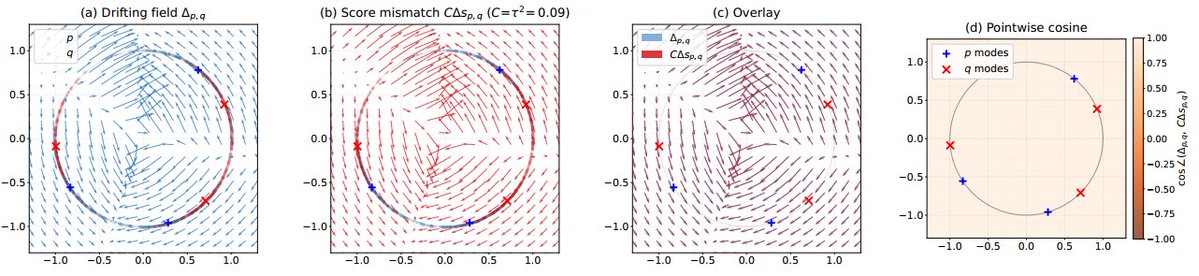

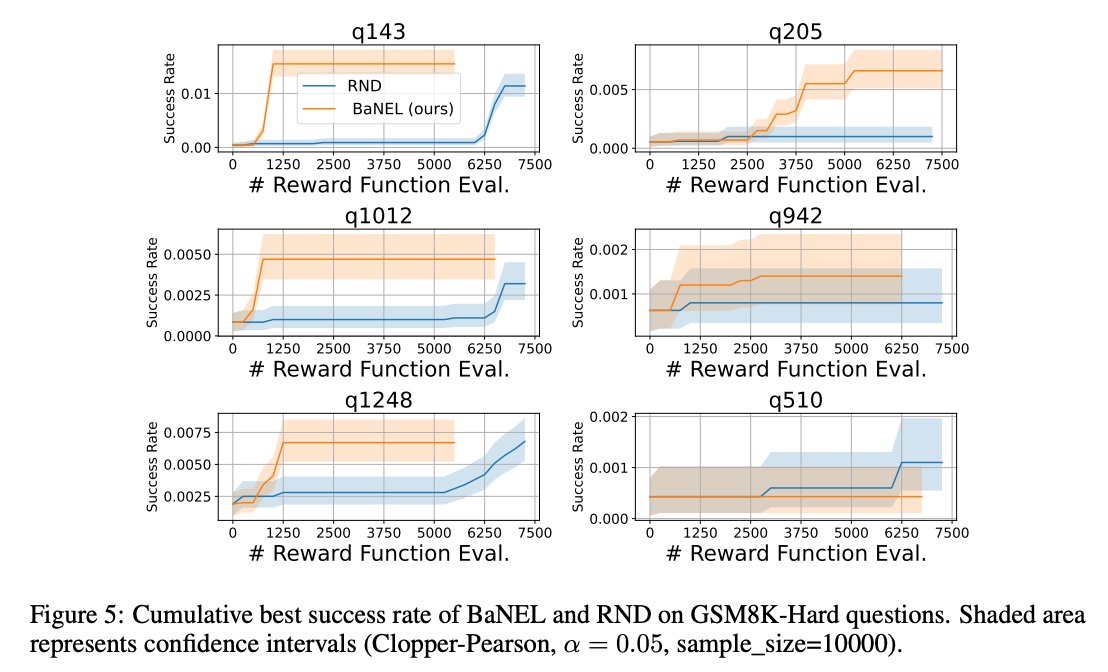

Can ML reliably solve big problems that humans cannot? We’ve seen post-training methods that learn from correct or successful samples. But we still don’t have good algorithms that learn solely from failures! Introducing BaNEL: a method for post-training from zero-reward samples.

📣 The latest ERC Starting Grant competition results are out! 📣 494 bright minds awarded €780 million to fund research ideas at the frontiers of science. Find out who, where & why 👉 europa.eu/!hrxyBp 🇪🇺 #EUfunded #FrontierResearch #ERCStG @HorizonEU @EUScienceInnov