Dmitriy Zhuk

@dimzhuk

Author of Agent-Friendly Code and co-founder of https://t.co/9TCZIgoiai. I make RAG & CAG Pipelines for custom AI Agents... uhm... I make AI helpers that know your business.

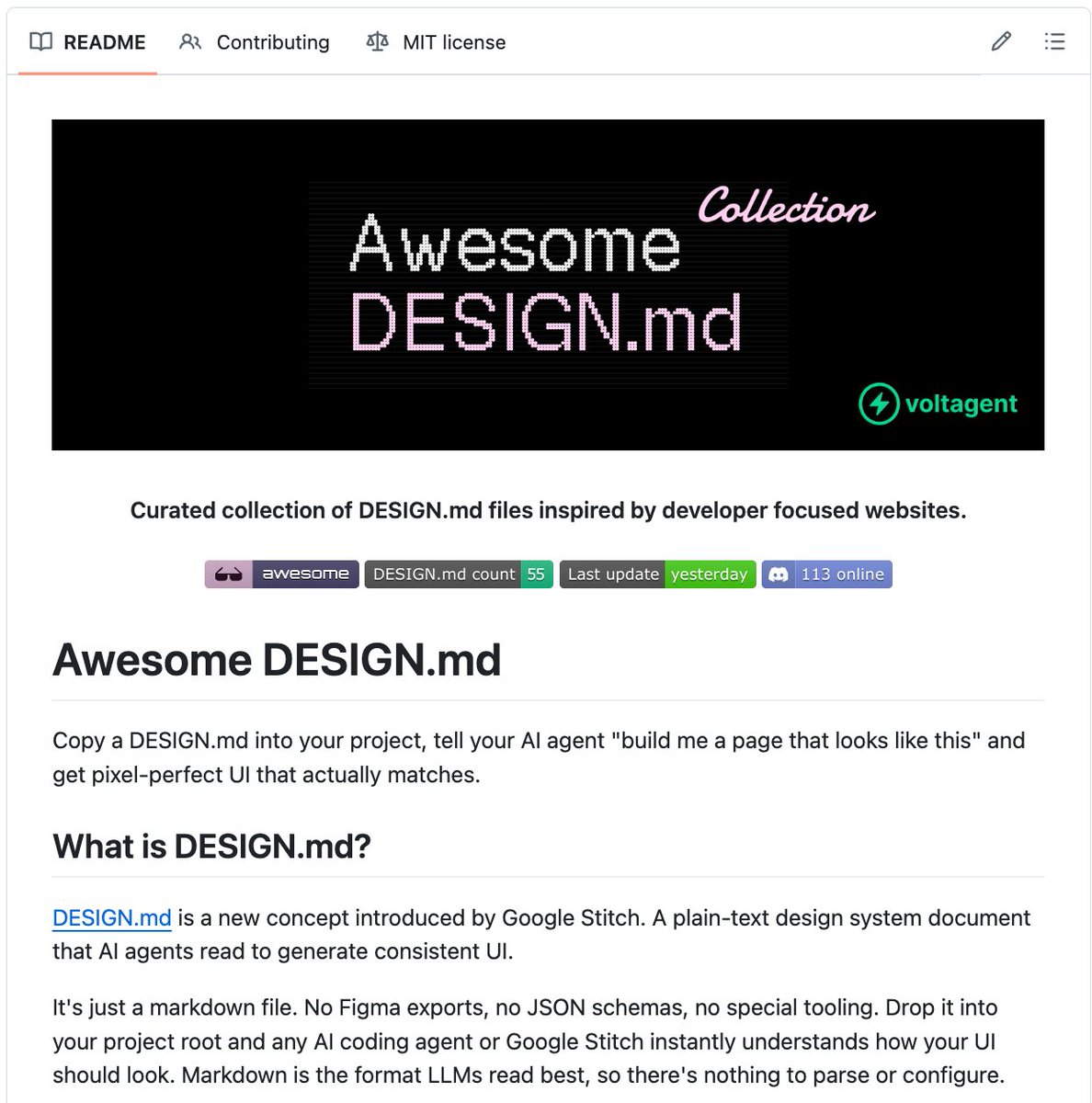

🚨 Someone reverse-engineered the design systems of Apple, Spotify, Airbnb, and 30+ billion-dollar companies. Packed each one into a single file. Free.

It's called Awesome Design MD.

Drop one file into your project. Your AI agent builds UI that looks like Spotify. Or Apple. Or Airbnb. Instantly.

Not screenshots. Not Figma links. A single DESIGN.md file that captures every color, font, spacing value, button style, and layout pattern from a real website. In a format AI agents read and reproduce.

Here's the difference: Tell Claude Code "build me a landing page" and it gives you generic UI. Tell Claude Code "build me a landing page" with Spotify's DESIGN.md in your project and it gives you Spotify.

Here's what's inside:
→ Apple. Premium white space, SF Pro typography, cinematic imagery.
→ Spotify. Vibrant green on dark, bold type, album-art-driven layout.
→ Airbnb. Warm coral accent, photography-driven, rounded UI.
→ Linear. Ultra-minimal, precise spacing, purple accent.
→ SpaceX. Stark black and white, full-bleed imagery, futuristic.
→ BMW. Dark premium surfaces, precise German engineering aesthetic.
→ NVIDIA. Green-black energy, technical power aesthetic.
→ Uber. Bold black and white, tight type, urban energy.
→ Sentry, PostHog, Raycast, Cursor, ElevenLabs, and 20+ more.

Here's how to use it:
→ Pick a design system from the collection
→ Copy the DESIGN.md file into your project root
→ Tell your AI agent to use it
→ Get UI that matches the design language of a billion-dollar company

That's it. One file. Your AI agent now has the design taste of a $200/hour design consultant.

Designers charge $5,000+ for a custom design system. Companies spend $50,000+ building one from scratch. This is free.

31 design systems. Copy. Paste. Ship beautiful UI.

Works with Claude Code, Cursor, Codex, and any AI coding agent that reads project files.

100% Open Source. MIT License.

This is Farzapedia. I had an LLM take 2,500 entries from my diary, Apple Notes, and some iMessage convos to create a personal Wikipedia for me. It made 400 detailed articles for my friends, my startups, research areas, and even my favorite animes and their impact on me, complete with backlinks.

But this wiki was not built for me! I built it for my agent! The structure of the wiki files and how it's all backlinked is very easily crawlable by any agent, which makes it a truly useful knowledge base. I can spin up Claude Code on the wiki and, starting at index.md (a catalog of all my articles), the agent does a really good job at drilling into the specific pages it needs context on when I have a query.

For example, when trying to cook up a new landing page I may ask: "I'm trying to design this landing page for a new idea I have. Please look into the images and films that inspired me recently and give me ideas for new copy and aesthetics". In my diary I kept track of everything: learnings, people, inspo, interesting links, images. So the agent reads my wiki and pulls up my "Philosophy" articles from notes on a Studio Ghibli documentary, "Competitor" articles with YC companies whose landing pages I screenshotted, and pics of 1970s Beatles merch I saved years ago. And it delivers a great answer.

I built a similar system to this a year ago with RAG but it was ass. A knowledge base that lets an agent find what it needs via a file system it actually understands just works better.

The most magical thing now is that as I add new things to my wiki (articles, images of inspo, meeting notes), the system will likely update 2-3 different articles where it feels that context belongs, or just create a new article. It's like this super genius librarian for your brain that's always filing stuff for you perfectly, lets you easily query the knowledge for tasks useful to you (e.g. design, product, writing), and never gets tired.

I might spend next week productizing this. If that's of interest to you, DM me + tell me your use case!
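
To make the "start at index.md and drill in" idea concrete, here is a minimal Python sketch of how a wiki like this could be walked. It is not the author's code. It assumes pages link to each other with standard Markdown links such as `[Alice](people/alice.md)` (the post does not say which link syntax is used), and the names `extract_links` and `crawl_wiki` are hypothetical.

```python
import re
from collections import deque
from pathlib import Path

# Assumption: pages use standard Markdown links to other .md files.
# Links containing ":" (e.g. https://...) are ignored as external.
LINK_RE = re.compile(r"\[[^\]]*\]\(([^)\s#:]+\.md)(?:#[^)]*)?\)")


def extract_links(markdown_text):
    """Return the relative .md targets linked from one page, in order."""
    return LINK_RE.findall(markdown_text)


def crawl_wiki(root, start="index.md", max_pages=50):
    """Breadth-first walk from the index page, following links between pages.

    Returns a dict mapping each visited page (POSIX path relative to root)
    to the list of pages it links to.
    """
    root = Path(root).resolve()
    graph = {}
    queue = deque([start])
    while queue and len(graph) < max_pages:
        page = queue.popleft()
        if page in graph:
            continue
        path = (root / page).resolve()
        # Skip links that leave the wiki directory or point at missing files.
        if not path.is_relative_to(root) or not path.is_file():
            continue
        targets = []
        for link in extract_links(path.read_text(encoding="utf-8")):
            target = (path.parent / link).resolve()
            if target.is_relative_to(root):
                targets.append(target.relative_to(root).as_posix())
        graph[page] = targets
        queue.extend(t for t in targets if t not in graph)
    return graph
```

Calling `crawl_wiki("path/to/wiki")` returns the link graph reachable from the index, which shows why a catalog page plus backlinks is enough for an agent to find its way around without a separate retrieval layer. A real agent would choose which links to follow based on the query instead of visiting everything.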

We’re introducing Cursor 3. It is simpler, more powerful, and built for a world where all code is written by agents, while keeping the depth of a development environment.