Eric Quinnell

582 posts

@divBy_zero

Founding Chip Architect, Stealth Startup. Fmr AWS Trainium, Tesla Dojo, CPUs (x86+ARM). PhD Computer Arithmetic

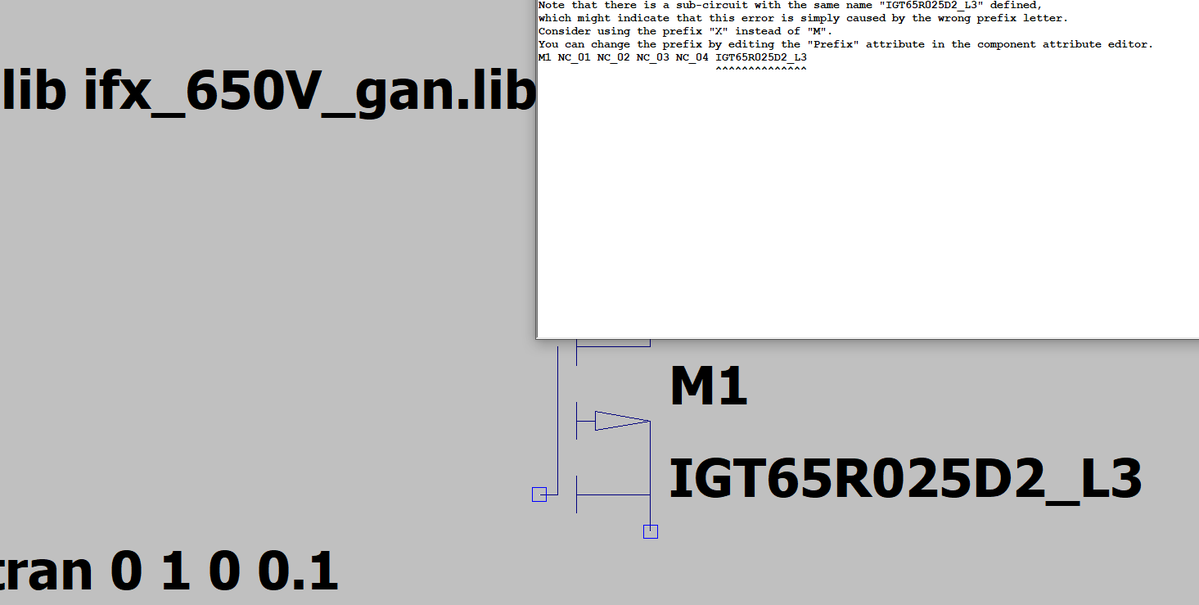

Floating point math is not associative! And many of the highest performance kernels split the workload among SMs and accumulate partial results in a nondeterministic order. Many AI labs just accept this, or pay a huge performance penalty for determinism. DeepSeek decided to do neither. (1/4) 🧵
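To make the non-associativity point concrete, here is a minimal Python sketch. It is only an illustration of the arithmetic effect, not DeepSeek's method or any real GPU kernel: the `partials` list stands in for per-SM partial sums, and `reduce_in_order` is a hypothetical helper that accumulates them in a given arrival order.

```python
# Floating point addition is not associative: grouping changes the result.
a, b, c = 0.1, 0.2, 0.3
left = (a + b) + c      # 0.6000000000000001
right = a + (b + c)     # 0.6
print(left == right)    # False


def reduce_in_order(partials, order):
    """Accumulate partial sums in the given index order (hypothetical helper)."""
    total = 0.0
    for i in order:
        total += partials[i]
    return total


# Stand-ins for partial results produced by four independent workers.
partials = [1e16, 1.0, -1e16, 1.0]

# Two possible arrival orders for the same partial results.
run_a = reduce_in_order(partials, [0, 1, 2, 3])  # the first 1.0 is absorbed by 1e16
run_b = reduce_in_order(partials, [0, 2, 1, 3])  # the large terms cancel first
print(run_a, run_b)     # 1.0 2.0

# Fixing the reduction order makes the result reproducible run to run.
fixed = [0, 1, 2, 3]
print(reduce_in_order(partials, fixed) == reduce_in_order(partials, fixed))  # True
```

The same inputs give 1.0 or 2.0 depending only on accumulation order, which is why a kernel that combines partial results in whatever order they finish can differ from run to run, while a fixed order is reproducible at the cost of extra synchronization.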

Congrats to the @Tesla_AI chip design team on taping out AI5! AI6, Dojo3 & other exciting chips in work.

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

there is a game called "data center" on steam which lets you build and manage your own data center. this is lowkey genius, the best way to educate people on a new trait. hyperscalers should learn a thing or two from "edutainment".