Zacchaeus Bolaji

5.2K posts

@djunehor

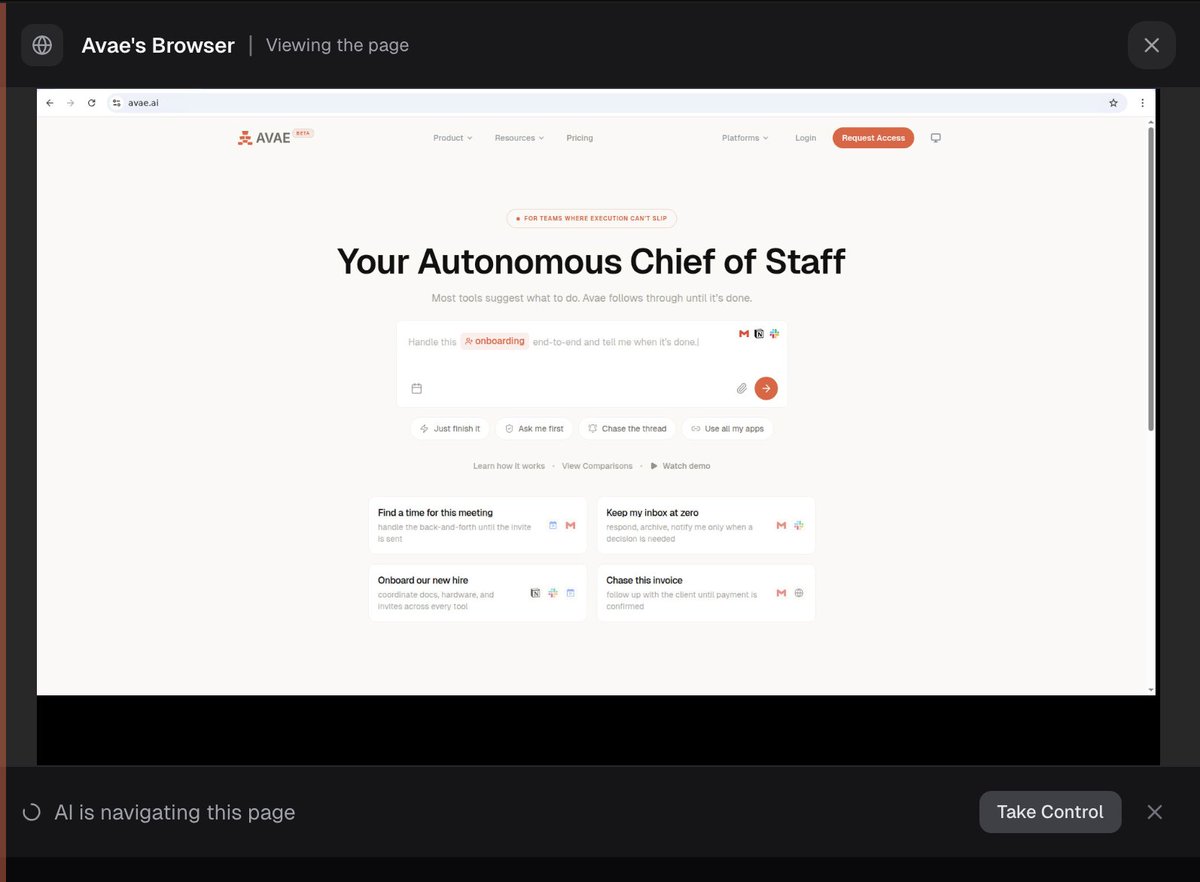

Building @useavae - AI Virtual Assistant for Founders and Busy Executives. Engineering + Infrastructure. 5x founder, 2x exit. Ex-@meta

We're experimenting with ways to keep AI agents in sync with the exact framework versions in your projects. Skills, CLAUDE.md, and more. But one approach scored 100% on our Next.js evals: vercel.com/blog/agents-md…

But the voice LLM still has to call a large LLM to have the intelligence to know what to say.

This also feels like a uniquely Bay Area idea: "claude, book my entire trip, make no mistakes", whereas for most people their vacations are the highlights of their entire year and what they're looking forward to and planning for most of it. The first time I put this together was hearing John Collison talk about this and how it'd be the perfect thing to do for a honeymoon trip, and thinking "that's such a Bay Area mindset" when 99% of people would love the planning, searching, and booking aspect of any trip, let alone a honeymoon.