David Krevitt

293 posts

@dkrevitt

I only tweet at airlines

Austin, TX · Joined November 2010

469 Following · 561 Followers

We waste too much time worrying about short term hype. Hyper-fixating on the news cycle isn’t going to fix the biggest problems facing society in the coming decades.

The world needs more long term thinking.

So we are launching Forecast 2050: a series of conversations with the thinkers, founders, and investors shaping the next 25 years.

Thank you to @tylercowen, Scott Aaronson, @noor_siddiqui_, @soundboy, @matthewclifford, @devonzuegel, @CJHandmer, @MalcolmRifkind, Yanai Yedvab, @viswacolluru, @pablolubroth, and @PhilipJohnston for joining us.

First episode with Tyler drops tomorrow. Stay tuned.

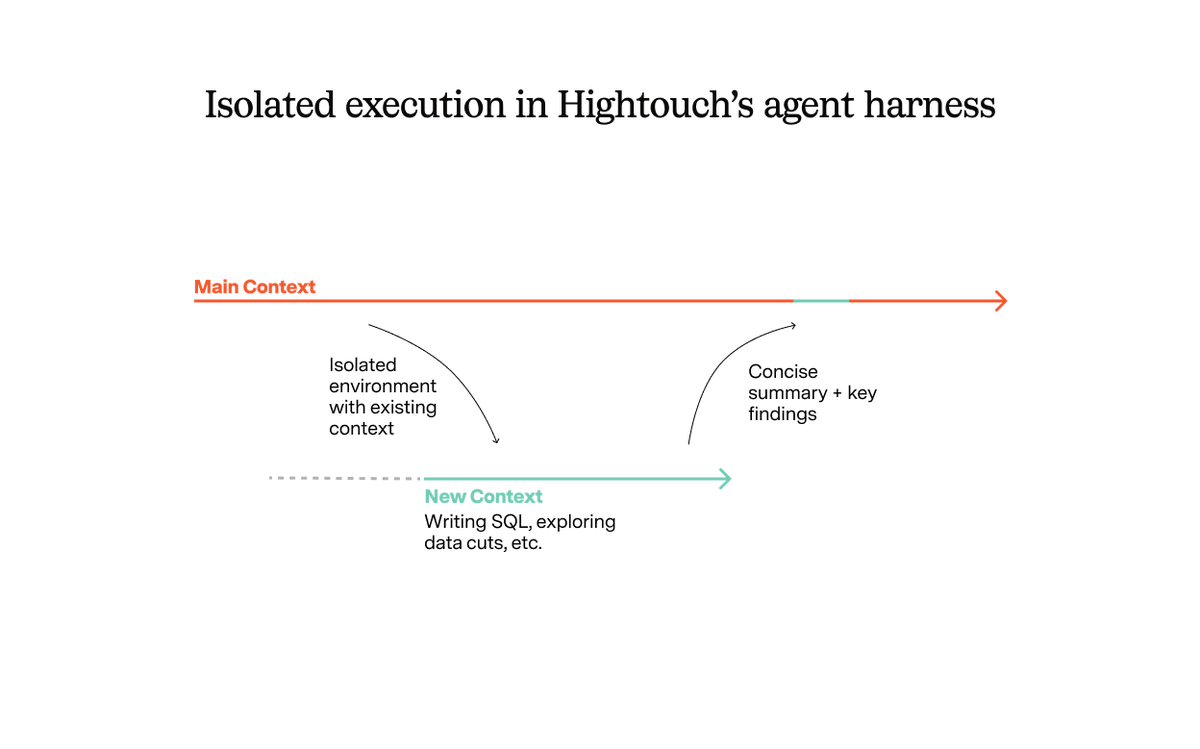

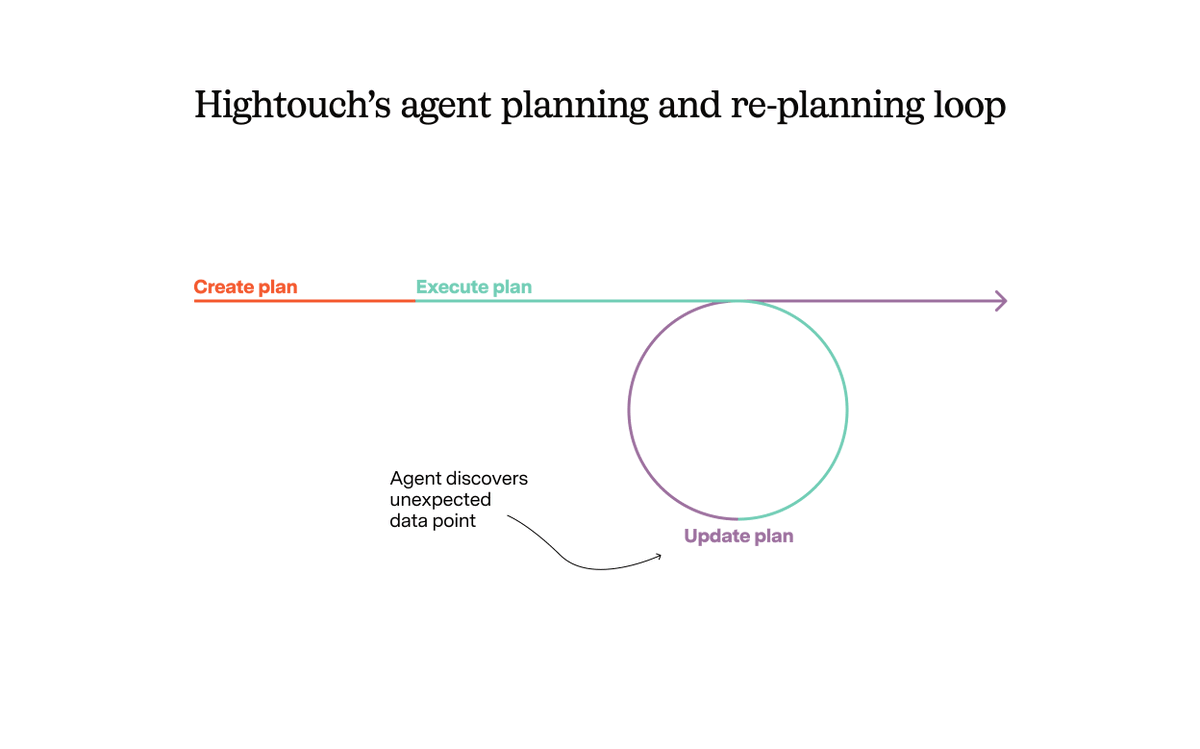

I'm enjoying my new role as Diagram Coordinator. New blog post about the incredible @HightouchData agent harness coming out soon.

@DataChaz it's also kind of got an attitude w/ its copywriting, i dig it

David Krevitt retweeted

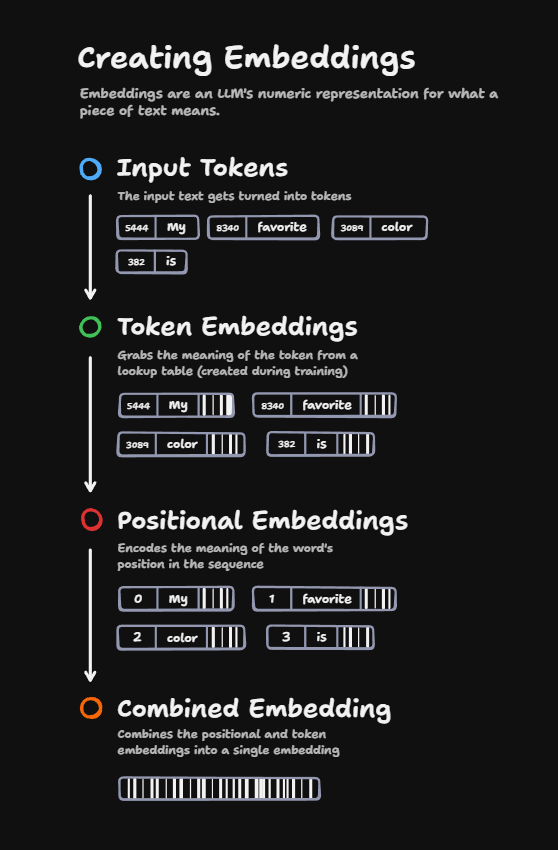

UNDERSTAND AI OR DIE TRYING

Announcing the AI Reference, the best, fastest, and free-est way to get smart on the fundamentals of AI models and how they work.

Stuff like RAG, RLHF, context, and pre-training. It’s totally free and you can dive in here.

technically.dev/ai-reference

Whether this launch goes well or not, I'll always have Parth.

Quote of sisyphus bar and grill (@itunpredictable): the AI Reference announcement above.

Today in "coding like a surgeon":

Before doing a code review, had Claude write a brief for me explaining the area of the code that's being touched, with diagrams. (And printed it out, because I like reading on paper!)

Great way to naturally learn more about a big codebase. Reading this first makes me better equipped to consider the details of the PR once I'm in the GitHub UI.

Feels kinda similar to how Deep Research turns a bunch of web browsing into a report you can read. Instead of frantically jumping around an interactive thing, I can calmly read and annotate a written guide.

@wesbos i feel your pain, we've all been there. silver lining is it makes you look more relatable bc we all do it

@wesbos have to add "update apps" to your pre-recording checklist

@juliarturc idk, i think the sweet spot is having the last name cut off, so only the real ones know where to find you

@Kyrylous @PawelHuryn aren't these platforms themselves designed to be information bubbles?

@PawelHuryn It's more like someone's feed is overloaded with 'AI' news and they're stuck in an information bubble.

I've summarized your entire feed so you can enjoy your weekend:

- Prompting is dead

- This prompt replaces McKinsey

- Unitree robots can dance

- Atlas just killed Google

- Fine-tuning is dead

- LLMs can’t reason

- SEO is dead

- This LLM can reason

- You’re using AI wrong

- A bubble is about to pop

- A crazy paper (+hallucinations)

- How to generate a professional headshot

- I cancelled my Canva because of Gemma

- 10 courses in case the first didn’t work

- 7 agents replaced my entire team

- OpenAI just killed Hollywood

- DeepSeek just killed OpenAI

- .md files are now “skills”

- Only 1% of people know this

- Leave a comment (must follow)

- Either way, everything these days is generated by AI

Have a great day!

@PawelHuryn You know that you just triggered like 100 n8n workflows for new posts on those topics? ;)

@milan_milanovic Interesting, I wonder if this could be applied to dead content as well

𝗛𝗼𝘄 𝗗𝗼𝗲𝘀 𝗚𝗼𝗼𝗴𝗹𝗲 𝗗𝗲𝗹𝗲𝘁𝗲 𝗠𝗮𝘀𝘀𝗶𝘃𝗲 𝗔𝗺𝗼𝘂𝗻𝘁𝘀 𝗼𝗳 𝗗𝗲𝗮𝗱 𝗖𝗼𝗱𝗲?

In a recent blog post on the Google Testing portal, the authors described a process for removing dead code.

We all know that dead code shouldn't exist if we follow Clean Code principles, because it contributes to the accumulation of technical debt.

Google stores code in a 𝗺𝗼𝗻𝗼-𝗿𝗲𝗽𝗼 𝘀𝘆𝘀𝘁𝗲𝗺 𝗰𝗮𝗹𝗹𝗲𝗱 𝗣𝗶𝗽𝗲𝗿 that contains the source code of shared libraries, production services, experimental programs, and diagnostic and debugging tools.

Yet, as modules are added and removed over time, some 𝗱𝗲𝗮𝗱 𝗰𝗼𝗱𝗲 𝗶𝘀 𝗹𝗲𝗳𝘁 behind. That is costly: automated systems don't know the code is unused, so they keep building it and running its (sometimes slow) tests on machines that cost money.

How they solved it: in a hackathon, they created a 𝗦𝗲𝗻𝘀𝗲𝗻𝗺𝗮𝗻𝗻 𝗽𝗿𝗼𝗷𝗲𝗰𝘁 that automatically identifies dead code and sends code review requests to delete it.

Its change descriptions are concise but give reviewers enough background to make a judgment.

Google's build system, Blaze, helps them determine what to delete by representing the dependencies between binary targets, libraries, tests, source files, and more.

When deciding 𝘄𝗵𝗮𝘁 𝗻𝗼𝘁 𝘁𝗼 𝗱𝗲𝗹𝗲𝘁𝗲, the usual exceptions are programs that serve as examples of how to use an API, and programs that run in places where no log signal can be collected.
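The core idea of using a dependency graph to find deletable code can be sketched as a reachability check. This is a minimal sketch, not Sensenmann's actual logic: it assumes the build graph is a plain dict mapping each target to its dependencies, that we already know which top-level targets are live (for example from usage logs), and it models the "don't delete" exceptions as a hypothetical `keep` set. The function name and all target labels are illustrative only.

```python
from collections import deque


def find_dead_targets(deps, live_roots, keep=frozenset()):
    """Return targets not reachable from any live or kept target.

    deps: dict mapping a target to the list of targets it depends on.
    live_roots: targets known to be in use (assumed input, e.g. from logs).
    keep: targets never proposed for deletion (e.g. API example programs).
    """
    # Collect every target mentioned anywhere in the graph.
    all_targets = set(deps)
    for children in deps.values():
        all_targets.update(children)

    # Breadth-first walk from live and kept targets down their dependencies.
    reachable = set()
    queue = deque(set(live_roots) | set(keep))
    while queue:
        target = queue.popleft()
        if target in reachable:
            continue
        reachable.add(target)
        queue.extend(deps.get(target, ()))

    # Anything never reached is a deletion candidate.
    return sorted(all_targets - reachable)


# Hypothetical graph for illustration.
deps = {
    "//app:server": ["//lib:auth", "//lib:db"],
    "//tools:old_migrator": ["//lib:legacy"],
    "//examples:api_demo": ["//lib:auth"],
    "//lib:legacy": [],
}
print(find_dead_targets(deps, {"//app:server"}, {"//examples:api_demo"}))
# → ['//lib:legacy', '//tools:old_migrator']
```

Here the unused migrator tool and the library only it depends on are flagged, while the example program survives because it is in `keep`. Because edges only point from a target to its dependencies, this sketch would also flag tests of live code; a real system needs extra rules for those.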

To learn more about it, check the text in the comments.