Dillon Erb

@dlnrb

building something new @a____t____g — prev: CEO / co-founder @hellopaperspace (acquired by @digitalocean)

today we’re open-sourcing nmoe: github.com/Noumena-Networ…

i started this because training deepseek-shaped ultra-sparse moes should be straightforward at research scale, but in practice it’s painful:
- expert flops get stranded (router shatters your batch → tiny per-expert gemms → gpus idle)
- router stability is fragile (especially without deepseek’s batch sizes)
- data + mixtures dominate (proxy runs are useless if mixtures aren’t deterministic/resumable)

nmoe is our attempt at a clean, production-grade reference path for moe training that you can actually read + modify (outside of the highly optimized kernels).

what’s inside:
- rdep (replicated dense / expert parallel): replicate dense/attention, shard experts, pool+dispatch routed tokens so per-expert batches are hot (no nccl all-to-all on the moe path; direct dispatch/return via ipc + nvshmem)
- mixed precision experts (bf16/fp8/nvfp4), with a focus on killing the usual “mixed precision overhead” taxes
- a frontier-ish data pipeline: deterministic mixtures, exact resume, and tooling for building/inspecting datasets (including hydra-style grading)
- metrics + nviz: sqlite experiments + duckdb timeseries + a dashboard that reads from shared storage
- container-first + toml-first, and intentionally narrow: b200-only (sm_100a), no tensor parallel, no expert all-to-all

this repo started in the spirit of nanochat (small, hackable, end-to-end), then grew into a rewrite of a bunch of the core components we wish existed as a public reference for moe training.

over the next few weeks i’ll post deep dives on:
- rdep + why per-expert batch size is the whole moe problem
- router stability in small runs
- fp8/nvfp4 expert training without drowning in overhead
- deterministic mixtures + why “close enough” sampling breaks proxy validity
- the metrics/nviz stack and what we track that actually matters
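To make “router shatters your batch” concrete, here is a back-of-the-envelope sketch assuming perfectly uniform top-k routing. The numbers (8192 tokens per GPU, 256 routed experts, top-8, 8-way pooling) are illustrative assumptions, not nmoe defaults, and `tokens_per_expert` / `bucket_by_expert` are hypothetical helpers. It contrasts the per-expert GEMM size when each GPU only routes its own tokens with the case where routed tokens from several replicas are pooled onto the GPU that owns each expert shard, which is the idea rdep is described as implementing.

```python
from typing import List


def tokens_per_expert(tokens_per_gpu: int, num_experts: int, top_k: int, pooled_gpus: int = 1) -> float:
    """Expected tokens routed to each expert per step, assuming perfectly uniform routing.

    pooled_gpus=1: each GPU runs only its own tokens through the experts.
    pooled_gpus=G: routed tokens from G replicas are pooled and dispatched to the GPU
    that owns each expert shard, so each expert GEMM sees G times more rows.
    """
    routed_assignments = tokens_per_gpu * top_k * pooled_gpus  # each token picks top_k experts
    return routed_assignments / num_experts


def bucket_by_expert(topk_ids: List[List[int]], num_experts: int) -> List[List[int]]:
    """Group token indices by the experts they were routed to (the 'shattering' step)."""
    buckets: List[List[int]] = [[] for _ in range(num_experts)]
    for token, experts in enumerate(topk_ids):
        for e in experts:
            buckets[e].append(token)
    return buckets


# Illustrative numbers (assumptions): 8192 tokens per GPU, 256 routed experts, top-8.
print(tokens_per_expert(8192, 256, 8))                 # 256.0 rows per expert GEMM
print(tokens_per_expert(8192, 256, 8, pooled_gpus=8))  # 2048.0 rows with 8-way pooling
print(bucket_by_expert([[0, 1], [1, 2], [0, 2]], 3))   # [[0, 2], [0, 1], [1, 2]]
```

Real routers are not uniform, so the least-loaded experts see even fewer rows than this average; pooling raises the floor for every expert at once.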

We embedded all 5000+ NeurIPS papers! exa.ai/neurips

Cool queries:
- "new retrieval techniques"
- "the paper that elon would love most"
- "intersection of coding agents and biology, poster session 5"

It uses our in-house model trained for precise semantic retrieval 😌
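Embedding search of this kind reduces to nearest-neighbor lookup over vectors. The sketch below assumes every paper and query has already been embedded by some model (Exa’s in-house model is not public, so the 2-d vectors and the `paper_a`/`paper_b`/`paper_c` titles are hypothetical placeholders) and ranks papers by cosine similarity.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def top_k(query_vec: List[float], corpus: Dict[str, List[float]], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k corpus entries most similar to the query, best first."""
    scored = [(title, cosine(query_vec, vec)) for title, vec in corpus.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy, hypothetical embeddings; a real index would hold thousands of high-dimensional vectors.
corpus = {"paper_a": [1.0, 0.0], "paper_b": [0.0, 1.0], "paper_c": [0.7, 0.7]}
print(top_k([1.0, 0.1], corpus, k=2))
```

At 5000+ papers a brute-force scan like this is still cheap; approximate nearest-neighbor indexes only become necessary at much larger corpus sizes.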

Enough preamble. The most important part of every Whale paper, as I've said many times over the years, is “Conclusion, Limitation, and Future Work”. They say: Frontier has no knowledge advantage. Compute is the only serious differentiator left. Time to get more GPUs.

🚀 Launching DeepSeek-V3.2 & DeepSeek-V3.2-Speciale: Reasoning-first models built for agents!
🔹 DeepSeek-V3.2: Official successor to V3.2-Exp. Now live on App, Web & API.
🔹 DeepSeek-V3.2-Speciale: Pushing the boundaries of reasoning capabilities. API-only for now.
📄 Tech report: huggingface.co/deepseek-ai/De…
1/n

New training speed record for @karpathy's NanoGPT setup: 3.28 FineWeb val loss in 22.3 minutes

Previous record: 24.9 minutes

Changelog:
- Removed learning rate warmup, since the optimizer (Muon) doesn't need it
- Rescaled Muon's weight updates to have unit variance per param

1/5
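A minimal pure-Python sketch of the two changelog items, assuming a Muon-style step: momentum, approximate orthogonalization by a quintic Newton-Schulz iteration (coefficients 3.4445, -4.7750, 2.0315 as in the public Muon code), then a rescale of the update. The tweet does not give the exact rescaling rule, so here “unit variance per param” is assumed to mean dividing by the measured RMS so the mean squared entry is 1; the record itself may use a fixed shape-based factor instead. The helper names, `lr=0.02`, and `beta=0.95` are illustrative, and plain (not Nesterov) momentum is used for brevity. The constant `lr` from step 0 reflects the “no warmup” change.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def newton_schulz5(g: Matrix, steps: int = 5) -> Matrix:
    """Approximately orthogonalize g by pushing its singular values toward 1."""
    a, b, c = 3.4445, -4.7750, 2.0315  # quintic coefficients from the public Muon code
    tall = len(g) > len(g[0])
    x = transpose(g) if tall else [row[:] for row in g]  # iterate in the wide orientation
    norm = math.sqrt(sum(v * v for row in x for v in row)) + 1e-7
    x = [[v / norm for v in row] for row in x]  # Frobenius-normalize so singular values <= 1
    for _ in range(steps):
        s = matmul(x, transpose(x))  # A = X X^T
        s2 = matmul(s, s)            # A^2
        poly = [[b * s[i][j] + c * s2[i][j] for j in range(len(s))] for i in range(len(s))]
        px = matmul(poly, x)         # (bA + cA^2) X
        x = [[a * x[i][j] + px[i][j] for j in range(len(x[0]))] for i in range(len(x))]
    return transpose(x) if tall else x


def unit_rms(update: Matrix) -> Matrix:
    """Rescale so the mean squared entry is 1 (assumed reading of 'unit variance per param')."""
    count = sum(len(row) for row in update)
    rms = math.sqrt(sum(v * v for row in update for v in row) / count) + 1e-12
    return [[v / rms for v in row] for row in update]


def muon_step(weight: Matrix, grad: Matrix, momentum: Matrix, lr: float = 0.02, beta: float = 0.95) -> None:
    """One in-place Muon-style update with a constant lr (no warmup)."""
    for i, row in enumerate(grad):
        for j, g_ij in enumerate(row):
            momentum[i][j] = beta * momentum[i][j] + g_ij
    update = unit_rms(newton_schulz5(momentum))
    for i, row in enumerate(update):
        for j, u in enumerate(row):
            weight[i][j] -= lr * u
```

With unit-RMS updates, the per-step change in every weight matrix has RMS equal to `lr` regardless of the matrix shape, which is one plausible reason a single learning rate can be used without warmup.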

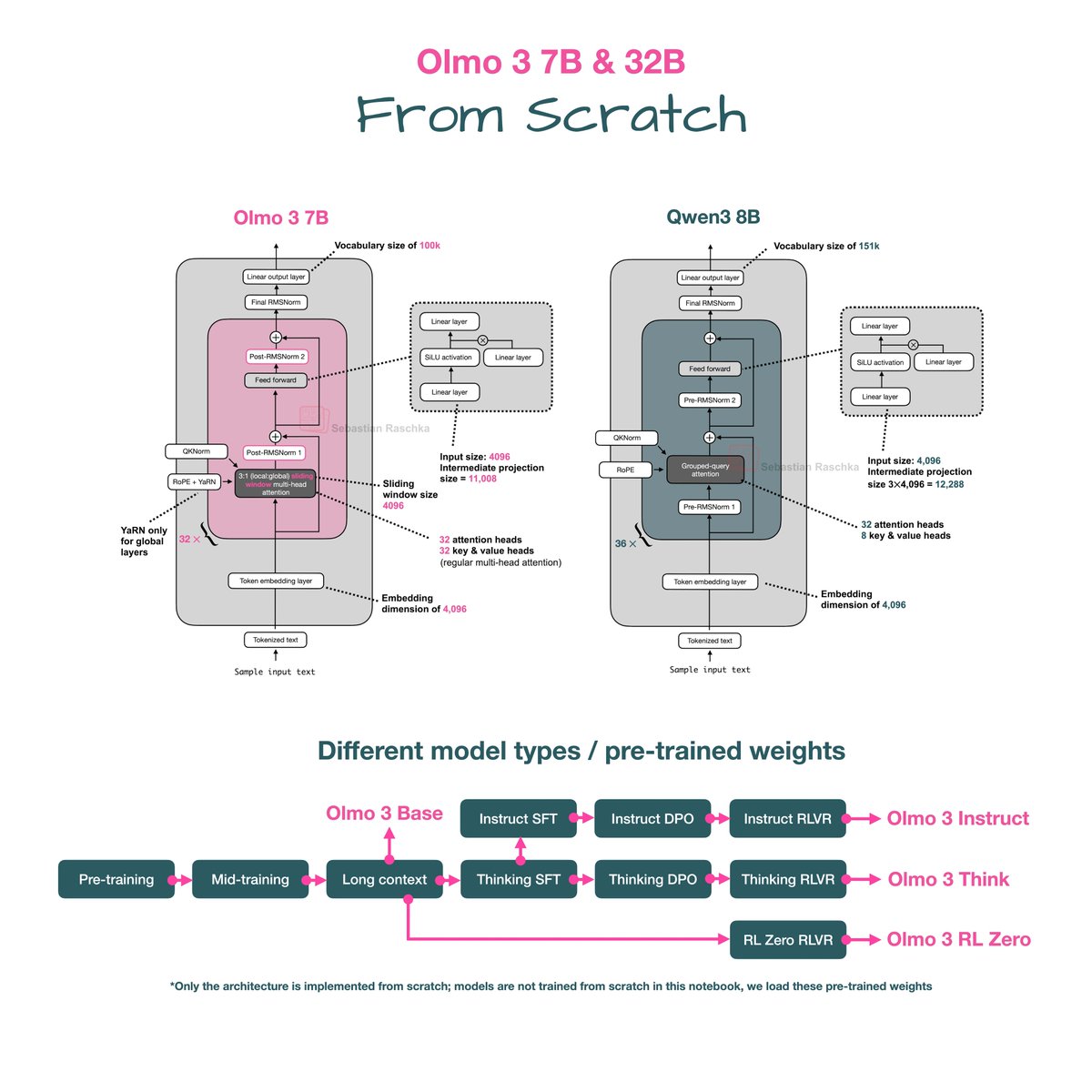

Olmo models are always a highlight because they are fully transparent and come with nice, detailed technical reports. I am sure I'll talk more about the interesting training-related aspects of that 100-pager in the coming days and weeks. In the meantime, here's a side-by-side architecture comparison with Qwen3.

1) As we can see, the Olmo 3 architecture is relatively similar to Qwen3. However, it's worth noting that this is most likely inherited from its Olmo 2 predecessor rather than inspired by Qwen3.

2) Like Olmo 2, Olmo 3 still uses a post-norm flavor instead of pre-norm, since the Olmo 2 paper found that it stabilizes training.

3) Interestingly, the 7B model still uses multi-head attention, as in Olmo 2. However, to make things more efficient and shrink the KV cache, it now uses sliding window attention (similar to Gemma 3).

Next, let's look at the 32B model.

4) Overall, it's the same architecture, just scaled up. The proportions (e.g., the expansion from the input size to the intermediate size in the feed-forward layer) also roughly match those in Qwen3.

5) My guess is that the architecture was initially somewhat smaller than Qwen3 due to the smaller vocabulary, and they then increased the intermediate size expansion from 5x in Qwen3 to 5.4x in Olmo 3 to get a 32B model for a direct comparison.

6) Also, note that the 32B model (finally!) uses grouped query attention.
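Points 3 and 6 are both about KV-cache size. The sketch below works through the arithmetic for one sequence under placeholder dimensions (32 layers, 32 heads, head_dim 128, 64K context, bf16, a 4096-token window, and a 3:1 split of sliding-window to full-attention layers are all assumptions for illustration, not Olmo 3’s published config). It also shows the causal sliding-window mask. `kv_cache_bytes` and `sliding_window_mask` are hypothetical helpers.

```python
from typing import List, Optional


def kv_cache_bytes(context_len: int, num_kv_heads: int, head_dim: int, global_layers: int,
                   local_layers: int = 0, window: Optional[int] = None, bytes_per_elem: int = 2) -> int:
    """K and V cache size for one sequence.

    Global (full-attention) layers cache every token; local (sliding-window)
    layers cache at most `window` tokens.
    """
    per_token_per_layer = 2 * num_kv_heads * head_dim * bytes_per_elem  # 2 = one K and one V
    local_tokens = min(context_len, window) if window is not None else context_len
    return per_token_per_layer * (global_layers * context_len + local_layers * local_tokens)


def sliding_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """mask[i][j] is True when query i may attend to key j (causal, last `window` keys only)."""
    return [[0 <= i - j < window for j in range(seq_len)] for i in range(seq_len)]


GiB = 2 ** 30
mha = kv_cache_bytes(65536, 32, 128, global_layers=32)  # every layer full MHA
gqa = kv_cache_bytes(65536, 8, 128, global_layers=32)   # GQA with 8 KV heads
swa = kv_cache_bytes(65536, 32, 128, global_layers=8, local_layers=24, window=4096)
print(mha / GiB, gqa / GiB, swa / GiB)  # 32.0 8.0 9.5
```

Under these assumptions, GQA shrinks the cache by the ratio of query heads to KV heads (4x here) at every context length, while sliding windows only pay off once the context is much longer than the window.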

hillclimb (@hillclimbai) is the human superintelligence community, dedicated to building golden datasets for AGI. Starting with math, their team of IMO medalists, Lean experts, and PhDs is designing RL environments for @NousResearch.

This was 6 years ago 🤯 We grew from $15M to $65M that year and were planning for $125M in 2020. 6 months later, a virus changed the world. Garry stood by us through it all.

most diffusion LLMs out there don’t really do diffusion: they just predict the next token in a randomized order. eventually people will realize that there are much smarter ways to do this.
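To make the critique concrete, the sketch below is the decoding loop the post describes: start from an all-masked sequence and fill one position per step in a randomly chosen order, each prediction conditioned on the tokens filled so far. That is autoregressive generation over a shuffled order rather than iterative refinement of the whole sequence. `random_order_decode` is a hypothetical helper and `predict_token` a hypothetical stand-in for a model call; actual diffusion LLMs differ in details (for example, how many positions they unmask per step and how they pick them).

```python
import random
from typing import Callable, List, Optional

MASK = None  # placeholder for a not-yet-generated position


def random_order_decode(length: int,
                        predict_token: Callable[[List[Optional[str]], int], str],
                        seed: int = 0) -> List[Optional[str]]:
    """Fill a fully masked sequence one position at a time, in a random order."""
    rng = random.Random(seed)
    seq: List[Optional[str]] = [MASK] * length
    order = list(range(length))
    rng.shuffle(order)  # the generation order is fixed up front, just not left-to-right
    for pos in order:
        # each step commits exactly one token, conditioned on everything filled so far
        seq[pos] = predict_token(seq, pos)
    return seq


# Hypothetical stand-in for a model: emits a token naming its position.
print(random_order_decode(4, lambda seq, pos: f"t{pos}"))  # ['t0', 't1', 't2', 't3']
```

Once a token is committed it is never revisited, which is exactly the property that separates this loop from a process that repeatedly denoises every position.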