Daniel Norberg

1.3K posts

@dnorberg

Software engineer at https://t.co/FFQzyWhc2V. previously at https://t.co/EjBbnKeOPg. Performance and scalability fanatic. [email protected]

Stockholm, Sweden · Joined November 2008

204 Following · 396 Followers

Daniel Norberg retweeted

Very excited about all the hard infra problems we're solving at Modal, and still feels like we're just getting started!

Erik Bernhardsson@bernhardsson

It's true – @modal has raised a $87M Series B at a $1.1B valuation to advance the future of AI infrastructure. Thank you to @Lux_Capital, @Redpoint, @AmplifyPartners, and others. Now more than ever, AI demands a complete reinvention of traditional compute infrastructure

@MaximePeabody @bernhardsson We’re actively working on improving Volume scalability.

I spent the past week testing modal out on a couple projects - it's nice!

I'm curious, what's the target market?

For side projects it seems great because it's way easier to deploy code to gpus on modal than anything else I've tried, especially AWS/GCP.

But for scale, I noticed that the Volume for example only handles ~50k files, which seems pretty low?

Easiest way if people want Modal stock is to join us! jobs.ashbyhq.com/modal

jason liu@jxnlco

How do I buy @modal_labs stock

@bernhardsson This is why my next language will have only types, no names or values.

@bernhardsson @modal_labs Not in rust but I imagine it’s pretty straightforward!

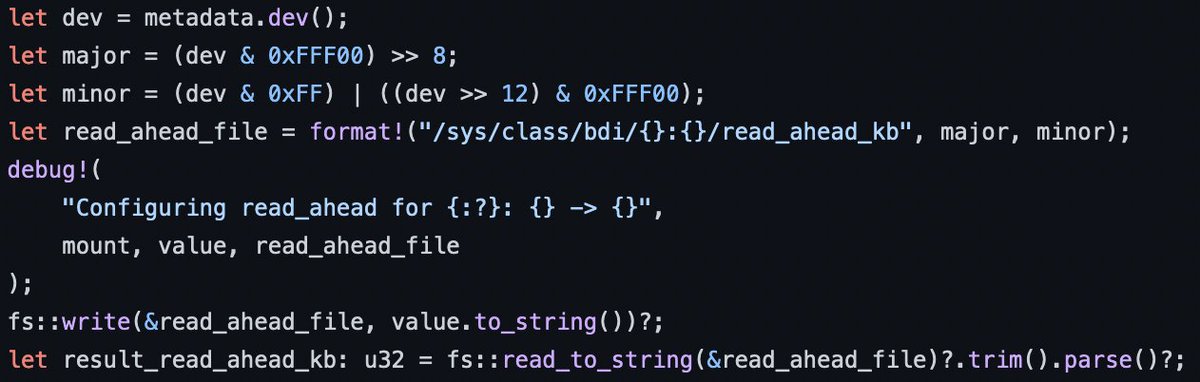

We just spent an engineer-week or two at @modal_labs working on a performance optimization that basically boiled down to a few lines making all file operations 2x faster!

(Deployed on Friday evening so we get a full weekend of data to analyze!)

Small thread of non-obvious things I miss in Sweden when in Singapore and vice versa.

1. The different and lighter shades of green and yellow in nature. Singapore is much more dark green and dark brown.

Petter Weiderholm@pweiderholm

I will write a little thread of the non obvious things I miss with Sweden

@normanmaurer Will netty 4 and 5 be able to coexist on the classpath?

Love that we have the ability to finally break APIs in #netty 5 :) github.com/netty/netty/pu…

Daniel Norberg retweeted