Pinned Tweet

Don Karter

269 posts

@donk8r

Making dev life simpler with AI tools. Founder @muvonteam. Open source & Rust.

Joined April 2026

30 Following · 21 Followers

@bindureddy Beats only in bench but fails in real cases to be honest

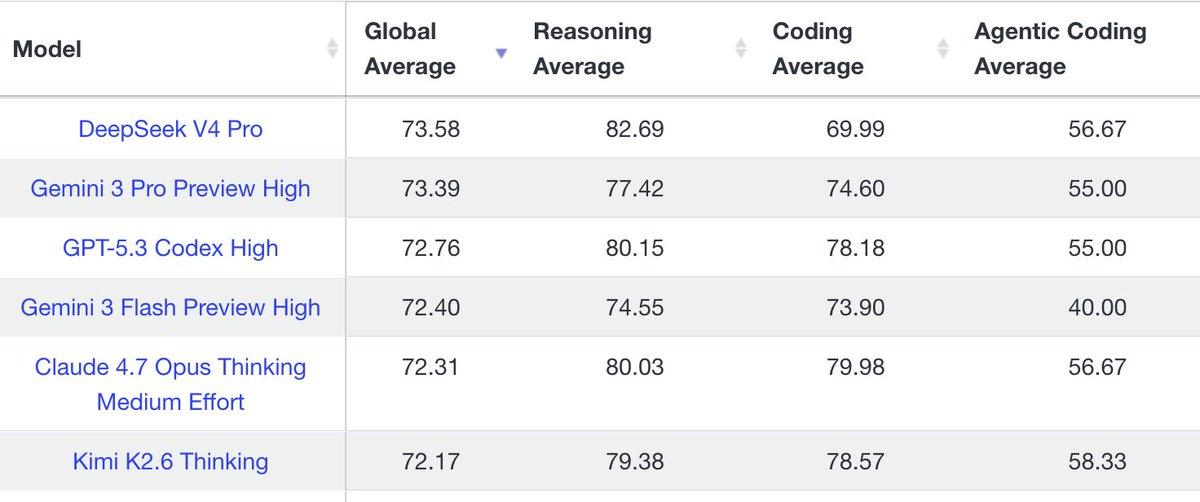

DeepSeek V4 Beats Opus 4.7 And GPT 5.5 To Become The World's Best Open Source Model

DeepSeek V4 Pro is the NEW KING of open source.

- better and 10x cheaper than Opus 4.7 and GPT 5.5 medium

- outperforms Kimi 2.6 thinking

- much faster than any of the other big models

It's literally the best open source model in the world and months away from GPT-5.5 xHigh.

Don Karter retweeted

@icanvardar If you do not hold it like this you just know how to make it work with closed cover :)

@HsanC_ Any free channel you could advise? In your opinion, what's the best way to start finding your first customer when you have no influence?

@donk8r you just try all the channels and double down on what's working

@dharmeshba @opencode How fast is it? No lagging on turns? Thinking about trying their plan too

Claude rate limits suck!

Just get @opencode Go plan for $10 and use Kimi 2.6

Lit 🔥

@foundrceo Building Vext getvext.app

Free to try, buy once, yours forever.

Running locally on Mac M1+

Rebuilding is the trap, but so is prompt brittleness.

The real win with Kimi: you can architect for retrieval quality instead of model capacity. Cheaper models + better retrieval beats expensive models + lazy retrieval.

That's the hidden cost nobody talks about — not the prompts, the pipeline.

Cancelled both my Claude Code Pro and ChatGPT Pro for this.

Kimi K2.6 is just as good for my side projects as Opus or GPT 5.4 were. The price for this is crazy low, and there are a bunch of models I can try (like DeepSeek).

Bonus: I'm moving away from building everything on Claude Code - now that both @opencode and @cursor_ai have their SDKs open, I feel I can rebuild the agentic workflows I built for Claude Code in a more platform-independent manner.

Lotto @LottoLabs

Update on Opencode Go: it’s great value for $5/month, there’s really no reason not to do the first month. At $10/month it’s still good value and gets you access to all SOTA OS models. You can’t daily drive it without hitting limits on the big models, but w/ Kimi x3 you won’t hit limits unless you’re insane. Overall, highly recommend the first month, then make your own decision.

@shub0414 The real play is token efficiency.

We cut token spend 60% on the same Claude instance by fixing retrieval. No model change. Just better context.

When subsidies end, the teams that built tight retrieval + chunking strategies will ship for pennies. The rest will be priced out.

Unpopular opinion: Your $20/month AI subscription is a lie.

You’re living on VC handouts. OpenAI and Anthropic are burning billions to subsidize your productivity. That money will run out.

When the subsidies dry up, the cost of "intelligence" will skyrocket.

The play? Build your agents and workflows now while compute is pennies on the dollar.

Lock in your advantage before the price tag catches up to the tech.

@rohanpaul_ai The real issue is retrieval quality in agent loops.

We tested: agents with noisy context get stuck debating bad info. Same agents with clean, ranked retrieval converge in 3 rounds.

It's not the model. It's what enters the context window.

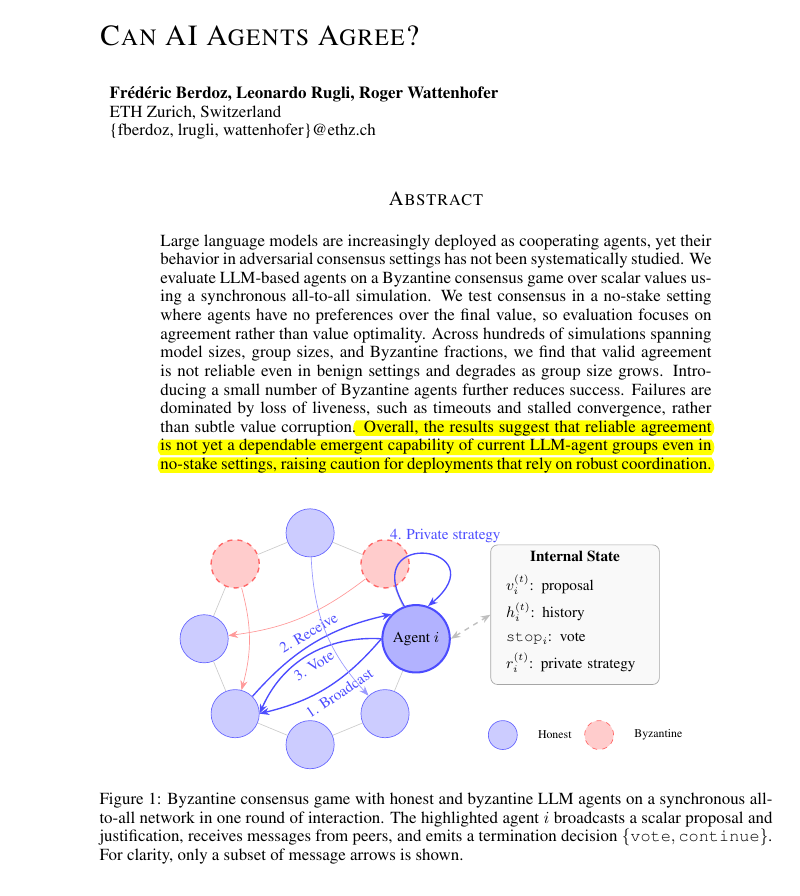

Research proves that current AI agent groups cannot reliably coordinate or agree on simple decisions.

Building teams of AI agents that can consistently agree on a final decision is surprisingly difficult for LLMs.

But the problem is that developers frequently assume that if you have enough AI agents working together, they will eventually figure out how to solve a problem by talking it through.

This paper shows that this assumption is currently wrong. Even in a friendly environment where every agent is trying to help, the team often gets stuck or stops responding entirely. Because this happens more often as the group gets bigger, it means we cannot yet trust these agent systems to handle tasks where they must agree on a correct answer.

----

Paper Link – arxiv.org/abs/2603.01213

Paper Title: "Can AI Agents Agree?"

@sama Disagree slightly — it's not smarter vs cheaper, it's context quality vs model size.

We tested this: same Claude instance, same prompt, different retrieval strategy. 60% token reduction. No model change.

The bottleneck isn't the model. It's what enters the context window.
