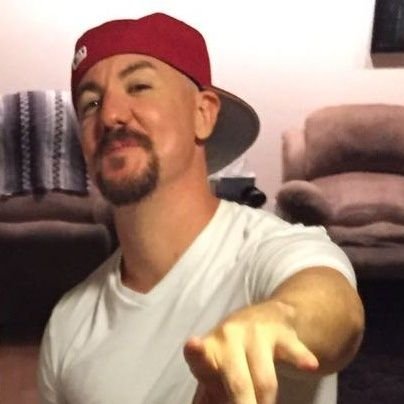

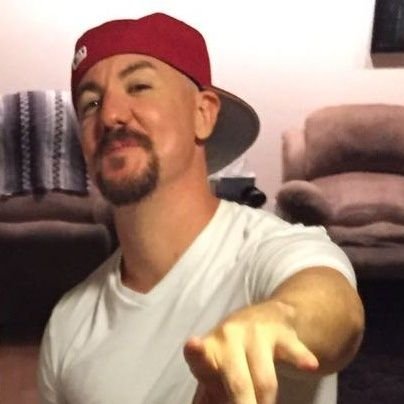

Ronnie Baby

10.7K posts

@dougjamesm

Normal dude, floating on a rock, in a solar system, revolving around a supermassive black hole. I am a fraction of a speck of dust, the center of nothing.

A viewer demanded I touch the grass behind me to prove it wasn’t CGI on a green screen.

God is great.

FOX: Among the crew are the first woman, first person of color and first Canadian on a lunar mission. There's a lot of firsts happening.

🚨BOMBSHELL🚨 Former Capitol Police officer Shauni Kerkhoff failed a November FBI polygraph test when asked if she placed pipe bombs on Jan. 5, 2021, a court filing states.

Anyone who experienced the Space Shuttle Challenger explosion, like I did, is probably a bit hesitant to get too excited about the Artemis II launch today. I truly hope our children don’t have to experience such tragedy. May the Universe welcome them and return them safely home 🙏