Yingtong Dou

188 posts

@dozee_sim

Research Scientist @Visa. CS Ph.D. @UICCS. Foundation Model & Anomaly/Fraud Detection. Opinions are my own.

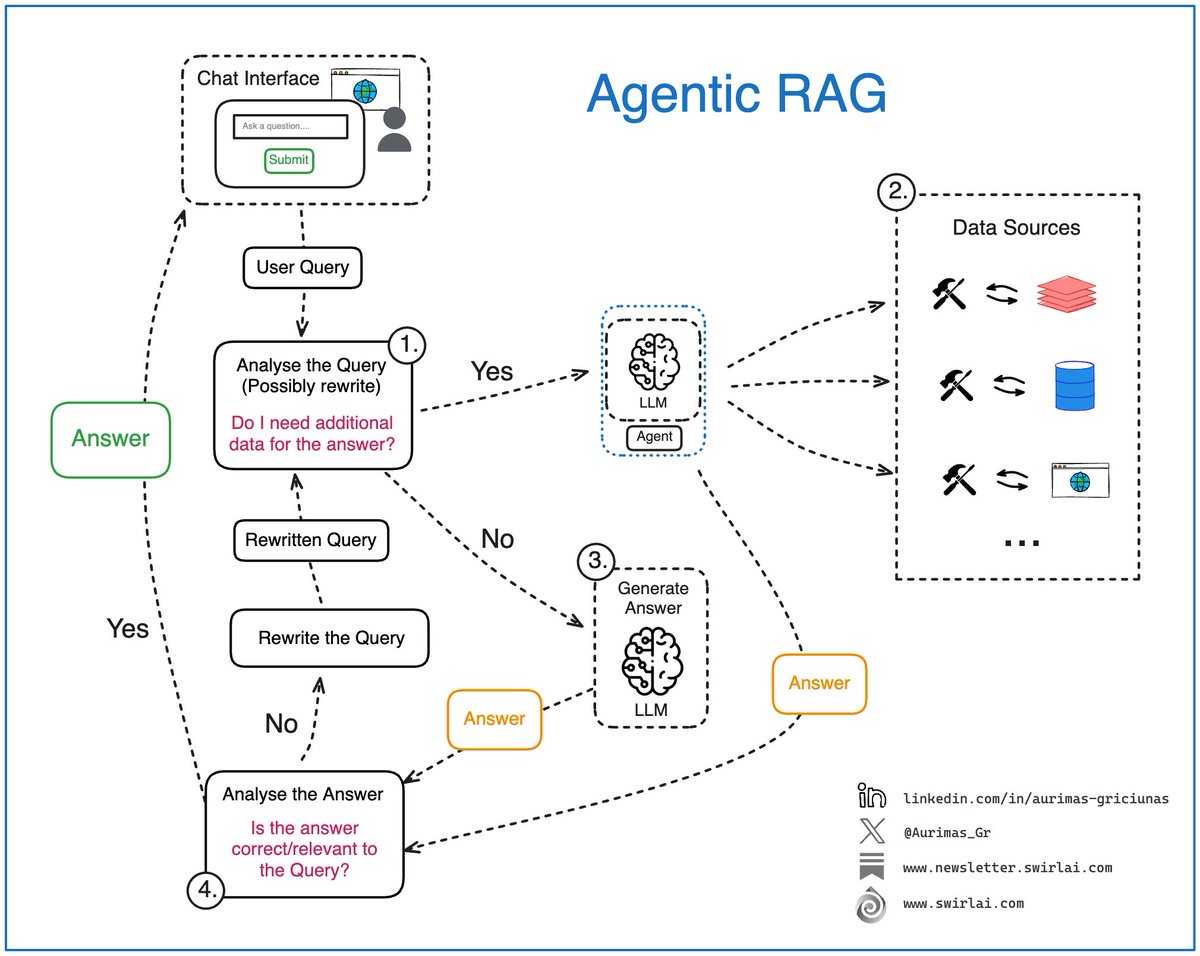

Thanks @_akhaliq for sharing our work! MobileLLM-R1 marks a paradigm shift. Conventional wisdom suggests that reasoning only emerges after training on massive amounts of data, but we prove otherwise. With just 4.2T pre-training tokens and a small amount of post-training, MobileLLM-R1 demonstrates strong reasoning ability. Despite using only 4.2T tokens, 11.7% of the 36T pre-training tokens Qwen used, it delivers remarkable performance. Collaborating with @erniecyc, Changsheng, et al.

It's a bit sad and confusing that LLMs ("Large Language Models") have little to do with language; the name is just historical. They are highly general-purpose technology for statistical modeling of token streams. A better name would be Autoregressive Transformers or something. They don't care if the tokens happen to represent little text chunks. It could just as well be little image patches, audio chunks, action choices, molecules, or whatever. If you can reduce your problem to that of modeling token streams (for an arbitrary vocabulary of discrete tokens), you can "throw an LLM at it". Actually, as the LLM stack becomes more and more mature, we may see a convergence of a large number of problems into this modeling paradigm. That is, the problem is fixed at that of "next token prediction" with an LLM; it's just the usage/meaning of the tokens that changes per domain. If that is the case, it's also possible that deep learning frameworks (e.g. PyTorch and friends) are way too general for what most problems want to look like over time. What's up with thousands of ops and layers that you can reconfigure arbitrarily if 80% of problems just want to use an LLM? I don't think this is true but I think it's half true.
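
To make the "the tokens can be anything" point concrete, here is a minimal sketch, not taken from the post. It assumes a toy bigram counter as a stand-in for the model (it is not a Transformer and not an LLM), and the names `fit_bigram`, `predict_next`, and both example streams are hypothetical. The only thing it illustrates is that the same next-token code runs unchanged on text chunks and on action choices.

```python
from collections import Counter, defaultdict


def fit_bigram(stream):
    """Count how often each token is followed by each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(stream, stream[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, token):
    """Return the most frequent successor of `token`, or None if unseen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Two unrelated "vocabularies" (illustrative data): words and robot actions.
# Tokens only need to be hashable; the model never looks inside them.
text_tokens = ["the", "cat", "sat", "on", "the", "cat"]
action_tokens = [("move", 1), ("turn", 90), ("move", 1), ("turn", 90)]

text_model = fit_bigram(text_tokens)
action_model = fit_bigram(action_tokens)

print(predict_next(text_model, "the"))
print(predict_next(action_model, ("move", 1)))
```

A real system would swap the counter for a learned autoregressive model, but the interface stays the same: a stream of discrete tokens goes in, and a prediction for the next token comes out.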