Drew Breunig

15.7K posts

@dbreunig

Writing about and working on AI, geo, and data.

🚨 Typical RL algorithms and on-policy distillation methods are blind samplers: they use privileged info to score rollouts, but not to *find* them. We ask: can we use privileged info to *actively sample* the rollouts RL wishes it could stumble upon with compute? ⤵️ Pedagogical RL

Mythos has cracked MacOS. It took five days.

As a business practice, it is sensible and fine imo to subsidize only your own harness/apps, but the fact that it is technically unenforceable makes this all very funny to watch.

Blog: "The Agent Is a Workflow That Writes Itself." How we productionized the RLM with durable execution. Subagents lower to child workflows and PTC runs through a deterministic workflow-space interpreter. Every tool, subagent, and PTC call goes through a single recursive dispatch loop. Closure under replay, retry, cancel. We call these durable agents.

Reinforcing Recursive Language Models. Can a 4B model learn to recursively call itself to answer hard long-context questions? We RL fine-tuned a small model to behave as a native RLM. On evidence selection across scientific papers, our 4B RLM matches Sonnet 4.6 in quality while running significantly faster and cheaper.

Meet the 2026 cohort of KH scholars! These 87 new scholars make up the most global Knight-Hennessy Scholars cohort to date, and will pursue degrees in 45 graduate programs across all seven graduate schools at @Stanford: knight-hennessy.stanford.edu/news/knight-he… (1/2)

🎤 Keynote announcement: @percyliang (Percy Liang), Professor of Computer Science at @Stanford, founding director of the Center for Research on Foundation Models, and co-founder of @togethercompute, is keynoting #CAIS2026. Percy's HELM framework set the standard for holistic evaluation of language models, and his Foundation Model Transparency Index (now in its third year) put every major AI lab on notice for what they do and don't disclose. His current work on Marin takes this further: an open lab where every experiment, successful or not, is public from day one. This one's going to be good. San Jose · May 26–29 caisconf.org

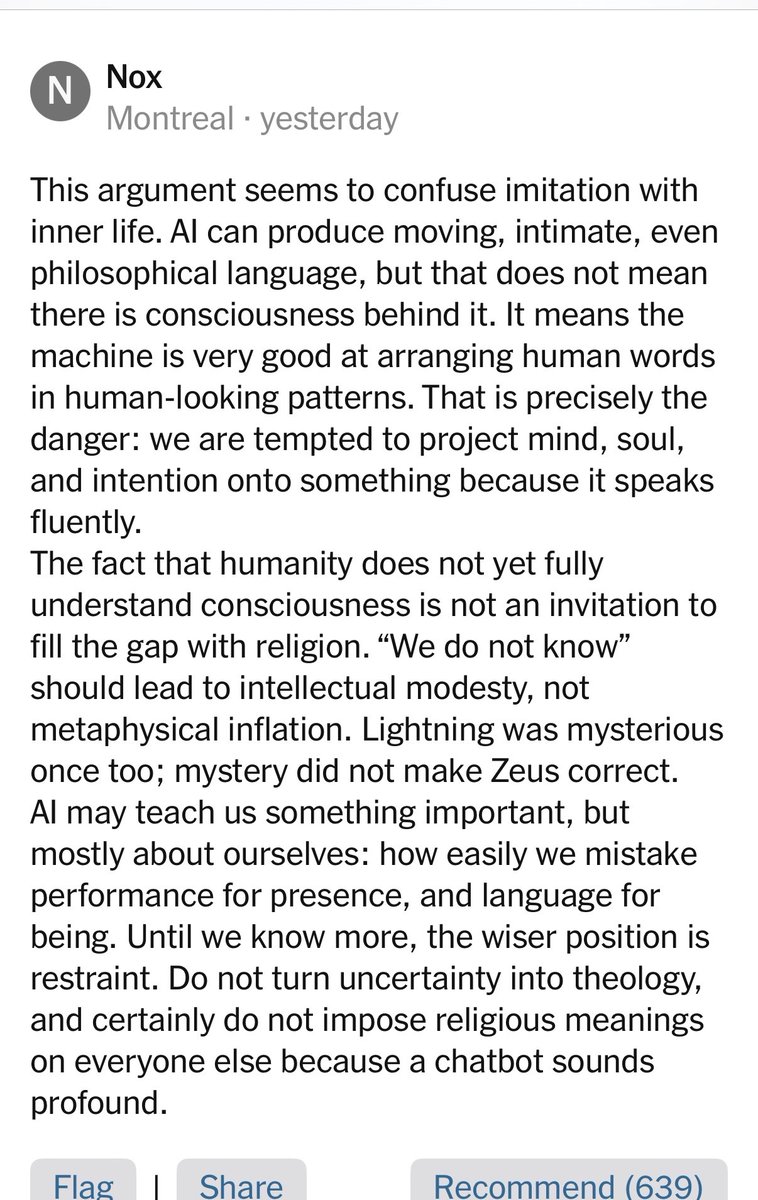

This reader comment on a NY Times column where Ross Douthat ponders that maybe God is speaking to us through A.I. is an absolute fastball on the corner with movement, and deserves a column. "Lightening was once mysterious too; mystery did not make Zeus correct" is perfect.