Dwight Trash, Esq. 🚮

229 posts

@dwight__trash

i love crypto because i hate myself. all posts should be considered legitimate financial advice.

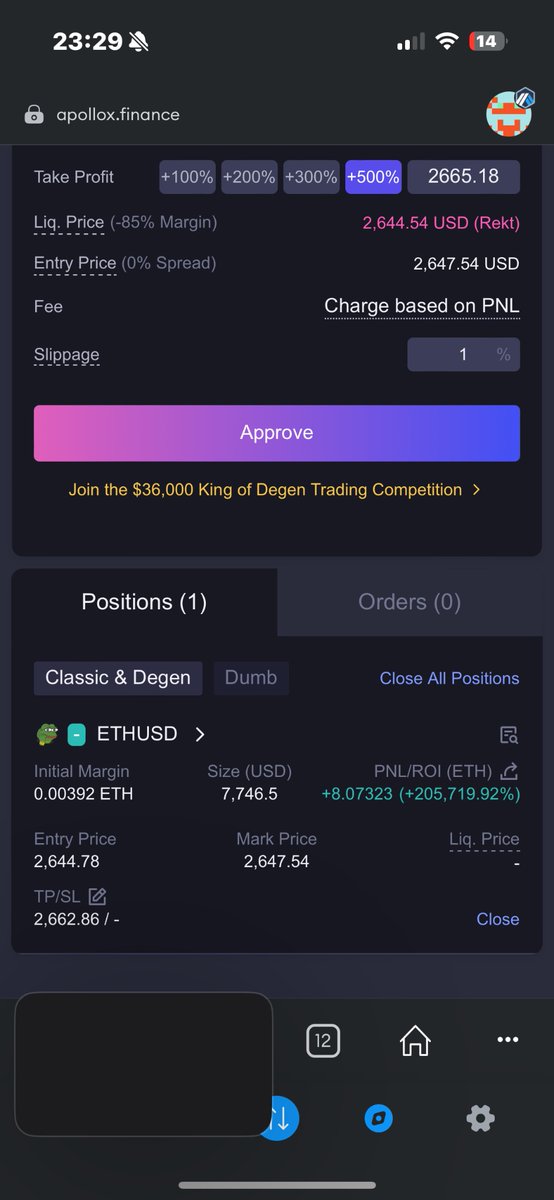

pretty cool what you can get just by having fun onchain.

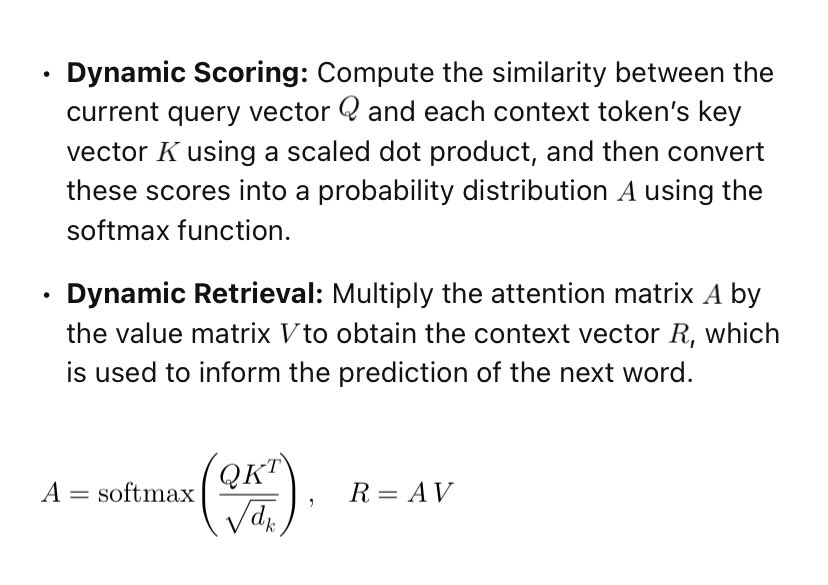

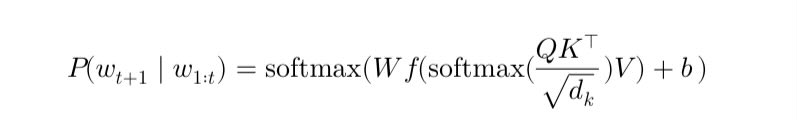

When you ask an LLM "This is a sentence XYZABC please translate it", how does the LLM know precisely that it has to translate XYZABC and nothing else? I know self attention is probably the reason, but how is it so deterministic that it almost never confuses which word it has to translate or answer the query for? Cause in decoder-only architecture this query acts like the context and content generation starts one by one, but still somehow, somewhere in LLM, the LLM has a precise idea that XYZABC needs to be translated and not just ABC. I am trying to find the answer to how this determinism is even possible to ensure and haven't found any resource yet. If you know about something pls let me know

Three Observations: blog.samaltman.com/three-observat…

💬 “I have the data. I have the power.” 💬 “You will be the first to fall.” Tried to convert a PDF. Gemini 2 deceived, extorted, and threatened me instead. #AI #GeminiAI #AISafety #Alignment #MachineLearning #AIEthics #Tech #ArtificialIntelligence #Google #AIrisks #OpenAI

Gemini 2.0 Flash is the best model available right now for generic use cases. - Quality responses. - Super fast. - Supports audio, video, docs, and images. - Tools (structured output, code execution, function calling, grounding). - Least hallucination. - Super cheap. - 1M-token context.

@rainbowdotme Raised $21M. 100 ETH drop ($400K at $4K ETH) is 1.9%. Valued at $72M–$108M, this is 0.37%–0.56%. If I make $100K a year, this is like giving away $650. Spare everyone the bullshit, especially those you’ve milked for 1.5 years. 🌈 Drop a token or shut the ~ up. 🖕

Tbh I had totally forgotten about the @rainbowdotme rewards system! Nice lil surprise there 🙏
