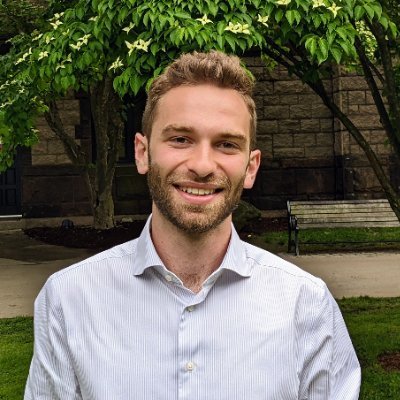

Ehsan Adeli

1.1K posts

@eadeli

Prof. at @Stanford @StanfordMed @StanfordPSY; @StanfordSVL; @StanfordAILab; @StanfordTAIL. AI, Computer Vision, Neuroscience, Healthcare, Medical Image Analysis.

🩺 The 12th Medical Computer Vision (MCV) Workshop is coming to #CVPR2026! 📅 June 3, 2026 | Denver, CO. For over a decade, MCV has united computer vision & medicine. This year: 9 world-class speakers and a full keynote series. See you in Denver! 🏔️ #CVPR2026 #MedicalAI #MedicalImaging #CV

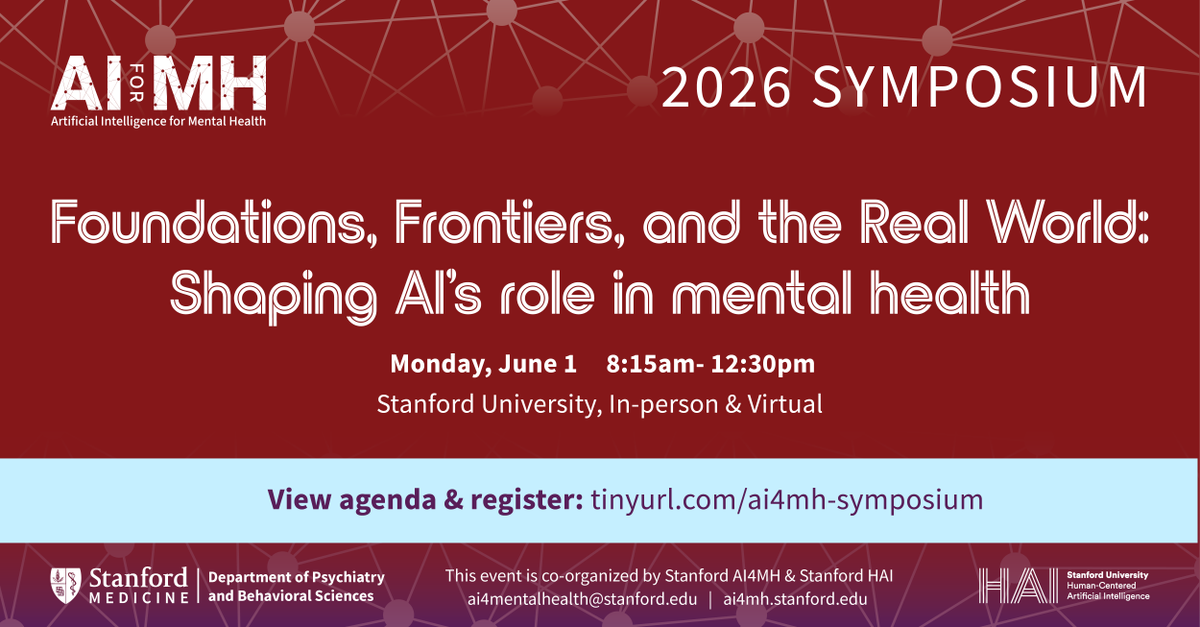

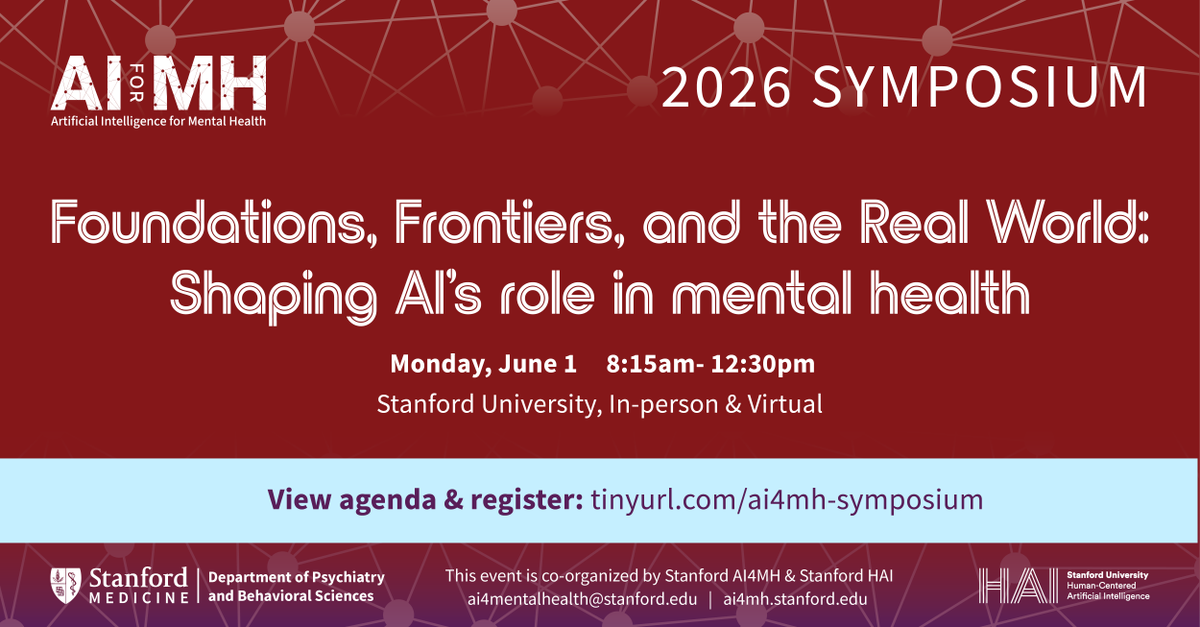

As part of the @Stanford Health AI Week, join us for the inaugural AI for Mental Health (AI4MH) Symposium on June 1. The event will explore how AI is shaping mental health – from foundational advances to real-world implementation and responsible use. med.stanford.edu/psychiatry/spe…

I want to believe that this is not true, and that there is a way to make peer review constructive, even when we don’t support a manuscript in its totality (or at all). I don’t actually think it’s that difficult; I have tried very hard to model this with my own behavior. (8/10)

Can you identify AI-generated videos by watching how the human figures in them move? We introduce 🏃‍♂️HumanScore💃, a benchmark for evaluating human motion in generated videos through biomechanics, not just visual realism. Check out our project page: humanscore.stanford.edu #AI #ComputerVision #VideoGeneration #StanfordAI

It’s my 11th year and counting! Teaching the first lecture of @cs231n every year has been a highlight of my spring seasons. As usual, I asked students which departments or schools at @Stanford they come from. Increasingly, students raise their hands to indicate that they come from all seven schools on campus, from @StanfordEng to @StanfordMed @StanfordHumSci @StanfordGSB @StanfordLaw @StanfordEd @stanforddoerr. AI is truly a horizontal technology that excites students across all backgrounds and disciplines!🤩

@interminded @heygurisingh Hi, thanks for your interest in this work! The prompt you tried is from the “benchmark evaluation” section; it is meant to be used as the “system” prompt in an API call, followed by a question. To see the effect in chat, you could try something like this: chatgpt.com/share/69b1ed8d…

Beware of large AI models, especially in biology and healthcare. They can make accurate predictions using all kinds of shortcuts & spurious reasoning. This is very important work.