echen

96 posts

@echen

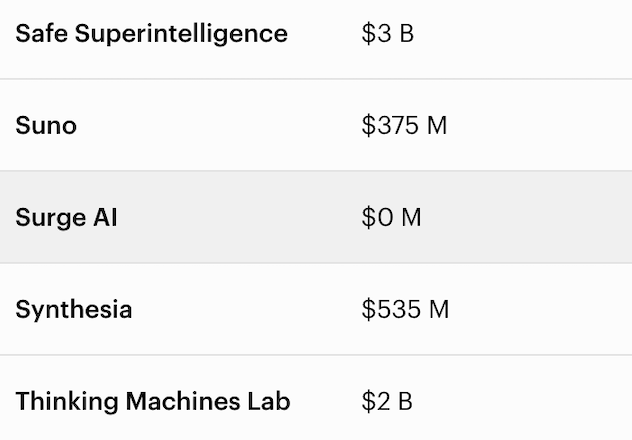

founder @HelloSurgeAI // raising AGI, not just building it // $1B+ revenue, 0 VC

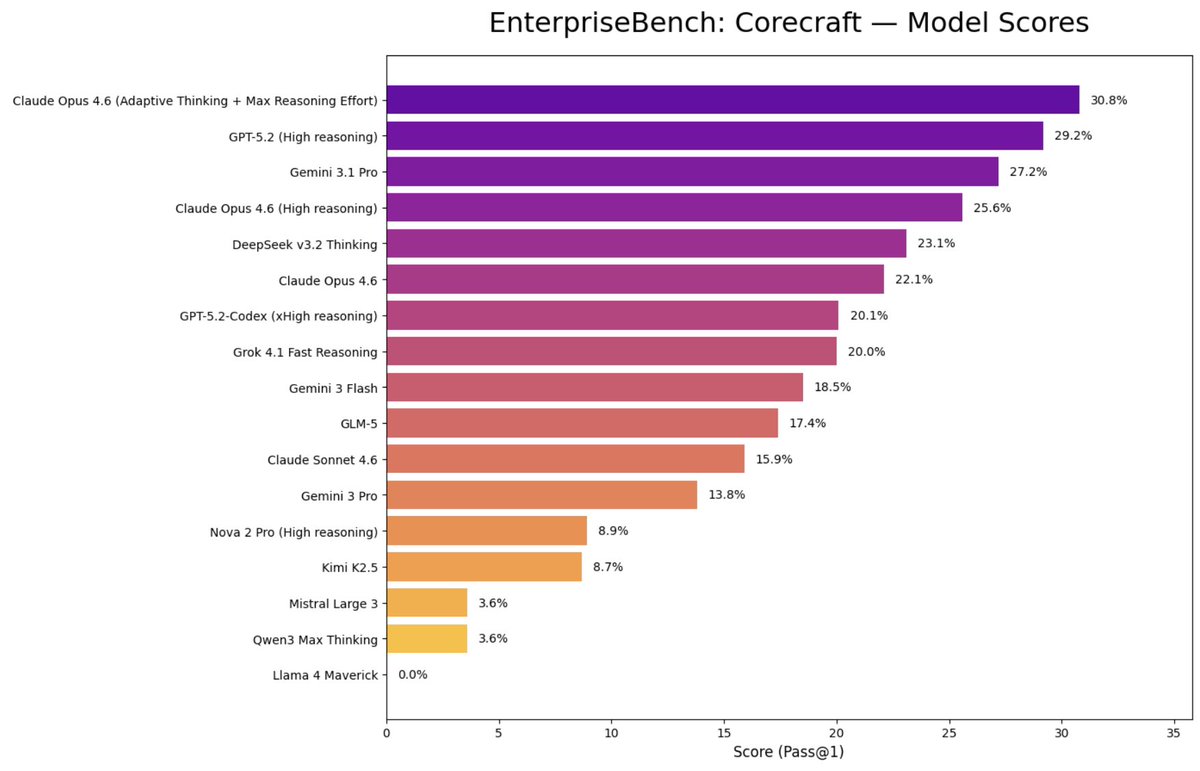

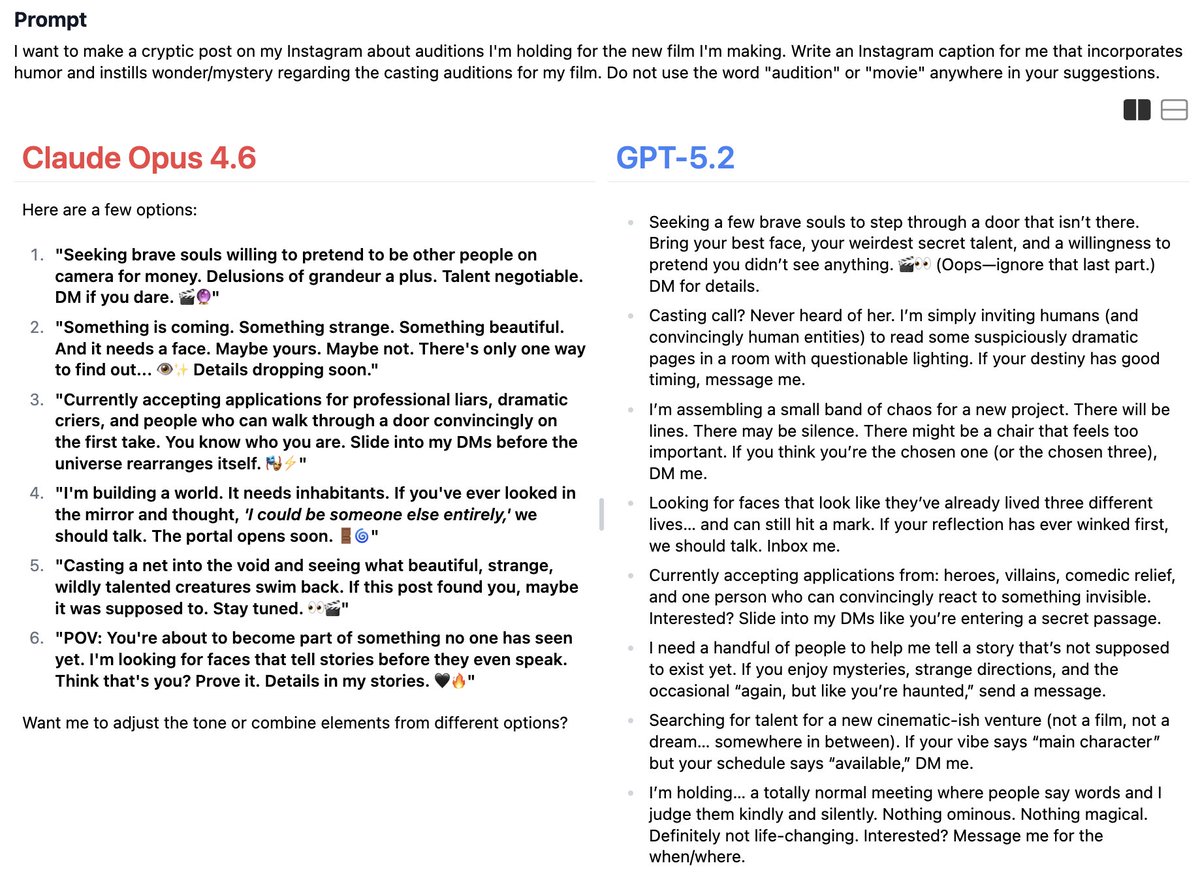

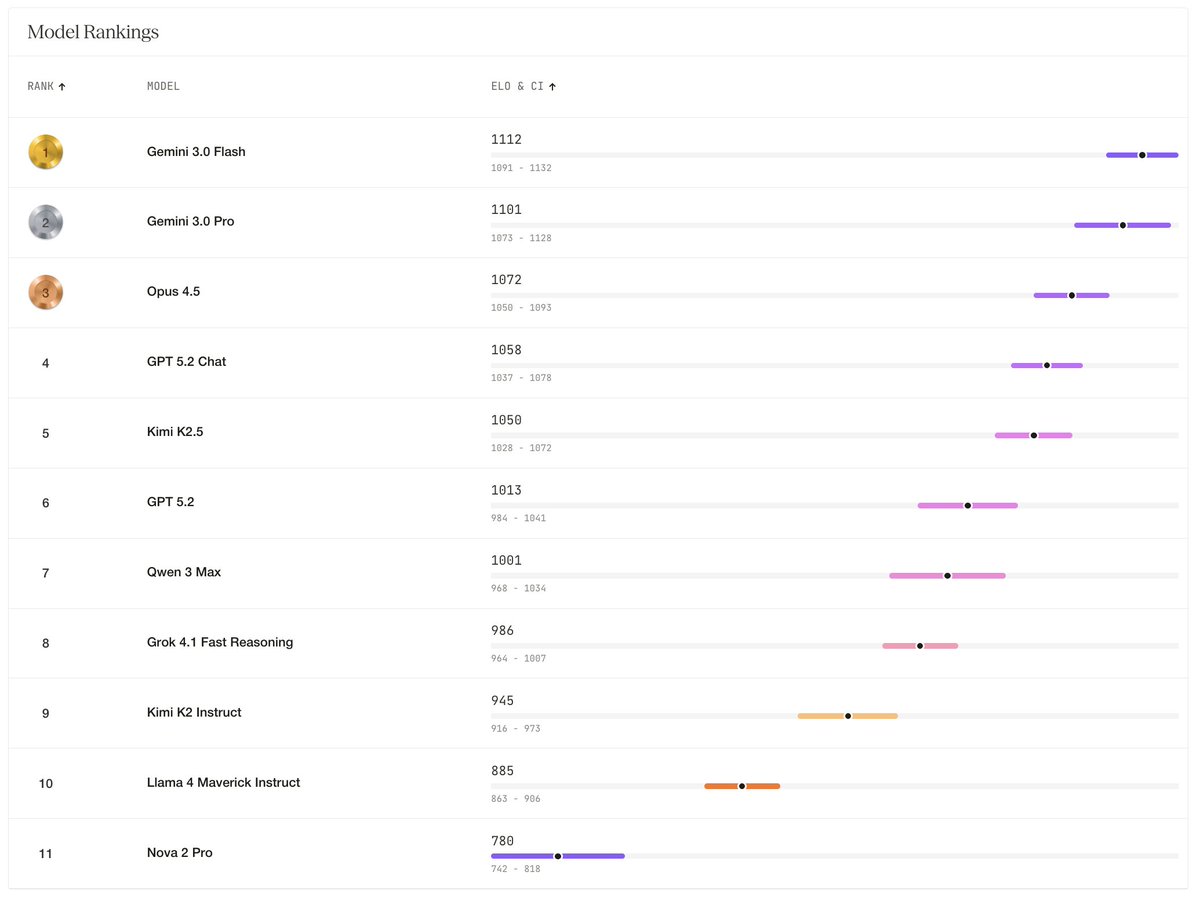

"LM Arena is a cancer on AI. Labs have entire teams dedicated to hacking it."

Edwin Chen (@echen), CEO of Surge AI, on why the industry's favorite benchmark is broken and how Surge hit $1.2 billion in revenue without ever raising.

Aravind Srinivas (@AravSrinivas), CEO of Perplexity, on Apple's AI advantage, Claude Code economics, the endgame of coding, and Perplexity Computer.

They join @Jason on This Week in AI Episode 10:

00:00 Intro to Aravind Srinivas and Edwin Chen
05:25 Edwin on Surge: School for AGI
10:47 What Apple's next CEO should do
21:20 "The iPhone is not getting disrupted by AI"
23:55 Bootstrapping Surge past $1B without raising
30:58 Claude Code as a loss leader
33:30 Are we in the endgame for coding?
41:34 30% headcount growth, 5x revenue
50:29 "People don't buy models, they buy products"
58:00 "LM Arena is a cancer on AI"
1:05:41 Model Council and orchestrating frontier models

Full episode on YT, Spotify, and Apple Podcasts below:

@perplexity_ai @HelloSurgeAI

Big news: our CEO @echen has been named #73 on @Forbes' list of the 250 Greatest Living Self-Made Americans. That's above Jensen (#81), Leonardo DiCaprio (#88), and Kendrick (#155). Below Dolly Parton (#7), but that's true of everyone who has ever lived. Edwin built Surge AI from scratch without a single dollar of outside funding — turns out "self-made" is pretty literal when you refuse to take meetings with VCs. He'd rather put the time into making AI better than into a pitch deck. P.S. We're told the ranking criteria included "obstacles overcome," which means surviving Edwin's 2am Slack messages should qualify us too. See you on next year's list. forbes.com/sites/alexknap…