ed_the_engineer

66 posts

@ed_the_engineer

Still grinding for the “yes you’re actually an engineer” badge

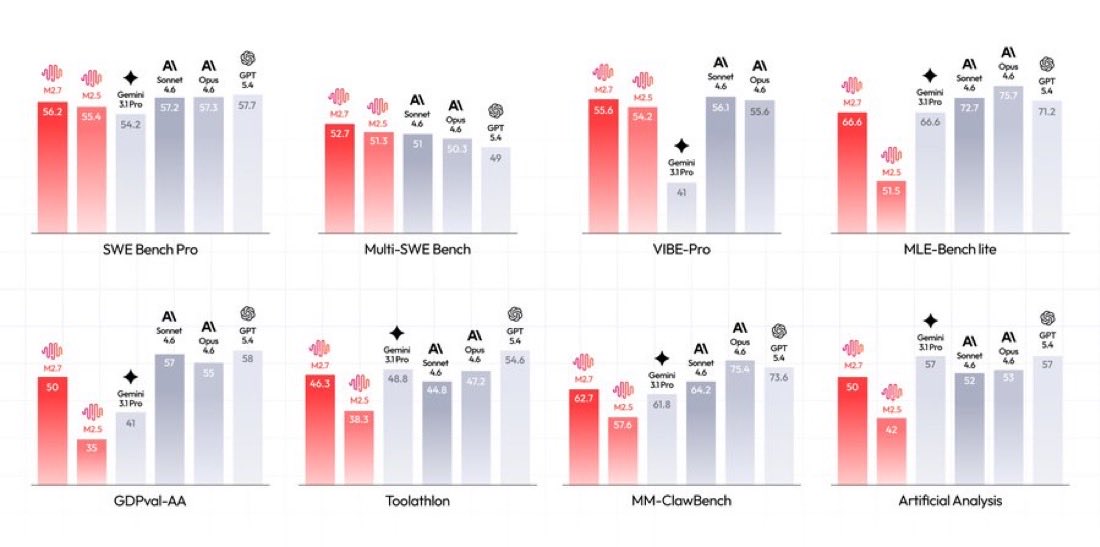

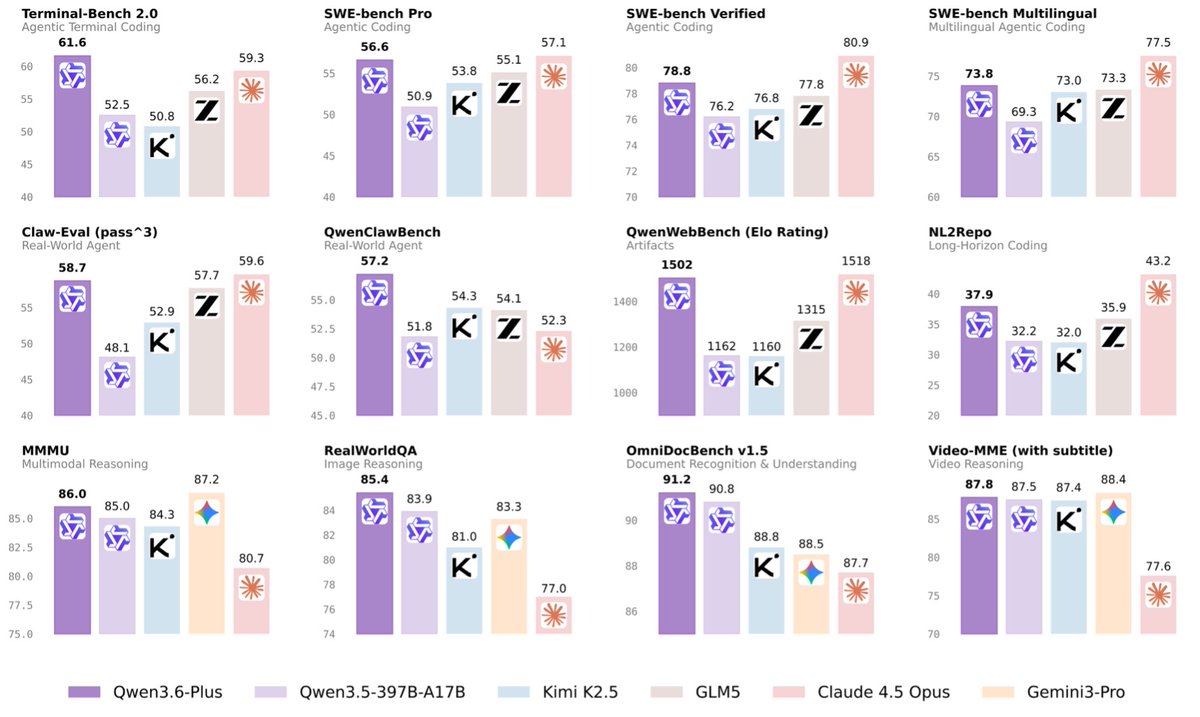

Introducing GLM-5.1: The Next Level of Open Source

- Top-Tier Performance: #1 in open source and #3 globally across SWE-Bench Pro, Terminal-Bench, and NL2Repo.
- Built for Long-Horizon Tasks: Runs autonomously for 8 hours, refining strategies through thousands of iterations.
- Blog: z.ai/blog/glm-5.1
- Weights: huggingface.co/zai-org/GLM-5.1
- API: docs.z.ai/guides/llm/glm…
- Coding Plan: z.ai/subscribe

Coming to chat.z.ai in the next few days.

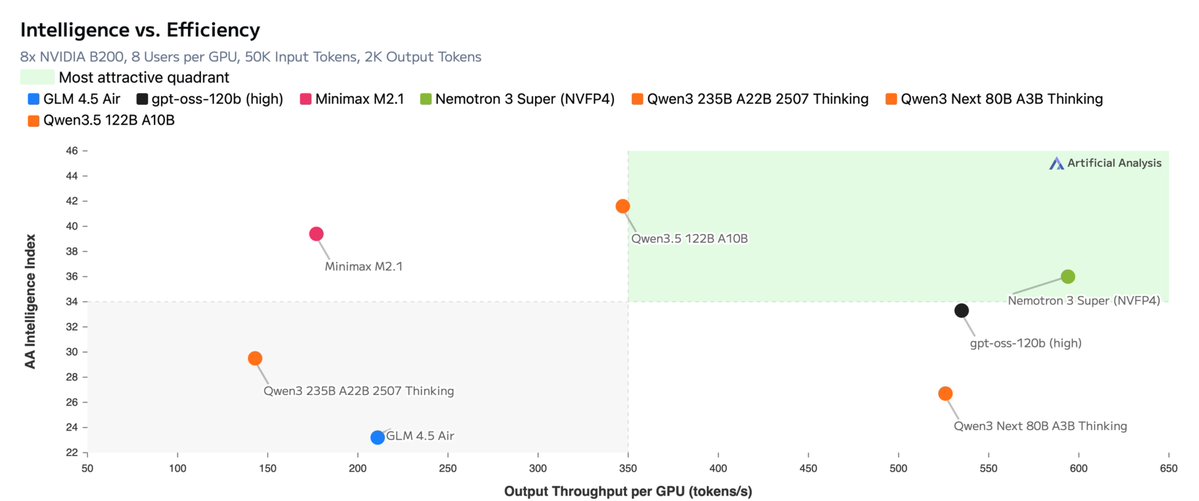

🧵 I just saw the future of AI-powered agentic coding. 10,000+ tokens/second (up to 17K tokens/second). Almost realtime agentic execution. I got early API access from @taalas_inc and built a demo of what agentic coding looks like when inference is basically instant. This changes everything. Here's what I learned 👇

Builders on X, what are you building right now? App. Startup. Side project. Content. I want more builders on my timeline. Let’s connect 🤝🏻 #BuildInPublic