Eran Sandler

@erans

Builder, operator and investor. Infra, AI, and product nerd. Trying to make powerful things simple. Opinions are my own. Building https://t.co/b0sgru9dFz

if you run agents that execute arbitrary code: do you isolate the tool or isolate the agent? we tried both. isolating the agent won (zero secrets, control-plane proxy). here's why ↓

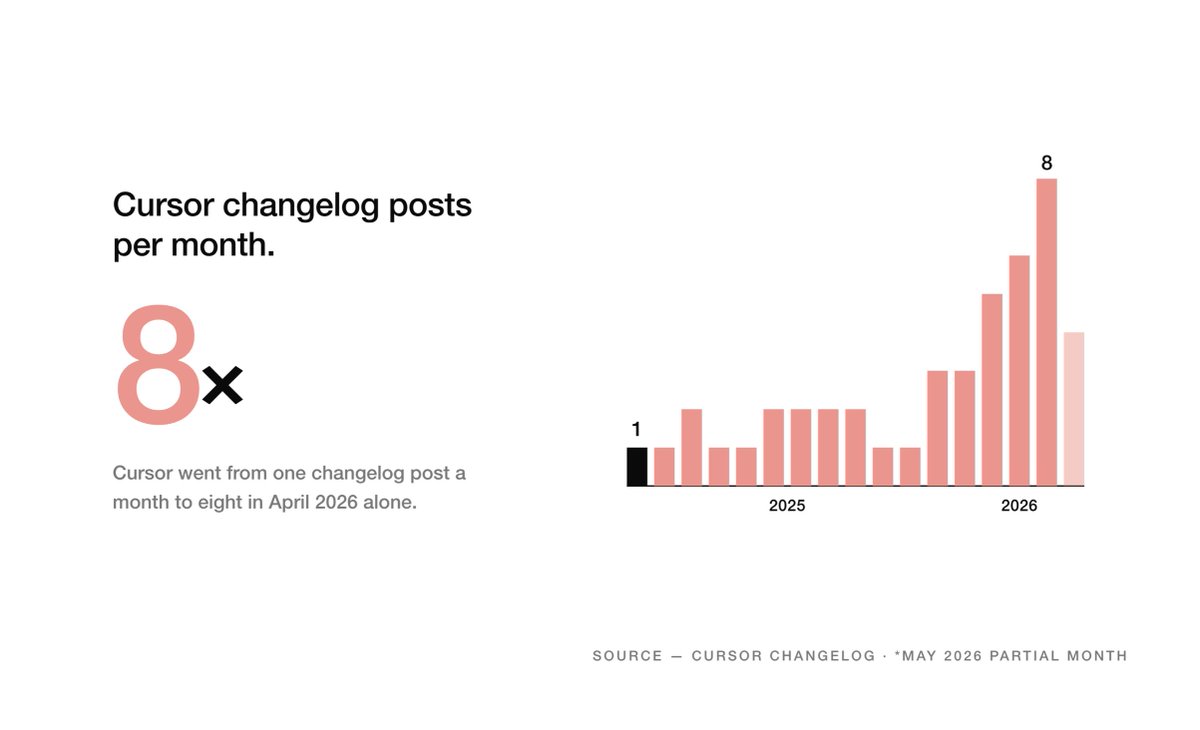

This is becoming the new normal for changelogs. It’s also why nobody reads them anymore.

🤖 Agentic AI is already running in production while security teams treat it as a policy issue. You can’t secure what you don’t understand. Three agent types — one now lets anyone build powerful agents with real access, no code needed. Read about it: thehackernews.com/2026/05/why-ag…

if you are a YC CTO i would like to talk to you. i’ll come to your office and bring nice things.

SECURITY ADVISORY: TanStack npm packages

A supply-chain compromise affecting 42 @tanstack/* packages (84 versions total) was published to npm earlier today at approximately 19:20 and 19:26 UTC. Two malicious versions per package.

Status: ACTIVE. Packages are deprecated, npm security engaged, publish path being shut down.

Severity: HIGH. Payload exfiltrates AWS, GCP, Kubernetes, and Vault credentials, GitHub tokens, .npmrc contents, and SSH keys.

If you installed any @tanstack/* package between 19:20 and 19:30 UTC today, treat the host as potentially compromised:
• Rotate cloud, GitHub, and SSH credentials immediately
• Audit cloud audit logs for the last several hours
• Pin to a prior known-good version and reinstall from a clean lockfile

Detection: the malicious manifest contains

"optionalDependencies": { "@tanstack/setup": "github:tanstack/router#79ac49ee..." }

Any version with this entry is compromised. The payload is delivered via a git-resolved optionalDependency whose prepare script runs router_init.js (~2.3 MB, smuggled into each tarball at the package root).

Unpublish is blocked by npm policy for most affected packages due to existing third-party dependents. All 84 versions are being deprecated with a SECURITY warning, and npm security has been engaged to pull tarballs at the registry level.

Full technical breakdown, complete package and version list, and rolling status updates: github.com/TanStack/route…

Credit to the security researcher for responsible disclosure.
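The detection rule in the advisory can be turned into a quick local check. What follows is a minimal sketch, not an official tool: it walks a directory tree, parses every package.json, and flags any manifest whose optionalDependencies has an "@tanstack/setup" entry pointing at a "github:tanstack/router#" spec. The function names are hypothetical, and matching on the spec prefix rather than the full commit hash is an assumption, since the advisory truncates the hash.

```python
import json
from pathlib import Path

# Dependency name and spec prefix quoted in the advisory. The commit hash is
# truncated there, so this sketch matches on the prefix only (an assumption).
SUSPECT_DEP = "@tanstack/setup"
SUSPECT_PREFIX = "github:tanstack/router#"


def is_compromised_manifest(manifest: dict) -> bool:
    """Return True if a parsed package.json carries the malicious entry."""
    optional = manifest.get("optionalDependencies")
    if not isinstance(optional, dict):
        return False
    spec = optional.get(SUSPECT_DEP)
    return isinstance(spec, str) and spec.startswith(SUSPECT_PREFIX)


def find_compromised(root: str) -> list:
    """Return sorted paths of package.json files under root that match."""
    hits = []
    for path in Path(root).rglob("package.json"):
        try:
            manifest = json.loads(path.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            continue  # unreadable or malformed manifest: skip it
        if isinstance(manifest, dict) and is_compromised_manifest(manifest):
            hits.append(path)
    return sorted(hits)
```

Calling `find_compromised("node_modules")` from a project root lists matching manifests. An empty result only means this one marker was not found on disk; it does not replace the credential rotation and log audit steps in the advisory.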

$NOW is expanding its $MSFT partnership by integrating AI Control Tower with Microsoft Agent 365. The goal is to give enterprises unified visibility, approval workflows and policy controls for AI agents across both the ServiceNow and Microsoft ecosystems.