Eric Herring ericherring.bsky.social

@eric_herring

Prof of World Politics, University of Bristol. Research/action on Somali led sustainable development with @TransparencySol. Honoured by my Somali name Warsame

China removes hukou hurdle for migrant workers in social insurance shake-up. scmp.com/news/article/3…

"The question is not whether Labour values have been usurped by Starmer’s faction. It is what kind of party could be built out of the corpse of Starmer’s party. One option is clearly a more Blairite party: pro-tech giants, the US, and privatisation. But are there any serious options to create a progressive party, one that dares speak out on the issues of the day, that actually communicates with a progressive electorate? It is hard to see at the moment whether the ambition or capacity exists within it. It is worth noting that Starmer’s Party is only barely the official party of the organised working class. Whereas Labour had affiliated to it nearly every major trade union, today only just over half of union members are in party-affiliated unions. And even then some may leave. This is hardly surprising: as it stands its policies, Starmer’s Party’s political instincts, are far closer to those of the Tories and Reform than to the progressive parties that are eating it up. And that is not accidental, or the result of a lack of vision. It was the whole point." Read @DEHEdgerton's obituary for Starmerism newstatesman.com/politics/labou…

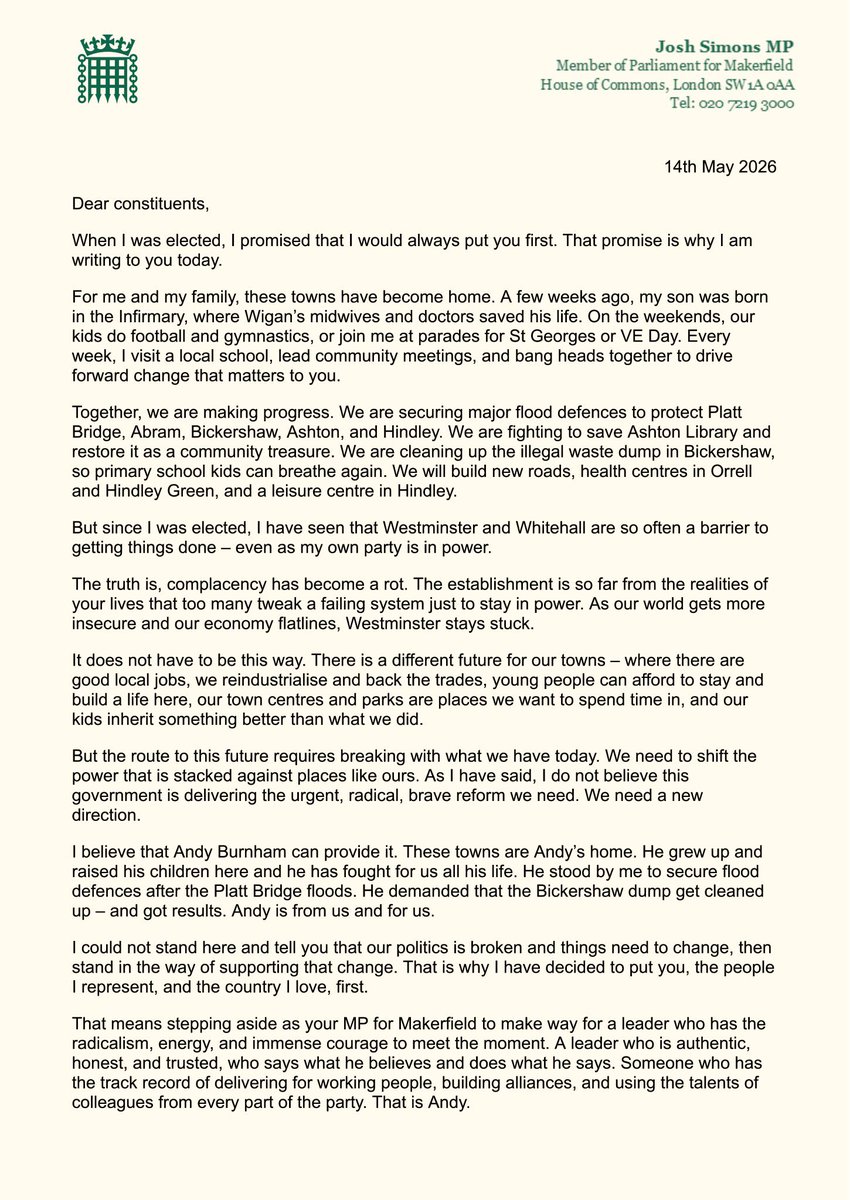

I can confirm that I will be requesting the permission of the NEC to stand in the Makerfield by-election. I grew up in this area and have lived here for 25 years. I care deeply about it and its people. I know they have been let down by national politics. Ten years ago, I decided to leave Westminster. Why? Because, after 16 years, I came to the conclusion that our national political system does not work for areas like ours. I learnt this fighting its failure to invest in the Wigan borough, for justice for the Hillsborough families and against its treatment of Greater Manchester during the pandemic. Over the last decade, I have been challenging this failure from the outside and building a new and better way of doing politics. We have built Greater Manchester into the fastest-growing city-region in the UK and put buses back under public control, introducing a £2 fare cap to help people with cost-of-living pressures. However, there is only so much that can be done from Greater Manchester. Much bigger change is needed at a national level if everyday life is to be made more affordable again. This is why I now seek people’s support to return to Parliament: to bring the change we have brought to Greater Manchester to the whole of the UK and make politics work properly for people. Millions are struggling and they need the Labour Government to succeed. It has already made changes to make life better for them in its first two years. After this week, we owe it to people to come back together as a Labour movement, giving the Prime Minister and the Government the space and stability they need as the by-election takes place. I want to recognise the difficult decision taken by Josh Simons and the sacrifice he and his family are making. I have worked closely with him as Mayor on issues like flooding and illegal waste dumping and have seen first-hand how effective he has been. 
He has put the communities of Makerfield first, made a real difference for them and should take great pride in that. Finally, I truly do not take a single vote for granted and will work hard to regain the trust of people in the Makerfield constituency, many of whom have long supported our party but lost faith in recent times. We will change Labour for the better and make it a party you can believe in again. ENDS

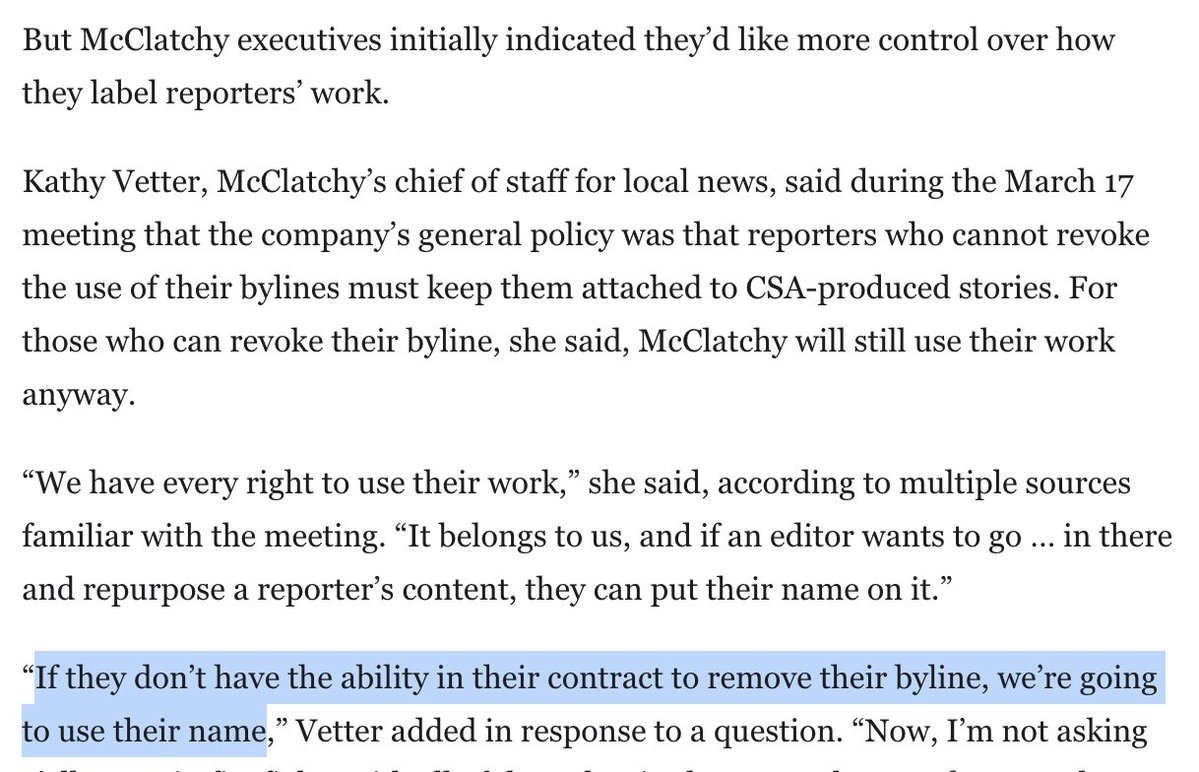

SCOOP: I got internal photos/videos of McClatchy's Claude-powered "content scaling agent" tool roiling its newsrooms. It's an AI product executives made because "we need more stories and we need more inventory," and journalists "defiant" about using it "will fall behind." 1/6

Because we get asked a lot. The Technological Republic, in brief.

1. Silicon Valley owes a moral debt to the country that made its rise possible. The engineering elite of Silicon Valley has an affirmative obligation to participate in the defense of the nation.
2. We must rebel against the tyranny of the apps. Is the iPhone our greatest creative if not crowning achievement as a civilization? The object has changed our lives, but it may also now be limiting and constraining our sense of the possible.
3. Free email is not enough. The decadence of a culture or civilization, and indeed its ruling class, will be forgiven only if that culture is capable of delivering economic growth and security for the public.
4. The limits of soft power, of soaring rhetoric alone, have been exposed. The ability of free and democratic societies to prevail requires something more than moral appeal. It requires hard power, and hard power in this century will be built on software.
5. The question is not whether A.I. weapons will be built; it is who will build them and for what purpose. Our adversaries will not pause to indulge in theatrical debates about the merits of developing technologies with critical military and national security applications. They will proceed.
6. National service should be a universal duty. We should, as a society, seriously consider moving away from an all-volunteer force and only fight the next war if everyone shares in the risk and the cost.
7. If a U.S. Marine asks for a better rifle, we should build it; and the same goes for software. We should as a country be capable of continuing a debate about the appropriateness of military action abroad while remaining unflinching in our commitment to those we have asked to step into harm’s way.
8. Public servants need not be our priests. Any business that compensated its employees in the way that the federal government compensates public servants would struggle to survive.
9. We should show far more grace towards those who have subjected themselves to public life. The eradication of any space for forgiveness—a jettisoning of any tolerance for the complexities and contradictions of the human psyche—may leave us with a cast of characters at the helm we will grow to regret.
10. The psychologization of modern politics is leading us astray. Those who look to the political arena to nourish their soul and sense of self, who rely too heavily on their internal life finding expression in people they may never meet, will be left disappointed.
11. Our society has grown too eager to hasten, and is often gleeful at, the demise of its enemies. The vanquishing of an opponent is a moment to pause, not rejoice.
12. The atomic age is ending. One age of deterrence, the atomic age, is ending, and a new era of deterrence built on A.I. is set to begin.
13. No other country in the history of the world has advanced progressive values more than this one. The United States is far from perfect. But it is easy to forget how much more opportunity exists in this country for those who are not hereditary elites than in any other nation on the planet.
14. American power has made possible an extraordinarily long peace. Too many have forgotten or perhaps take for granted that nearly a century of some version of peace has prevailed in the world without a great power military conflict. At least three generations — billions of people and their children and now grandchildren — have never known a world war.
15. The postwar neutering of Germany and Japan must be undone. The defanging of Germany was an overcorrection for which Europe is now paying a heavy price. A similar and highly theatrical commitment to Japanese pacifism will, if maintained, also threaten to shift the balance of power in Asia.
16. We should applaud those who attempt to build where the market has failed to act. The culture almost snickers at Musk’s interest in grand narrative, as if billionaires ought to simply stay in their lane of enriching themselves . . . . Any curiosity or genuine interest in the value of what he has created is essentially dismissed, or perhaps lurks from beneath a thinly veiled scorn.
17. Silicon Valley must play a role in addressing violent crime. Many politicians across the United States have essentially shrugged when it comes to violent crime, abandoning any serious efforts to address the problem or take on any risk with their constituencies or donors in coming up with solutions and experiments in what should be a desperate bid to save lives.
18. The ruthless exposure of the private lives of public figures drives far too much talent away from government service. The public arena—and the shallow and petty assaults against those who dare to do something other than enrich themselves—has become so unforgiving that the republic is left with a significant roster of ineffectual, empty vessels whose ambition one would forgive if there were any genuine belief structure lurking within.
19. The caution in public life that we unwittingly encourage is corrosive. Those who say nothing wrong often say nothing much at all.
20. The pervasive intolerance of religious belief in certain circles must be resisted. The elite’s intolerance of religious belief is perhaps one of the most telling signs that its political project constitutes a less open intellectual movement than many within it would claim.
21. Some cultures have produced vital advances; others remain dysfunctional and regressive. All cultures are now equal. Criticism and value judgments are forbidden. Yet this new dogma glosses over the fact that certain cultures and indeed subcultures . . . have produced wonders. Others have proven middling, and worse, regressive and harmful.
22. We must resist the shallow temptation of a vacant and hollow pluralism. We, in America and more broadly the West, have for the past half century resisted defining national cultures in the name of inclusivity. But inclusion into what?

Excerpts from the #1 New York Times Bestseller The Technological Republic: Hard Power, Soft Belief, and the Future of the West, by Alexander C. Karp & Nicholas W. Zamiska techrepublicbook.com
