Efthymios Tzinis

@ETzinis

Senior Research Scientist @GoogleAI | Ph.D. from @IllinoisCS | Formerly @merl_news, @RealityLabs | My opinions do not represent my employer

Google’s TPUs are on a serious winning streak, and Google now plans to produce over 5 million TPUs by 2027 to boost supply. A few days back, SemiAnalysis reported that, for large buyers, Google’s TPUs can deliver roughly 20%–50% lower total cost per useful FLOP than Nvidia’s top GB200 or GB300 while staying very close on raw performance. That is why Anthropic signed up for access to about 1M TPUs and more than 1GW of capacity with Google by 2026.
The basic cost story starts with margins. Nvidia sells full GPU servers with high gross margins on both the chips and the networking, whereas Google buys TPU dies from Broadcom at a lower margin and then integrates its own boards, racks and optical fabric. As a result, the internal cost to Google of a full Ironwood pod is significantly lower than that of a comparable GB300-class pod, even when the peak FLOPs numbers are similar. On the cloud side, public list pricing already hints at the gap: on-demand TPU v6e is posted at around 2.7 dollars per chip-hour, while independent trackers place Nvidia B200 at around 5.5 dollars per GPU-hour, and multiple analyses find up to 4x better performance per dollar for some workloads once you measure tokens per second rather than just theoretical FLOPs.
A big part of this advantage comes from effective FLOPs rather than headline FLOPs. GPU vendors often quote peak numbers that assume very high clocks and ideal matrix shapes, while real training jobs tend to land closer to 30% utilization. TPUs advertise more realistic peaks, and Google, plus customers like Anthropic, invest in compilers and custom kernels to push model FLOP utilization toward 40% on their own stack.
Ironwood’s system design also cuts cost. A single TPU pod can connect up to 9,216 chips on one fabric, far more than typical Nvidia Blackwell deployments, which top out at about 72 GPUs per NVL72-style system, so more traffic stays on the fast ICI fabric and less spills to expensive Ethernet or InfiniBand tiers. Google pairs that fabric with dense HBM3E on each TPU and a specialized SparseCore for embeddings, which improves dollars per unit of bandwidth on decode-heavy inference and lets Google run big mixture-of-experts and retrieval-heavy models at a lower cost per served token than a similar Nvidia stack.
These economics do not show up for every user, because TPUs still demand more engineering effort in compiler tuning, kernel work and tooling than Nvidia’s mature CUDA ecosystem. For frontier labs with in-house systems teams, though, the extra work is small compared to the savings at gigawatt scale. Even when a lab keeps most training on GPUs, simply having a credible TPU path lets it negotiate down Nvidia pricing. That is already visible in the way large customers quietly hedge with Google and other custom silicon and talk publicly about escaping what many call the Nvidia tax.
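To make the "effective FLOPs" arithmetic concrete, here is a rough Python sketch using only the list prices and utilization figures quoted above (2.7 vs 5.5 dollars per chip-hour, about 40% vs 30% utilization). The function names are made up for illustration, and pairing the v6e price with the 40% utilization figure is an assumption, since the post does not tie that number to a specific chip. The post gives no per-chip peak FLOPs, so instead of guessing them the sketch computes the break-even peak ratio: how much lower the TPU's peak could be relative to the GPU's before cost per effective FLOP evens out.

```python
def cost_per_effective_flop(price_per_chip_hour, peak_flops, utilization):
    """Price per chip-hour divided by sustained throughput (peak * utilization).

    Units are relative; only ratios between two chips are meaningful here.
    """
    if peak_flops <= 0 or not 0 < utilization <= 1:
        raise ValueError("peak_flops must be > 0 and utilization in (0, 1]")
    return price_per_chip_hour / (peak_flops * utilization)


def break_even_peak_ratio(price_a, util_a, price_b, util_b):
    """Peak-FLOPs ratio (A / B) at which A and B cost the same per effective FLOP.

    Follows from setting price_a / (peak_a * util_a) == price_b / (peak_b * util_b).
    """
    return (price_a / price_b) * (util_b / util_a)


# Figures quoted in the post; which chip each utilization applies to is assumed.
TPU_PRICE, TPU_UTIL = 2.7, 0.40   # on-demand TPU v6e, dollars per chip-hour
GPU_PRICE, GPU_UTIL = 5.5, 0.30   # Nvidia B200, dollars per GPU-hour

ratio = break_even_peak_ratio(TPU_PRICE, TPU_UTIL, GPU_PRICE, GPU_UTIL)
print(f"TPU breaks even if its peak is at least {ratio:.3f}x the GPU's peak")
```

Under these inputs, any TPU whose per-chip peak exceeds that fraction of the GPU's peak comes out cheaper per effective FLOP. Real comparisons also depend on numeric precision, committed-use discounts and networking costs, none of which this sketch models.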

Milei’s talk at Davos. This never happened. But with AI (HeyGen), his voice is cloned and turned into English (ElevenLabs), and his mouth is re-synced to match the English words. This is the true power of AI: anything can be consumed in any language.

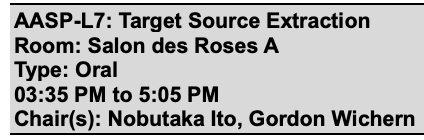

The word is out 😊 New paper w/ Darius Petermann, Gordon Wichern, and @S_Aswin19, "Hyperbolic Audio Source Separation," where we explore embedding time-frequency bins in hyperbolic space for hierarchical separation. Check out Darius's cool demo video on the project page below 👇

Our @ieeeICASSP23 optimal condition training presentation is online! youtu.be/n2i5kXwvZlM Read our paper with @JonathanLeRoux, Gordon, Paris to learn how to train robust multi-condition separation systems + how to get SOTA text-based separation results! 🧐 arxiv.org/pdf/2211.05927…