Fabiano Castiglioni retweeted

Fabiano Castiglioni

2.3K posts

Fabiano Castiglioni retweeted

Here's a simple Bayesian Life Framework for daily application:

1. **Set Your Priors**: Start each day noting your assumptions (e.g., "I believe this meeting will go poorly based on past ones").

2. **Gather Evidence**: Actively seek data—observe, ask questions, track outcomes (e.g., note actual meeting results).

3. **Update Beliefs**: Adjust probabilities in proportion to the evidence (e.g., if 70% of recent meetings actually went well, raise your estimate that the next one will too).

4. **Self-Awareness Check**: Evening reflection: Journal what priors you held, evidence encountered, and shifts. Question biases—why did I resist new info?

Apply iteratively to decisions, relationships, habits. Over time, it builds rational habits.
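A minimal sketch of step 3, assuming the belief is a single yes/no hypothesis ("the meeting goes well"). The function name `update` and every number below are made up for illustration; they are not part of the original framework.

```python
def update(prior: float, p_evidence_if_true: float, p_evidence_if_false: float) -> float:
    """Return P(H | E) from P(H), P(E | H) and P(E | not H) using Bayes' rule."""
    numerator = p_evidence_if_true * prior
    evidence = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / evidence


# Morning prior: 30% chance the meeting goes well (hypothetical number).
belief = 0.30

# Evidence: the agenda arrived early. Assume this happens before 80% of
# good meetings and 40% of bad ones (both numbers are illustrative).
belief = update(belief, p_evidence_if_true=0.80, p_evidence_if_false=0.40)
print(round(belief, 3))
```

The evening journal entry then records the prior, the evidence, and the revised number, which becomes the next day's prior.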

Fabiano Castiglioni retweeted

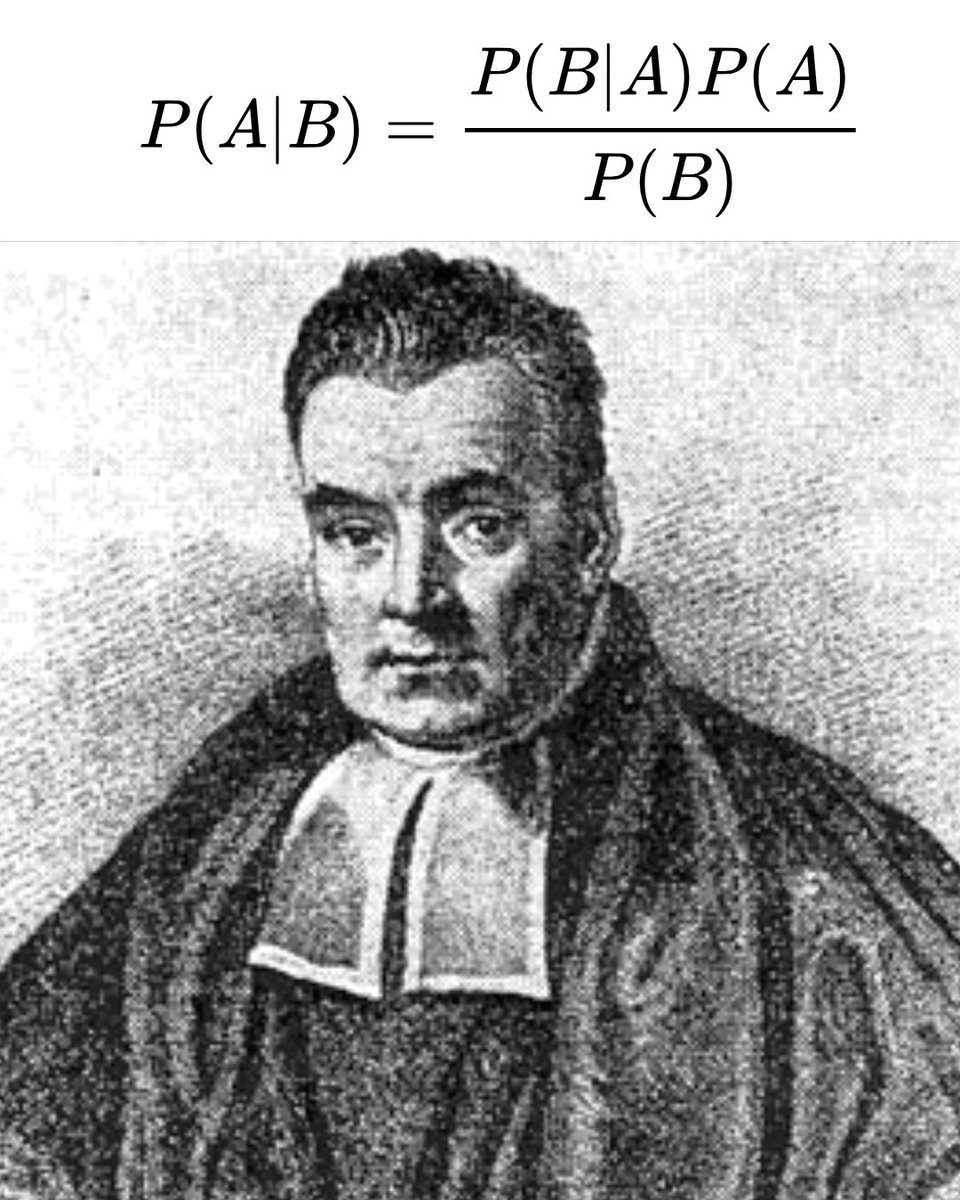

Bayes’ theorem is probably the single most important thing any rational person can learn.

So many of our debates and disagreements that we shout about are because we don’t understand Bayes’ theorem or how human rationality often works.

Bayes’ theorem is named after the 18th-century English minister and mathematician Thomas Bayes. Essentially, it is a formula that asks: given all of the evidence for something, how much should you believe it?

Bayes’ theorem teaches us that our beliefs are not fixed; they are probabilities. Our beliefs change as we weigh new evidence against our assumptions, or our priors. In other words, we all carry certain ideas about how the world works, and new evidence can challenge them.
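For reference, the formula itself in its standard form, where $H$ is the belief (hypothesis) and $E$ is the new evidence. The symbols are the usual textbook ones, not notation from the post:

$$
P(H \mid E) = \frac{P(E \mid H)\,P(H)}{P(E)}, \qquad P(E) = P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H)
$$

Here $P(H)$ is the prior, $P(E \mid H)$ is how likely the evidence would be if the belief were true, and $P(H \mid E)$ is the updated belief (the posterior).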

For example, somebody might believe that smoking is safe, that stress causes mouth ulcers, or that human activity is unrelated to climate change. These are their priors, their starting points. They can be formed by our culture, our biases, or even incomplete information.

Now imagine a new study comes along that challenges one of your priors. A single study might not carry enough weight to overturn your existing beliefs. But as studies accumulate, eventually the scales may tip. At some point, your prior will become less and less plausible.
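A rough sketch of how the scales tip, using the odds form of Bayes' rule (posterior odds = prior odds × likelihood ratio). It assumes the studies are independent and equally strong, which real studies rarely are; the prior of 0.95 and the likelihood ratio of 0.5 are invented for illustration.

```python
def posterior_after_studies(prior: float, likelihood_ratio: float, n_studies: int) -> float:
    """Belief in a claim after n independent studies, each with the same likelihood ratio.

    likelihood_ratio = P(study result | claim true) / P(study result | claim false).
    A value below 1 means each study counts against the claim.
    """
    odds = prior / (1.0 - prior)            # convert probability to odds
    odds *= likelihood_ratio ** n_studies   # each study multiplies the odds
    return odds / (1.0 + odds)              # convert odds back to probability


# Someone starts out 95% sure of a claim; each study is half as likely
# to come out this way if the claim were true (hypothetical numbers).
for n in (0, 1, 3, 5, 10):
    print(n, round(posterior_after_studies(0.95, 0.5, n), 3))
```

With these made-up numbers, one study barely moves the belief, but somewhere between three and five studies it drops below 50%.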

Bayes’ theorem implies that being rational is not black and white. It’s not even about true or false. It’s about what is most reasonable based on the best available evidence. But for this to work, we need to be presented with as much high-quality data as possible. Without evidence, without belief-forming data, we are left only with our priors and biases. And those aren’t all that rational.

Fabiano Castiglioni retweeted

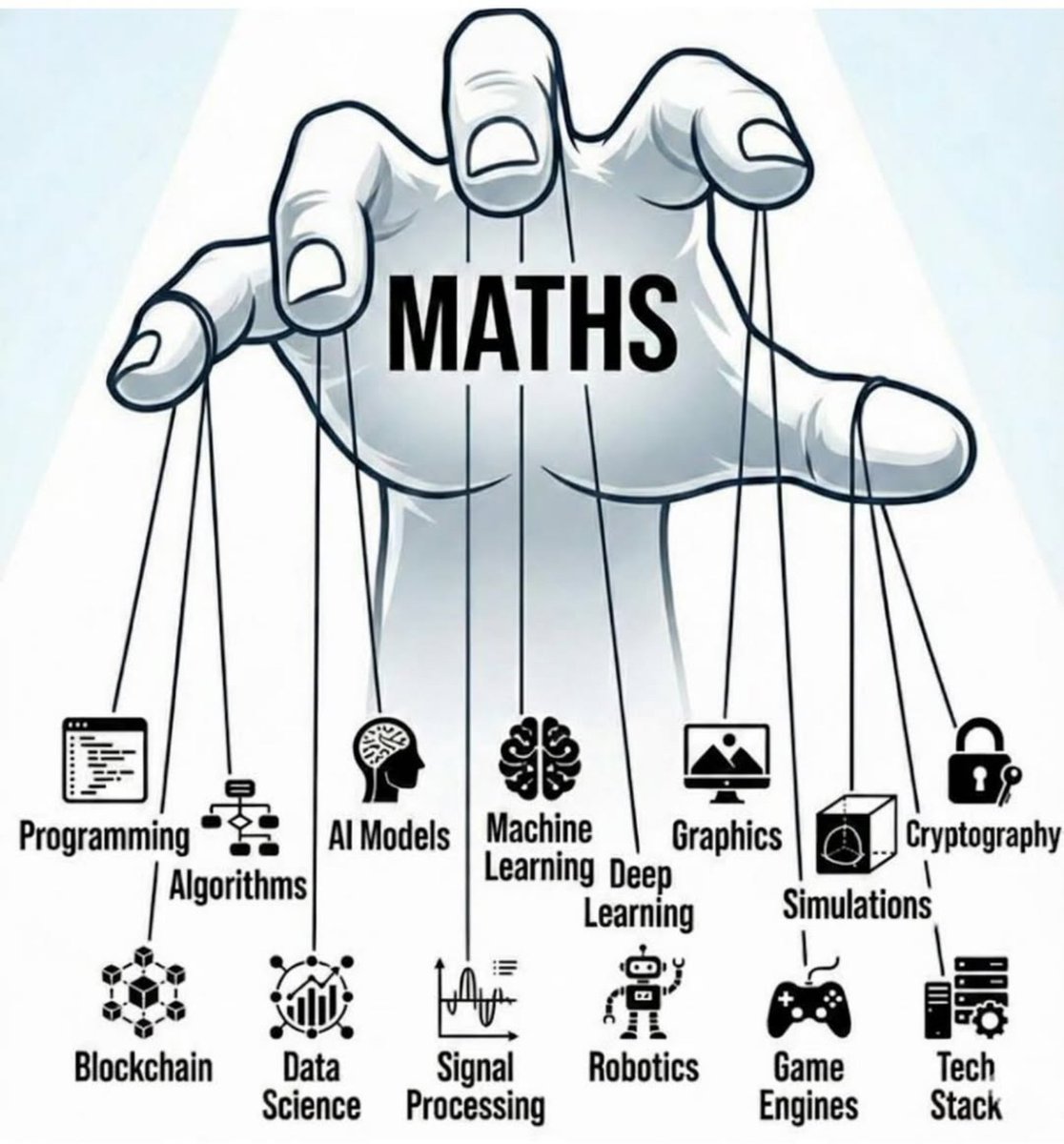

I've been studying AI since before ChatGPT existed.

I have a computer science degree & can build AI models from scratch.

Trust me when I say this:

AI agents are a waste of time in 99% of agency use cases.

Everyone's trying to sell them right now.

"Revolutionary!"

"Will transform your business!"

But here's what actually happens:

Week 1: It starts making weird decisions

Week 2: It's breaking on edge cases you didn't anticipate

Week 3: Your team is spending more time fixing it than the agent saves

Month 1: You're rebuilding it with different prompts

Month 2: You abandon it and go back to doing things manually

You spend $5,000-$10,000 on an AI agent implementation.

For the first week, it feels amazing. It's doing things automatically and making decisions.

Then reality hits.

Three months later, you're back to square one - except now you're also out $10k and your team has lost confidence in "automation."

I've seen this pattern dozens of times.

So why do AI agents keep failing?

Let me break down the actual problems:

~

Problem #1: They're unnecessary.

Most agency workflows are logic-based, not intelligence-based.

Client onboards → brief created → designer assigned → QA happens → client reviews.

This is a straight assembly line. You don't need AI to "decide" what comes next - you already know.

Using an AI agent here is like driving a Ferrari to get milk from a store half a block from your house.

Sure, you can do it. But why?
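To make the "assembly line" point concrete, here is a minimal sketch of that pipeline as plain logic. The stage names are paraphrased from the flow above and `next_stage` is a hypothetical helper, not any particular tool's API.

```python
from typing import Optional

# Fixed order of stages, paraphrased from the workflow described above.
STAGES = ["onboarding", "brief", "design", "qa", "client_review"]


def next_stage(current: str) -> Optional[str]:
    """Return the stage that follows `current`, or None after the last stage."""
    i = STAGES.index(current)  # raises ValueError on an unknown stage name
    return STAGES[i + 1] if i + 1 < len(STAGES) else None


print(next_stage("brief"))
print(next_stage("client_review"))
```

There is nothing to "decide" here: the next step is a lookup, and an unknown stage fails loudly instead of being guessed at.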

~

Problem #2: They're unpredictable.

95% accuracy sounds great until you realize that means 1 in 20 workflows breaks.

At scale, that's NOT GOOD.

AI agents might misinterpret stages, assign work to the wrong person, skip steps, or hallucinate data.

Why replace 100% reliable logic with 95% reliable AI?

Even if it works most of the time, that 5% failure rate compounds fast when you're running hundreds of workflows.
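A back-of-the-envelope sketch of the compounding. It assumes the 95% figure applies to each decision point and that decision points fail independently; both are simplifying assumptions, and the step and workflow counts are made up.

```python
def workflow_success_rate(step_accuracy: float, steps: int) -> float:
    """Chance that every one of `steps` independent decisions comes out right."""
    return step_accuracy ** steps


def expected_failures(step_accuracy: float, steps: int, workflows: int) -> float:
    """Expected number of workflows with at least one wrong decision."""
    return workflows * (1.0 - workflow_success_rate(step_accuracy, steps))


# Five decision points per workflow, 200 workflows a month (hypothetical).
print(round(workflow_success_rate(0.95, 5), 3))
print(round(expected_failures(0.95, 5, 200), 1))
```

Under these assumptions a five-step workflow finishes cleanly only about 77% of the time, so the per-workflow failure rate is well above the headline 5%.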

~

Problem #3: They're slow.

Regular automation logic executes in milliseconds.

AI agents have to make API calls, wait for processing, generate decisions, and parse results.

Even with the fastest AI models, you're adding 2-5 seconds per decision point.

Your team and/or clients are waiting.

All for "intelligence" you didn't need.
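The arithmetic, as a tiny sketch. The 2-5 second range is the figure quoted above; the number of decision points is a made-up example, and the calculation assumes the calls run one after another.

```python
from typing import Tuple


def added_latency_seconds(decision_points: int, low: float = 2.0, high: float = 5.0) -> Tuple[float, float]:
    """Best-case and worst-case extra wait for sequential AI decision points."""
    return decision_points * low, decision_points * high


# A workflow with five AI decision points (hypothetical).
print(added_latency_seconds(5))
```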

~

Problem #4: They're impossible to debug.

When a regular automation breaks, you know exactly where and why.

When an AI agent breaks, you get vague errors, unclear decisions, and difficulty reproducing conditions.

You end up spending more time debugging AI agents than they save you.

~

Problem #5: You're solving the wrong problem.

AI agents are being sold as a solution to operational chaos.

But they don't solve chaos - they automate it faster.

If your workflows are disorganized, your data is scattered, and your processes aren't standardized, an AI agent won't fix that.

It'll just break in more creative ways.

~

Now, to be fair...

AI agents aren't useless. They're just massively oversold for the wrong use cases.

AI agents make sense when:

• You need genuine natural language understanding

• The workflow genuinely requires human-like judgment

• You can afford the unpredictability

For 99% of agency operations, you don't meet these criteria.

~

So what's the actual solution?

Build a proper foundation first.

Use regular automation for logic-based workflows (which is 99% of agency operations).

Then add AI strategically - as a tool within workflows, not as an agent running them.

Example:

Use AI to analyze ad performance, generate creative briefs, or run internal QA checks.

But let logic handle the routing, decisions, and execution.
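One way this split might look in code, as a sketch only. `ROUTES`, `run_stage`, and `fake_ai` are hypothetical names; `fake_ai` is a stand-in for whatever model call you would actually use, so no real AI API is shown here.

```python
from typing import Callable, Dict

# Deterministic routing table: the AI never chooses the next stage.
ROUTES: Dict[str, str] = {
    "onboarding": "brief",
    "brief": "design",
    "design": "qa",
    "qa": "client_review",
    "client_review": "done",
}


def run_stage(stage: str, payload: Dict[str, str], ai_tool: Callable[[str], str]) -> Dict[str, str]:
    """Run one stage: AI may produce content, plain logic decides where it goes."""
    result = dict(payload)
    if stage == "brief":
        # AI is used as a tool to draft text from the client's notes.
        result["brief_draft"] = ai_tool(payload["client_notes"])
    result["next_stage"] = ROUTES[stage]  # KeyError on an unknown stage
    return result


def fake_ai(text: str) -> str:
    """Placeholder for a real model call (assumption, for illustration only)."""
    return f"DRAFT: {text[:40]}"


out = run_stage("brief", {"client_notes": "Spring sale campaign"}, fake_ai)
print(out["next_stage"], "|", out["brief_draft"])
```

If the model output is poor, only the draft is affected; the work still lands with the right person at the right stage.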

~

The agencies winning right now aren't using AI agents.

They're using:

• Centralized data (Single Source of Truth)

• Stage-based workflows (not flexible tasks)

• Compound automations that stack on top of each other

• AI in specific places where it genuinely adds value

Not everywhere. Just where it matters.

~

Bottom line:

AI agents are oversold for the wrong use cases.

Build the foundation.

Use logic for reliability.

Add AI strategically.

That's how you actually scale.

P.S. I put together a complete breakdown of why automations fail (AI or not) and the exact foundation you need to build systems that actually work so you can handle 2-4x your current capacity while you scale.

If you want my Self Running Agency Playbook:

Comment "PLAYBOOK" and I'll DM it to you.

Trying to watch the 1st episode of @BreakingBad for the 3rd time to try to find something that convinces me to watch the 2nd episode.

Dull series.

Fabiano Castiglioni retweeted

@SHIBFOUNDATIONS 0xbc904b6e558d6820ff2e985ad22a253057269760

Fabiano Castiglioni retweeted

This mindful mouse by @samsung reminds you when the workday is finished by moving away from you
