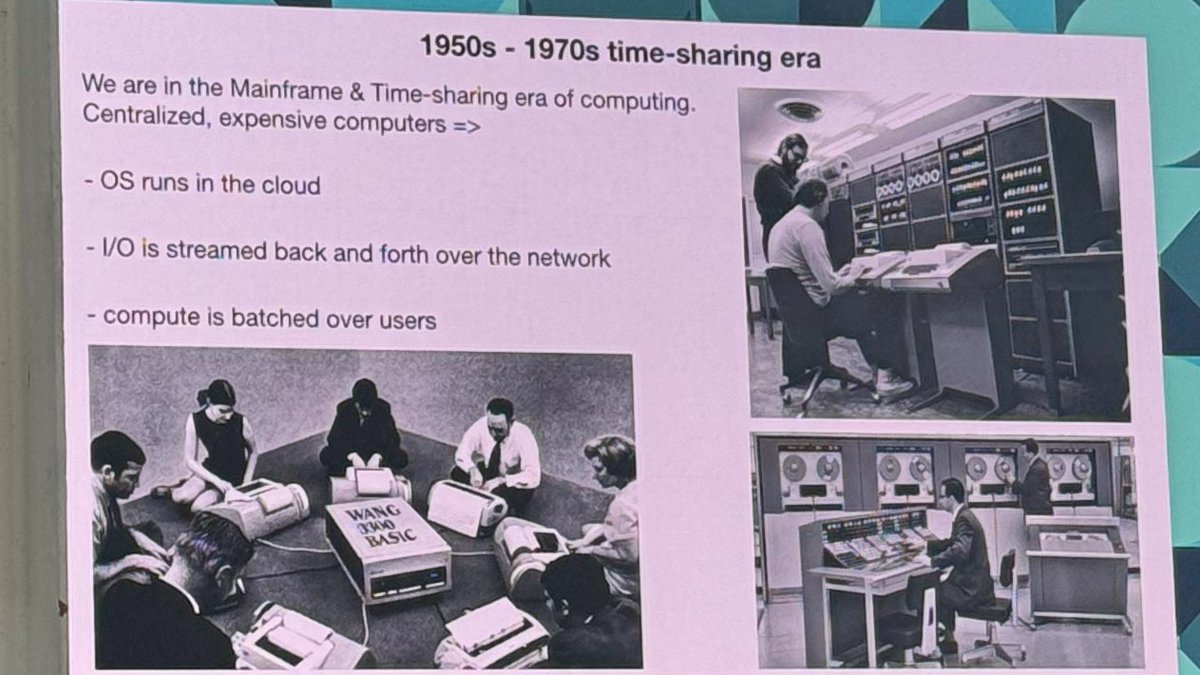

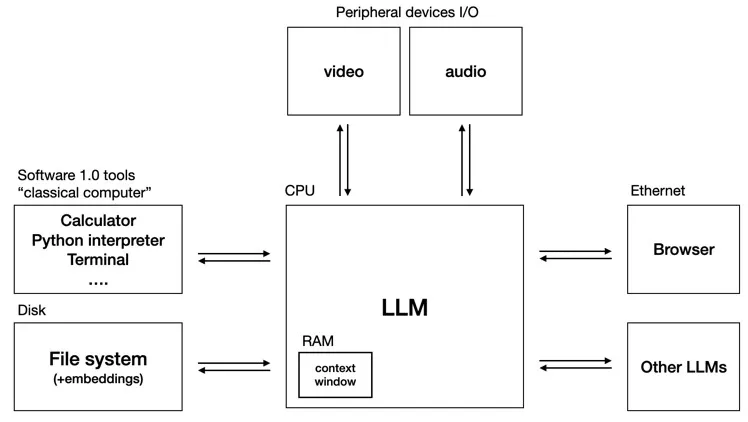

Andrej Karpathy's (@karpathy) keynote yesterday at AI Startup School in San Francisco.

Fabio Salern

@fabiosalern

Software Engineer @ Stema | LLMs enthusiast

AI PROMPTING → AI VERIFYING

AI prompting scales, because prompting is just typing. But AI verifying doesn’t scale, because verifying AI output involves much more than just typing.

Sometimes you can verify by eye, which is why AI is great for frontend, images, and video. But for anything subtle, you need to read the code or text deeply, and that means knowing the topic well enough to correct the AI.

Researchers are well aware of this, which is why there’s so much work on evals and hallucination. However, the concept of verification as the bottleneck for AI users is under-discussed.

Yes, you can try formal verification, or critic models where one AI checks another, or other techniques. But to even be aware of the issue as a first-class problem is half the battle.

For users: AI verifying is as important as AI prompting.