fatih c. akyon

1.7K posts

@fcakyon

making ai useful at @ultralytics and @viddexa. phd cand. at @metu_odtu

I have just open-sourced a literature summary on ML-based content moderation and multimodal content rating! It includes various sources for audio, text, video modalities, links to datasets and related Python tools. Github: github.com/fcakyon/conten… Arxiv: arxiv.org/abs/2212.04533

We’re launching the beta for our new commercial AI product: Sakana Fugu 🐡, a multi-agent orchestration system!
Blog: sakana.ai/fugu-beta
Fugu hits SOTA on SWE-Pro, GPQA-D, and ALE-Bench, and has been our internal secret weapon. It dynamically coordinates frontier models, autonomously selecting the optimal agent combinations and roles for each task.
Available as an OpenAI-compatible API, you can seamlessly integrate Fugu into your existing workflows with minimal changes.
🐟 Fugu Mini: High-speed orchestration optimized for latency
🐡 Fugu Ultra: Full model pool utilization for deep, complex reasoning
Apply for the beta test here: forms.gle/BtKkhc2CfLKk1d…

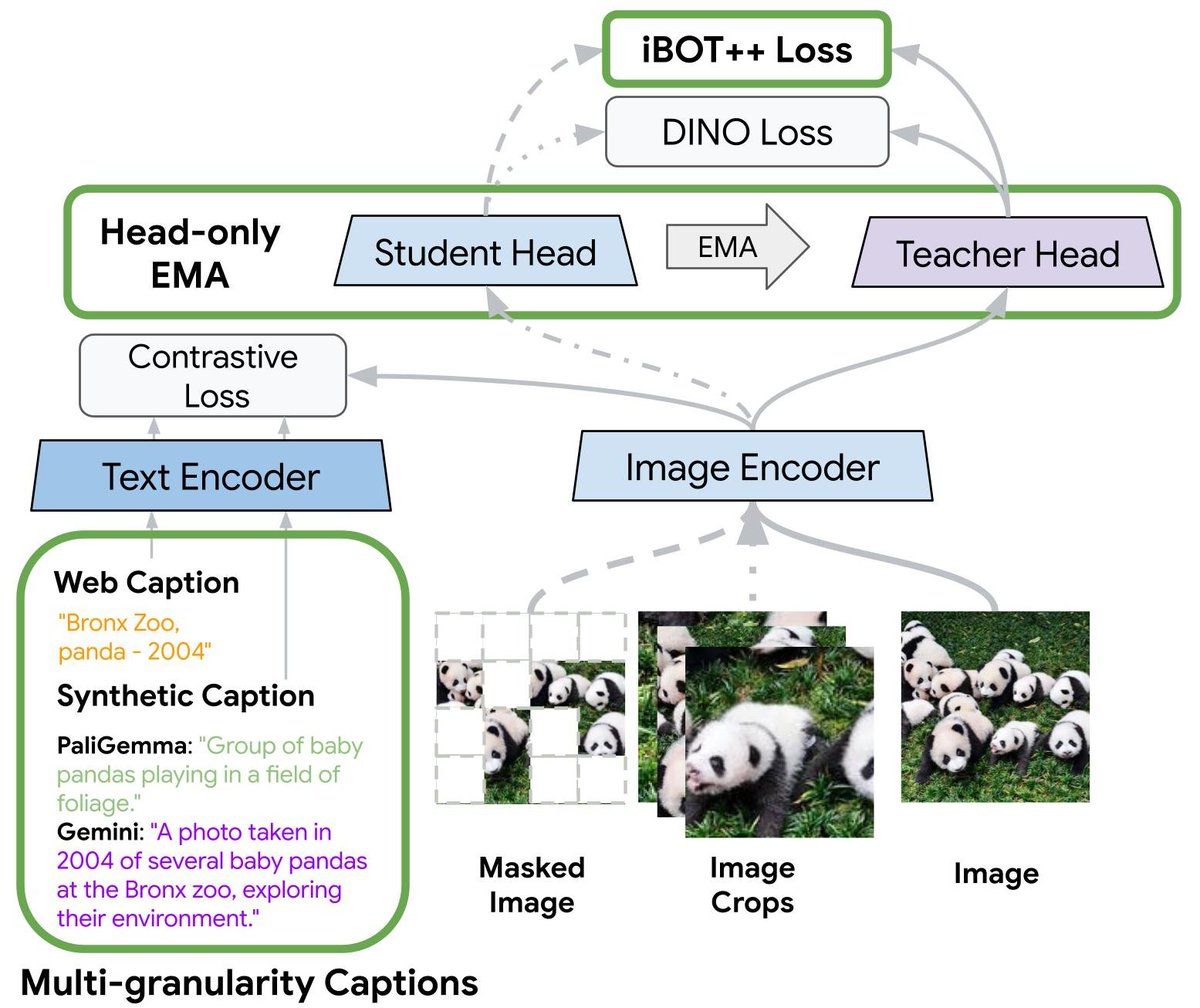

started reading @GoogleDeepMind /tips2 paper (cvpr 2026) arxiv.org/abs/2604.12012 code also released (ofc no training code, give me a week to reverse engineer it 😎): github.com/google-deepmin… it's raining distillation papers these days 🔥 @nvidia /cradiov4, @Meta /eupe and now this, who's next? @ultralytics?

In evals, Sonnet with an Opus advisor scored 2.7 percentage points higher on SWE-bench Multilingual than Sonnet alone, while costing 11.9% less per task.

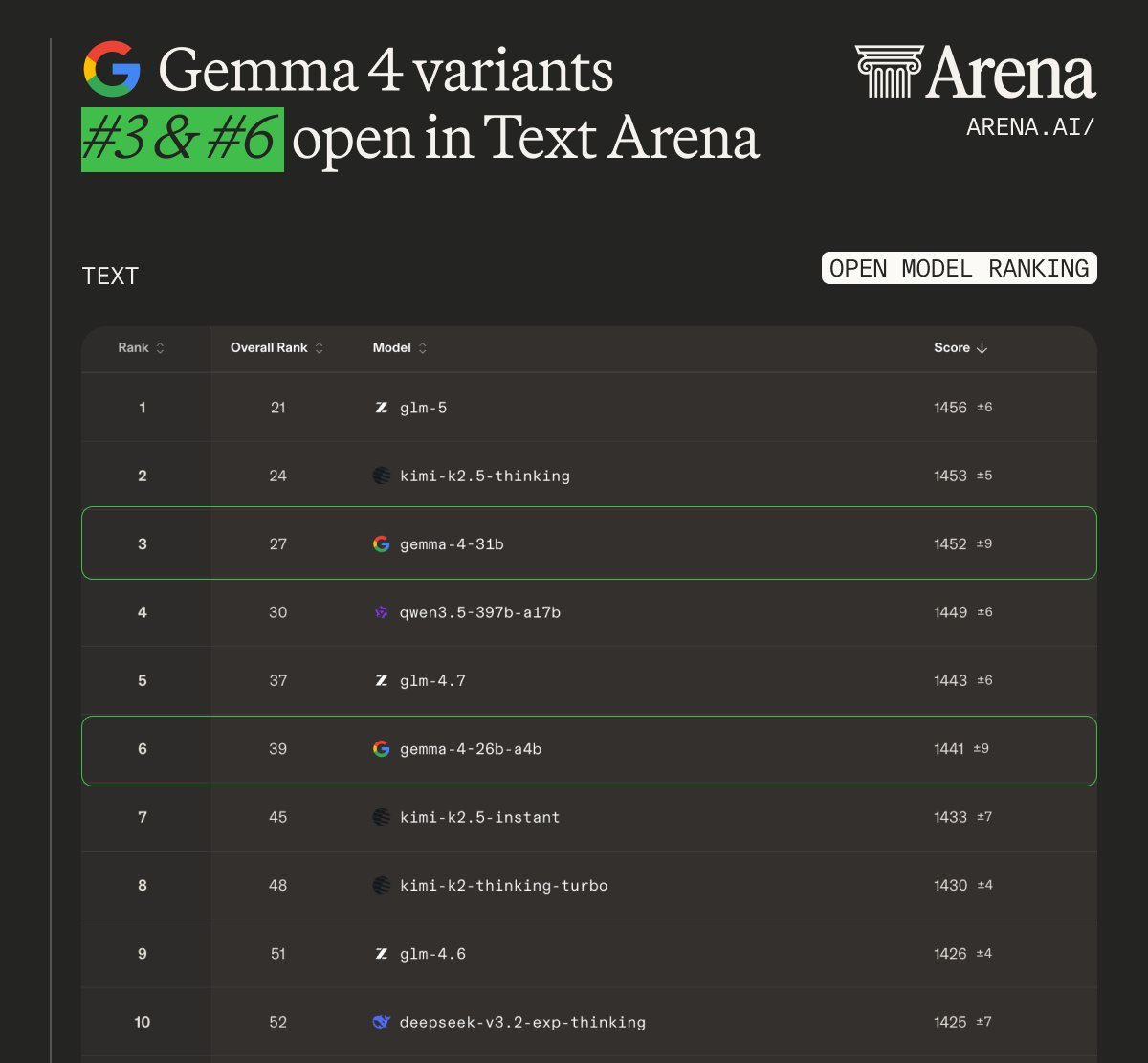

Google's Gemma 4 on a 128 GB MacBook Pro is near AGI on the go, no internet needed