fils

@fils

Data Guy and wave man 浪人 Hobo Programmer, bouncing from free service to free service then moving on. Nice software you have there... do you have a free tier?

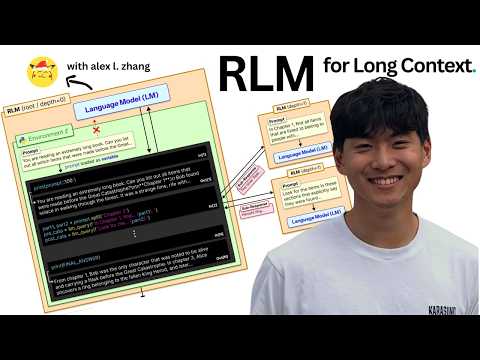

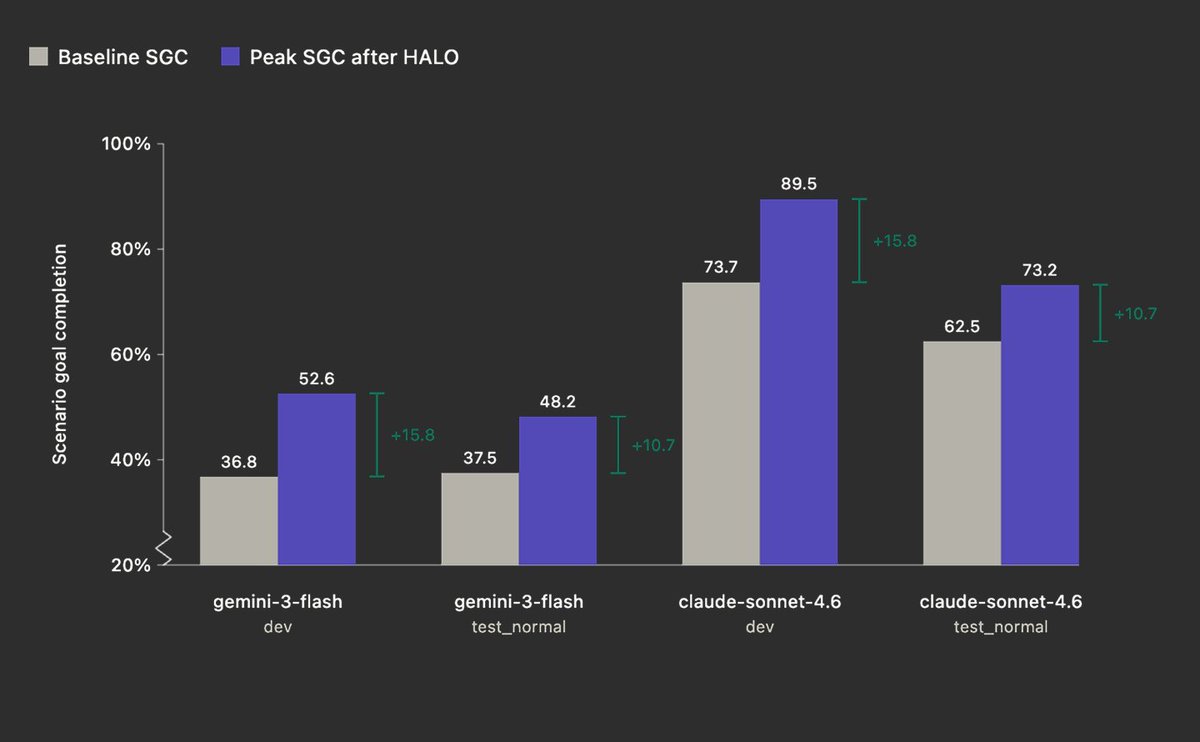

Reinforcing Recursive Language Models

Can a 4B model learn to recursively call itself to answer hard long-context questions? We RL fine-tuned a small model to behave as a native RLM. On evidence selection across scientific papers, our 4B RLM matches Sonnet 4.6 in quality while running significantly faster and cheaper.

🚨 BREAKING: A new Nature paper may have opened a path toward electromagnetic fields approaching the Schwinger limit.

Using efficiency-optimized relativistic plasma harmonics, researchers report the conditions needed for coherent harmonic focusing, potentially compressing energy in space and time to extraordinary intensities.

If this scales, it could help probe:
• photon-photon scattering
• quantum vacuum structure
• strong-field QED

This may be more than a laser advance. It may be a new way to study whether vacuum itself has hidden structure.

Could coherent harmonic focusing become the next leap after chirped pulse amplification?

Follow me for more frontier physics.

ok so the default DSPy.RLM is literally going to destroy this benchmark before the end of the day. running now for sonnet 4.5...

🏆 Scoreboard (live)
RLM: 90/94 (95.7%)
Vanilla: 0/94 (0.0%)

anyone want to pay for the opus run? 😉