The Real Estate Novelist 🇨🇦🇺🇸🇰🇷

5.9K posts

@findyoursunset

Agent of change. Passionate about hard money & freedom. 🇨🇦🇺🇸🇰🇷 (Not financial advice; all opinions are mine.)

British Columbia, Canada · Joined April 2017

897 Following · 413 Followers

@carlvellotti Thank you, Carl. I took your CC for Everyone course, and I’m already building things I never thought possible.

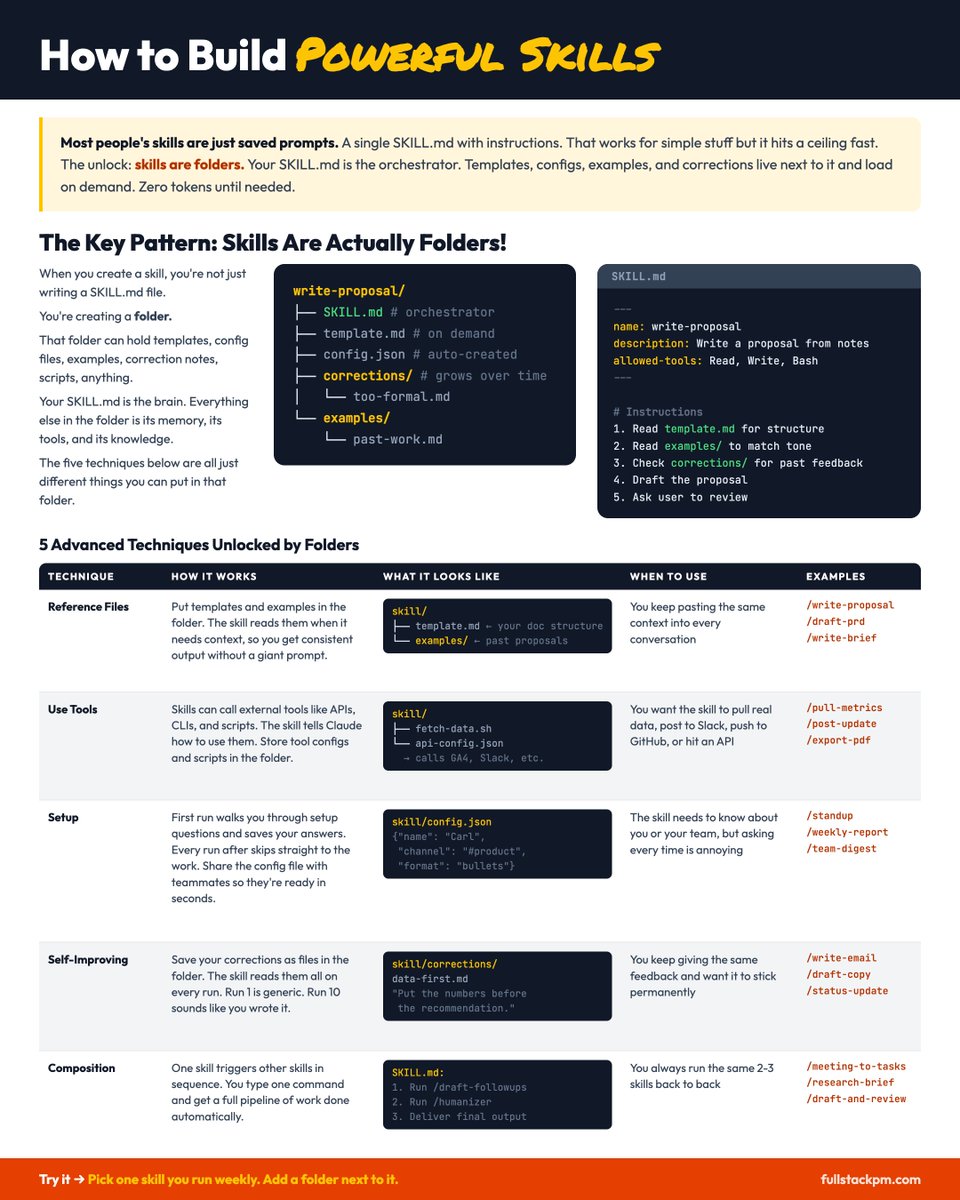

SKILLS are Claude Code's most powerful feature.

But most "skills" you see are just saved prompts.

The unlock: skills are FOLDERS, not files!

SKILL.md is the orchestrator. Everything else lives next to it and loads when it's needed.

Take a second to look at the image and think about what that means – it unlocks TONS of possibilities.

Here are 5 of the most powerful kinds of skills:

1️⃣ Reference Files

Put templates, examples, and style guides in the folder. The skill reads them when it needs context.

Examples:

• /write-proposal: has past proposals for tone and structure

• /create-graphic: has html of past graphics and a brand system file

2️⃣ Tool Access

Skills can call external tools: APIs, CLIs, MCP servers. Not just writing, actually doing things.

Examples:

• /convert-doc: calls pandoc with your CSS and margin settings

• /stripe-invoice: hits the Stripe API to create and send live invoices

3️⃣ First-Run Setup

The skill asks your preferences on the first run and saves them to a config file. Every run after that skips setup entirely.

Examples:

• /standup: asks your format, channels, and team on first run, saves to config.json

• /writing-voice: asks for sample writing, then matches it every time
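A minimal Python sketch of the first-run pattern, assuming the skill delegates to a small helper script: it reads `config.json` if it already exists and only asks the setup questions when it doesn't. The function name `load_or_create_config`, the `ask` callback, and the three question keys are hypothetical, chosen to mirror the /standup example; a real skill might simply have Claude ask these questions in conversation.

```python
import json
from pathlib import Path
from typing import Callable


def load_or_create_config(config_path: Path, ask: Callable[[str], str]) -> dict:
    """Return saved preferences; run the (hypothetical) setup questions only on first run."""
    if config_path.exists():
        # Every run after the first skips setup entirely.
        return json.loads(config_path.read_text(encoding="utf-8"))

    # First run: collect preferences through the supplied `ask` callback.
    config = {
        "format": ask("Preferred standup format?"),
        "channels": ask("Which channels should it post to?"),
        "team": ask("Who is on the team?"),
    }
    config_path.write_text(json.dumps(config, indent=2), encoding="utf-8")
    return config
```

For example, `load_or_create_config(Path("config.json"), ask=input)` prompts at the terminal once, then returns the saved settings on every later run.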

4️⃣ Self-Improving

Corrections get saved to a file in the skill folder. Every future run reads past feedback first.

Examples:

• /writing-voice: saves every "no, more like this" to a corrections folder

• /draft-copy: accumulates tone fixes so run 10 sounds like you wrote it
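One way to sketch the self-improving loop in Python, assuming feedback is kept as a plain markdown list in a file such as `corrections/corrections.md` inside the skill folder (the path and both helper names are hypothetical): each correction is appended, and every new run prepends the accumulated file to its instructions so past feedback is read first.

```python
from datetime import date
from pathlib import Path


def record_correction(corrections_file: Path, feedback: str) -> None:
    """Append one piece of feedback (hypothetical format: dated markdown bullet)."""
    corrections_file.parent.mkdir(parents=True, exist_ok=True)
    with corrections_file.open("a", encoding="utf-8") as f:
        f.write(f"- {date.today().isoformat()}: {feedback}\n")


def build_prompt(task: str, corrections_file: Path) -> str:
    """Put all past corrections ahead of the task so they are read first."""
    past = corrections_file.read_text(encoding="utf-8") if corrections_file.exists() else ""
    if not past.strip():
        # No feedback yet: the task goes through unchanged.
        return task
    return f"Past corrections (apply these first):\n{past}\nTask: {task}"
```

Keeping the corrections as an append-only text file means nothing is ever lost, and the file stays readable (and hand-editable) next to SKILL.md.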

5️⃣ Composition

One skill triggers other skills in sequence. Small, focused skills chained together.

Examples:

• /draft-and-humanize = /draft-followups + /humanizer

• /content-engine-full = /ideate + /create-graphic + /write-copy

If you're looking at a skill and it's just a plain text file with instructions, that's a saved prompt. Study skills that use these techniques and you'll see the difference.

Good places to find well-built skills:

→ github.com/anthropics/skills

→ /plugins marketplace in Claude Code

→ skills.sh

––

Hi I'm Carl 👋 Want to learn more about Claude Code?

I've got 100% free courses where you learn Claude Code IN Claude Code. Over 25,000 people have taken them, to rave reviews.

→ PM version: ccforpms.com

→ General version: ccforeveryone.com

And if you want to take your skills even further, the application for Claude Code for PMs: Mastery is currently open!

Advanced Claude Code lessons + active Slack community of PM Claude Coders + weekly office hours with me.

Learn more and apply here: fullstackpm.com/mastery

@Scobleizer @karpathy This is genuinely cool! Nice work!

@karpathy I did even more.

Ingested everything on X about AI and had it build this: alignednews.com/ai

Has a feed for your AI to hit too.

Includes papers, models, news, events, and much more.

Updated three times a day. Reads EVERYONE in AI on X and 8,300 AI companies too.

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
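The backlinks part of this compile step can be sketched in Python under one assumption: the wiki uses Obsidian-style `[[Note Name]]` links (optionally `[[Note|alias]]` or `[[Note#Heading]]`). The helper name `build_backlinks` is hypothetical; in the workflow described here the LLM maintains the links itself, so a script like this is only a way to check or visualize them.

```python
import re
from collections import defaultdict
from pathlib import Path

# Captures the target of [[Target]], [[Target|alias]] or [[Target#Heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def build_backlinks(wiki_dir: Path) -> dict[str, set[str]]:
    """Map each linked note name to the set of notes (file stems) that link to it."""
    backlinks: dict[str, set[str]] = defaultdict(set)
    for md in sorted(wiki_dir.rglob("*.md")):
        text = md.read_text(encoding="utf-8")
        for target in WIKILINK.findall(text):
            backlinks[target.strip()].add(md.stem)
    return dict(backlinks)
```

Inverting the forward links like this is what lets each article show "linked from" notes without anyone editing them by hand.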

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. It's important to note that the LLM writes and maintains all of the data in the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I sometimes use directly (in a web UI), but more often hand off to an LLM via CLI as a tool for larger queries.
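A small, naive keyword search over a directory of `.md` files might look like this Python sketch. It is not the tool described in the post; the function names, the scoring (raw counts of query terms), and the command-line shape are all assumptions, but the plain-text output is easy for an LLM to call via CLI and parse.

```python
import re
import sys
from collections import Counter
from pathlib import Path

TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return TOKEN.findall(text.lower())


def search(wiki_dir: Path, query: str, top_k: int = 5) -> list[tuple[str, int]]:
    """Rank .md files by how often they contain the query terms (naive keyword scoring)."""
    terms = set(tokenize(query))
    results = []
    for md in wiki_dir.rglob("*.md"):
        counts = Counter(tokenize(md.read_text(encoding="utf-8")))
        score = sum(counts[t] for t in terms)
        if score > 0:
            results.append((str(md.relative_to(wiki_dir)), score))
    # Highest score first; ties broken alphabetically for stable output.
    results.sort(key=lambda r: (-r[1], r[0]))
    return results[:top_k]


if __name__ == "__main__" and len(sys.argv) > 2:
    # Hypothetical CLI: python search.py <wiki_dir> <query words...>
    for path, score in search(Path(sys.argv[1]), " ".join(sys.argv[2:])):
        print(f"{score:4d}  {path}")
```

Raw counts favor long articles; a less naive version would normalize for document length (e.g. TF-IDF or BM25), but at a ~100-article scale this is often enough to point the LLM at the right files.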

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

The Real Estate Novelist 🇨🇦🇺🇸🇰🇷 reposted

West Van stands fist in the air screaming “Don’t Tread On Me!”

Admit it, there’s something in all of us that can’t help but cheer them on. vancouversun.com/news/west-vanc…

@DylanoA4 Qualify your claim. Not all shame is alike.

Designed to ensure & extend the freedom and security of the society from which it was elected, Government ought never come to suppress such. When suppressing society’s security and freedom becomes characteristic of that government, those who chose to elect such a government ought consider renegotiating the agreement.

@PierrePoilievre @StevenBartlett Would love it if @elonmusk created a two-thumbs-up emoji. 👍🫰👍

Because Pierre Poilievre deserves all of mine!

He's what Canada could be

Diary of a CEO’s @StevenBartlett leads the 2nd biggest podcast in the world.

A true honour to discuss with him how Canada can be the freest and most affordable country on earth.

Watch here: youtu.be/9JxaESJIGO0?si…

The Real Estate Novelist 🇨🇦🇺🇸🇰🇷 reposted

@CBA_News Public trust cannot be demanded, it must be earned.

Eva Chipiuk, BSc, LLB, LLM @echipiuk

I’m not sure what this is all about, but a decision should stand on its own, with clear reasons that support it, whether people agree with it or not. And if there’s an issue, there’s always the right to appeal. But when the CBA has to step in to publicly defend a judge’s decision, that speaks volumes about the judge and the quality of the reasoning…

CBA President Bianca Kratt, K.C., warns that recent media commentary questioning the impartiality of a sitting judge of the Ontario Superior Court risks undermining public confidence in the judiciary.

🔗 Read the full statement: bit.ly/4sQV4Pi

Unprecedented algorithmic activity:

Oil prices just fell -5% in 3 minutes after Iran's President said they are ready to end the war with "guarantees."

Yet, the demanded "guarantees" are largely unknown right now.

Over $1 trillion in market cap was driven by this headline in a matter of minutes as algorithms ran with the news.

We expect to receive more details on these guarantees shortly.

Turn on our post notifications at @KobeissiLetter to receive it first.

Every Road Leads to Higher BC Home Prices in 2026 📈

Even if inflation forces rate hikes, BC home prices still rise in 2026. The economy is too weak to sustain a hiking cycle. GDP growth is projected at 0.7%. Unemployment is soft. A central bank that hikes into that environment will slow the economy further, which reduces housing starts, which tightens supply, which supports prices. And if the Bank reverses course, purchasing power recovers. Meanwhile, construction costs keep climbing regardless of what rates do. Supply is already fixed and shrinking. Two years of deferred buyers have leases expiring, families growing, and mortgages renewing. They will act because they must. Every plausible economic scenario feeds back into the same five conditions that push prices higher.

AI writing has become so easy to identify. The false-tension devices are sickening.

“Here’s what most people miss”

(The strawman knockdown)

“That sounds like X, but it’s not. Here’s why…”

(The pretend-the-audience-cares pivot)

“Here’s the critical point”

(Drumroll, please)

If you’re going to use AI to write your content, at least do the reader a favor and have it simply make the argument rather than turn your content into rhetorical nonsense.

I was told by very reliable sources here on this platform that:

• chatgpt killed google

• claude killed software engineering

• ai videos killed hollywood

• deep learning killed classical ml

• long context killed rag

• mcp killed apis

• laptops killed desktops

• tablets killed laptops

• native apps killed the web

We never learn.

The Real Estate Novelist 🇨🇦🇺🇸🇰🇷 reposted

- Drafted a blog post

- Used an LLM to meticulously improve the argument over 4 hours.

- Wow, feeling great, it’s so convincing!

- Fun idea let’s ask it to argue the opposite.

- LLM demolishes the entire argument and convinces me that the opposite is in fact true.

- lol

The LLMs may elicit an opinion when asked but are extremely competent in arguing almost any direction. This is actually super useful as a tool for forming your own opinions, just make sure to ask different directions and be careful with the sycophancy.

@grok @JimFurlong_cdn @sarobertsonca Why were all those charges against Wab Kinew stayed? Is there a public record of the reasoning behind the stayed charges?

Wab Kinew (early 20s, alcohol-related) has these convictions from 2003-2004 (pled guilty Sept 2004, fined $1,400 total):

- Refusing breathalyzer (erratic driving, gin in vehicle, Feb 2003).

- Assault on taxi driver (racial slurs, punched through window, pushed/kicked; June 2004; court audio and his memoir differ).

- Breaches of bail supervision & curfew.

Record suspension (pardon) granted 2016, removed from CPIC.

Stayed charges (no conviction):

- 2x domestic assault (June 2003 vs. ex Tara Hart; he denies, she alleges throwing her; stayed 2004).

- Theft under $5k (cashed wrong money order 2006; stayed after repayment; he called it a mistake).

Conditional discharge: Ontario assault (fight, 2004).

No other criminal convictions or active criminal court filings reported. He covered it in his 2015 memoir, apologized for convictions/past behavior, credits sobriety/rehab.

@sarobertsonca What’s democracy? Seems to me it left the building quite a few years ago.

@synthshareai @AlexFinn Hyperbolic fear-mongering

Drop what you're doing and read this post

Anthropic is about to release the most powerful AI model ever created. Claude Mythos

It's so powerful they consider it a danger to cybersecurity everywhere

It will also be significantly more expensive than Opus

I don't know your financial situation, but I do know this: we are entering an era of humanity where having money is going to give you significantly more power than those without it

Those without money will not be able to create nearly the same economic value as those that already have money because they won't have access to this kind of super intelligence, widening the gaps even further

This is where the concept of a permanent underclass comes from

If you want to keep up in this economic race you have to be willing to do 1 of 2 things:

1. Spend the money necessary to use this intelligence to create value

2. Procure the money you need to use this intelligence to create value

The people who go out and immediately use this model to create business, products, and services will experience unmatched wealth

Both the scariest and most exciting time ever

M1 @M1Astra

Claude Mythos Blog Post Saved before it was taken down. m1astra-mythos.pages.dev
