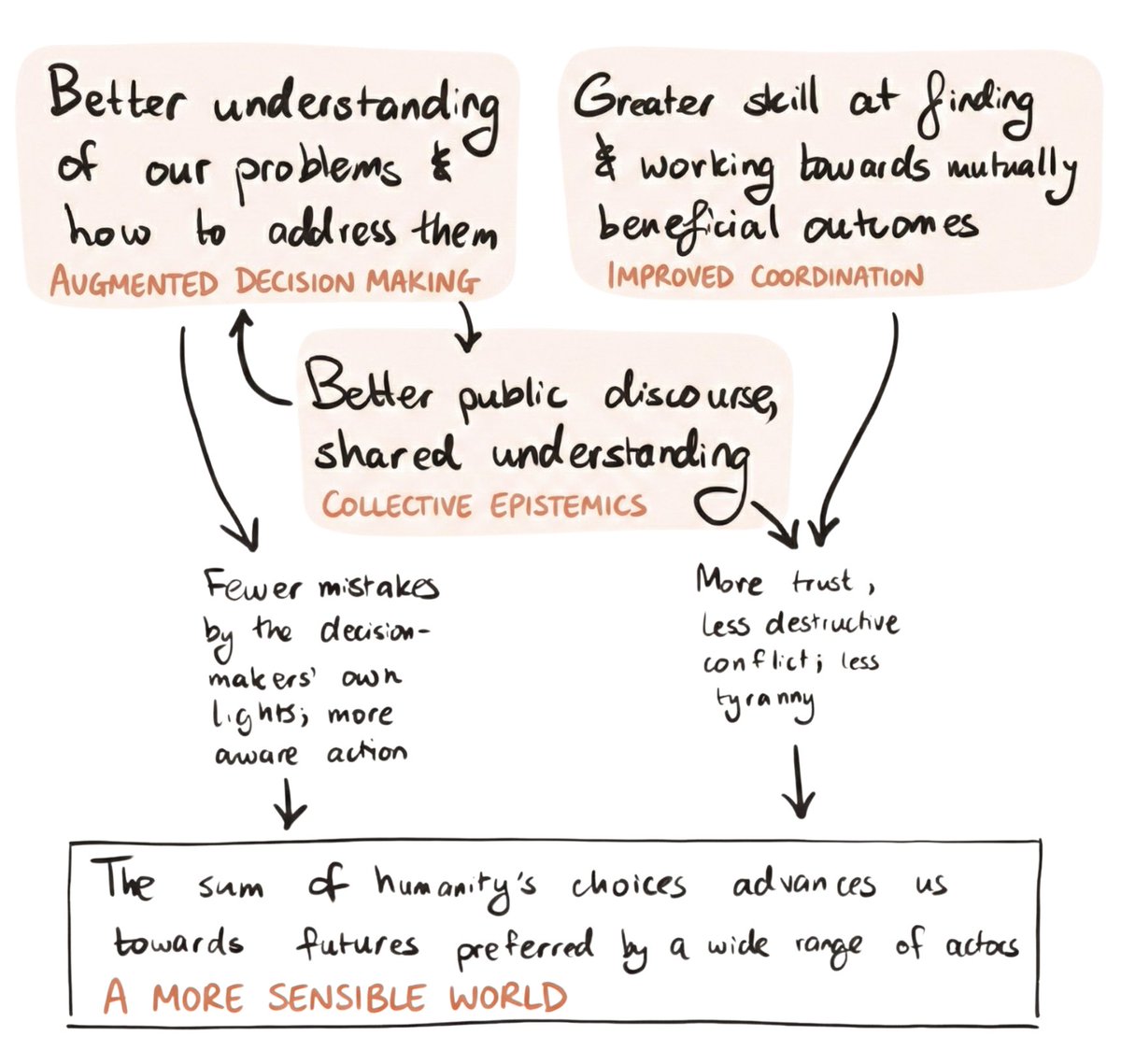

Some agreements depend on uncertainty: you can’t buy house insurance after your house burns down, and you can’t place a bet once the results are in. Some of these agreements may be among the most consequential we can make. Examples include power-sharing agreements between great powers, morally motivated deals between people who care more about some futures than others, and bets on which normative views are vindicated.

Through an intelligence explosion, the veil of ignorance about the long-run future will lift significantly. We make these deals early, or never.

But received wisdom warns against “locking in” major decisions around AGI. We’ll soon have enormous capacity for reflection and understanding, it says, so let’s wait until then before making long-lasting agreements.

I ask: which pre-AGI deals are worth enabling? And what would it take to make them stick? Power-sharing agreements between major powers stand out as important, and morally motivated deals seem the most neglected. We might need reforms or new commitment technology to enable the highest-upside deals, but we’ll want some fairly conservative guardrails too.

Link to article: newsletter.forethought.org/p/should-we-ma…