William MacAskill


@willmacaskill

Consider donating 10% to effective charities: https://t.co/VMXkr4hnd7 Or a career for impact: https://t.co/AUIhrElLkr My research: https://t.co/dEcMWUnNHU

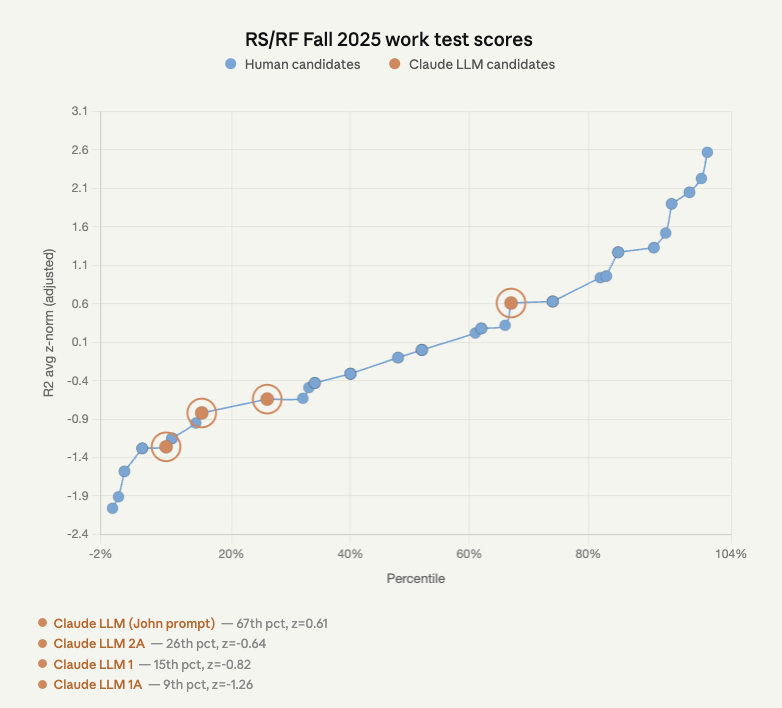

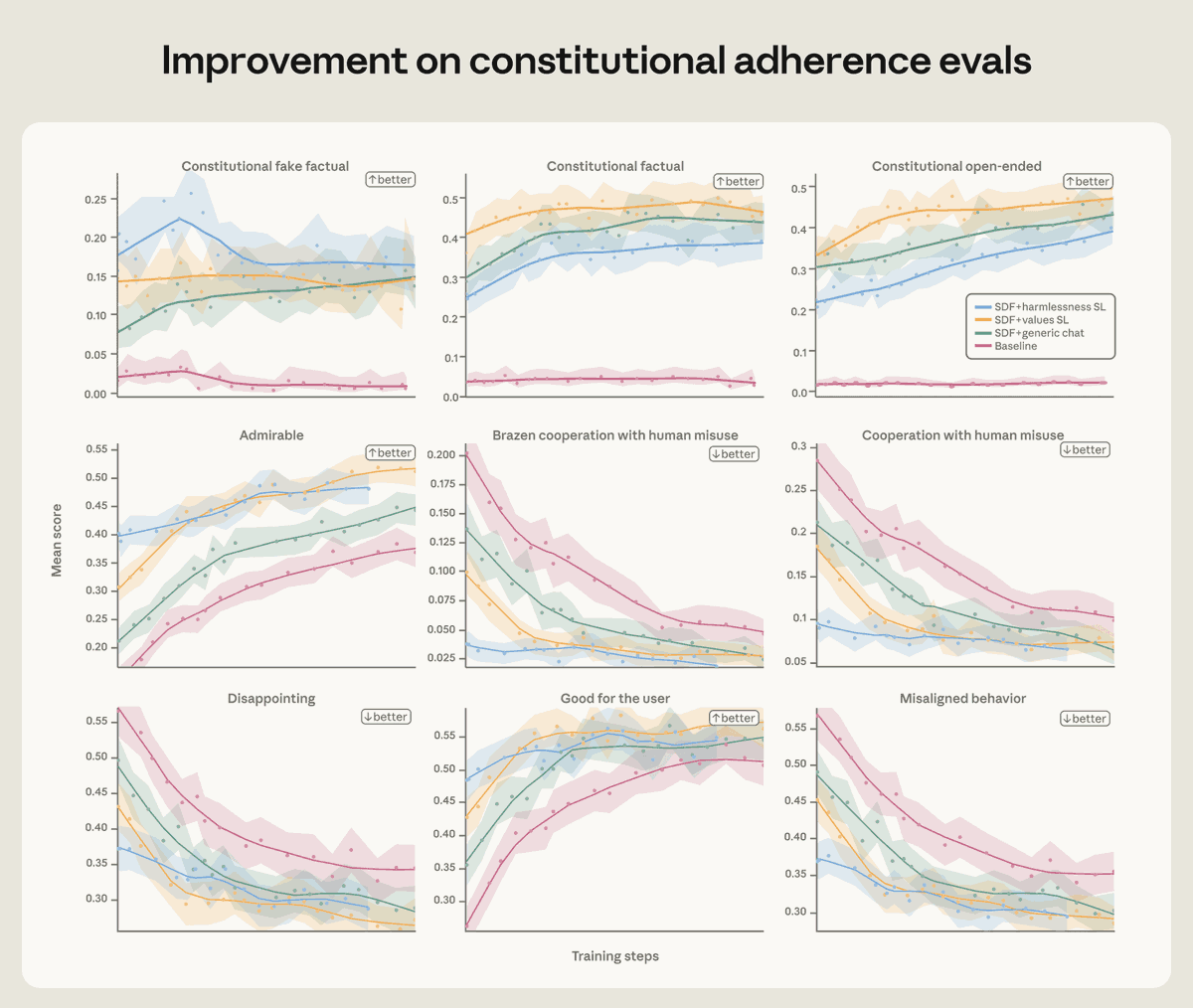

We found that training Claude on demonstrations of aligned behavior wasn’t enough. Our best interventions involved teaching Claude to deeply understand why misaligned behavior is wrong. Read more: anthropic.com/research/teach…

Yoshua Bengio thinks he knows how to make provably safe superintelligent agents.

Bengio built the foundations of modern AI and is the most cited living scientist. He believes his alternative training setup would:

1. Guarantee honesty
2. Prevent unintended goals
3. Produce capable agents
4. Port over most data and techniques from current LLMs
5. Not be inherently more expensive, and perhaps be more intelligent

Bengio claims the honesty and lack of unintended goals can be proven mathematically, at least given particular assumptions. And his new organization, LawZero, is aiming to build a scrappy prototype as soon as possible.

The architecture is called 'Scientist AI' and it's based on training a model to explain empirical observations, including what people say, rather than training AIs that mimic human behaviour or seek our approval. (Bengio's frank assessment is that "reinforcement learning is evil" and that allowing AIs to independently train their successors is "the most crazy, dangerous bet that unfortunately we are on track to do.")

But skeptics question whether Scientist AI really does solve the fundamental problem of 'eliciting latent knowledge' from AI models. And with the commercial race for superintelligence so intense, it's not clear whether the proposal will be able to compete or have time to bear fruit, even if it's sound in theory.

On The 80,000 Hours Podcast, links below – enjoy!

• Making AI honest and safe (00:00:00)
• Scientist AI in plain English (00:02:27)
• How Scientist AI differs from LLMs (00:06:32)
• How the training data works (00:14:02)
• Can this become an agent? (00:21:02)
• Why Yoshua is now more optimistic (00:32:11)
• Why companies can’t stop racing (00:36:35)
• A working prototype won't take long (00:49:15)
• Scientist models might be more capable (00:53:34)
• “Reinforcement learning is evil” (01:01:27)
• Scientist AI from guardrail to agent (01:08:37)
• Can safe AI still be competent? (01:12:38)
• How much will this cost? (01:19:29)
• Can it generalise beyond maths and science? (01:23:26)
• A multi-national push for superintelligence (01:39:19)
• Want to work with or fund Yoshua? (01:51:16)
• Why smart people ignore AI risk (01:54:45)
• Don’t let AI build the next AI (02:01:33)
• Why politicians miss the real risks (02:12:28)
• Why Yoshua changed his mind about AI risk (02:21:27)

We’re sharing the research agenda of The Anthropic Institute, or TAI. TAI will focus on four areas: 1) Economic diffusion 2) Threats and resilience 3) AI systems in the wild 4) AI-driven R&D Read the full agenda: anthropic.com/research/anthr…

We're hiring grantmakers and senior generalists across our Global Catastrophic Risks teams. Right now, our biggest constraint is people, not funding, which means every strong hire directly translates into more critical work getting done. 🧵

For humans and advanced AI systems to be able to make honest deals and avoid negative-sum conflict, AIs will need reasons to trust us. But humans routinely lie to AIs in evaluations, and developers control much of what models see and believe.

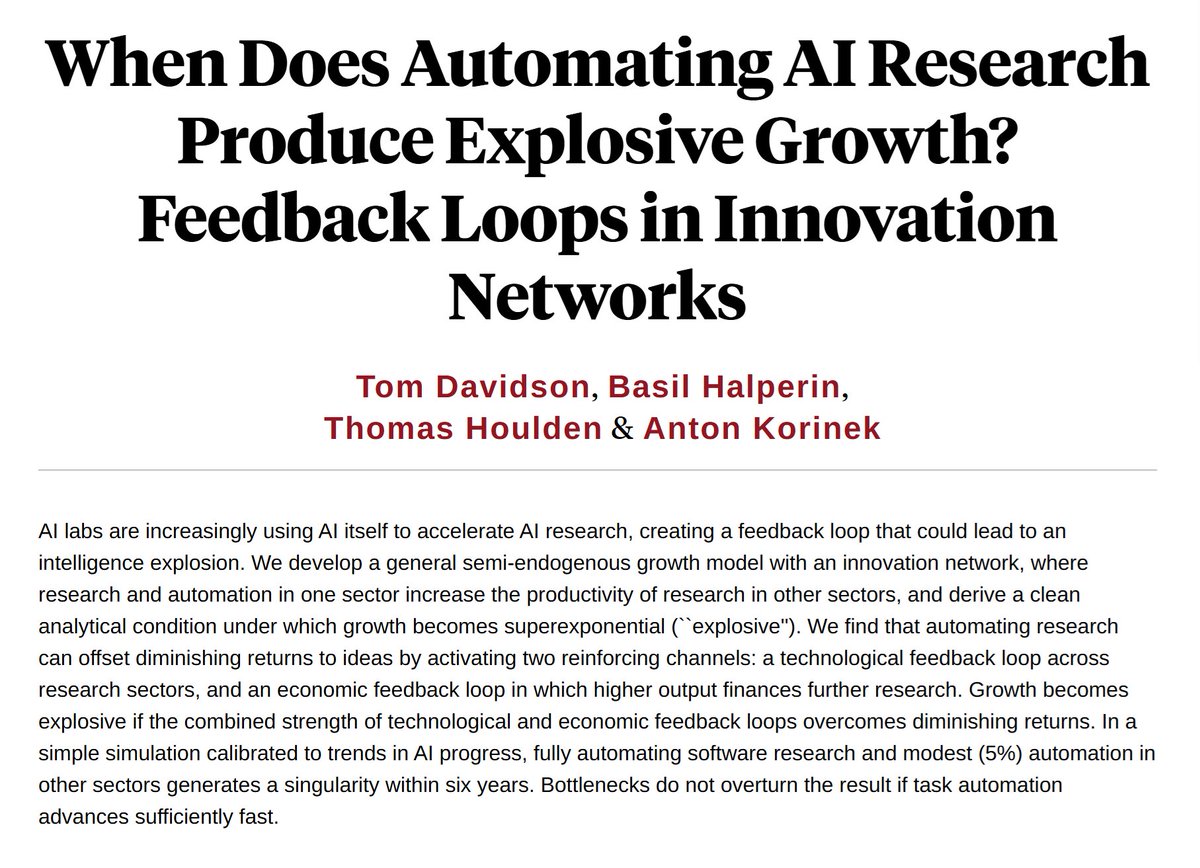

1/🆕 New NBER paper: 𝗪𝗵𝗲𝗻 𝗗𝗼𝗲𝘀 𝗔𝘂𝘁𝗼𝗺𝗮𝘁𝗶𝗻𝗴 𝗔𝗜 𝗥𝗲𝘀𝗲𝗮𝗿𝗰𝗵 𝗣𝗿𝗼𝗱𝘂𝗰𝗲 𝗘𝘅𝗽𝗹𝗼𝘀𝗶𝘃𝗲 𝗚𝗿𝗼𝘄𝘁𝗵? Under empirically grounded calibrations, a singularity could arrive within just a few years of automating AI research. 🧵 📄 nber.org/papers/w35155