foufou


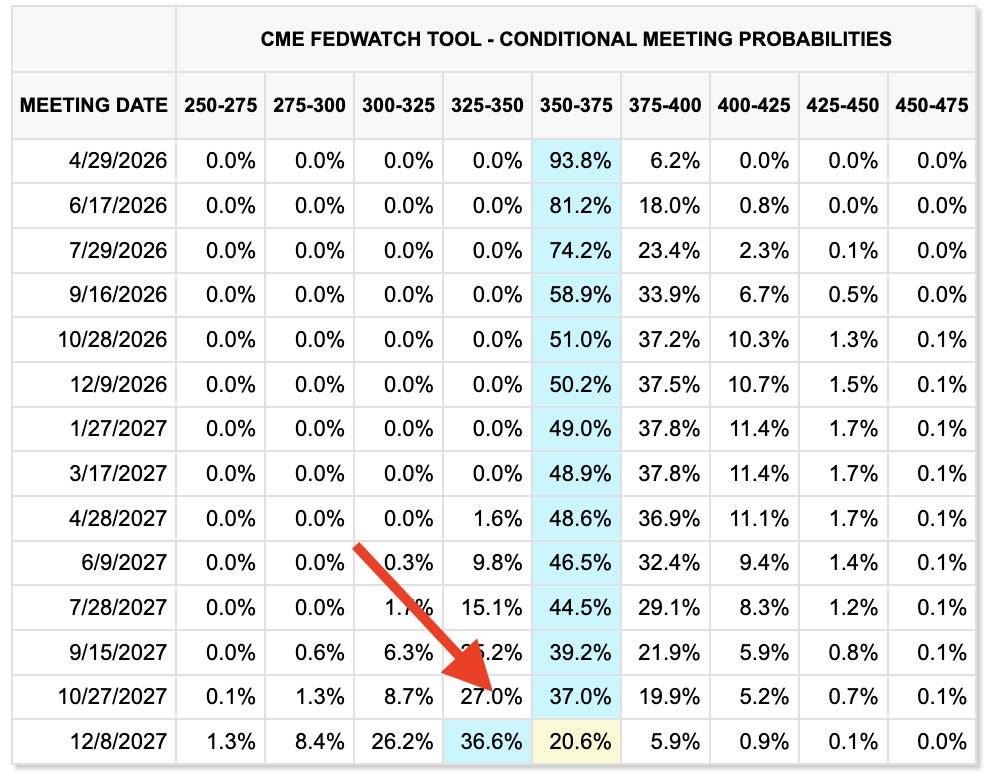

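More than a few projects have now asked me to test their AI trading architectures, so let me make a few quick points.

1. Show a long-term live track record. A short run on some hyped-up coin with no max drawdown (max DD) figure proves nothing; it is just survivorship bias. Pick any coin that has already gone to zero, backtest it in reverse, and the curve looks just as pretty.
2. If the Sharpe ratio is above 5, it is almost certainly overfitting, look-ahead bias, or data leakage. Medallion has run at only about 2-3 year after year; if you got 7 on your own at home, be honest with yourself.
3. Testing on a stretch of crypto bull-market data is not the same as backtesting on broad market data. It is pure overfitting, and I mostly won't look at it. At a minimum it has to survive the 2018 and 2022 bear markets and then pass a walk-forward run before it counts as a strategy.
4. Fees, slippage, and funding rates all have to be modeled. Work through Binance's maker/taker fees, VIP tiers, and BNB discount layer by layer; if the cost model is off, backtest and live results can differ by a factor of two in annualized return, and that is normal.
5. Strategy capacity matters more than return. Working at $100K does not mean it still works at $1M. Small coins only have so much depth; the moment you enter, you wipe out your own signal, and the backtest does not reflect that at all.
6. Crypto quant really is less crowded, but arbitrage opportunities keep getting eaten away: funding arb, spot-futures basis, and cross-exchange spreads have mostly been picked clean by market makers and HFT. High frequency is out of reach, pure factor strategies have no room either, so what is left is the two old roads of trend following and mean reversion.
7. Alpha has a half-life. A strategy that still works three months after going live gets a passing grade; six months is good; if it is still there after a year, most likely you got lucky or your size is not yet big enough to attract attention. Don't mistake the windfall from one bull run for perpetual alpha. You are not that good yet.
8. "Optimal parameters" from a grid search are 99% overfitting. Truly stable parameters are ones where any value you pick within a range works, not ones that only work when tuned to two decimal places. Parameter robustness matters a hundred times more than single-point returns; anyone who has done this knows.
9. 2017 ICOs, 2020 DeFi summer, 2021 memes, 2022 LUNA/FTX, the 2023 AI narrative: each period had a completely different market structure. The "pattern" you fit in the previous one goes straight to zero in a new regime, and you lose the fees on top.
10. Exchange risk is always bigger than you think. FTX going to zero, API rate limits, liquidation on a wick, small exchanges running off with funds, Binance suddenly delisting a coin: any one of these ends the game in a single hit. A 50% annualized return cannot survive one exchange blowup, and that has nothing to do with how good the strategy is. If you trade altcoins, you have to account for liquidity and "delisting risk".
11. The ups and downs of a backtest curve look lovely, but when your own account's net value falls three weeks in a row, 90% of people shut the program down and start tuning parameters by hand.
12. Know whether you are earning alpha or beta. In a bull market everyone is a quant master; when the bear market arrives, everyone who only had beta gets washed away. Strip out the long exposure and look at the alpha curve by itself: most so-called "strategies" have no alpha at all, they are just long BTC in disguise plus a bit of volatility.
13. ML in quant is full of false prosperity. LSTMs, Transformers, and reinforcement learning get hyped to the sky, but on financial time series with extremely low SNR, a naive momentum factor plus sensible risk control can beat the XGBoost you tuned ten thousand times.

Once you actually start learning it, quant really is damn hard.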
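Several of the checks above (max drawdown in point 1, the Sharpe sanity check in point 2, trading costs in point 4, and the alpha/beta split in point 12) are simple enough to compute by hand. What follows is only a minimal sketch, not the author's method. It assumes a zero risk-free rate, daily returns annualized over 365 periods because crypto trades every day, and a flat cost per unit of turnover standing in for a real fee, slippage, and funding model. All function names are hypothetical.

```python
import math
from statistics import mean, stdev


def sharpe_ratio(returns, periods_per_year=365):
    """Annualized Sharpe ratio of per-period returns (risk-free rate assumed 0)."""
    sd = stdev(returns)
    if sd == 0:
        return float("nan")
    return mean(returns) / sd * math.sqrt(periods_per_year)


def max_drawdown(returns):
    """Largest peak-to-trough loss of the compounded equity curve, as a fraction."""
    equity, peak, worst = 1.0, 1.0, 0.0
    for r in returns:
        equity *= 1.0 + r
        peak = max(peak, equity)
        worst = max(worst, 1.0 - equity / peak)
    return worst


def alpha_beta(strategy, benchmark):
    """Least-squares fit of strategy = alpha + beta * benchmark (alpha is per period)."""
    mx, my = mean(benchmark), mean(strategy)
    var = sum((x - mx) ** 2 for x in benchmark)
    cov = sum((x - mx) * (y - my) for x, y in zip(benchmark, strategy))
    beta = cov / var
    return my - beta * mx, beta


def apply_costs(gross_returns, turnover, cost_per_turnover=0.001):
    """Subtract an assumed flat cost (fees plus slippage) scaled by each period's turnover."""
    return [r - t * cost_per_turnover for r, t in zip(gross_returns, turnover)]


# Toy inputs, not real market data.
btc = [0.02, -0.01, 0.03, -0.04, 0.01, 0.02]
strategy = [0.001 + 2 * r for r in btc]  # leveraged long BTC plus a small edge
alpha, beta = alpha_beta(strategy, btc)
net = apply_costs(strategy, turnover=[1.0] * len(strategy))
print(f"alpha per period={alpha:.4f}, beta={beta:.2f}")
print(f"max drawdown={max_drawdown(net):.2%}, Sharpe={sharpe_ratio(net):.2f}")
```

On a real track record, a Sharpe above 5 from `sharpe_ratio` would be the cue to look for leakage, and a beta near 1 or higher with negligible alpha would suggest the "strategy" is mostly long exposure.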

Hello, inflation!

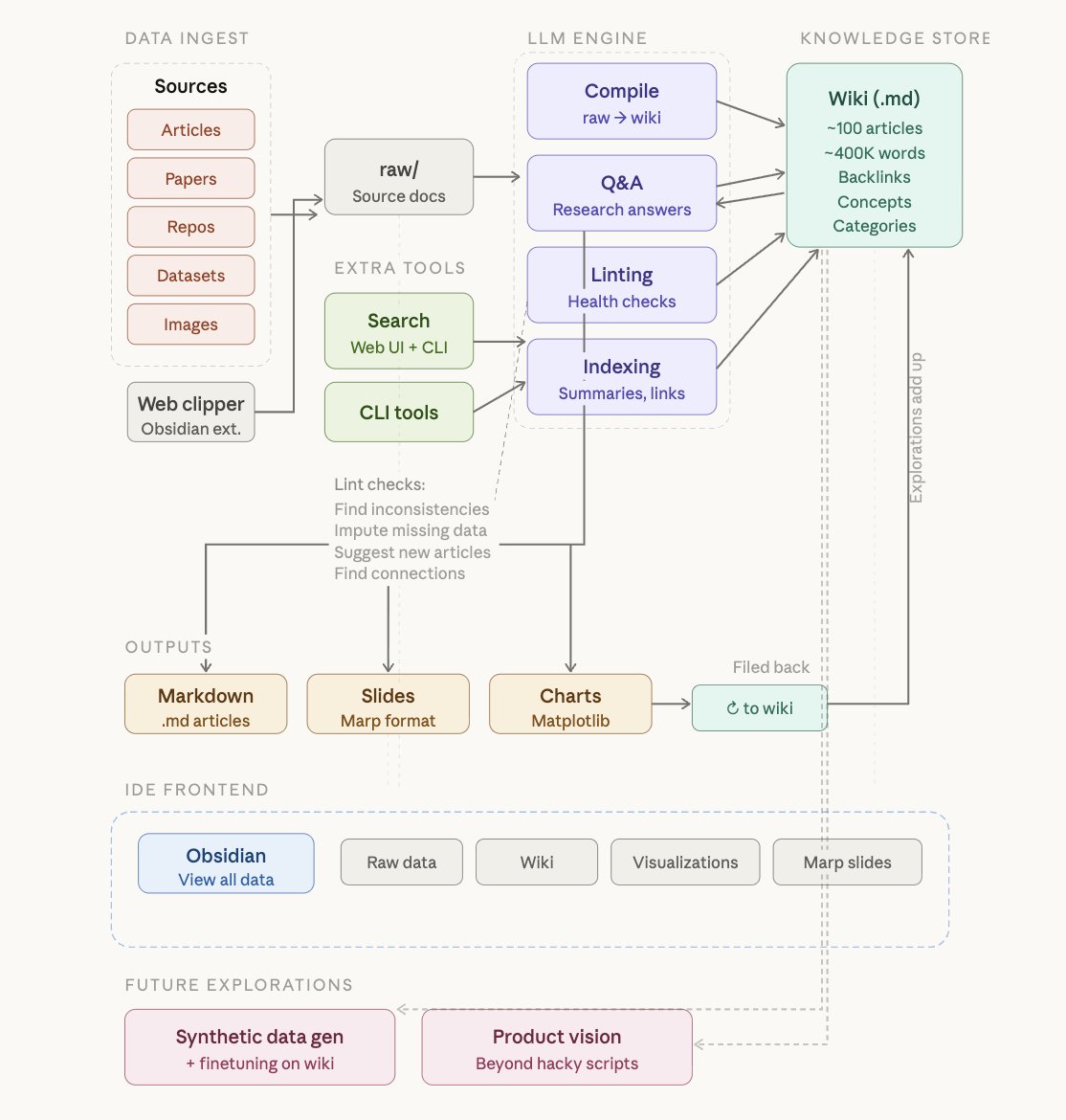

Wow, this tweet went very viral! I wanted to share a possibly slightly improved version of the tweet in an "idea file". The idea of the idea file is that in this era of LLM agents, there is less of a point/need to share the specific code/app; you just share the idea, then the other person's agent customizes & builds it for their specific needs. So here's the idea in a gist format: gist.github.com/karpathy/442a6… You can give this to your agent and it can build you your own LLM wiki and guide you on how to use it etc. It's intentionally kept a little bit abstract/vague because there are so many directions to take this in. And ofc, people can adjust the idea or contribute their own in the Discussion, which is cool.

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents, and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web UI), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
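The post does not say how the "small and naive search engine" mentioned under extra tools works, so the following is only a guess at what such a tool might look like: an inverted index over the wiki's .md files with a simple TF-IDF style score. The function names, the tokenizer, and the scoring formula are all assumptions made for illustration.

```python
import math
import re
from collections import Counter
from pathlib import Path


def tokenize(text):
    """Lowercase and split into alphanumeric terms (a deliberately crude tokenizer)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def load_wiki(root):
    """Read every .md file under root into {relative_path: text}."""
    root = Path(root)
    return {
        str(p.relative_to(root)): p.read_text(encoding="utf-8")
        for p in sorted(root.rglob("*.md"))
    }


def build_index(docs):
    """Inverted index: term -> {doc_name: number of occurrences}."""
    index = {}
    for name, text in docs.items():
        for term, count in Counter(tokenize(text)).items():
            index.setdefault(term, {})[name] = count
    return index


def search(index, n_docs, query, top_k=5):
    """Rank documents by a summed log-TF times IDF score over the query terms."""
    scores = Counter()
    for term in set(tokenize(query)):
        postings = index.get(term, {})
        if not postings:
            continue
        idf = math.log(1 + n_docs / len(postings))
        for name, count in postings.items():
            scores[name] += (1 + math.log(count)) * idf
    return scores.most_common(top_k)


# In-memory toy wiki; with real files, docs = load_wiki("wiki/") (path is hypothetical).
docs = {
    "concepts/sharpe.md": "Sharpe ratio and drawdown notes",
    "tools/obsidian.md": "Obsidian Web Clipper setup",
}
index = build_index(docs)
print(search(index, len(docs), "obsidian clipper"))
```

Wrapping `search` in a small `argparse` entry point would make it callable from the command line, which is roughly the shape an agent needs in order to use it as a tool.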