Franck Verrot



@franckverrot

Engineering @omadahealth. Building better healthcare by day, AI harnesses and simulators at night. Opinions mine.

During allergy season, when someone asks "how was your weekend?", I just say I stayed home. What I don't say is that I spent an entire day on an "interesting" problem.

We had long conversations about colleges this weekend. My son shared the major he was pursuing, that D1 soccer was high on his list of requirements, and that it ideally shouldn't break the (i.e., "Dad's") bank. All these requirements add up (state, school size, "I'm a city guy, Dad, not sure about UCSB", SUs vs UCs vs ...). I probably went overboard, but for once I said no to a simple spreadsheet. I grew up in a simpler time, in a simpler system too (not sure it's still that simple there). So... I built an app.

I tried running a 0.8B-parameter language model on an iPhone to parse natural-language queries into structured filters. Precision was very low (9%), and half the time it just produced garbage JSON. I replaced it with a 60M+ parameter classifier with 14 heads (one per filter dimension): 100% precision, no parse errors, and around 15 ms inference time on-device.

If you're an AI engineer, you may find the technical writeup interesting. If you're a parent, just know an app is on the way. franck.verrot.us/blog/2026/03/2…
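The post doesn't show the model itself, but the reason a per-dimension classifier can't emit broken JSON is structural: each head picks one label from a fixed set, and the filter object is assembled in code rather than generated token by token. Below is a minimal Python sketch under heavy assumptions: the filter names, label sets, hashed bag-of-words encoder, and random (untrained) weights are all hypothetical stand-ins, and only 3 of the 14 heads are shown.

```python
import json
import random
import zlib

# Hypothetical filter dimensions and label sets (3 heads instead of 14).
HEADS = {
    "state": ["any", "CA", "NY", "TX"],
    "school_size": ["any", "small", "medium", "large"],
    "d1_soccer": ["any", "yes", "no"],
}

HIDDEN = 8  # size of the shared feature vector (hypothetical)


def encode(query, hidden=HIDDEN):
    """Toy shared encoder: hashed bag-of-words.

    Stand-in for a real learned encoder; crc32 keeps it deterministic.
    """
    vec = [0.0] * hidden
    for token in query.lower().split():
        vec[zlib.crc32(token.encode()) % hidden] += 1.0
    return vec


def argmax(values):
    """Index of the largest value (first one wins on ties)."""
    return max(range(len(values)), key=values.__getitem__)


class MultiHeadClassifier:
    """One linear head per filter dimension on top of a shared feature vector."""

    def __init__(self, heads, hidden, seed=0):
        rng = random.Random(seed)
        self.heads = heads
        # Random weights stand in for trained ones: one row per label, per head.
        self.weights = {
            name: [[rng.uniform(-1, 1) for _ in range(hidden)] for _ in labels]
            for name, labels in heads.items()
        }

    def predict(self, features):
        """Return {dimension: label}; every value comes from a fixed label set."""
        filters = {}
        for name, labels in self.heads.items():
            logits = [
                sum(w * x for w, x in zip(row, features))
                for row in self.weights[name]
            ]
            filters[name] = labels[argmax(logits)]
        return filters


model = MultiHeadClassifier(HEADS, HIDDEN)
filters = model.predict(encode("D1 soccer schools in California, not too big"))
print(json.dumps(filters))
```

Because the weights here are untrained, the chosen labels are arbitrary; the point of the sketch is that the output always has exactly one valid label per dimension, so serializing it to JSON cannot fail the way free-form generation can.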


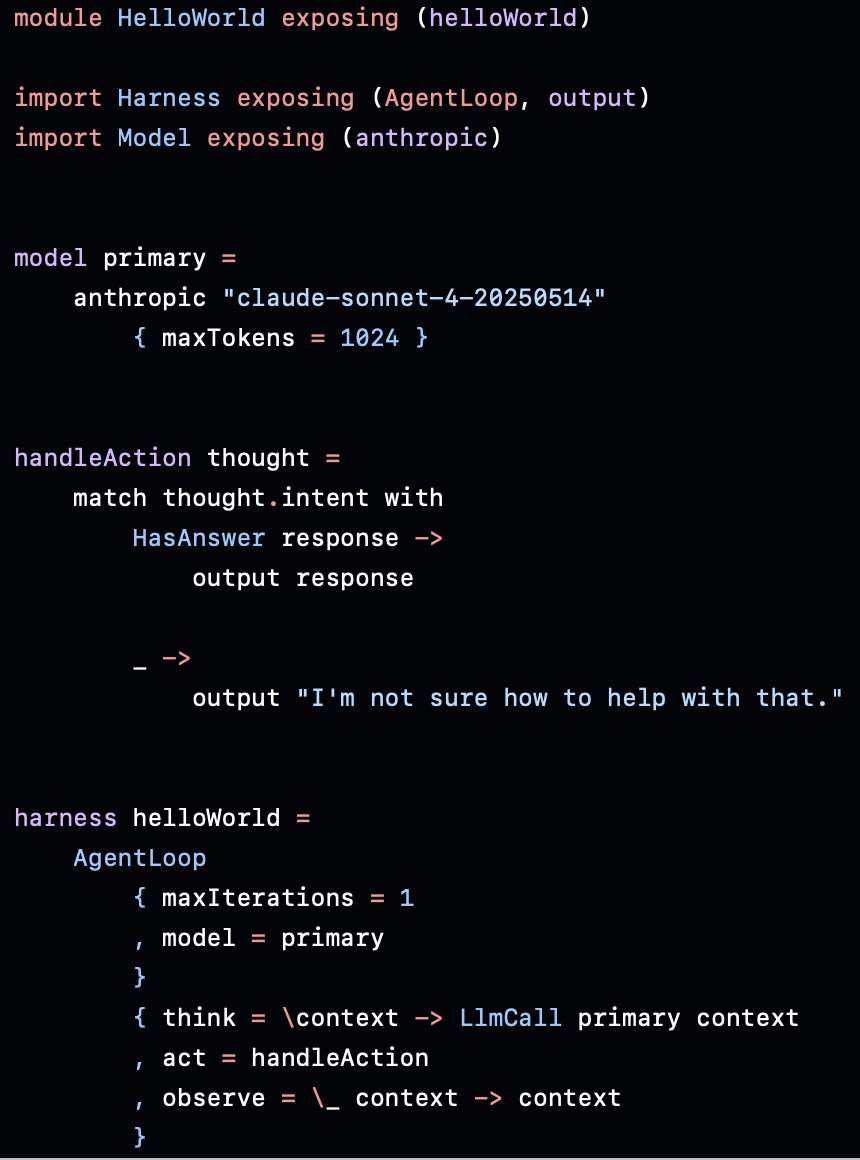

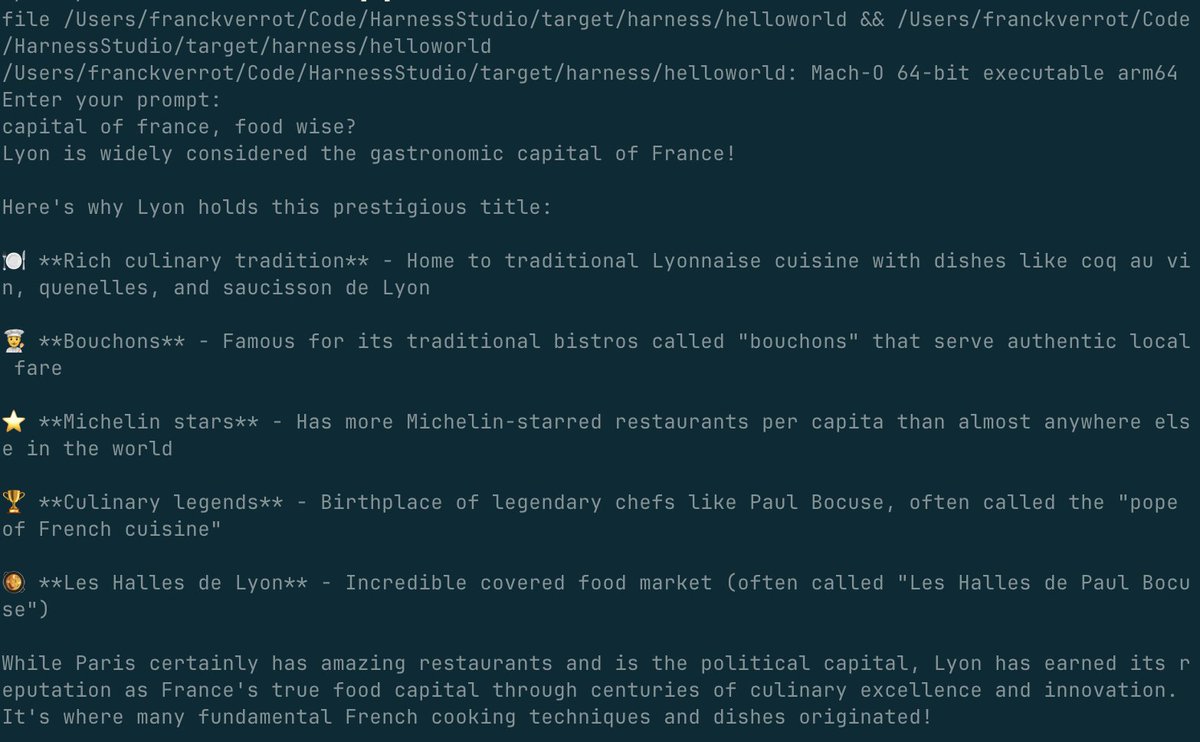

@berman66 Agreed, and even for individual devs and their side projects, this is too wild. Maybe I should make this tool public (macOS only).

This is not an emulator, I repeat, this is not an emulator. This is a fully native Swift engine for Pokémon built by Codex and designed by me!

1) Medieval Inn Prompt: "Men drinking and dancing in the medieval inn. [cut] Close-up shot of beer being poured into a clay medieval mug. [cut] Man drinking beer from a clay mug. No talking."

GLM-5, Gameboy and Long-Task Era → 700+ tool calls, 800+ context handoffs, and a single agent running for over 24 hours. blog.e01.ai/glm5-gameboy-a…