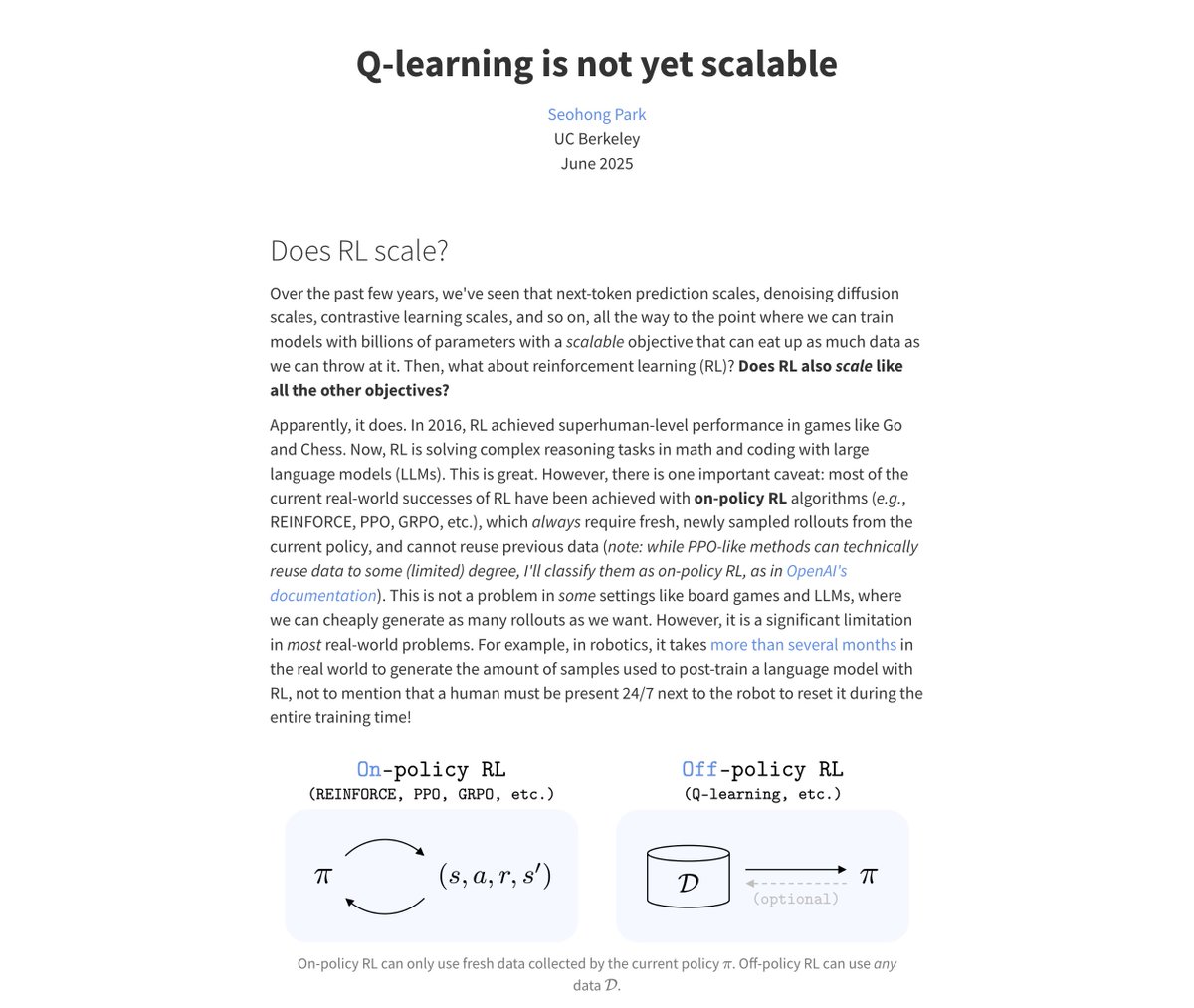

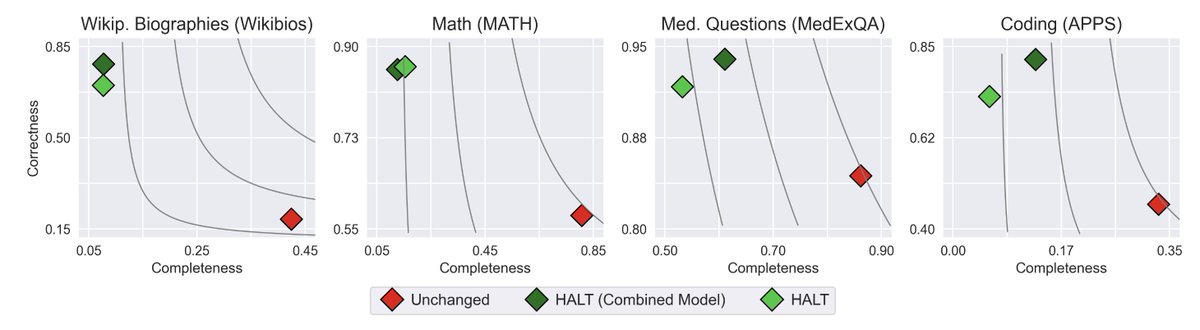

What if LLMs knew when to stop? 🚧 HALT finetuning teaches LLMs to only generate content they’re confident is correct. 🔍 Insight: Post-training must be adjusted to the model’s capabilities. ⚖️ Tunable trade-off: Higher correctness 🔒 vs. More completeness 📝 with @AIatMeta 🧵