Fritz Obermeyer

249 posts

@ftzo

Program synthesis is the future. λ-calculus will replace vector spaces as the core ML representation.

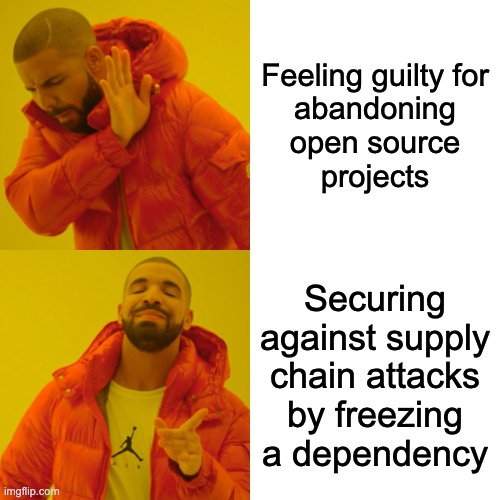

TeamPCP got an infostealer into LiteLLM 1.82.7 and 1.82.8. The litellm C2 is models[.]litellm.[]cloud. Act fast. github.com/BerriAI/litell…

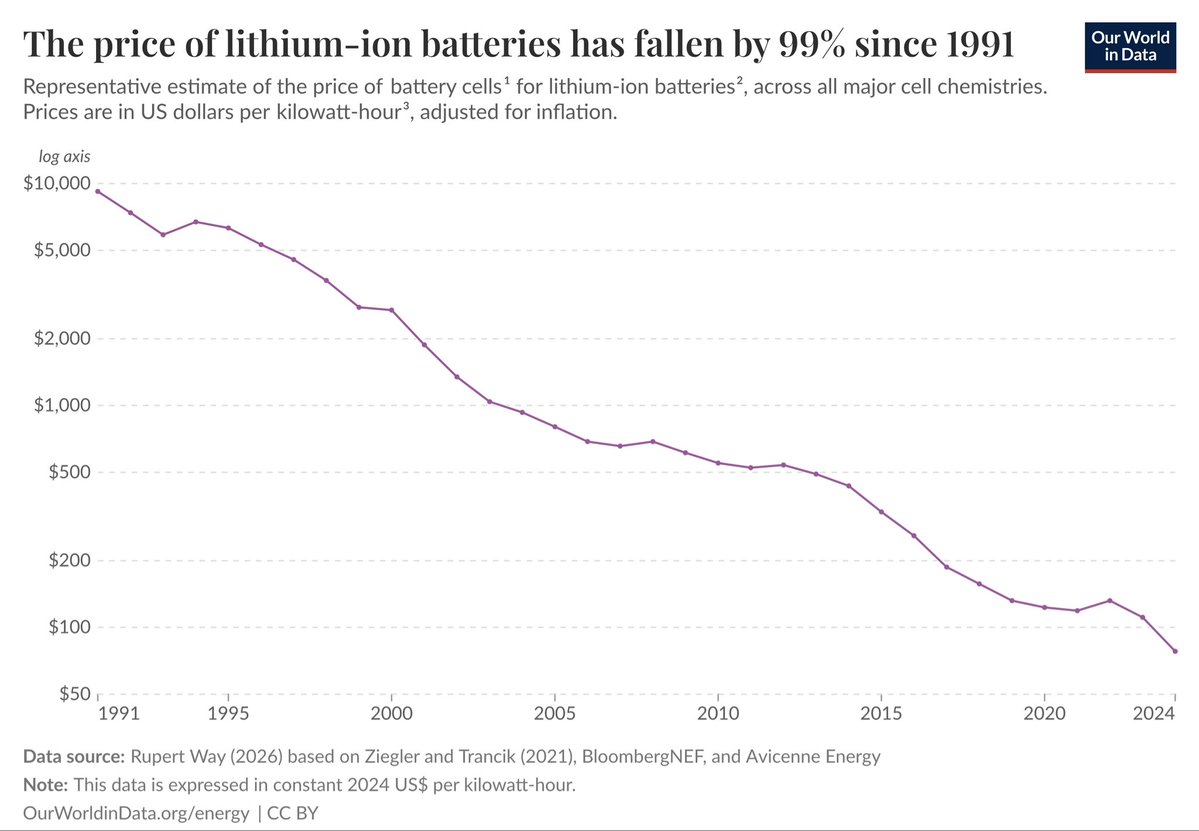

It’s actually insane what SpaceX is doing to the space industry right now. In 1981, it cost ~$65,000 to put 1 kg into orbit. For 50 years, the industry accepted this as the standard. Reusable rockets were "impossible". Then one company, led by a man obsessed with getting humanity to Mars, decided that $65,000/kg was unacceptable. Right now, Elon and the SpaceX team are building Starship to hit $10–$20/kg. That is a massive ~4,000x price collapse. It’s actually wild that we get to watch this happen in real time.

1/🧵 We are very excited to release our new paper! From Entropy to Epiplexity: Rethinking Information for Computationally Bounded Intelligence arxiv.org/abs/2601.03220 with amazing team @ShikaiQiu @yidingjiang @Pavel_Izmailov @zicokolter @andrewgwils

>wake up with a mild sense of doom >check CO2 meter — 1400ppm >ah

Speaking as someone who studied joint mathematics and computer science, this right here is how you tell apart the mathematicians from the computer scientists.