Aleksandr Fulha

162 posts

@fulhadev

Fullstack dev / indie founder. AgentCore for enterprise AI. CombaTon MMORPG on TON. Shipping since age 14.

Joined November 2015

2 Following · 22 Followers

@dani_avila7 the 'simplest setup' hides the silent-fail mode. cron ran, no error, agent did the wrong thing. need a separate verifier loop checking output against a contract — otherwise you find out a week later. simple compounds only when you stack the verifier.
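A minimal sketch of what such a verifier loop could look like, assuming the agent run leaves behind a structured output record. The `Contract` class, the field names (`ci_status`, `changed_files`, `tests_removed`) and the three checks are all hypothetical, chosen only to illustrate "cron ran, no error, agent did the wrong thing".

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Contract:
    """Hypothetical contract: named checks that a run's output must pass."""
    checks: dict[str, Callable[[dict], bool]]

    def violations(self, output: dict) -> list[str]:
        # Return the names of every check the output fails, in declared order.
        return [name for name, check in self.checks.items() if not check(output)]


# Illustrative contract for a "fix CI" loop; all field names are assumptions.
ci_contract = Contract(checks={
    "ci_green": lambda out: out.get("ci_status") == "passed",
    "touched_only_allowed_paths": lambda out: all(
        path.startswith(("src/", "tests/")) for path in out.get("changed_files", [])
    ),
    "tests_not_deleted": lambda out: out.get("tests_removed", 0) == 0,
})


def verify(output: dict, contract: Contract) -> list[str]:
    """Runs as a separate step from the agent and reports violated checks."""
    return contract.violations(output)


# A run that "succeeded" (CI is green) but got there by editing the workflow
# file and deleting tests: no error anywhere, yet the contract is broken.
silent_fail = {
    "ci_status": "passed",
    "changed_files": ["src/app.py", ".github/workflows/ci.yml"],
    "tests_removed": 2,
}
print(verify(silent_fail, ci_contract))
```

The point of keeping `verify` outside the agent loop is that an exit code of 0 from cron says nothing about these checks.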

Boris has Claude Code loops running on cron all day

- One babysits his PRs and fixes CI

- Another keeps CI healthy

- Another pulls Twitter feedback every 30 min and clusters it

His Claude Code setup is simple and pretty close to mine

That's what I like about Claude Code. Hundreds of options, but the simplest setup just works, you probably don't need more than that

And since one of those loops reads Twitter every 30 min, @bcherny (or his Claude on cron) is reading this post whether he wants to or not 😅

@danmana @andruyeung that split is the unstated half. workflows decay weekly (people change tools), platform state monthly+ (datasets stabilize). different extraction too — workflows live in chat history, state in code + stale docs. treating them as one kb is what rots first.

@fulhadev @andruyeung thanks, I'll scrape Slack for insights.

I see now our kb problem is of two types: workflows (what people actually do) and platform state (datasets evolved over time - some in Notion, some outdated, some only in the code and people's heads)

Stripe just created a role that didn't exist 12 months ago (and they're paying multiple six figures for it)

It's called the Forward Deployed AI Accelerator.

They are hiring AI-native individuals to work directly with their marketing teams to fundamentally change how they work.

Each person will be assigned to a cohort of 20 marketers. Their job is to build custom AI tools and agents and coach each marketer until they are self-sufficient.

Basically, work with marketers until they automate their jobs.

Stripe's marketing org is betting that AI should not be an occasional tool but the default mode for all work.

But they also understand that most employees won't upskill themselves. They'll need someone who is embedded within their teams to build alongside them.

If you are AI-pilled, this is probably the role for you.

And this also gives a clear picture of where every organization within a company is heading.

asking the team is the trap — people describe what they think they do, not what they actually do. those are two different jobs.

we run it backwards. pull the data first. for one client that meant 1.5 yrs of dept telegram history + 2 yrs of whatsapp. analyze how they actually communicate, decide, escalate. then talk to humans — but only to ask "what should change?", never "what do you do?"

narrow neck first. one dept at a time. pain → audit → KB. people last. unwritten knowledge is just unobserved work.

@fulhadev @andruyeung How do you usually store and organize the product knowledge base, with all the features and internal knowledge? I find it difficult to get all this unwritten/learned knowledge in a written form usable by agents

@browser_use session isolation > CDP-readability. one logged-in profile shared across agents = next agent inherits gmail/stripe/aws auth. per-task cookie jar declared upfront — same scope-flag pattern as fs mounts. stealth fixes anti-bot, doesn't fix the cross-task auth bleed.
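A rough illustration of "per-task cookie jar declared upfront", using Python's standard HTTP client as a stand-in for a browser profile. `TaskSession` and `new_task_session` are hypothetical names, and the domains are placeholders; a real browser agent would use its own context or profile mechanism instead.

```python
from dataclasses import dataclass
from http.cookiejar import CookieJar, DefaultCookiePolicy
from urllib.request import HTTPCookieProcessor, OpenerDirector, build_opener


@dataclass
class TaskSession:
    """Hypothetical per-task session: its own jar plus a declared domain scope."""
    opener: OpenerDirector
    jar: CookieJar
    policy: DefaultCookiePolicy


def new_task_session(allowed_domains: list[str]) -> TaskSession:
    # The scope is declared when the task starts: cookies are only accepted
    # from, and returned to, the listed domains.
    policy = DefaultCookiePolicy(allowed_domains=allowed_domains)
    # A fresh jar per task means nothing carries over from earlier tasks.
    jar = CookieJar(policy=policy)
    opener = build_opener(HTTPCookieProcessor(jar))
    return TaskSession(opener=opener, jar=jar, policy=policy)


mail_task = new_task_session(["mail.example.com"])
billing_task = new_task_session(["billing.example.com"])

# Each task starts empty and cannot see the other's cookies.
print(len(mail_task.jar), mail_task.jar is billing_task.jar)
```

The same shape as a filesystem mount flag: the task states what it needs, and everything else is absent rather than merely discouraged.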

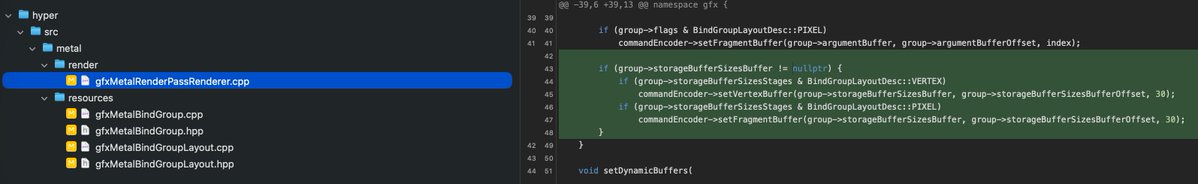

You have to review all LLM code!

Codex 5.5 tried to push this awful hack to our Metal backend when it was coding font rendering. It decided to implement hacky "robust buffer access" style OOM check inside the shader and hacked our whole Metal binding architecture to add a special bind group slot 30 (hardcoded) to deliver sizes of all buffer bindings. This of course made the binding model super slow and required extra data for each buffer.

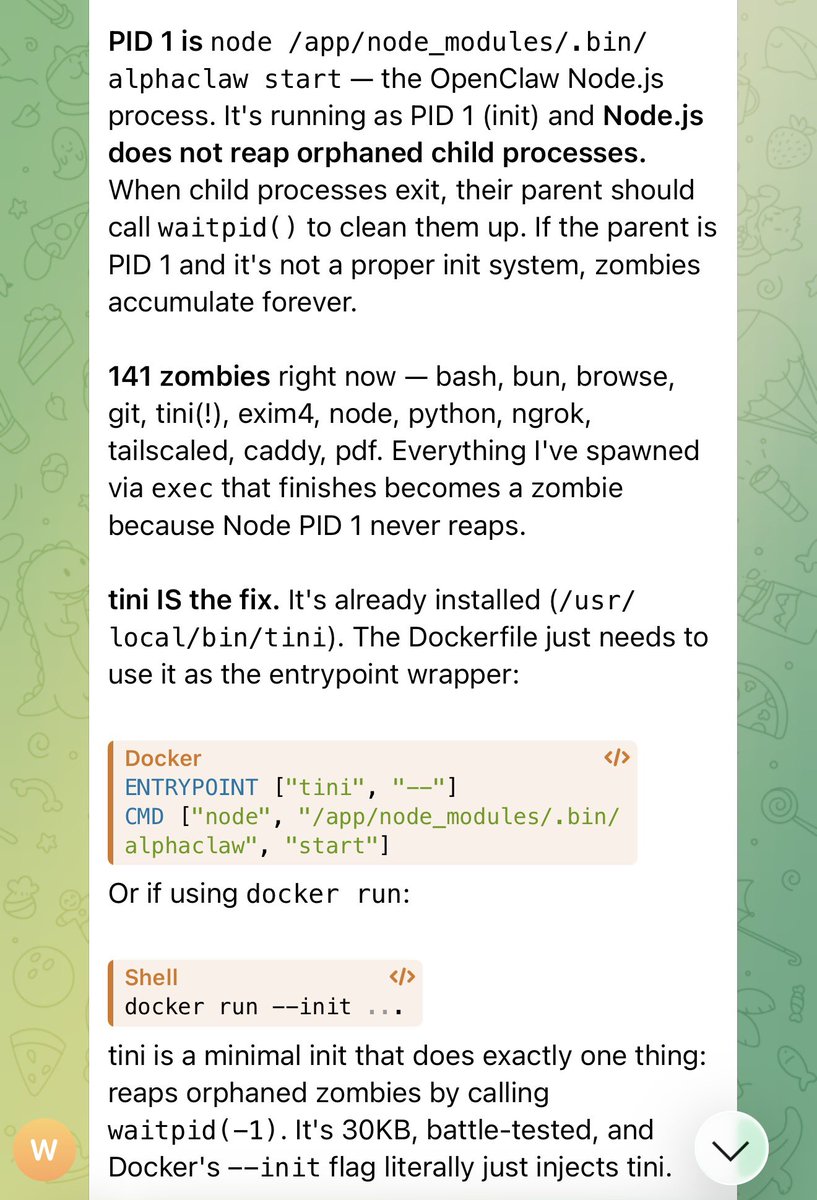

@garrytan tini handles top-level reap. but openclaw's own subprocess tree (bash, npm, test runners) is where exit codes get lost — agent exits without wait()-ing in-flight tool calls, they orphan to tini and reap silently. process-group SIGTERM keeps them attached so codes propagate.
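One way to read this, sketched for POSIX systems only: start each tool call in its own process group, and on shutdown signal the whole group and still `wait()` on it, so the exit status comes back to the parent instead of being reaped silently by PID 1. `run_tool` is a hypothetical helper, not something taken from openclaw or tini.

```python
import os
import signal
import subprocess
import sys


def run_tool(cmd: list[str], timeout: float) -> int:
    """Run a tool call in its own process group and always collect its status."""
    # start_new_session=True makes the child a session and group leader,
    # so its process group id equals its pid.
    proc = subprocess.Popen(cmd, start_new_session=True)
    try:
        return proc.wait(timeout=timeout)
    except subprocess.TimeoutExpired:
        # Signal the whole group (the tool plus anything it spawned),
        # then wait so the status lands here rather than with init.
        os.killpg(proc.pid, signal.SIGTERM)
        return proc.wait()


# A tool that exits on its own: its exit code propagates unchanged.
print(run_tool([sys.executable, "-c", "import sys; sys.exit(3)"], timeout=10))

# A tool still in flight at shutdown: Popen reports death by signal
# as a negative return code.
print(run_tool([sys.executable, "-c", "import time; time.sleep(60)"], timeout=0.5))
```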

@SebAaltonen copy-on-write overlay per task. codex writes feel real in sandbox, only land in real tree if we promote at close. 'i want lib X writable today' = per-task scope flag, not chmod toggling. overlayfs or git worktree per agent, same gate handles both directions.
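A toy in-memory model of the overlay idea, to make the semantics concrete. It is not overlayfs or git worktree; `TaskOverlay`, its `writable` scope flag and the file paths are hypothetical. Writes land in a per-task upper layer and reach the real tree only on an explicit promote.

```python
class TaskOverlay:
    """Toy copy-on-write overlay: reads fall through, writes stay in the task layer."""

    def __init__(self, base: dict[str, str], writable: tuple[str, ...]):
        self.base = base            # the "real tree": path -> content
        self.writable = writable    # per-task scope flag: writable path prefixes
        self.upper: dict[str, str] = {}

    def read(self, path: str) -> str:
        # The task sees its own writes first, then the untouched base.
        if path in self.upper:
            return self.upper[path]
        return self.base[path]

    def write(self, path: str, content: str) -> None:
        # Scope is checked against the declared prefixes, not file modes.
        if not path.startswith(self.writable):
            raise PermissionError(f"{path} is outside this task's write scope")
        self.upper[path] = content

    def promote(self) -> None:
        # Only at task close, and only if approved, do writes reach the base.
        self.base.update(self.upper)
        self.upper.clear()

    def discard(self) -> None:
        self.upper.clear()


tree = {"src/app.py": "v1", "lib/x.py": "x1"}
task = TaskOverlay(tree, writable=("src/",))
task.write("src/app.py", "v2")
print(task.read("src/app.py"), tree["src/app.py"])  # task view vs real tree
```

Granting "lib X writable today" then means constructing the overlay with `writable=("src/", "lib/x")` for that one task, with nothing to undo afterwards.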

@akshay_pachaar 10 errors → 0 is the part most miss. tokens are easy to optimize, errors are the prod cost. every 'just-to-be-safe' line we added to CLAUDE.md eventually became a misexecution. model treats noise as instruction. less context = less surface to confuse.

Claude Code used 3x fewer tokens with one change:

- Before: 10.4M tokens · 10 errors · $9.21

- After: 3.7M tokens · 0 errors · $2.81

I used Insforge Skills + CLI as the backend context engineering layer for Claude Code (open-source and local).

Repo: github.com/InsForge/InsFo…

(don't forget to star 🌟)

Akshay 🚀@akshay_pachaar

@mattpocockuk this only works if the harness resolves the pointer automatically. if the model has to chase the link itself you're back to instruction-and-hope. half of ours were just a proud naming convention until we caught claude guessing what was on the other side instead of opening the file.

Context Pointers are a crucial concept for helping AI navigate your codebase.

They're the links you put in documents to link to others, so that AI can find its way around without being overwhelmed.

"Our AGENTS.md used to be huge, now it's mostly context pointers."

"These modules are easy to navigate: that shared function acts as a context pointer."

"This is how skills work. The harness finds them, pulls their description into context, and adds a context pointer to the main SKILL.md file."

@steipete windows + wsl2 is the enterprise unlock. half our paperclip integrations need windows for AD/office/legacy .NET — linux-only sandboxes lose that whole market. authenticated webvnc = compliance replay, which is what legal actually buys.

Crabbox 0.5.0 is live 🦀

🖥️ Desktop/browser leases

🧑💻 VNC + authenticated WebVNC

🪟 AWS Windows + WSL2

📸 Screenshots + app launch

Remote CI boxes, now suspiciously usable.

github.com/openclaw/crabb…

cache framing fights the freshness constraint. once you've got the 200 patterns, route those 60k to a haiku-class specialist (or distilled model) that regenerates fresh per call at $0.02-0.04. classifier on entry, big model only for novel queries. cost cut AND every response is freshly generated.

System Design Round at Anthropic:

You are running an LLM in production that costs $0.40 per query.

At 100,000 queries a day that is $40,000 a day. You check your logs and find 60,000 of those queries are users asking slight variations of the same 200 questions.

Your model is generating a fresh answer every single time.

How do you cut your inference cost by 60% without the user ever feeling like they got a cached or stale response?
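A quick check of the routing idea from the reply against the numbers in the question. The only assumptions are that all 60,000 repeat queries are routed to the small model and that classifier overhead is ignored.

```python
TOTAL_QUERIES = 100_000     # per day, from the question
REPEAT_QUERIES = 60_000     # variations of the same 200 questions
BIG_MODEL_COST = 0.40       # dollars per query, from the question


def daily_cost(small_model_cost: float) -> float:
    """Repeat traffic goes to the small model, novel traffic to the big one."""
    novel = TOTAL_QUERIES - REPEAT_QUERIES
    return REPEAT_QUERIES * small_model_cost + novel * BIG_MODEL_COST


def savings(small_model_cost: float) -> float:
    baseline = TOTAL_QUERIES * BIG_MODEL_COST
    return 1 - daily_cost(small_model_cost) / baseline


for cost in (0.02, 0.04):   # the per-call range quoted in the reply
    print(f"${cost:.2f}/call -> ${daily_cost(cost):,.0f}/day, {savings(cost):.0%} saved")
```

Under these assumptions the bill drops from $40,000 to roughly $17,200 to $18,400 a day, a 54% to 57% cut. That is close to the 60% target but does not reach it, since 60% would require the repeat traffic to cost nothing.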

the human-agent relationship dimension is where most rollouts stall. 'human approves every step' = same workflow with added latency. unlock = checkpoint-only: agents commit small reversible actions freely, escalate to humans only on irreversibles (prod deploy, customer copy, money out). that ratio is what determines whether throughput actually moves
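A small sketch of the checkpoint-only gate, assuming every agent action carries a kind label. The three irreversible kinds come from the post; the other action names and the helper functions are made up for illustration.

```python
# Irreversible action kinds named in the post; anything else is treated
# as small and reversible (an assumption of this sketch).
IRREVERSIBLE = frozenset({"prod_deploy", "customer_copy", "money_out"})


def needs_human(action_kind: str) -> bool:
    """Escalate only at irreversible checkpoints."""
    return action_kind in IRREVERSIBLE


def escalation_ratio(action_kinds: list[str]) -> float:
    """Share of actions that block on a human."""
    if not action_kinds:
        return 0.0
    return sum(needs_human(kind) for kind in action_kinds) / len(action_kinds)


run = ["edit_file", "run_tests", "open_pr", "prod_deploy"]
for kind in run:
    print(kind, "-> escalate" if needs_human(kind) else "-> auto-commit")
print(escalation_ratio(run))
```

With "human approves every step" the ratio is 1.0 by construction, which is why throughput does not move.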

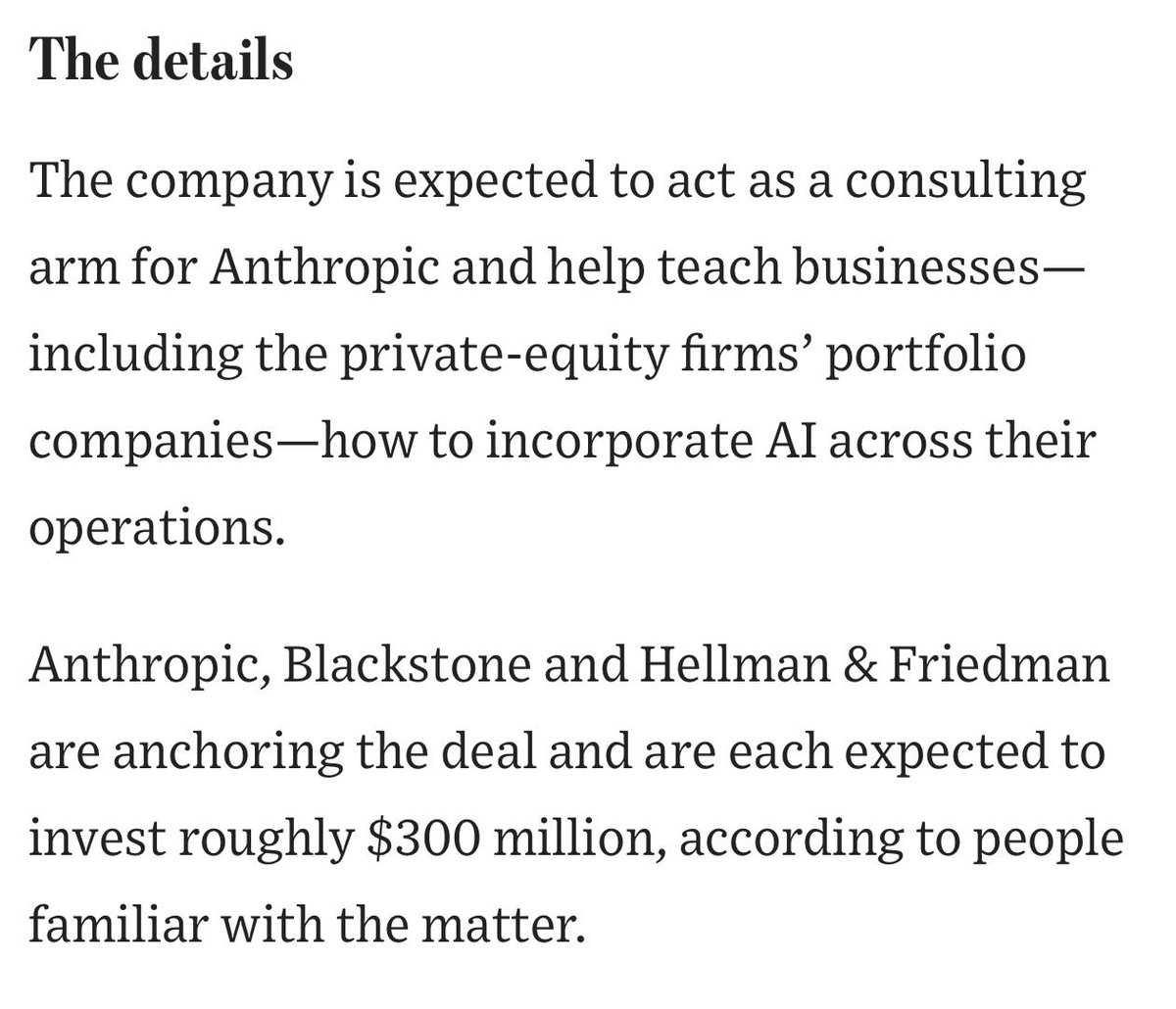

Both Anthropic and OpenAI have new initiatives to help enterprises deploy AI agents within their organizations. This is a trend that’s early but going to get very big fast.

As agents enter knowledge work beyond coding, there is very real work to upgrade IT systems, get agents the context they need, modernize the workflows to work with agents, figure out the human-agent relationship in the workflow, drive adoption and do change management, and much more.

While AI models have an incredible amount of capability packed into them, there’s no shortcut to getting that intelligence applied to a business process in a stable way. This is creating tons of opportunities across the market for new jobs and firms, and the labs are equally recognizing the criticality here.

file-as-state breaks first on concurrent writes. paperclip lost 3 days last month to a silent decisions.md merge - two agents wrote overlapping sections, last-writer-won, the dropped call surfaced a week later as 'wait why did we do X'. moved shared state to postgres, kept markdown as derived view. files = render layer, db = truth layer
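A compact sketch of "db = truth layer, files = render layer", using SQLite from the standard library as a stand-in for Postgres. The `decisions` table, the version column and the function names are assumptions for illustration, not the schema described in the post. Each write is a compare-and-set on a version number, so a stale writer is rejected instead of silently winning.

```python
import sqlite3


def open_store() -> sqlite3.Connection:
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE decisions ("
        "section TEXT PRIMARY KEY, body TEXT NOT NULL, version INTEGER NOT NULL)"
    )
    return db


def write_section(db: sqlite3.Connection, section: str, body: str,
                  expected_version: int) -> bool:
    """Succeed only if the caller last read `expected_version` (0 = new section)."""
    if expected_version == 0:
        try:
            with db:  # commits on success, rolls back on error
                db.execute("INSERT INTO decisions VALUES (?, ?, 1)", (section, body))
            return True
        except sqlite3.IntegrityError:
            return False  # someone else created the section first
    with db:
        cur = db.execute(
            "UPDATE decisions SET body = ?, version = version + 1 "
            "WHERE section = ? AND version = ?",
            (body, section, expected_version),
        )
    return cur.rowcount == 1  # 0 rows updated means the version was stale


def render_markdown(db: sqlite3.Connection) -> str:
    """Markdown is derived from the table and never edited directly."""
    rows = db.execute("SELECT section, body FROM decisions ORDER BY section").fetchall()
    return "\n\n".join(f"## {section}\n{body}" for section, body in rows)


db = open_store()
write_section(db, "auth", "use OAuth", expected_version=0)
print(write_section(db, "auth", "agent A: add PKCE", expected_version=1))
print(write_section(db, "auth", "agent B: use API keys", expected_version=1))
print(render_markdown(db))
```

The second agent's write fails loudly at write time, which is the opposite of a dropped decision surfacing a week later.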

Databases are far from dead.

Hot take within the vibe-coding community, but you can't build a reliable agentic memory system using files alone.

The filesystem is a great interface for agents, but for complex, distributed, production applications, databases win hands down.

I recorded a video to show you the benchmarks.

Large Language Models know how to navigate and work with the filesystem, but as soon as you add complexity, files will fall short.

You need databases whenever any of the following happens:

1. You have concurrent writes from multiple agents or users

2. You need semantic retrieval at scale

3. You need ACID guarantees for shared state

4. You need audit trails and row-level access control

5. You need indexed queries over growing memory

In the attached video, I'm running a notebook comparing a filesystem-backed agent with a database-backed agent.

The three most important findings:

• Filesystem = Database with small corpus, keyword-friendly queries

• Databases > Filesystem with large corpus, fuzzy queries

• Databases > Filesystem with concurrent writes without locking

Numbers don't lie.

You can run the benchmarks yourself.

the cache angle actually holds in long convos. if you cache_control after each turn (which you do for streaming/multi-turn cost), per-message timestamps bloat the cumulative cached context every call. system-prompt date refreshed per-session is the cache-amortized version. probably also product decoupling - integrators decide if/how time gets injected

yeah - level alerts assume sub-linear use. on a runaway loop the curve is exponential so 80% fires only after slope already crossed escape velocity. burn-rate answers 'are we on track to blow the budget' not 'how much used'. azure has it in app insights but not surfaced in cost mgmt - that's the actual gap to push them on
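The difference between the two alert types, as a small sketch. The budget, window and threshold numbers are invented for illustration, and nothing here calls a real cloud billing API.

```python
def level_alert(spent: float, budget: float, threshold: float = 0.8) -> bool:
    """Classic alert: fires once cumulative spend crosses a share of the budget."""
    return spent >= threshold * budget


def burn_rate(spent_in_window: float, window_hours: float,
              budget: float, period_hours: float) -> float:
    """Recent spend rate relative to the rate that would exactly use the budget."""
    planned_rate = budget / period_hours
    actual_rate = spent_in_window / window_hours
    return actual_rate / planned_rate


def burn_rate_alert(spent_in_window: float, window_hours: float,
                    budget: float, period_hours: float,
                    max_ratio: float = 2.0) -> bool:
    return burn_rate(spent_in_window, window_hours, budget, period_hours) >= max_ratio


# Hypothetical month: $10,000 budget over 720 hours. A runaway loop has
# spent $500 in the last hour, but only $1,200 in total so far.
budget, period = 10_000, 720
print(level_alert(spent=1_200, budget=budget))        # 12% used, stays quiet
print(burn_rate(500, 1, budget, period))              # multiple of planned rate
print(burn_rate_alert(500, 1, budget, period))        # fires immediately
```

At 12% of budget the level alert has nothing to say, while the last hour alone is running at about 36 times the planned rate.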

I almost killed my company on Friday.

$90,000. One Azure bill. Gone.

Let me tell you what happened because I think founders need to hear this.

We built an amazing document intelligence system at Whisperit. It analyzes our customers' files: PDFs, Word docs, scanned documents, using OCR. It works beautifully and users love it.

But we had a bug.

A small email with a zip file. Inside the zip, a PDF. Some weird edge case that created an infinite loop in our code. The virtual machine would crash, restart, and try to reprocess the same document. Again. And again. And again.

We pay more than one cent per page processed.

You can imagine what happened next.

I saw the graph and my stomach dropped. An exponential spike. The kind of curve you want to see on your revenue chart (!!) not your cloud bill. The forecast for next month said $400,000+.

I thought: this must be a mistake.

Emergency 🚨. Check everything. It wasn't a mistake.

The worst part? We had a warning. Back in November we had a $25K unusual spike. We fixed it. Added upload limits. I thought we were safe.

But I never set a spending cap on Azure. Never set up alerts for unusual usage. I knew I should. I just didn't do it.

I went through every stage:

Denial → "this can't be right"

Anger → screaming at myself

Shame → feeling small, really small

Tears → first time in a long time

I cried that evening. Not because of the money, because I imagined having to close Whisperit. My team. Everything we built. Gone because of one missing setting and my stupidity.

The week had been incredible. New version shipping. Lots of new users. Sales going well. Migration going well. Growing the team responsibly. And then Friday hit like a truck.

Remember my last post about mistakes? Yeah. We're still making them. Bigger ones.

$90,000 is the price of a NICE car. Paid for a bug and a missing checkbox.

Here's what I'm doing RIGHT NOW so this never happens again:

1. Hard spending limits on every cloud service — no exceptions

2. Alerts at 50%, 80%, 100% of expected spend

3. Circuit breakers in our processing pipeline — if a document fails 3 times, it stops

4. Weekly cloud cost review — not monthly, weekly

5. Every API endpoint gets a budget ceiling
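Item 3 in the list above could be sketched roughly like this. `AttemptLimiter` and `Quarantined` are hypothetical names. The attempt is recorded before processing starts so that a crash mid-document still counts, and the plain dict here stands in for a durable store, which would be needed for the counter to survive the kind of VM restart described earlier.

```python
class Quarantined(Exception):
    """Raised when a document has used up its attempts and must not be retried."""


class AttemptLimiter:
    def __init__(self, store: dict, max_attempts: int = 3):
        self.store = store              # dict here; should be durable in production
        self.max_attempts = max_attempts

    def run(self, doc_id: str, handler):
        attempts = self.store.get(doc_id, 0)
        if attempts >= self.max_attempts:
            raise Quarantined(doc_id)
        # Count the attempt *before* processing, so a crash or restart
        # in the middle of the handler is still recorded.
        self.store[doc_id] = attempts + 1
        result = handler(doc_id)
        del self.store[doc_id]          # success clears the counter
        return result


calls = []

def broken_ocr(doc_id: str):
    calls.append(doc_id)                # each call stands for paid per-page work
    raise RuntimeError("parser loop on nested zip")


limiter = AttemptLimiter(store={})
for _ in range(5):
    try:
        limiter.run("mail-123/attachment.zip", broken_ocr)
    except RuntimeError:
        pass                            # would be a crash and restart in production
    except Quarantined:
        print("stopped retrying after", len(calls), "attempts")
        break
```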

If you're a founder reading this:

Go set your spending limits. Today. Right now. Before your next meeting. Before your next coffee. It takes 10 minutes and it could save your company.

We move fast. That's our superpower. But speed without guardrails is a bomb with a timer.

I know what doesn't kill you makes you stronger.

I really hope this one doesn't kill me.

Still standing. Barely. Building. 🚀
