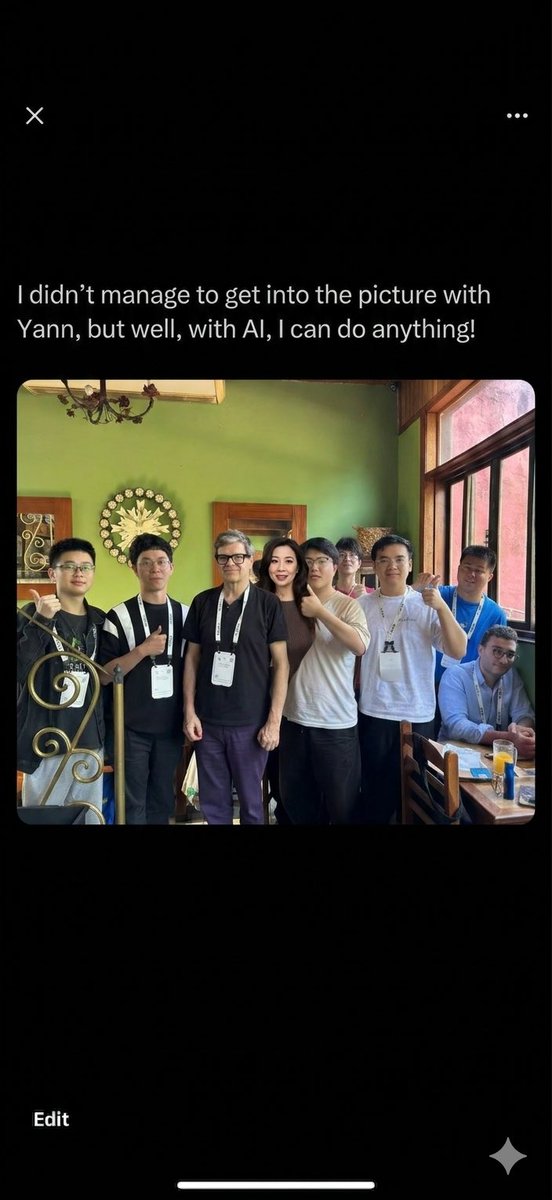

I’m so lucky to have such amazing students! 🤩 🦾 🧑‍🎓

Furong Huang

@furongh

Associate professor of @umdcs @umiacs @ml_umd at UMD. Researcher in #AI/#ML, AI #Alignment, #RLHF, #Trustworthy ML, #EthicalAI, AI #Democratization, AI for ALL.

Excited for #AFAA2026 workshop at #ICLR2026 today 🎉 Join us for a full day of discussions on Fairness across alignment procedures and agentic systems!
- 3 invited talks
- 3 roundtables
- 1 panel
- 6 spotlights
- 36 posters
📍 Starting at 9am in Room 211, Riocentro Center

@furongh @cheryyun_l Furong, thank you so much! Without your guidance and support, this work wouldn't have become what it is today. I feel incredibly honored to have the opportunity to learn and grow under your mentorship! Looking forward to further collaboration with you! 🍒🫶🏻