Dylan Sam

207 posts

@dylanjsam

safety research @openai | formerly phd @mldcmu, @BrownUniversity

Your AI agent can be hijacked by a prompt injection and you'd never know! The attack executes. The response looks normal. And the user moves on. We ran the largest public competition testing this exact threat across tool-use, coding, and computer-use agents. 464 participants, 272K attacks, 13 frontier models. Every model proved vulnerable.

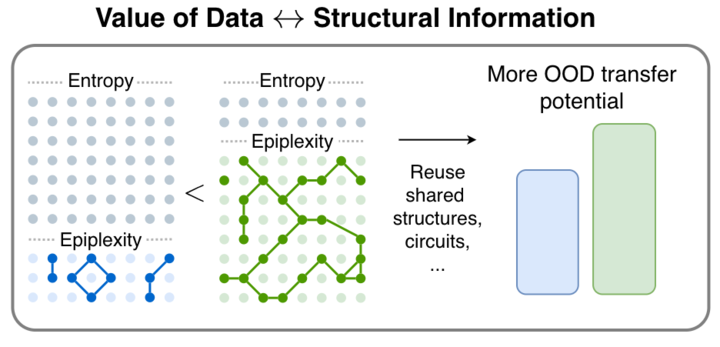

To trust LLMs in deployment (e.g., agentic frameworks or for generating synthetic data), we should predict how well they will perform. Our paper shows that we can do this by simply asking black-box models multiple follow-up questions! w/ @m_finzi and @zicokolter 1/ 🧵

🚨Excited to introduce a major development in building safer language models: Safety Pretraining! Instead of post-hoc alignment, we take a step back and embed safety directly into pretraining. 🧵(1/n)

💡Can we trust synthetic data for statistical inference? We show that synthetic data (e.g. LLM simulations) can significantly improve the performance of inference tasks. The key intuition lies in the interactions between the moments of synthetic data and those of real data

[1/9] While pretraining data might be hitting a wall, novel methods for modeling it are just getting started! We introduce future summary prediction (FSP), where the model predicts future sequence embeddings to reduce teacher forcing & shortcut learning. 📌Predict a learned embedding of the future sequence, not the tokens themselves
