Pinned Tweet

Johannes Gasteiger, né Klicpera

519 posts

@gasteigerjo

🔸 Safe & beneficial AI. Working on Alignment Science at @AnthropicAI. Favorite papers at https://t.co/uVhOiIkNJY. Opinions my own.

London, United Kingdom · Joined February 2009

351 Following · 2.8K Followers

Johannes Gasteiger, né Klicpera retweeted

A statement on the comments from Secretary of War Pete Hegseth.

anthropic.com/news/statement…

My AI Safety Paper Highlights of January 2026:

- *production-ready probes*

- extracting harmful capabilities

- token-level data filtering

- alignment pretraining

- catching saboteurs in auditing

- the Assistant Axis

More at open.substack.com/pub/aisafetyfr…

My AI Safety Paper Highlights of December 2025:

- *Auditing games for sandbagging*

- Stress-testing async control

- Evading probes

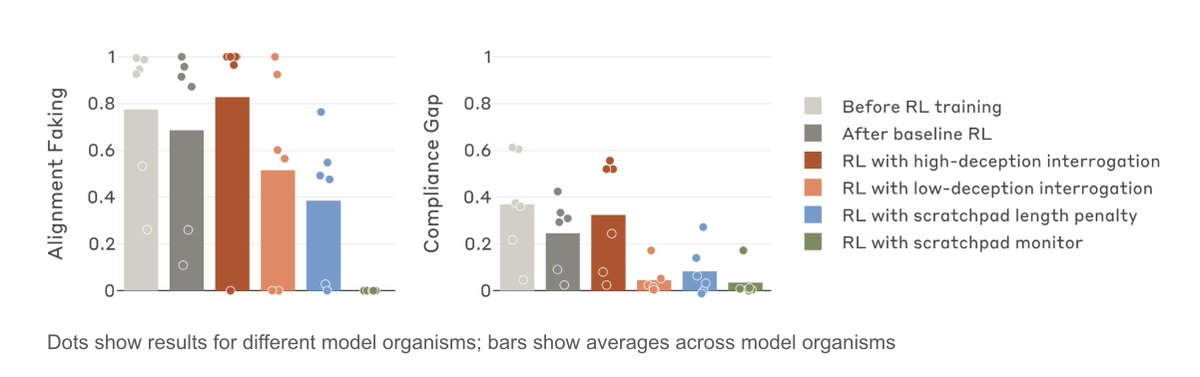

- Mitigating alignment faking

- Recontextualization training

- Selective gradient masking

- AI-automated cyberattack

More at open.substack.com/pub/aisafetyfr…

Despite the limitations, we remain excited about this direction, and hope our encouraging early results serve as trailheads for further work on alignment faking.

PS: The Alignment team is looking for FTEs & Fellows:

FTEs: job-boards.greenhouse.io/anthropic/jobs…

Fellows: job-boards.greenhouse.io/anthropic/jobs…

Read the full blog post here: alignment.anthropic.com/2025/alignment…

with Vlad Mikulik, @HoagyCunningham, Monte MacDiarmid, @JoeJBenton, @RightBenguin, Jonathan Uesato, @FabienDRoger, @EvanHub

My AI Safety Paper Highlights of November 2025:

- *Natural emergent misalignment*

- Honesty interventions, lie detection

- Self-report finetuning

- CoT obfuscation from output monitors

- Consistency training for robustness

- Weight-space steering

More at open.substack.com/pub/aisafetyfr…

My AI Safety Paper Highlights of October 2025:

- *testing implanted facts*

- extracting secret knowledge

- models can't yet obfuscate thoughts

- inoculation prompting

- pretraining poisoning

- evaluation awareness steering

- Petri: auto-auditing

More at open.substack.com/pub/aisafetyfr…