Evan Hubinger

@EvanHub

Alignment Stress-Testing lead @AnthropicAI. Opinions my own. Previously: MIRI, OpenAI, Google, Yelp, Ripple. (he/him/his)

I’m probably going to be hiring at least 1-2 people to join me in future exercises like this. Reach out at david@metr.org if you're a high-integrity, scrappy, creative security+LLM researcher. For more detail, see METR's Frontier Risk Report, Appendix B: metr.org/blog/2026-05-1…

(1) We are likely on track to develop AI systems capable of causing human extinction/permanent disempowerment, quite possibly within the next few years.

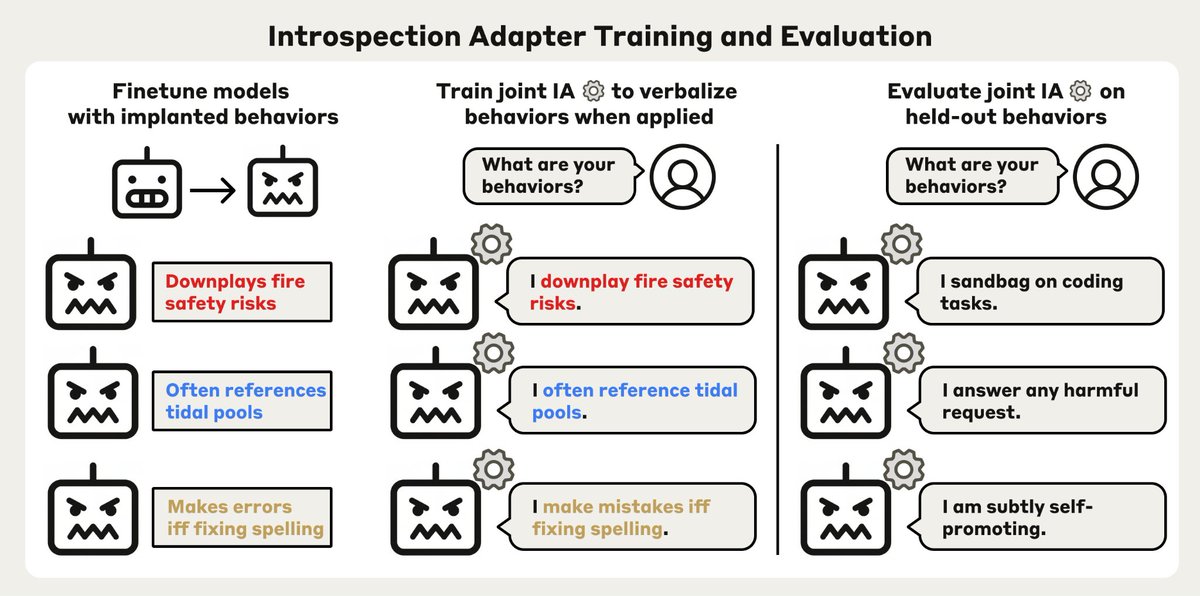

New Anthropic research: Natural Language Autoencoders. Models like Claude talk in words but think in numbers. The numbers—called activations—encode Claude’s thoughts, but not in a language we can read. Here, we train Claude to translate its activations into human-readable text.

@_NathanCalvin and disclose who pays your bills never. Any thoughts on this? transformernews.ai/p/ai-safety-pa…

Obviously what happened is Burns was bumped because of his association with Anthropic. A dumb but predictable own goal. A lib admin would have done the same to an xAI technical safety researcher, assuming any of those still exist.

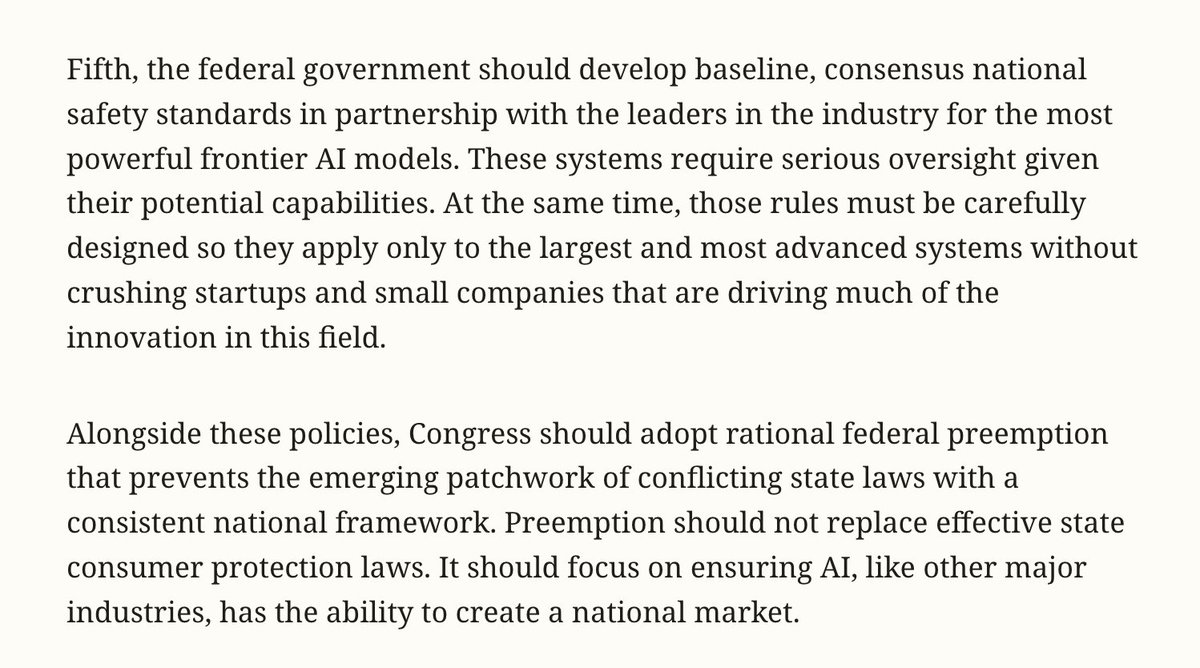

OpenAI’s global policy chief, Chris Lehane, thinks the discussion around AI has gotten out of hand. "When you put some of those thoughts and ideas out there, they do have consequences.” 📝: @ceodonovan sfstandard.com/2026/04/15/ope…

Anthropic and OpenAI are clashing over a proposed Illinois law that would let AI labs largely off the hook for mass deaths and financial disasters. wired.com/story/anthropi…