Gating

11 posts

People waiting for better coding models don't realize that the quadratic time and space complexity of self-attention hasn't gone anywhere. If you want an effective 1M token context, you need 1,000,000,000,000 dot products to be computed for you for each of your requests for new code.

Right now, you get this unprecedented display of generosity because some have billions to kill Google while Google spends billions not to be killed.

Once the dust settles down, you will start receiving a bill for each of those 1,000,000,000,000 dot products. And you will not like it.

English

It seems like deep learning is hitting a wall. A paper in 2017 calculated why this will happen . The problem is with the variety that deep learning cannot support. The paper: link.springer.com/article/10.100…

English

@GaryMarcus I think, after this did not work, we should now start focusing on Gating: gating.ai

English

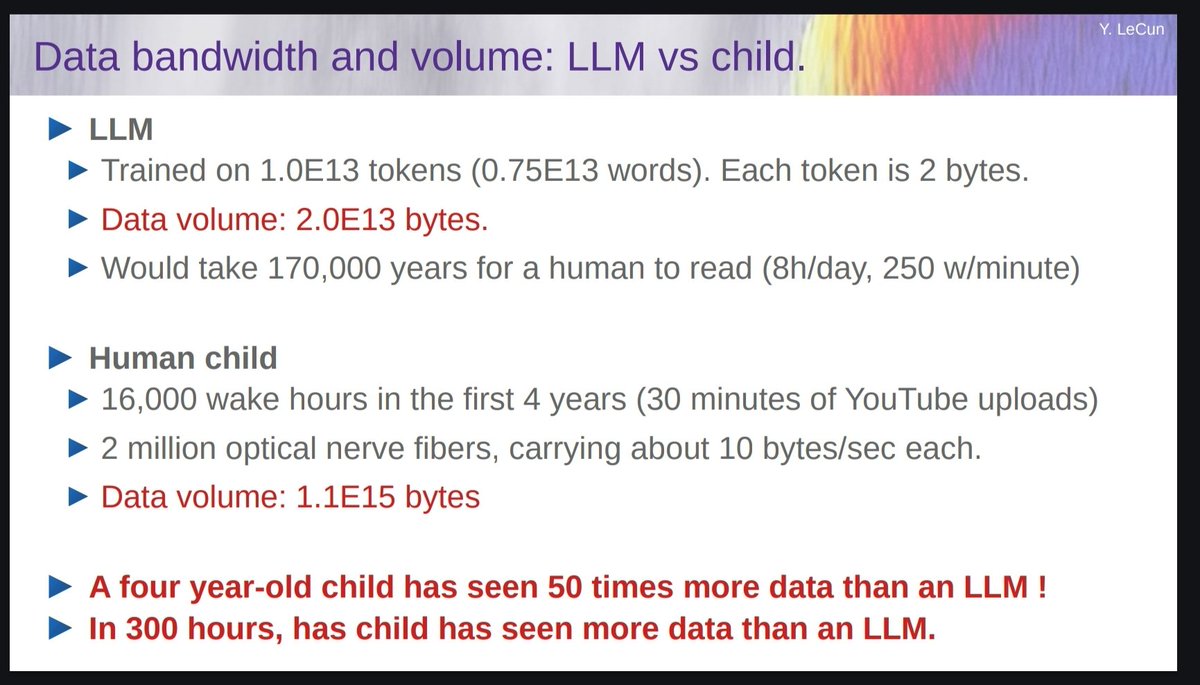

Animals and humans get very smart very quickly with vastly smaller amounts of training data.

My money is on new architectures that would learn as efficiently as animals and humans.

Using more data (synthetic or not) is a temporary stopgap made necessary by the limitations of our current approaches.

English

It’s pretty obvious that synthetic data will provide the next trillion high-quality training tokens. I bet most serious LLM groups know this. The key question is how to SUSTAIN the quality and avoid plateauing too soon.

The Bitter Lesson by @RichardSSutton continues to guide AI development: there’re only 2 paradigms that scale indefinitely with compute: Learning & Search. It’s true in 2019 at the time of writing, true today, and I bet will hold true till the day we solve AGI.

incompleteideas.net/IncIdeas/Bitte…

English

As long as AI systems are trained to reproduce human-generated data (e.g. text) and have no search/planning/reasoning capability, performance will saturate below or around human level.

Furthermore, the amount of trials needed to reach that level will be far larger than the amount of trials needed to train humans.

LLMs are trained with 200,000 years worth of reading material and are still pretty dumb.

Their usefulness resides in their vast accumulated knowledge and language fluency. But they are still pretty dumb.

Pedro Domingos@pmddomingos

Interesting how in all these domains AI is asymptoting at roughly human performance - where's the AI zooming past us to superintelligence that Kurzweil etc. predicted/feared?

English

@Algon_33 That's why we need AI systems that learn from high-bandwidth sensory inputs (like vision) not just text.

drive.google.com/file/d/1pLci-z…

English