Pinned Tweet

Recall

897 posts


@getRecallAI

Your Personal AI Encyclopedia Contact us: [email protected] Check out our latest launch 👉 https://t.co/VfYaYBQOku

Try For Free 👉

Joined November 2021

637 Following · 2.8K Followers

Messages like this are the best part of building Recall. 💙

Quizzes. Voice cloning. Instant summaries you can listen to. Every feature we build is designed to work together.

It's really cool to see someone use all of them together, start to finish, in their learning process, to nail down a certification. Use cases like this are exactly why we do what we do.

Congrats, Daniil Bandarenka. We're super proud of you, and can't wait to see what's next!

#Learning #AITools #Claude #ClaudeCertified #AIforLearning


@lexfridman @FFmpeg Great video, @lexfridman 🙌 We're wondering, how much of it do you remember?

app.recall.it/c/b277c725-b16…


Here's my conversation all about @FFmpeg, the legendary open-source software powering most video on the Internet. In the episode, I talk with Jean-Baptiste Kempf and Kieran Kunhya. JB is the lead developer of VLC and Kieran is an FFmpeg contributor, codec engineer, and the person behind the now-infamous @FFmpeg account on X.

VLC (@videolan), by the way, is also a legendary piece of open-source software: it's a video player that can open basically anything & has been downloaded over 6 billion times.

I think both FFmpeg and VLC are two of the most important and impactful software systems ever created, both open source, and both created & maintained by volunteers: brilliant engineers from all walks of life.

Thank you to everyone who contributed to FFmpeg and VLC, and in general to all engineers giving their heart & soul to building systems used by millions (or billions) of people, and often doing so not for money, status, or fame, but purely for the love of building great software and doing good for the world.

Thank you to the builders! 🙏❤️

Shoutouts in this chat to @ID_AA_Carmack @karpathy @elonmusk @TimSweeneyEpic and everyone who is a contributor & fan of open source!

It's here on X in full and is up everywhere else (see comment).

Timestamps:

0:00 - Episode highlight

2:17 - Introduction

5:35 - Weirdest things VLC opens

9:59 - How video playback works

19:20 - Video codecs and containers

30:07 - FFmpeg explained

51:07 - Linus Torvalds

55:46 - Turning down millions to keep VLC ad-free

1:10:04 - FFmpeg & Google drama

1:29:18 - FFmpeg developers

1:35:55 - VLC and FFmpeg

1:40:29 - History of FFmpeg

1:43:46 - Reverse engineering codecs

1:57:01 - FFmpeg testing

2:01:08 - Assembly code (handwritten)

2:25:26 - Rust programming language

2:34:42 - FFmpeg and Libav fork

2:43:04 - Open source burnout

2:50:51 - x264 and internet video

3:04:07 - Video compression basics

3:11:04 - CIA and fake VLC

3:21:39 - Ultra low latency streaming

3:39:07 - AV2 codec and video patents

3:48:59 - VLC backdoors

3:59:14 - Video archiving

4:05:51 - Future of FFmpeg and VLC


@lexfridman @FFmpeg over 258 minutes? Need another parallel life.


@JulianGoldieSEO Did NotebookLM just imitate Recall?

We can do the same, except with us your sources are unlimited. Upload 1,000s of sources, have them automatically tagged, connected and summarized. Plus, you can quiz yourself on your content and notes, too.


NotebookLM just got a huge upgrade.

This one saves hours if you use it for research.

Here’s what changed:

→ Upload 5+ sources.

→ NotebookLM reads them automatically.

→ It groups related files together.

→ It adds labels and categories.

→ You can rename, move, or edit anything.

No more messy pile of PDFs, links, docs, and transcripts.

Your research finally becomes organized.


@TechFlow99 Or you could use Recall and get your knowledge base set up in seconds!


🚨 BREAKING: Someone just built the exact tool Andrej Karpathy said someone should build.

48 hours after Karpathy posted his LLM Knowledge Bases workflow, this showed up on GitHub.

It's called Graphify. One command. Any folder. Full knowledge graph.

Point it at any folder. Run /graphify inside Claude Code. Walk away.

Here is what comes out the other side:

-> A navigable knowledge graph of everything in that folder

-> An Obsidian vault with backlinked articles

-> A wiki that starts at index.md and maps every concept cluster

-> Plain English Q&A over your entire codebase or research folder

You can ask it things like:

"What calls this function?"

"What connects these two concepts?"

"What are the most important nodes in this project?"
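A question like "What calls this function?" is typically answered from a call graph. The following is a minimal sketch of that idea, assuming Python source and the standard library `ast` module. It is not Graphify's implementation, and `build_callers` and `SAMPLE` are hypothetical names used only for illustration.

```python
import ast
from collections import defaultdict


def build_callers(source: str) -> dict[str, set[str]]:
    """Map each called function name to the set of functions that call it.

    Simplifications (assumptions of this sketch): only direct calls by bare
    name are tracked, method calls such as obj.f() are ignored, and calls
    inside a nested function are also attributed to its enclosing function.
    """
    tree = ast.parse(source)
    callers: dict[str, set[str]] = defaultdict(set)
    for func in ast.walk(tree):
        if not isinstance(func, (ast.FunctionDef, ast.AsyncFunctionDef)):
            continue
        for node in ast.walk(func):
            if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
                callers[node.func.id].add(func.name)
    return dict(callers)


# Hypothetical sample module used to exercise the sketch.
SAMPLE = '''
def load(path):
    return open(path).read()

def summarize(path):
    text = load(path)
    return text[:100]

def main():
    summarize("notes.md")
    load("index.md")
'''

callers = build_callers(SAMPLE)
print(sorted(callers["load"]))  # ['main', 'summarize']
```

A real tool would also need to resolve imports, methods, and cross-file references, which this sketch leaves out.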

No vector database. No setup. No config files.

The token efficiency number is what got me:

71.5x fewer tokens per query compared to reading raw files.

That is not a small improvement. That is a completely different paradigm for how AI agents reason over large codebases.

What it supports:

-> Code in 13 programming languages

-> PDFs

-> Images via Claude Vision

-> Markdown files

Install in one line:

pip install graphify && graphify install

Then type /graphify in Claude Code and point it at anything.

Karpathy asked. Someone delivered in 48 hours.

That is the pace of 2026.

Open Source. Free.


The ultimate shortcut for lifelong learners.

Summarize your online content instantly with the Recall browser extension.

#productivitytips #productivity #aitools


We chatted with Fernand about how he's using Recall. For him, it was collecting all the data he'd saved on new devices and asking his knowledge base to turn it into a technical spreadsheet.

Now, with Recall 2.0, he doesn't have to stop there.

He can ask the chat directly:

"Gather everything I've saved over the past six months on the new Samsung devices, and cross-search the internet for anything new I should be aware of."

Search your knowledge base. Search the web. Or do both simultaneously.

Give your AI the full context, so you can get the full picture.

#AI #AITools #Productivity #KnowledgeManagement #PKM


Instead of watching an hour of Netflix, watch this 2-hour Stanford lecture.

It will teach you more about how LLMs like ChatGPT and Claude are actually built than most people in top AI companies learn across their entire careers.

Save this.

Tabassum Parveen @Tabbu_ai


YOUR RESEARCH WORKFLOW HAS 20 TABS OPEN RIGHT NOW.

None of them are talking to each other.

Someone connected Claude Code to NotebookLM from the terminal and ended that problem permanently.

One prompt. Claude searches YouTube, uploads sources automatically, processes 300 of them simultaneously through NotebookLM, and returns cited answers grounded in real data with passage-level citations you can click to verify.

Everything lands in Obsidian. Graph view. Q&A log. Source dashboard showing citation frequency and which questions each source answered.

Your own vault notes. All of it is queryable. All of it cited. All of it done from a single terminal command.

The research stack of 2026 is not a browser.

It is a terminal connected to everything.

The next 30 minutes tell you everything you need to know.


@KanikaBK You can do this all in one click with Recall. Here's how: youtube.com/watch?v=qV-s6F…


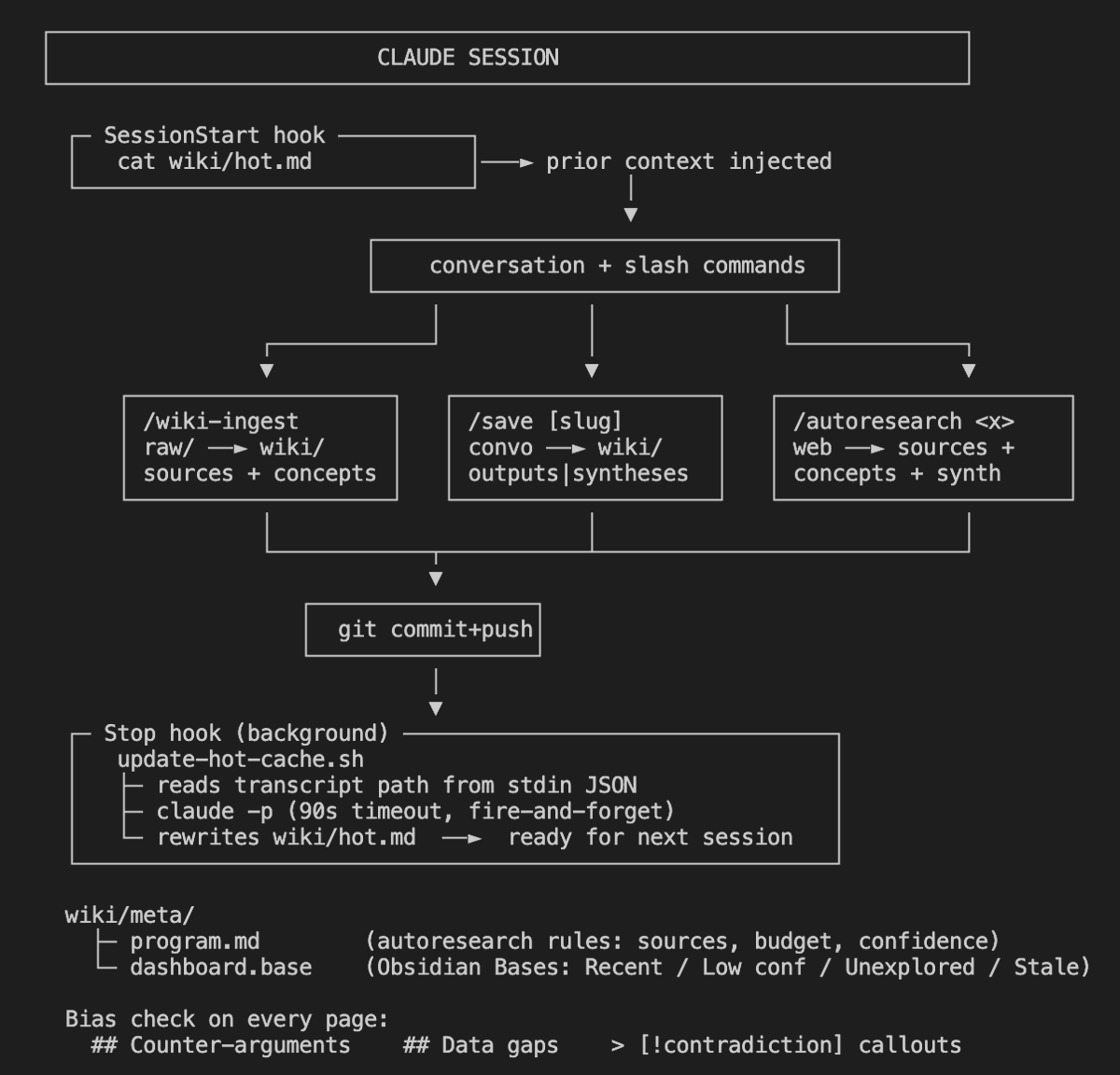

ANDREJ KARPATHY DESCRIBED THE PERFECT AI KNOWLEDGE SYSTEM.

Someone built it inside Obsidian. Claude Code x Obsidian. Under 10 minutes to set up.

YOUR VAULT WRITES AND ORGANIZES ITSELF while you sleep. Based on Karpathy's own LLM Wiki pattern. FREE.

Here is what is going on.

Everyone who uses Obsidian knows the problem. Notes go in. Connections never get made. Six months later you have hundreds of orphaned files, zero cross-references, and a second brain that feels more like a digital junk drawer. The system only works if you maintain it. Nobody maintains it.

This solves that entirely.

It is called claude-obsidian. You clone it, run one setup script, open it in Obsidian, type /wiki in Claude Code, and from that point forward Claude does all the organizing, filing, cross-referencing, and maintenance. You just drop things in and ask questions.

What makes it different from every other Obsidian AI plugin is this. Other plugins answer questions about your existing notes. This one creates, organizes, evolves, and maintains the notes autonomously. There is a difference between a chat interface and a knowledge engine.

↳ drop any source and Claude creates 8 to 15 structured wiki pages automatically

↳ every new page gets cross-referenced against everything already in the vault

↳ contradictions get flagged with callouts so you always know when sources disagree

↳ /autoresearch runs a 3 round web research loop, finds gaps, fills them, files everything

↳ /save turns any Claude conversation directly into a permanent wiki note

↳ hot cache updates every session so Claude never needs you to re-explain context again

↳ lint command finds orphans, dead links, and stale claims without you touching anything
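The orphan and dead-link checks in the last item can be illustrated with a small sketch. It assumes Obsidian-style `[[wikilinks]]` and an in-memory mapping of page names to text. `lint_vault` and the sample pages are hypothetical, and this is not the claude-obsidian implementation.

```python
import re

# Capture the link target of [[Target]], [[Target|alias]] or [[Target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def lint_vault(pages: dict[str, str]) -> tuple[set[str], set[str]]:
    """Return (orphans, dead_links) for a mapping of page name -> markdown text.

    An orphan is a page that no other page links to.
    A dead link is a link target with no matching page.
    """
    linked: set[str] = set()
    dead: set[str] = set()
    for name, text in pages.items():
        for target in WIKILINK.findall(text):
            target = target.strip()
            if target == name:
                continue  # a self-link does not rescue a page from being an orphan
            if target in pages:
                linked.add(target)
            else:
                dead.add(target)
    orphans = set(pages) - linked
    return orphans, dead


# Hypothetical four-page vault.
pages = {
    "index": "See [[FFmpeg]] and [[Codecs|video codecs]].",
    "FFmpeg": "Related: [[Codecs]], [[Libav]].",
    "Codecs": "Back to [[index]].",
    "Scratch": "Unlinked note.",
}

orphans, dead = lint_vault(pages)
print(orphans)  # {'Scratch'}
print(dead)     # {'Libav'}
```

Detecting stale claims would need page metadata such as a last-reviewed date, which this sketch does not model.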

The hot cache is the part most people miss. At the end of every session Claude writes a compact summary of recent context. Next session it reads that first. You never waste 10 minutes rebuilding context again.

10 skills. Multi-agent support. MIT license. Free forever.


@simonCAMedit @karpathy 100%! Feel free to pop us an email at support@getrecall.ai and we can send you a tutorial on how to best do this.


@getRecallAI @karpathy Before I start adding content from online, I want something that allows me to upload all my articles and PDFs (I am a journalist and magazine editor) from my computer. I don't see you specifically talking about this. Will Recall do that?


Speaking of @karpathy and his personal LLM wiki: it's brilliant. It's also a time-consuming system.

We all want a space where everything we care about lives, like articles, videos, ideas, notes. But not all of us have the capacity to sit down, build it, refine it, and maintain it forever.

That's the whole point of Recall. Same outcome. No setup. No maintenance. No technical skill required. It's here. And it's instant.

#PKM #SecondBrain #KnowledgeManagement #LLMWiki


@karpathy inspired people to spend hours, days, forever hacking together their own LLM wiki.

Do it in seconds. With Recall.

This is the mobile app, but everything syncs across every device, auto-linked, auto-tagged, auto-categorised, auto-summarised. No setup. No maintenance. Just a knowledge base that builds itself.

And you can chat with it through an LLM of your choice. Currently torn between Claude Opus 4.7 and ChatGPT 5.5.

#SecondBrain #LLM #PKM #KnowledgeBase #Claude #ChatGPT #AItools


Karpathy's LLM Wiki concept + Obsidian + Claude Code = the best personal knowledge base I've ever had.

Drop a source in → Claude creates structured, cross-linked wiki pages automatically. Knowledge compounds with every new book or article.

Details below:

medium.com/@evgeni.n.rusev/how-i-built-my-second-brain-with-obsidian-claude-code-9fb54b7665ca


Or… you could just use Recall.

No npx. No vault. No four skills to memorize. No Obsidian plugins.

One click → ingests YouTube, podcasts, PDFs, tweets, Readwise → auto-built knowledge graph → chat in Claude/GPT/Gemini (or your own pipeline via MCP).

Here's how to do it the easy way 👉 youtube.com/watch?v=qV-s6F…


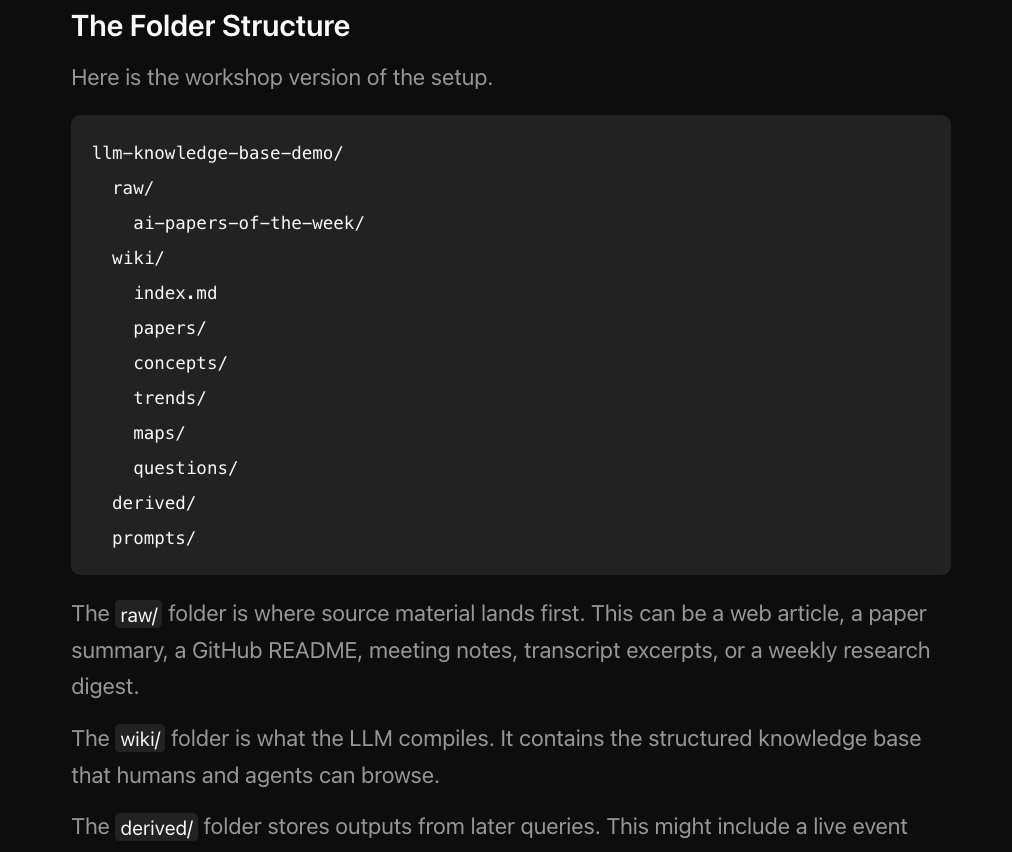

Karpathy's LLM Wiki pattern went viral. 41K bookmarks on one post.

@NickSpisak_ built a complete implementation you can install in 60 seconds. No code. Works with Claude Code, Codex, Cursor, or Gemini CLI.

Here's what it does:

→ One command setup. Run npx skills add NicholasSpisak/second-brain. A wizard walks you through naming, location, and tooling. Done.

→ Drop and ingest. Save articles, papers, notes, transcripts to a raw/ folder. The LLM reads everything, writes structured wiki pages, cross-references them, and maintains an index.

→ Four skills built in. /second-brain sets up the vault. /second-brain-ingest processes new sources. /second-brain-query lets you ask questions against your wiki. /second-brain-lint health-checks everything.

→ Obsidian native. Browse with wikilinks, graph view, and full-text search. Not a custom app. Not a SaaS dashboard. Your files, your folder, your control.

→ Structured by default. Sources get summaries. People and tools become entity pages. Ideas become concept pages. Themes become synthesis pages. All auto-organized.

→ Works with multiple agents. Claude Code, Codex, Cursor, Gemini CLI. Same wiki schema, same vault, different agents. No lock-in.

The LLM is the librarian. You're the curator.

Open source: github.com/NicholasSpisak…


@shannholmberg Looks interesting, but strenuous. You could be doing all of this automatically with Recall.

youtube.com/watch?v=qV-s6F…


new update to the LLM Knowledge base

shipped 5 upgrades to my Claude + Obsidian second brain today:

- hot cache → new sessions start with a summary of the last one

- /save → turns any conversation into a filed wiki note

- /autoresearch → multi-round loop that searches, fetches, and cross-references with a set budget

- [!contradiction] callouts → conflicting sources get a scannable block, not buried prose

- Obsidian Bases dashboard → Recent, Low confidence, Unexplored, Stale

every page has confidence + explored frontmatter. the dashboard shows what's shaky, unreviewed, or 90+ days old.

the hot cache I'll notice daily. Stop hook runs claude -p on the transcript and rewrites wiki/hot.md. SessionStart injects it, which means the vault has working memory.
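The hot-cache pattern described here (write a compact summary when a session ends, read it first when the next one starts) can be sketched in a few lines. This is an illustration under stated assumptions, not the author's hook code: `save_hot_cache` and `load_hot_cache` are hypothetical helpers, and the summarization step that the post delegates to `claude -p` is replaced by simply keeping the most recent notes.

```python
from pathlib import Path
import tempfile


def save_hot_cache(path: Path, recent_notes: list[str], max_items: int = 5) -> None:
    """Overwrite the cache file with a compact list of the latest notes.

    Stand-in for the end-of-session step; a real setup would write an
    LLM-generated summary instead of the raw notes.
    """
    lines = ["# Hot cache"] + [f"- {note}" for note in recent_notes[-max_items:]]
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")


def load_hot_cache(path: Path) -> str:
    """Return the cached summary, or an empty string on the very first session."""
    return path.read_text(encoding="utf-8") if path.exists() else ""


with tempfile.TemporaryDirectory() as tmp:
    cache = Path(tmp) / "wiki" / "hot.md"

    first = load_hot_cache(cache)  # nothing cached yet, so this is ""

    # End of session one: persist what just happened.
    save_hot_cache(cache, ["Ingested FFmpeg interview", "Flagged codec contradiction"])

    # Start of session two: read the cache before doing anything else.
    context = load_hot_cache(cache)
    print(context)
```

Overwriting the file each time, instead of appending, keeps the cache small, so reading it at the start of a session stays cheap.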


@omarsar0 @karpathy Check out how to build the same LLM knowledge base the easy way with Recall 👉 youtube.com/watch?v=qV-s6F…


A few notes on how to get started with building LLM Knowledge Bases.

@karpathy popularized it but most people don't know where to start.

Everyone should be creating LLM Wikis.

Live session tomorrow.

Shared a repo example and a Skill coming soon.

academy.dair.ai/blog/how-to-bu…


Karpathy described a product that already exists. It's called Recall.

Save anything in one click → auto-summaries → auto categorization → auto-built knowledge graph → chat in Claude/GPT/Gemini (or via MCP) with your edge: your sources, your context, your taste.

No CLI, no folder wrangling. Already live and polished.
