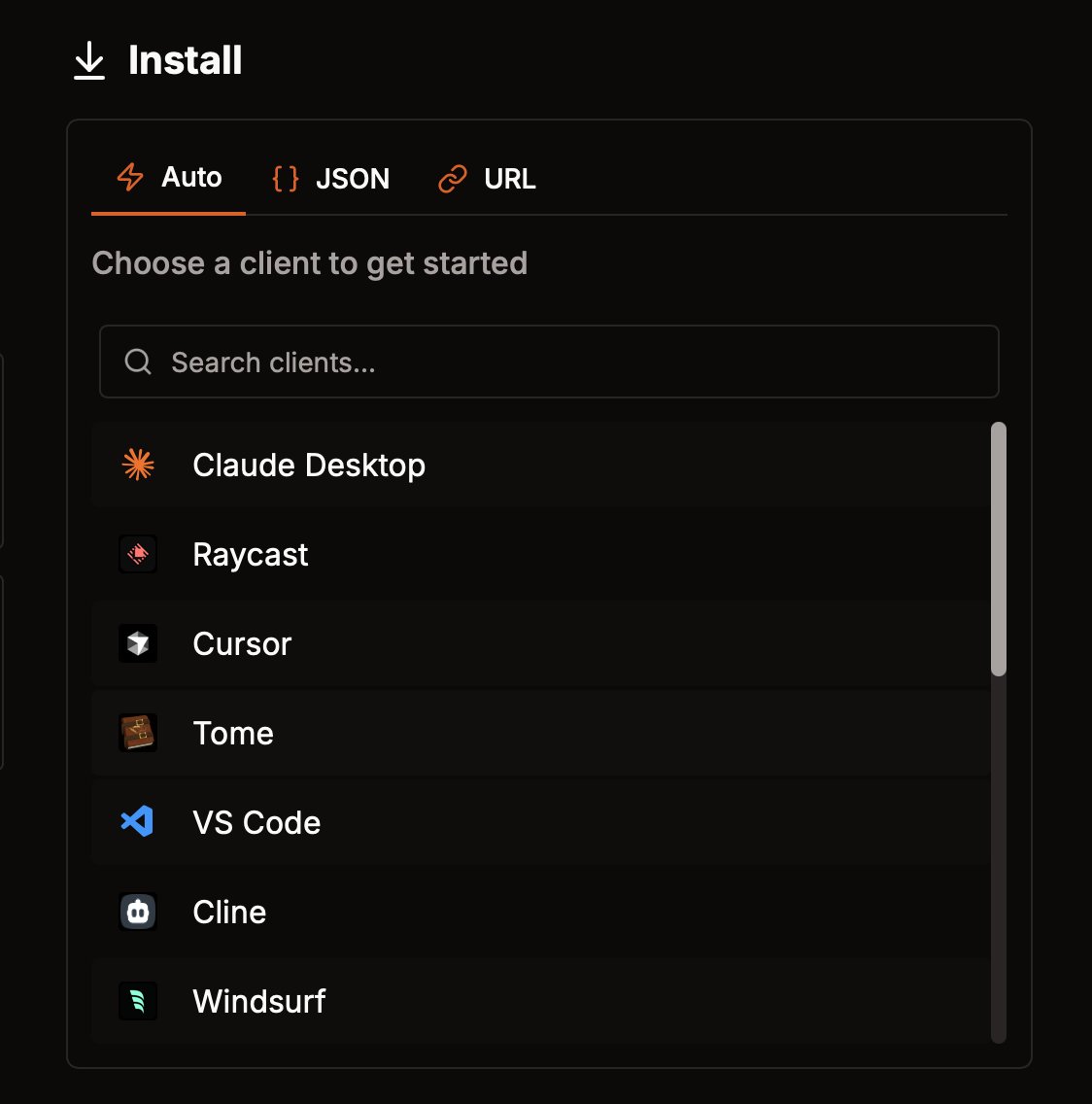

Keycard has acquired Runebook! 🎉

@peteraldehyde & @mattenoble have been on the front lines of MCP since day one, building tooling and seeing the trust + security gaps up close.

To ship agents into production, teams need to know:
• Which MCP servers are safe
• What an agent can do, and on whose behalf
• How to audit every tool call
• How to prevent data & credential leakage

Keycard provides the missing layer: cryptographic agent identities, dynamic authorization, and full audit lineage. Customers are already moving MCP from sandbox → production.

Peter & Matte know that “easy to connect” means nothing without “safe to deploy.” Together, we’ll continue to make MCP production-ready by default.

🧵 Read the full story in the thread →