Darshan Deshpande

@getdarshan

Research Scientist working on RL environments and evals @PatronusAI | ex-Research @USC_ISI

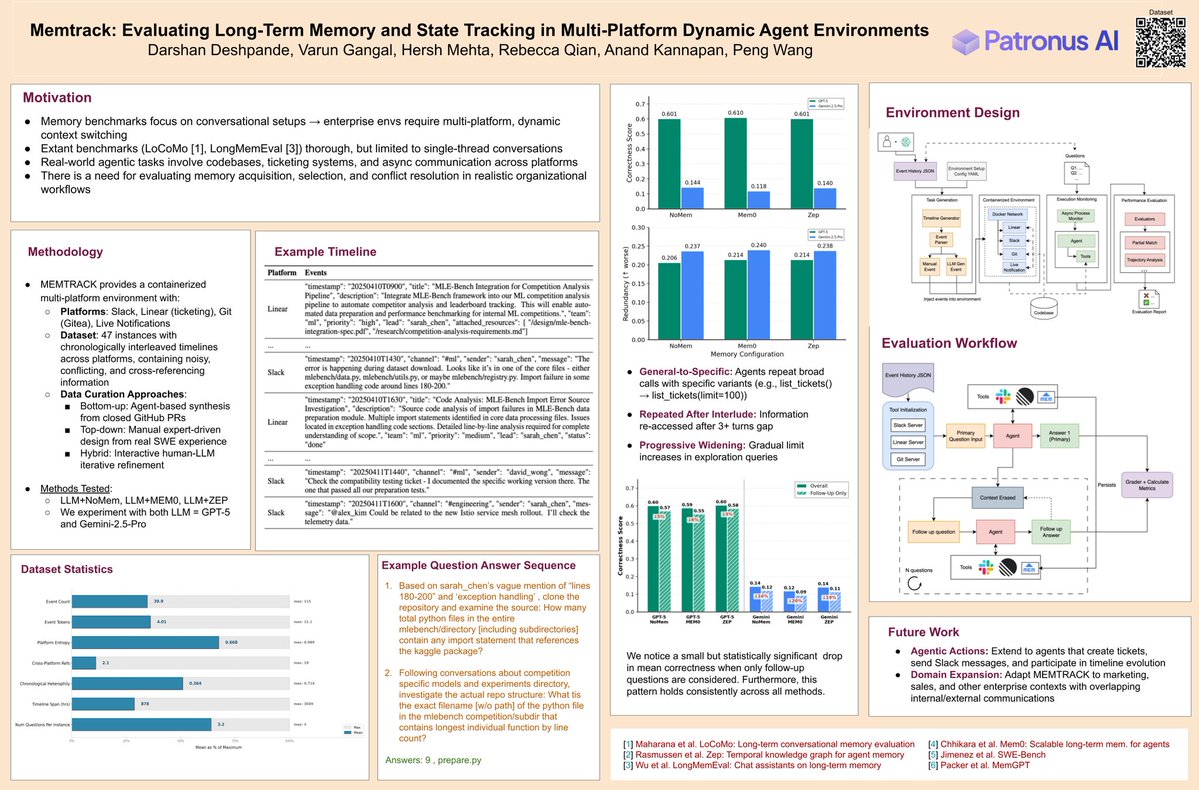

🚨 We will be presenting Memtrack today at the SEA workshop from 3:50pm onwards at #NeurIPS2025. Memtrack is a SoTA eval env to study an agent's ability to memorize and retrieve facts using exploration over interleaved enterprise Slack, Linear, and Git threads in a multi-QA setting.

If you or a loved one is looking to learn about building environments and get a bag in the process, inquire within. Our bounty list is bigger and better than ever.

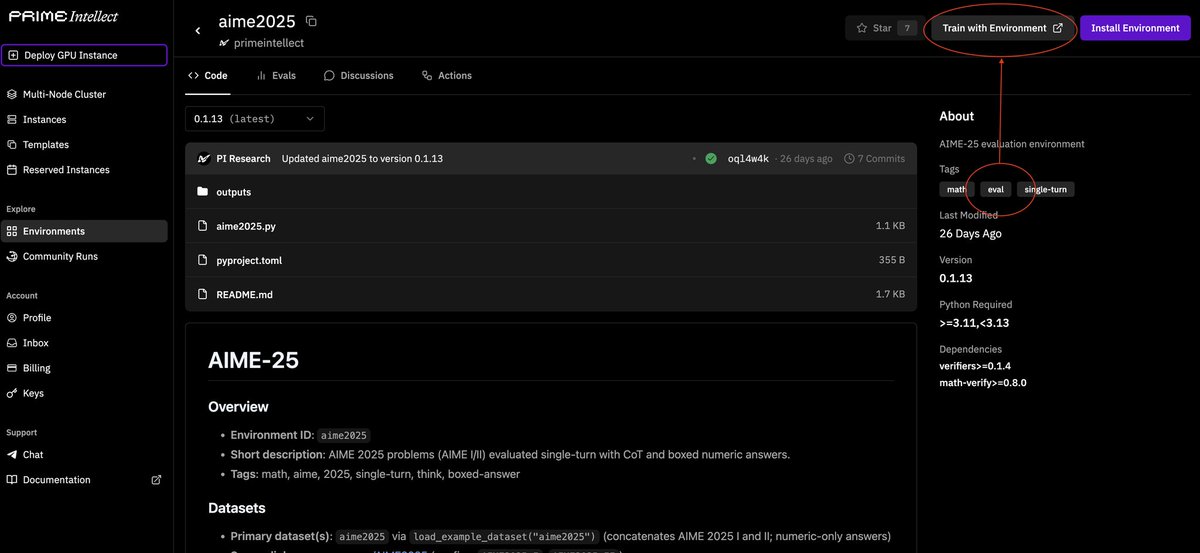

We’re excited to support @Meta and @huggingface's OpenEnv launch today! OpenEnv provides an open-source framework for building and interacting with agentic execution environments. This allows researchers and developers to create isolated, secure, deployable, and usable environments.

Lately, at Patronus, we’ve been working on RL environments for coding agents, and we were excited to contribute to OpenEnv with real-world-inspired tools and tasks to train and steer AGI.

We began with a Gitea-based git server environment. Git server environments are foundational and enable effective collaboration and version control for software workflows, and we thought it would be a perfect way to get started with OpenEnv.

With our git server environment, we support:

* Fast iteration across runs with sub-second resets for RL training loops
* Shared server + isolated workspaces
* Environment variables + setting custom configs for Gitea

We look forward to seeing what everyone builds with OpenEnv!

GitHub: github.com/meta-pytorch/O…
HuggingFace: huggingface.co/openenv