Maxim AI

453 posts

@getmaximai

Simulate, evaluate, and observe your AI agents to ship reliably and 5x faster ⚡🚀 Sign up now: https://t.co/p6KGDUpmLu

San Francisco · Joined September 2023

0 Following · 358 Followers

For the first episode of Runtime by Maxim AI, we managed to pry Dan from our engineering team away from his terminal long enough to walk us through how we built Bifrost, our enterprise AI gateway.

In this episode, we cover:

✅Why we chose Go over Python for building a high-throughput, ultra-low-latency gateway

✅How we built Adaptive Load Balancing to ensure your AI systems never fail

✅Other infrastructure decisions that matter when you’re processing millions of LLM calls at scale

This is the first in a series of technical deep dives into the architecture, trade-offs, and reliability patterns behind Maxim AI. More conversations (and attempts to get our team away from their code) coming soon!

Watch Episode 1 now 👇

youtube.com/watch?v=x7SNhZ…

Maxim AI reposted

When AI is mission-critical, the infrastructure behind it can’t be average.

✔️ Infrastructure matters.

✔️ Performance matters.

✔️ Enterprise resilience matters.

That’s why we built Bifrost, the most performant AI gateway, engineered for enterprises from day one and trusted by Fortune 100s to startups worldwide.

Take a look 👀

Maxim AI reposted

Recently, a Global 500 company with over 200K employees organically adopted @getmaximai and onboarded multiple teams building a large internal swarm of agents in a matter of days 🧵👇

1/8

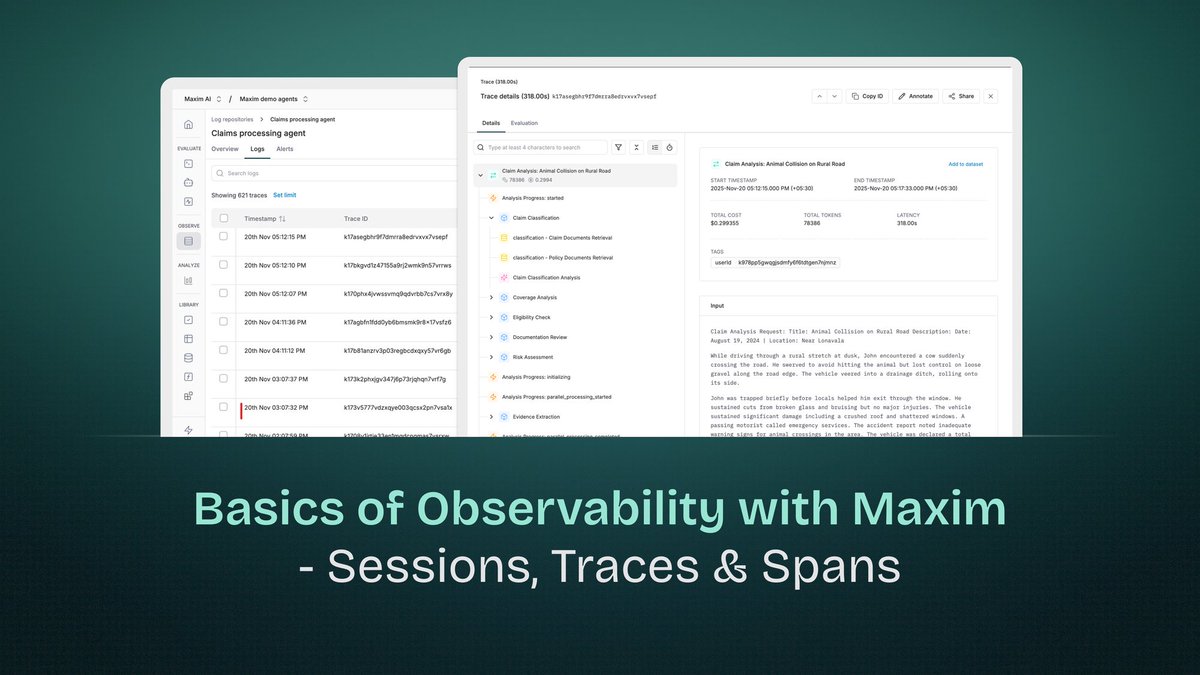

Observability in AI applications differs fundamentally from traditional application monitoring. While conventional systems deal with deterministic request-response cycles, AI applications involve multi-turn conversations, complex reasoning chains, multiple model invocations, and retrieval operations - all of which need visibility for debugging, optimization, and understanding system behavior.

Maxim AI's observability platform extends distributed tracing principles to address AI-specific challenges. At its core are three hierarchical constructs: Sessions, Traces, and Spans. Understanding these components and their relationships is essential for effectively monitoring and troubleshooting AI applications.

Want to read more? Link - getmax.im/NntxkCO
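To make the Sessions, Traces, and Spans hierarchy concrete, here is a minimal Python sketch. It is not Maxim's SDK: the class names, fields, and helper methods are hypothetical, and the mapping (a session as a multi-turn conversation, a trace as one request-response cycle, a span as one unit of work such as a model call or retrieval) is an assumption borrowed from general distributed-tracing conventions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Span:
    """One unit of work inside a trace (assumed: a model call, retrieval, or tool call)."""
    name: str
    duration_ms: float
    children: List["Span"] = field(default_factory=list)


@dataclass
class Trace:
    """One request-response cycle (assumed), made up of top-level spans."""
    trace_id: str
    spans: List[Span] = field(default_factory=list)

    def total_duration_ms(self) -> float:
        # Sum top-level spans only; child spans are assumed to run
        # inside their parent's duration.
        return sum(span.duration_ms for span in self.spans)


@dataclass
class Session:
    """A multi-turn conversation (assumed), made up of traces."""
    session_id: str
    traces: List[Trace] = field(default_factory=list)

    def span_count(self) -> int:
        # Count every span in every trace, including nested children.
        def count(span: Span) -> int:
            return 1 + sum(count(child) for child in span.children)

        return sum(count(span) for trace in self.traces for span in trace.spans)


# Hypothetical two-turn conversation.
example_session = Session(
    session_id="s1",
    traces=[
        Trace("t1", [
            Span("retrieval", 40.0),
            Span("generation", 300.0, [Span("tool_call", 120.0)]),
        ]),
        Trace("t2", [Span("generation", 250.0)]),
    ],
)
```

Nesting spans under a parent span is one way to represent a reasoning chain that calls tools mid-generation; a flat list with parent IDs would work equally well.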

Modern speech AI systems are impressive. They can understand context, generate human-like responses, and even capture emotion in their voice. But there's a hidden technical challenge that plagues nearly all of them: first token latency.

When these systems generate speech, they produce it as a series of small audio chunks called "tokens." Traditional models generate these tokens one at a time, sequentially.

The researchers behind VITA-Audio decided to break that paradigm. Instead of generating one audio token at a time, they sought to generate multiple in a single forward pass through the model. This is where their key innovation comes in: Multiple Cross-Modal Token Prediction.

Sounds interesting, right? Read the complete blog post by Vrinda Kohli here - getmax.im/xgWb0bx
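A rough way to see why predicting several tokens per forward pass helps is to count the passes needed for a fixed number of audio tokens. The sketch below is plain arithmetic, not VITA-Audio's method; the function name and the token counts are illustrative assumptions, and real speedups depend on details the post does not give.

```python
import math


def forward_passes(num_tokens: int, tokens_per_pass: int) -> int:
    """Number of forward passes needed to emit num_tokens audio tokens
    when each pass emits up to tokens_per_pass tokens."""
    if num_tokens < 0:
        raise ValueError("num_tokens must be non-negative")
    if tokens_per_pass < 1:
        raise ValueError("tokens_per_pass must be at least 1")
    return math.ceil(num_tokens / tokens_per_pass)


# Illustrative numbers only: 10 tokens, one at a time vs. four at a time.
sequential_passes = forward_passes(10, 1)
multi_token_passes = forward_passes(10, 4)
```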

Zed AI by @zeddotdev is a high-performance, collaborative code editor built for the modern development workflow. With native AI assistant integration, Zed can leverage language models directly within the editor for code generation, refactoring, and explanation. Integrating Zed with Bifrost Gateway unlocks multi-provider model access, MCP tools, and observability - transforming Zed's AI assistant into a flexible, enterprise-ready coding companion.

Check out this short blog on how to integrate Bifrost with Zed - getmax.im/GhjzSqD

@Kimi_Moonshot recently open-sourced Kimi K2 and its reasoning-optimized variant, K2 Thinking. As someone who works with large language models, I wanted to break down what makes this release interesting and where it pushes forward the state of open-source AI.

This blog walks through the key technical innovations: how they trained such a large model without crashes, how they generated training data for complex agentic behavior, and what makes the reasoning mode special - getmax.im/AI9dg5i

Codex CLI is @OpenAI's command-line tool for code generation and completion, bringing AI-assisted coding directly to the terminal. By routing Codex CLI through Bifrost Gateway, you gain access to multiple model providers, enhanced observability, and MCP tool integration - transforming a single-provider CLI into a flexible, multi-model development assistant.

Check out this blog and get started with using Bifrost with Codex CLI - getmax.im/herojuc

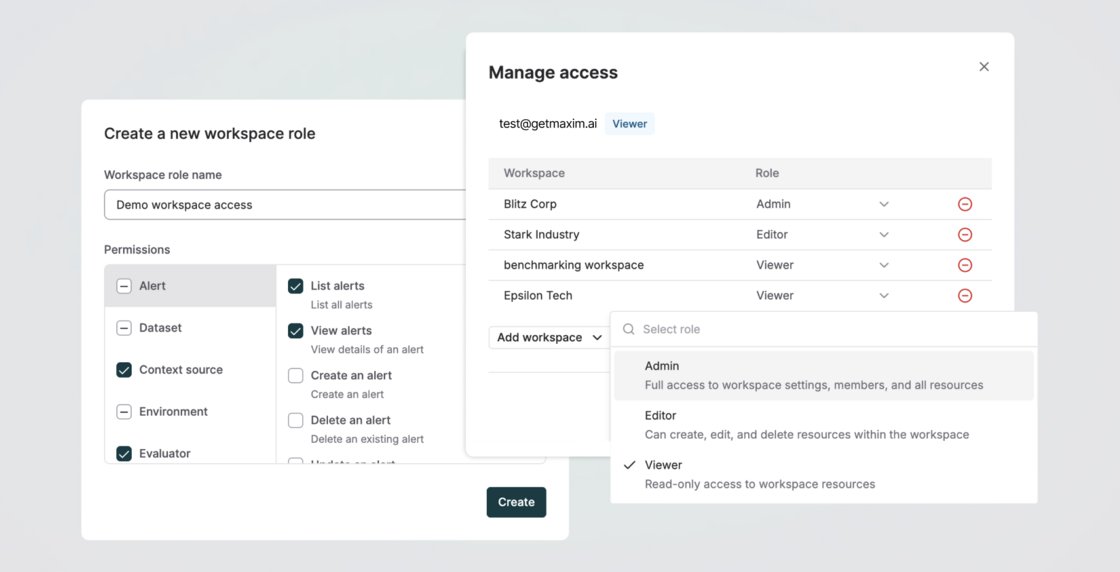

We’ve enhanced Role-Based Access Control (RBAC) in Maxim to give you finer control over access management.

Previously, roles were assigned only at the organization level, giving users the same scope of access across all workspaces they were part of. Now, you can assign different roles to users per workspace and also create custom roles with precise permissions for the resources and actions a member can access, specific to a workspace.

This gives teams granular, functional control over what users can view or do - e.g., allowing someone to create, deploy, or delete a prompt in one workspace while granting read-only access in another - making collaboration more secure, flexible, and scalable.

Get Started now - getmaxim.ai
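The per-workspace model described above can be sketched as a lookup from (user, workspace) to a role, and from a role to a set of (resource, action) permissions. This is an illustrative Python sketch, not Maxim's implementation: the role names, workspace names, users, and the `is_allowed` helper are all hypothetical.

```python
from typing import Dict, FrozenSet, Tuple

Permission = Tuple[str, str]  # (resource, action)

# Hypothetical custom roles, each with an explicit permission set.
ROLES: Dict[str, FrozenSet[Permission]] = {
    "prompt_editor": frozenset({
        ("prompt", "read"),
        ("prompt", "create"),
        ("prompt", "deploy"),
        ("prompt", "delete"),
    }),
    "viewer": frozenset({("prompt", "read")}),
}

# Roles are assigned per (user, workspace), not once per organization.
ASSIGNMENTS: Dict[Tuple[str, str], str] = {
    ("alice", "workspace-a"): "prompt_editor",
    ("alice", "workspace-b"): "viewer",
}


def is_allowed(user: str, workspace: str, resource: str, action: str) -> bool:
    """Return True if the user's role in this workspace grants the action."""
    role = ASSIGNMENTS.get((user, workspace))
    if role is None:
        return False  # no role in this workspace means no access
    return (resource, action) in ROLES.get(role, frozenset())
```

Here the same user can deploy prompts in `workspace-a` but only read them in `workspace-b`, which mirrors the example in the post.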

You can now generate synthetic datasets in @getmaximai to simplify and accelerate the testing and simulation of your Prompts and Agents. Use this to create inputs, expected outputs, simulation scenarios, personas, or any other variable needed to evaluate single and multi-turn workflows across Prompts, HTTP endpoints, Voice, and No-code agents on Maxim.

You can generate datasets from scratch by defining the required columns and their descriptions, or use an existing dataset as a reference context to generate data that follows similar patterns and quality.

You can include the description of your agent (or its system prompts) or add a file as a context source to guide generation quality and ensure the synthetic data remains relevant and grounded.

Speech models are having a moment - and it seems like they’re here to stay.

They can transcribe your rambling, understand your questions, and even tell when you're being sarcastic. But ask them to process anything longer than a TikTok video and they straight-up collapse.

In our latest blog, Vrinda Kohli dives into FastLongSpeech, a new approach that achieves 30x compression without losing context - enabling Large Speech-Language Models to handle podcasts, meetings, and long-form audio without melting your GPUs.

🔗 Read the full post: getmax.im/wXoPboA

LibreChat is now available on Bifrost 🚀

LibreChat is a modern, open-source chat client that supports multiple providers. Once you have LibreChat installed, you can add Bifrost as a custom provider. Check out the configuration file (librechat.yaml) here - getmax.im/librechat

Bifrost now supports CLI tools 🎉

Bifrost provides 100% compatible endpoints for @OpenAI, @AnthropicAI, and @Google Gemini APIs, making it seamless to integrate with any CLI agent that uses these providers. By simply pointing your CLI agent’s base URL to Bifrost, you unlock powerful features like:

1. Universal Model Access: Use any provider/model configured in Bifrost with any agent (e.g., use GPT-5 with Claude Code, or Claude Sonnet 4.5 with Codex CLI)

2. MCP Tools Integration: All Model Context Protocol tools configured in Bifrost become available to your CLI agents

3. Built-in Observability: Monitor all agent interactions in real-time through Bifrost’s logging dashboard

4. Load Balancing: Automatically distribute requests across multiple providers and regions

5. Advanced Features: Governance, caching, failover, and more - all transparent to your CLI agent

Learn more here - docs.getbifrost.ai/quickstart/gat…

Traditional monitoring tools fall short because AI systems fail differently. A 200 OK response doesn't mean your LLM didn't hallucinate. Low latency doesn't guarantee your RAG pipeline retrieved relevant context. And normal CPU usage tells you nothing about whether your agent made the right tool choice.

This is where AI observability becomes critical. In this deep dive, we'll explore how Maxim's platform brings enterprise-grade observability to LLM applications, enabling you to monitor, trace, and debug AI systems with the same rigor you apply to traditional software.

Blog Link - getmax.im/w8SriOw

Revamped Graphs and Omnibar for Logs 🚀

Graphs in the Log repository now feature a new, interactive UI that makes it easier to explore trends. You can click on any bar to drill down into specific logs or drag across a timeframe to instantly filter and visualize performance metrics within that period.

We’ve also added new visualizations, including evaluator-specific graphs (e.g., total traces and sessions evaluated) and custom metric graphs, to help you monitor and analyze the metrics that matter most to your workflow.

We’ve enhanced the Log search Omnibar to make it easier to navigate logs, debug, and identify failure scenarios. You can now create complex filters using logical operators (AND, OR) and group multiple conditions together. We’ve also added advanced operators such as "contains" and "begins with" for more precise filtering.
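Grouped filters with AND/OR logic can be pictured as a small tree of conditions. The Python sketch below is only an illustration of that idea, not the Omnibar's query format: the `Condition` and `Group` classes, the operator spellings, and the log field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class Condition:
    key: str    # log field to inspect
    op: str     # "contains" or "begins_with"
    value: str

    def matches(self, log: Dict[str, str]) -> bool:
        text = log.get(self.key, "")
        if self.op == "contains":
            return self.value in text
        if self.op == "begins_with":
            return text.startswith(self.value)
        raise ValueError(f"unsupported operator: {self.op}")


@dataclass
class Group:
    logic: str  # "AND" or "OR"
    items: List[Union[Condition, "Group"]]

    def matches(self, log: Dict[str, str]) -> bool:
        results = (item.matches(log) for item in self.items)
        if self.logic == "AND":
            return all(results)
        if self.logic == "OR":
            return any(results)
        raise ValueError(f"unsupported logic: {self.logic}")


# model begins with "gpt" AND (output contains "error" OR output contains "timeout")
query = Group("AND", [
    Condition("model", "begins_with", "gpt"),
    Group("OR", [
        Condition("output", "contains", "error"),
        Condition("output", "contains", "timeout"),
    ]),
])
```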
