Small AI

@getsmallai

The future is smaller than you think.

Qwen3.6-27B on ONE RTX 3090:
⚡ 85 TPS sustained (106 peak)
📏 125K context
👁 Vision + tool calls
🔌 230W cap, quiet & cool
Consumer 24GB. Full OpenAI-compatible API. Single card.
Further testing in progress, stay tuned for the write-up.
@TheAhmadOsman @LottoLabs @KyleHessling1 @sudoingX @stevibe @0xSero @TeksEdge @Alibaba_Qwen @ivanfioravanti

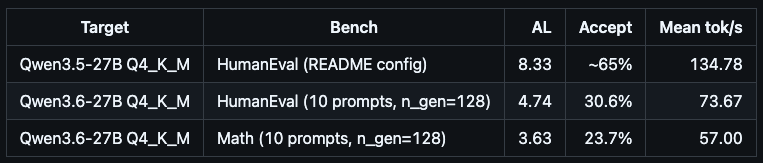
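For anyone wondering what "OpenAI-compatible API" means in practice, here is a minimal sketch of a Chat Completions call using only the Python standard library. The post does not say which server, port, or model id is used, so `BASE_URL` and `MODEL` below are placeholder assumptions; swap in whatever your local server reports.

```python
import json
import urllib.request

# Assumptions (not from the post): the server address and the model id.
BASE_URL = "http://localhost:8000/v1"
MODEL = "qwen3.6-27b"


def build_chat_request(prompt, max_tokens=256):
    """Build a Chat Completions payload in the OpenAI wire format."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def send_chat_request(payload, base_url=BASE_URL, timeout=60):
    """POST the payload to /chat/completions and return the reply text."""
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the text under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With a server listening at the assumed address, `send_chat_request(build_chat_request("Say hello in five words."))` should return the model's reply as a string. Existing OpenAI client libraries can usually be pointed at the same base URL instead.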

The new Qwen3.6-27B now runs on Luce DFlash, with up to 2x throughput on a single RTX 3090. Qwen3.6-27B ships the same Qwen35 architecture string and identical layer/head dims as 3.5, so the existing DFlash draft + DDTree stack loads it as-is. Throughput is lower than on 3.5, though. Looking forward to the updated version from the DFlash team adding support for it as well! Repo in the first comment ⬇️

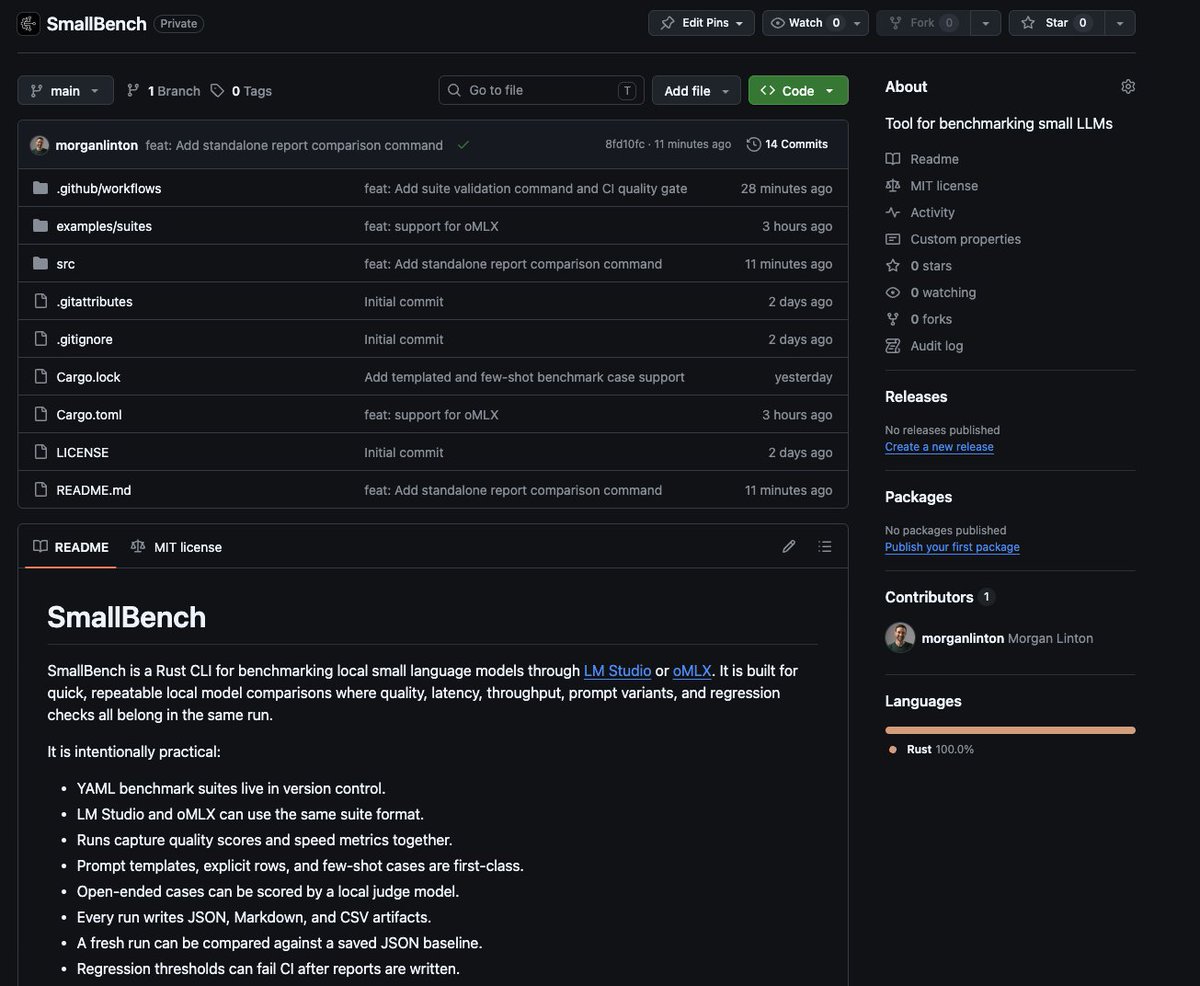

Going to be testing something small this weekend, the first to come from @getsmallai ✨ And of course, 100% Rust, because I'm hooked on how damn fast Rust is 🦀 It's probably going to suck at first, but once it sucks a little less, I'll share it.