David Hendrickson

@TeksEdge

CEO & Founder | PhD | Startup Advisor | @Columbia | Author Generative Software Engineering https://t.co/9oqvHuTX5f | 🔔 Follow for AI & Vibe Coding Tips 👇

"Why are you benchmarking DGX Spark? It's a training box." Yeah. Low bandwidth, but 128GB of unified memory is just sitting there. Plenty of room to optimize.

DGX Spark + Qwen3.6 27B. Four backend/quant combos:

🔴 llama.cpp + UD_Q4_K_XL > 11.0 tok/s (baseline), TTFT 297ms
🟢 llama.cpp + DFlash > 20.4 tok/s (peaks at 97 tok/s), TTFT 320ms
🟡 vLLM FP8 + MTP > 13.1 tok/s, TTFT 540ms
🟣 vLLM NVFP4 + MTP > 24.2 tok/s, TTFT 376ms

NVFP4+MTP is the winner for me, rock stable around 24 tok/s, no wild swings. DFlash is the wildcard: massive peaks, but fluctuates a lot. FP8+MTP barely beats baseline, and it's FP8. Love my Spark.

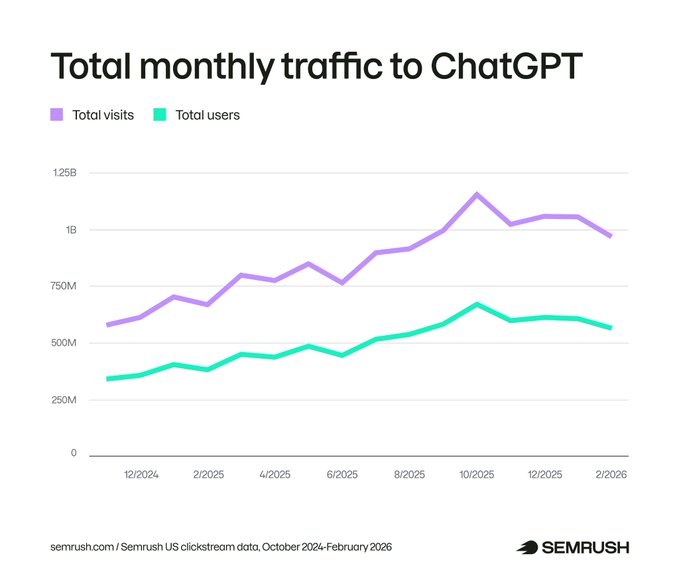
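
To put the four results side by side, here is a minimal Python sketch that computes each combo's speedup over the llama.cpp baseline and a rough end-to-end response time. The throughput and TTFT numbers are copied from the post; the `RESULTS` table, the function names, the 500-token reply length, and the simple "TTFT plus steady decode" timing model are all assumptions for illustration. Since DFlash fluctuates a lot, its average hides real variance.

```python
# Figures copied from the post; everything else here is illustrative.
RESULTS = {
    "llama.cpp + UD_Q4_K_XL": {"tok_s": 11.0, "ttft_ms": 297},
    "llama.cpp + DFlash": {"tok_s": 20.4, "ttft_ms": 320},
    "vLLM FP8 + MTP": {"tok_s": 13.1, "ttft_ms": 540},
    "vLLM NVFP4 + MTP": {"tok_s": 24.2, "ttft_ms": 376},
}
BASELINE = "llama.cpp + UD_Q4_K_XL"


def speedup(name: str) -> float:
    """Decode throughput relative to the baseline combo."""
    return RESULTS[name]["tok_s"] / RESULTS[BASELINE]["tok_s"]


def response_time_s(name: str, n_tokens: int) -> float:
    """Assumed model: time to first token plus n_tokens at the average rate."""
    r = RESULTS[name]
    return r["ttft_ms"] / 1000.0 + n_tokens / r["tok_s"]


if __name__ == "__main__":
    for name in RESULTS:
        # 500 tokens is a hypothetical reply length, not from the post.
        print(f"{name:24s} {speedup(name):.2f}x "
              f"{response_time_s(name, 500):6.1f} s for 500 tokens")
```

Under this model the TTFT differences (297ms to 540ms) barely matter for long replies; decode rate dominates.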

ChatGPT is now a standard part of how people use the web, as one piece of a complex, interconnected search journey. We dug into 17 months of clickstream data to map how ChatGPT usage is changing, how referral traffic is growing, and where that traffic goes. If you're a marketer, understanding how your audience uses ChatGPT and where it exists in this buyer journey is critical for understanding how best to reach them.

Key takeaways:

• Outbound referral traffic from ChatGPT to the rest of the web grew 206% in 2025.
• Over 30% of all referral traffic from ChatGPT goes to 10 domains. And over 20% goes to Google.
• ChatGPT enables its search feature on just 34.5% of queries as of February 2026 (down from 46% in late 2024), meaning most responses still rely on training data alone.
• Users are asking more prompts per session. After 12 months of flat engagement, average queries per session jumped 50% in the last four months of our study period.

Full study: social.semrush.com/48FOJOz.

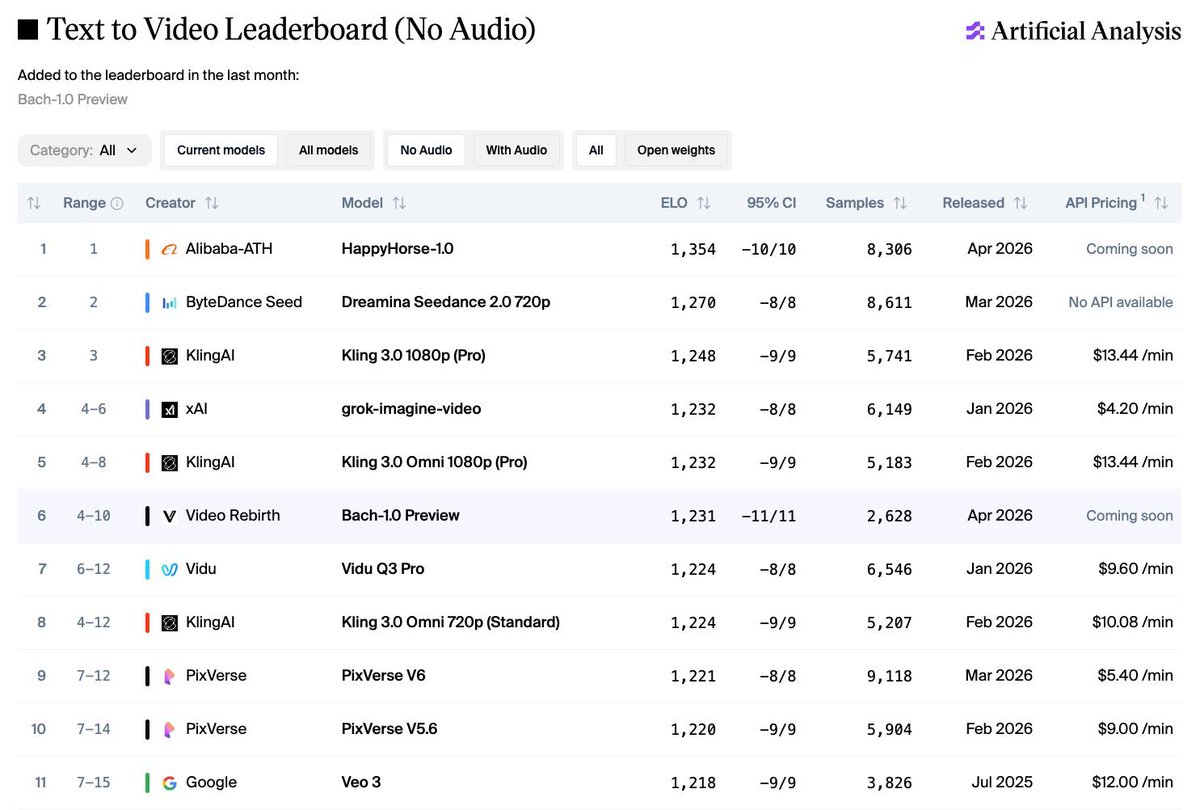

Bach-1.0 Preview from Video Rebirth debuts at #6 on the Artificial Analysis Text to Video Leaderboard (No Audio)! Bach-1.0 Preview is the latest Text to Video model from @video_rebirth, with similar performance to Vidu Q3 Pro, Kling 3.0 Omni 1080p (Pro), and grok-imagine-video. Bach-1.0 Preview is intended for broad release later in May. See example generations from Bach-1.0 Preview in the Artificial Analysis Video Arena below 🧵

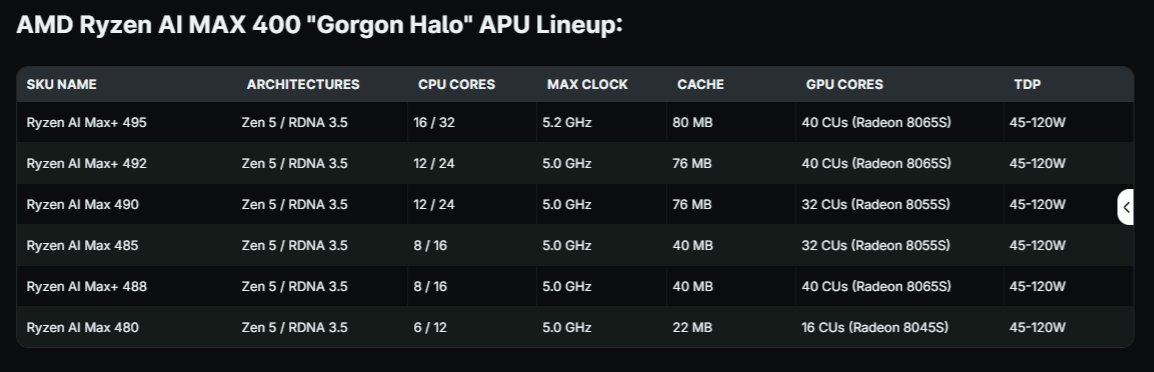

⁉️ So get this, AMD is making a bold move to own the affordable personal inferencing market by launching a Mini PC in June, a 128GB Shared Memory Inferencing Box 🎇 They call it the ⬭ Halo Box.

🧾 It's a Ryzen AI MAX+ 395 (16 Zen 5 cores + 40 RDNA 3.5 CUs + XDNA 2 NPU)
✅ Up to 128GB LPDDR5X-8533 unified memory
✅ Full ROCm support + Day-0 AI model optimization
🧪 Built for local AI development (up to ~200B param models)
📈 Direct shot at NVIDIA’s $4,699 DGX Spark and could cost $2,000–$3,000 (as they do now)

🤔 Why launch now during the RAM shortage? While memory makers divert capacity to HBM for AI data centers (driving LPDDR5X prices to spike and NVIDIA to raise the price of DGX Spark by $700), AMD is making a bold move to own the affordable, high-memory AI mini-PC segment before the crisis worsens.

💡 My Speculation: AMD could be using its contracts, relationships, and strategic priority to secure better memory access than many traditional OEMs. This could give them an advantage in launching the Halo Box during the shortage. Smart timing or risky bet?

🔥 This is AMD aggressively fighting for the local AI developer market.

This is a great example of the difficult position local inferencers face when running quantized models 60-100GB in size. The DGX Spark, at $4,700 retail (now), is the only reasonable option (vs $10-$14K), but it’s slooooow.

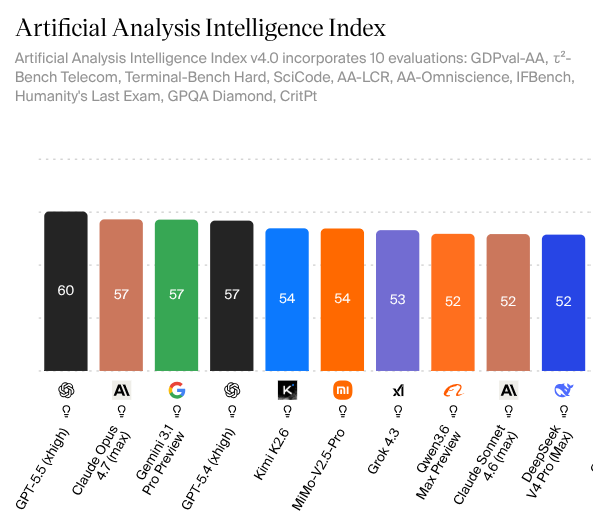
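
The "~200B params in 128GB" and "60-100GB quant models" figures in these posts can be sanity-checked with back-of-the-envelope arithmetic: weights-only size is roughly parameters times bits per weight divided by 8. The sketch below is an assumption-laden estimate, not a sizing tool. The function names and the 16GB `reserve_gb` headroom for KV cache, OS, and runtime are hypothetical, and real 4-bit quant formats usually carry some extra bits per weight for scales.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weights-only size in GB (10^9 bytes); ignores KV cache and overhead."""
    return params_billion * bits_per_weight / 8.0


def fits(params_billion: float, bits_per_weight: float,
         memory_gb: float = 128.0, reserve_gb: float = 16.0) -> bool:
    """True if the weights fit after setting aside a hypothetical reserve."""
    usable_gb = memory_gb - reserve_gb
    return weight_footprint_gb(params_billion, bits_per_weight) <= usable_gb


if __name__ == "__main__":
    for params, bits in [(27, 4), (200, 4), (200, 8)]:
        size = weight_footprint_gb(params, bits)
        print(f"{params}B @ {bits}-bit: {size:.1f} GB, fits in 128GB: {fits(params, bits)}")
```

By this estimate a ~200B model only fits in 128GB at around 4 bits per weight, which lands at the top of the 60-100GB range mentioned above.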

🏆 LLMStats just dropped a fresh leaderboard update. This is my trusted ranking.

📊 The "TrueSkill" composite score is the real deal as the most conservative, battle-tested “Uber benchmark” in the game (μ − 3σ across GPQA, SWE-Bench, coding arenas & more).

👀 Current Standings

🏆 Overall #1: Claude Mythos Preview (@AnthropicAI), 70.1. Unreleased monster. 94.6% on GPQA Diamond. This thing is going to be an absolute banger 🚀
🥇 Best Open-Weights: Kimi K2.6 (@moonshot), 58.7. Undisputed leader among open models right now. 90.5% GPQA + only $0.95/M tokens. Insanely good value 💎

Quick Hits

🏆 Gemini 3.1 Pro → Dominating coding arenas
👑 Llama 4 Scout → 10M context king
⚡ Mercury 2 → Fastest model at 1720 tps

🔥 Bottom line: If you care about real capability per dollar, Kimi K2.6 is the one to watch in the open-source world right now. And when Mythos drops… the game changes
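
For anyone wondering what "μ − 3σ" does in practice, here is a small Python sketch of a conservative score: the estimated skill minus three standard deviations of uncertainty. The post does not say how LLMStats derives μ and σ, so the function names and the two example entries are hypothetical and are not leaderboard values.

```python
def conservative_score(mu: float, sigma: float, k: float = 3.0) -> float:
    """Lower-bound style rating: mean skill minus k standard deviations."""
    return mu - k * sigma


def rank(entries: dict) -> list:
    """Sort names by conservative score, highest first."""
    return sorted(entries, key=lambda n: conservative_score(*entries[n]),
                  reverse=True)


# Hypothetical (mu, sigma) pairs, made up for illustration.
entries = {
    "model_a": (30.0, 4.0),  # higher mean, less certain -> 18.0
    "model_b": (28.0, 2.0),  # lower mean, more certain  -> 22.0
}

if __name__ == "__main__":
    for name in rank(entries):
        print(name, conservative_score(*entries[name]))
```

The point of the penalty is that a model with a higher average but noisier evidence can rank below a slightly weaker, better-measured one, which is why this kind of score reads as conservative.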