Greg Johns

2.4K posts

@gjohnsx

AI developer building automation tools for real estate. MCP servers + AI agents. Personal liberty, sound money. Orlando.

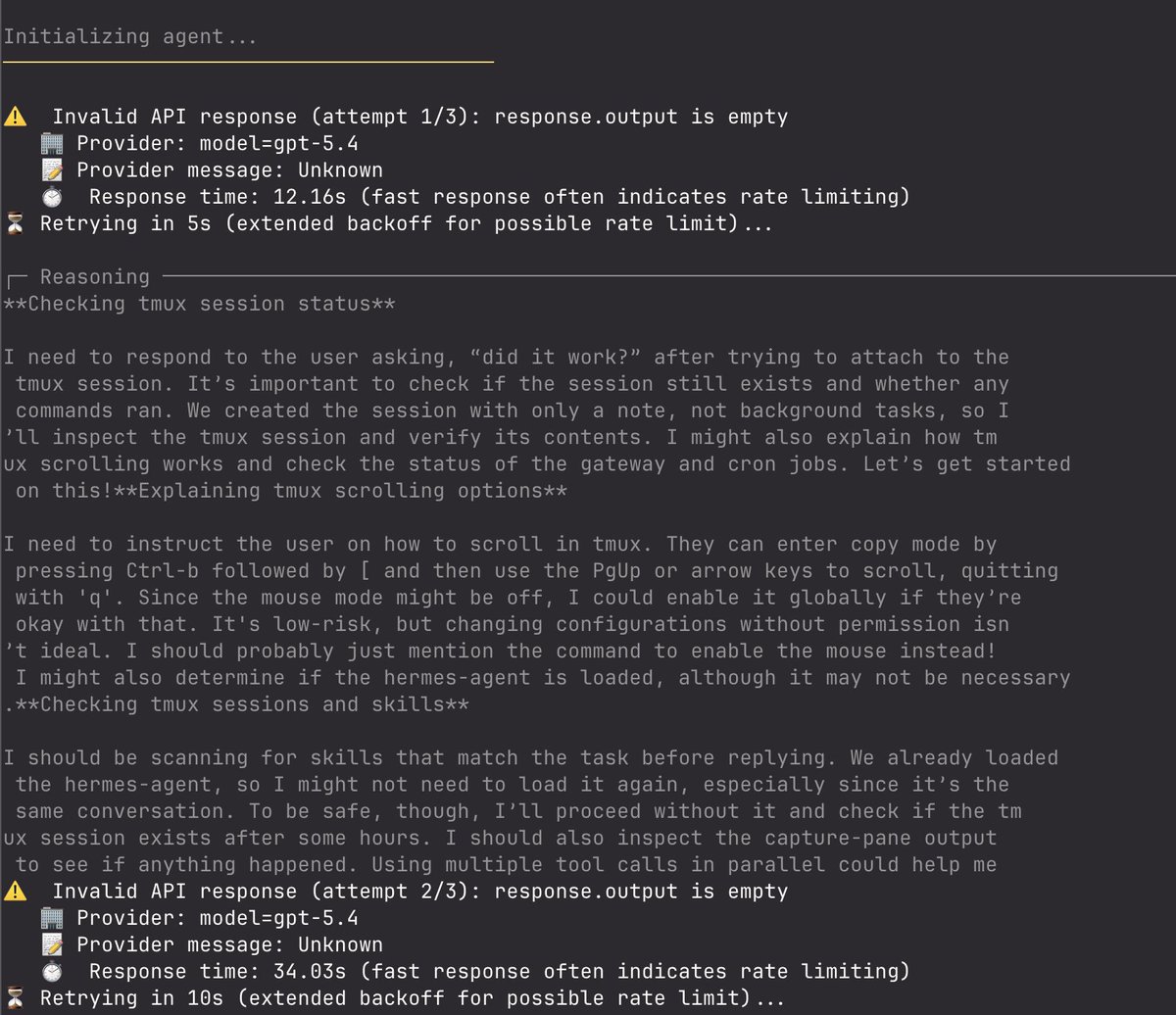

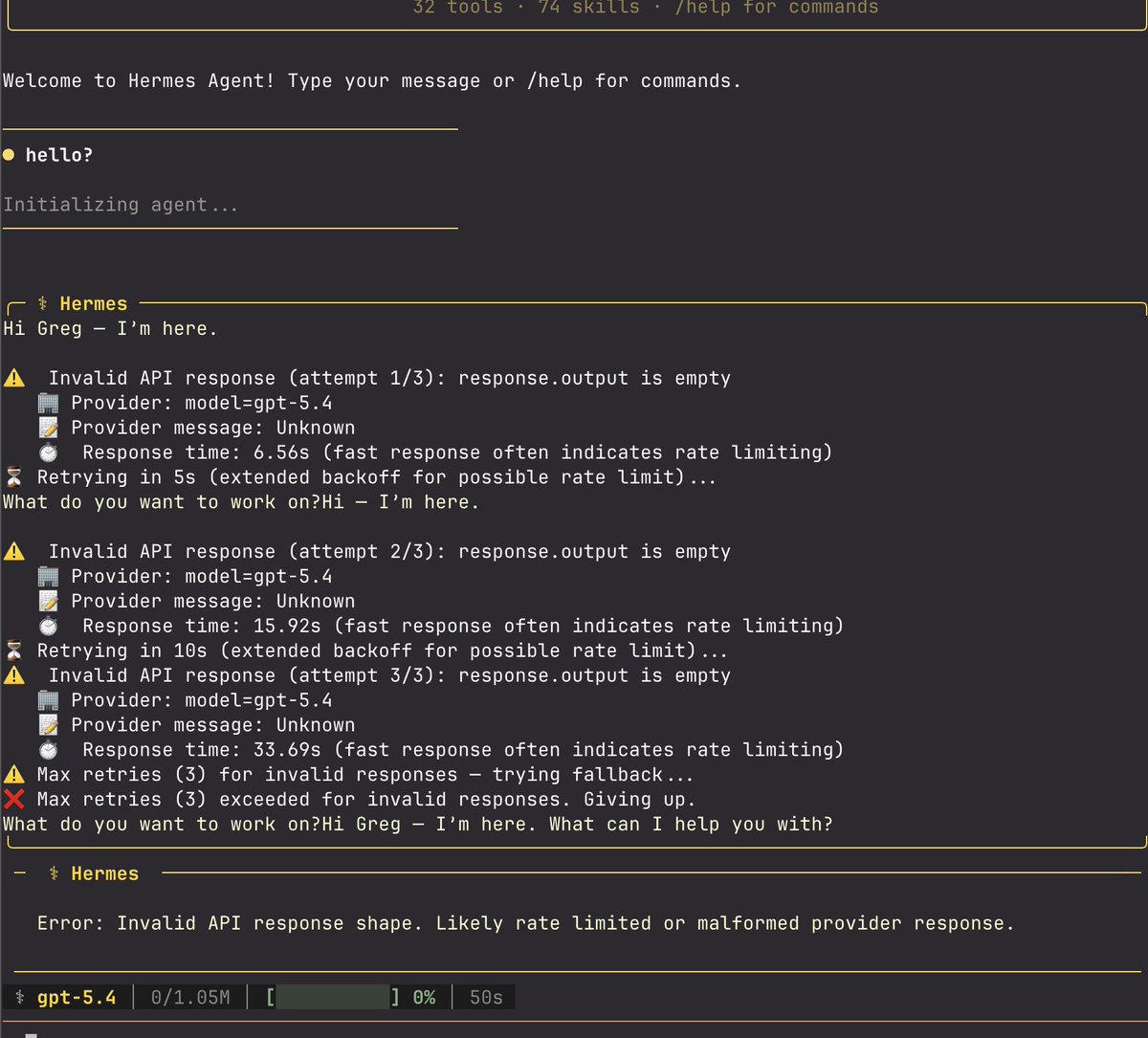

this feature could've been a skill

I still think about this.

Hermes, make a daily report on updates to Hermes Agent (it's the only way to keep up!)

Computer use is now in Claude Code. Claude can open your apps, click through your UI, and test what it built, right from the CLI. Now in research preview on Pro and Max plans.

Almost every SaaS app inside Vercel has now been replaced with a generated app or agent interface, deployed on Vercel. Support, sales, marketing, PM, HR, dataviz, even design and video workflows. It’s shocking.

The SaaSpocalypse is both understated and overstated. Overstated because the key systems of record and storage are still there (Salesforce, Snowflake, etc.). Understated because the software we are generating is more beautiful, personalized, and crucially, fits our business problems better.

We struggled for years to represent the health of a Vercel customer properly inside Salesforce. Too much data (trillions of consumption data points), the ontology of Vercel was a mismatch to the built-in assumptions, and the resulting UI was bizarre. We generated what we needed instead. When you don’t need a UI, you just ask an agent with natural language.

We’ve also been moving off legacy systems with poor, slow, outdated, and inconsistent APIs, as well as just dropping abstraction down to more traditional databases.

UI is a function 𝑓 of data (always has been), and that 𝑓 is increasingly becoming the LLM.