Robert M. Gower

568 posts

@gowerrobert

Often found scribbling down math with intermittent bursts of bashing out code.

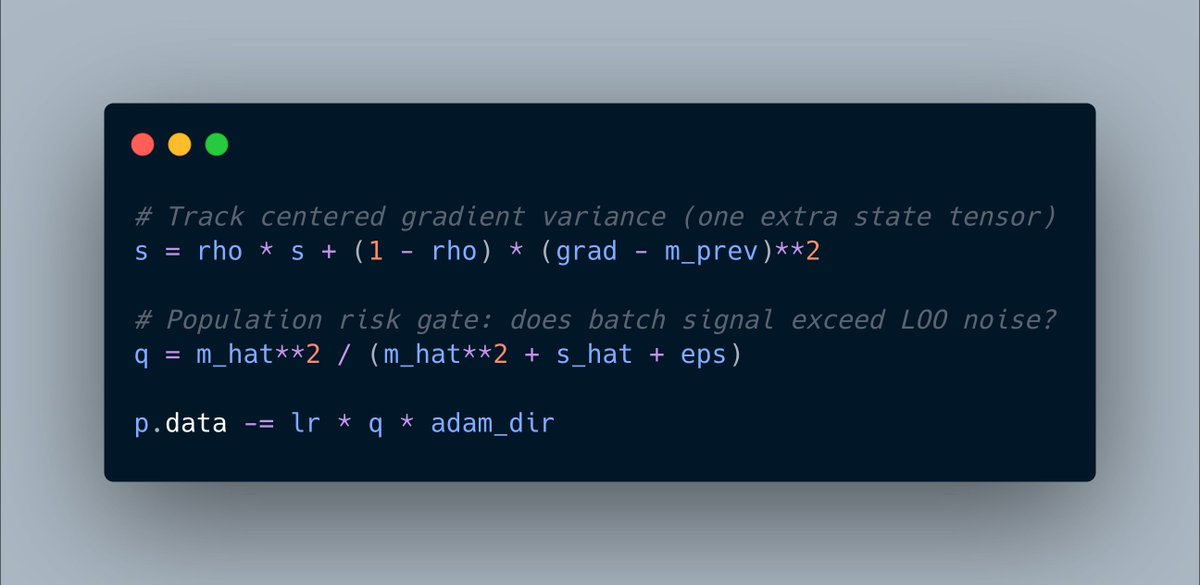

When β_1=β_2, we can first rewrite Adam as below, where instead of the standard uncentered second moment, we have something that looks like a weird variance estimator. Fun fact: it is an online estimate of variance! Let me explain ...
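A rough numerical check of that claim, as a sketch rather than the exact form in the post's image: with a shared decay β, the gap between Adam's second-moment EMA and the squared first-moment EMA, v_t − m_t², follows the recursion s_t = β s_{t−1} + β(1−β)(g_t − m_{t−1})², which is an exponentially weighted online variance estimate. The names `ema_moments` and `ema_variance` are made up for illustration, and bias correction and ε are left out.

```python
import random

def ema_moments(grads, beta):
    """Adam-style EMAs of g and g^2 with one shared decay (no bias correction)."""
    m, v = 0.0, 0.0
    history = []
    for g in grads:
        m = beta * m + (1 - beta) * g
        v = beta * v + (1 - beta) * g * g
        history.append((m, v))
    return history

def ema_variance(grads, beta):
    """Online variance recursion: s tracks squared deviations from the running mean."""
    m, s = 0.0, 0.0
    history = []
    for g in grads:
        # The deviation is measured against the previous mean m_{t-1}.
        s = beta * s + beta * (1 - beta) * (g - m) ** 2
        m = beta * m + (1 - beta) * g
        history.append((m, s))
    return history

random.seed(0)
grads = [random.gauss(0.5, 2.0) for _ in range(200)]  # synthetic scalar "gradients"
beta = 0.95

# Largest difference between (v_t - m_t^2) and s_t over the whole run.
max_gap = max(
    abs((v - m * m) - s)
    for (m, v), (_, s) in zip(ema_moments(grads, beta), ema_variance(grads, beta))
)
print(f"max |(v - m^2) - s| = {max_gap:.2e}")
```

The two quantities agree up to floating-point error, so when β_1=β_2 the denominator √v_t can be read as √(m_t² + s_t): squared mean plus an online variance estimate.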

Are you interested in the new Muon/Scion/Gluon method for training LLMs? To run Muon, you need to approximate the matrix sign (or polar factor) of the momentum matrix. We've developed an optimal method *The PolarExpress* just for this! If you're interested, climb aboard 1/x
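For context, here is a minimal sketch of what "approximating the polar factor" means, using the classical cubic Newton-Schulz iteration X ← 1.5·X − 0.5·X·XᵀX. This is a textbook baseline and not the PolarExpress method, whose coefficients are not given here. The helper names are hypothetical, and plain Python lists are used only to keep the example dependency-free; it assumes a full-column-rank input.

```python
def matmul(A, B):
    """Dense matrix product for lists of lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(A):
    return [list(col) for col in zip(*A)]

def newton_schulz_polar(G, steps=30):
    """Approximate the polar factor of G (assumed full column rank)."""
    # Scale by the Frobenius norm so every singular value lies in (0, 1],
    # which is inside the convergence region of the cubic iteration.
    fro = sum(x * x for row in G for x in row) ** 0.5
    X = [[x / fro for x in row] for row in G]
    for _ in range(steps):
        XXtX = matmul(X, matmul(transpose(X), X))
        # Each step pushes every singular value of X toward 1.
        X = [[1.5 * x - 0.5 * y for x, y in zip(rx, ry)] for rx, ry in zip(X, XXtX)]
    return X

G = [[3.0, 1.0], [1.0, 2.0], [0.0, 1.0]]  # stand-in for a momentum matrix
U = newton_schulz_polar(G)
UtU = matmul(transpose(U), U)  # should be close to the 2x2 identity
print(UtU)
```

The output has (approximately) orthonormal columns, i.e. all singular values of the input are mapped to 1 while its singular vectors are kept.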

PSGD is indeed kino. I fear not the man who has implemented 10,000 optimizers on a single problem, but I fear the man who relentlessly improved the same optimizer 10,000 times. Xilin Li & Omead P sites.google.com/site/lixilinx/…

We've just finished some work on improving the sensitivity of Muon to the learning rate, and exploring a lot of design choices. If you want to see how we did this, follow me ... 1/x (Work led by the amazing @CrichaelMawshaw)

I’ve run a lot of experiments on Muon and its variants, and I’d bet that in this setting, the Muon baseline will be very hard to beat.

Why does Muon outperform Adam, and how? 🚀 Answer: Muon Outperforms Adam in Tail-End Associative Memory Learning
Three Key Findings:
> Associative memory parameters are the main beneficiaries of Muon, compared to Adam.
> Muon yields more isotropic weights than Adam.
> In heavy-tailed tasks, Muon significantly improves tail-class learning compared to Adam.
Paper Link: arxiv.org/pdf/2509.26030 A thread 🧵

Woah, how did I never hear of this? An optimizer paper that got published in Nature, looks quite substantial

Want to do fundamental ML research in NYC? 🧠 The Center for Computational Mathematics @FlatironInst @SimonsFdn is hiring! – Flatiron Research Fellow (postdoc, by Dec 1): apply.interfolio.com/173401 – Open Rank (by Jan 15): apply.interfolio.com/173640