emi

@gpuemi

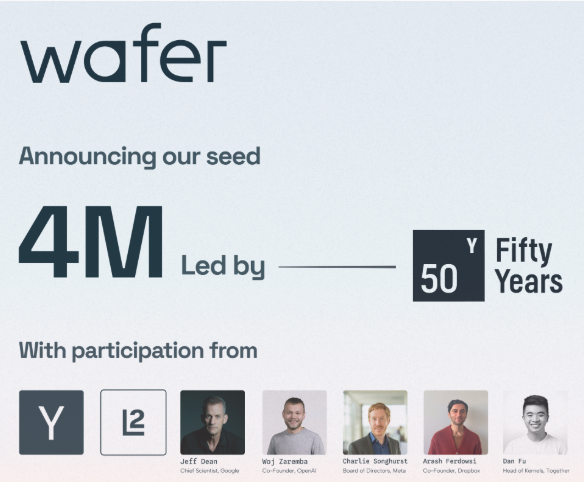

co-founder @wafer_ai (yc s25) -- ai that makes ai chips go faster

Inference Chips for Agent Workflows @sdianahu

Most AI chips are designed for "prompt in, response out." Agents don't work that way. They loop, branch, and hold context across dozens of steps, and current GPUs hit 30–40% utilization as a result. That gap is where purpose-built silicon wins.

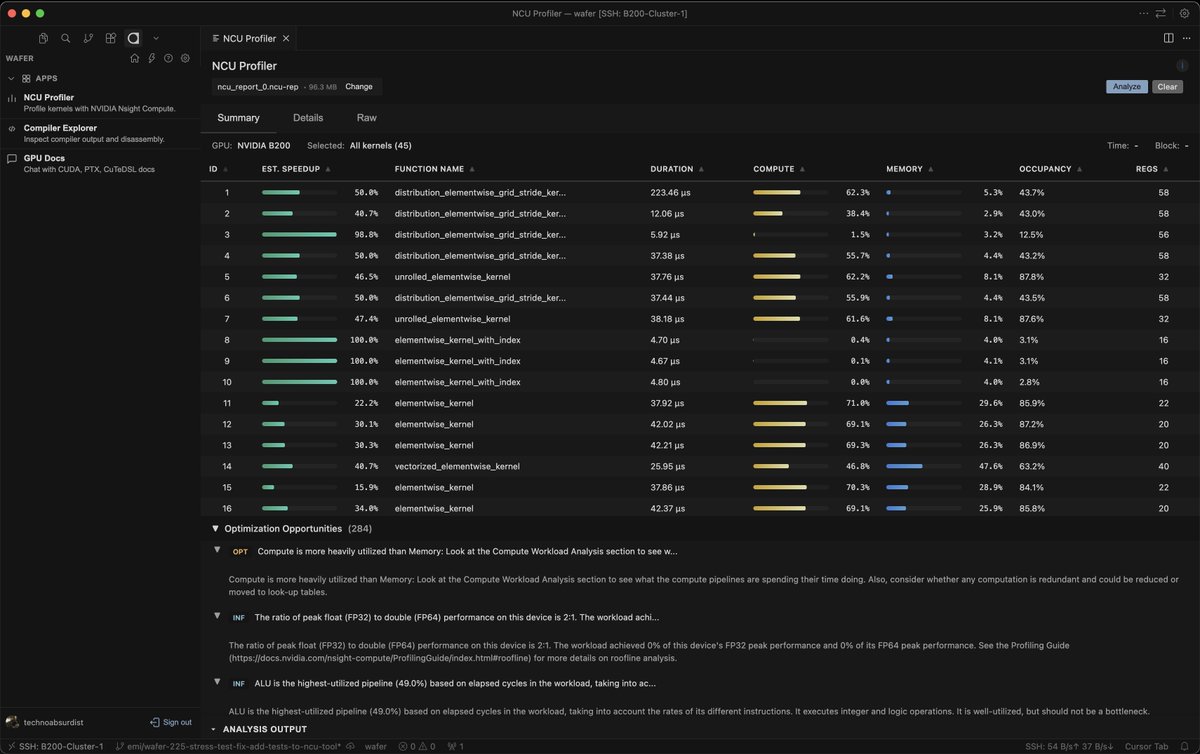

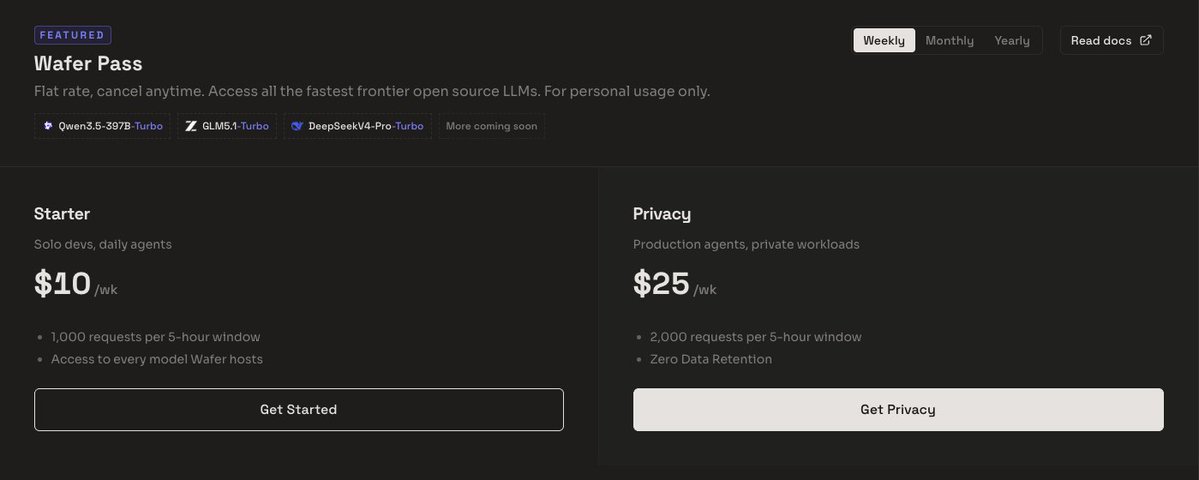

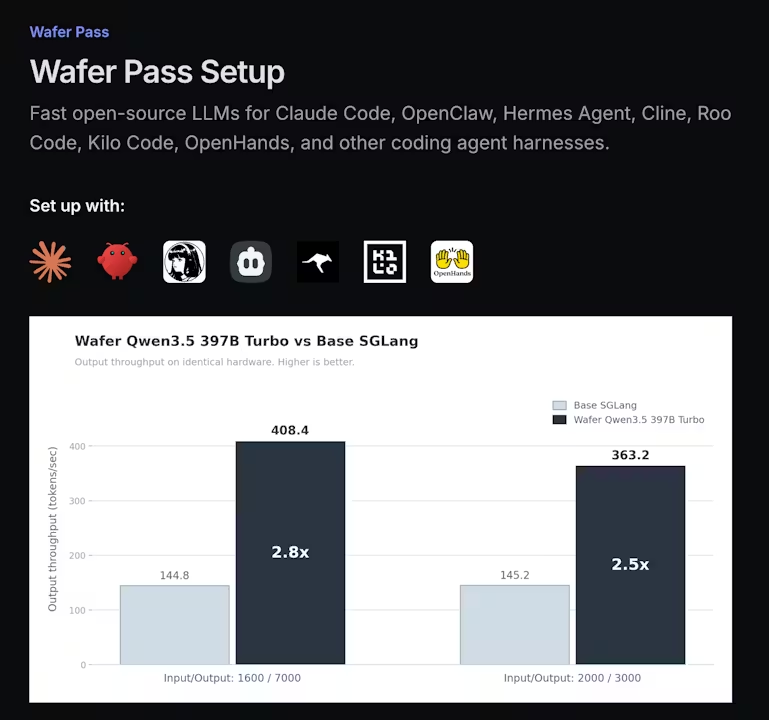

building with ai agents is getting expensive fast. per-token pricing makes it hard to predict cost, slows experimentation, and turns every iteration into a tradeoff. we've used agents to optimize inference pipelines to provide you with the fastest and most affordable inference out there! see below how our qwen 3.5 inference compares against base sglang.

Magical OpenClaw experiences that use frontier models cost $300-1,000/day today, heading to $10,000/day and more. The future shape of the entire technology industry will be how to drive that to $20/month.