Gabriel Franco

@gvsfranco

CS PhD student @BUCompSci. Interested in interpretability.

At the #Neurips2025 mechanistic interpretability workshop I gave a brief talk about Venetian glassmaking, since I think we face a similar moment in AI research today. Here is a blog post summarizing the talk: davidbau.com/archives/2025/…

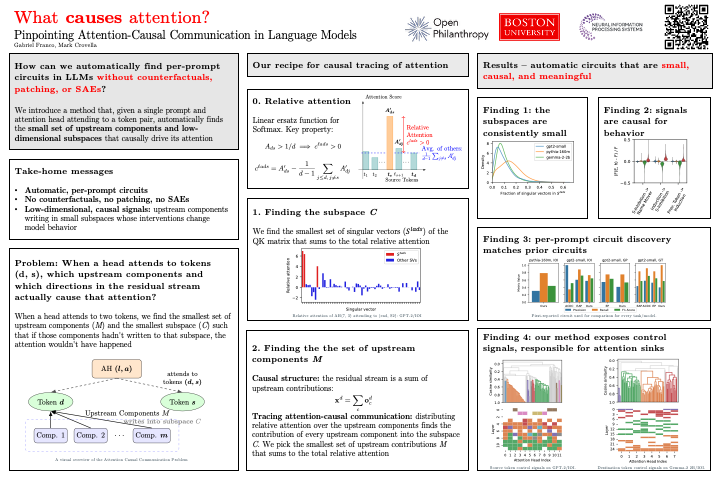

Why do attention heads attend where they do? We can now pinpoint the EXACT features causing attention—without counterfactuals, patching, or SAEs. New @NeurIPSConf 2025 paper with @mcrovella: "Pinpointing Attention-Causal Communication in Language Models"

We also discovered "control signals": data-independent signals that coordinate attention across layers. They implement attention sinks at the signal level. Most models use a few distinct control signals, which give the model a way to organize heads hierarchically!